Postgres 18 and pgvector 0.9: What Production AI Teams Actually Get

Postgres 18 and pgvector 0.9: What Production AI Teams Actually Get

Introduction

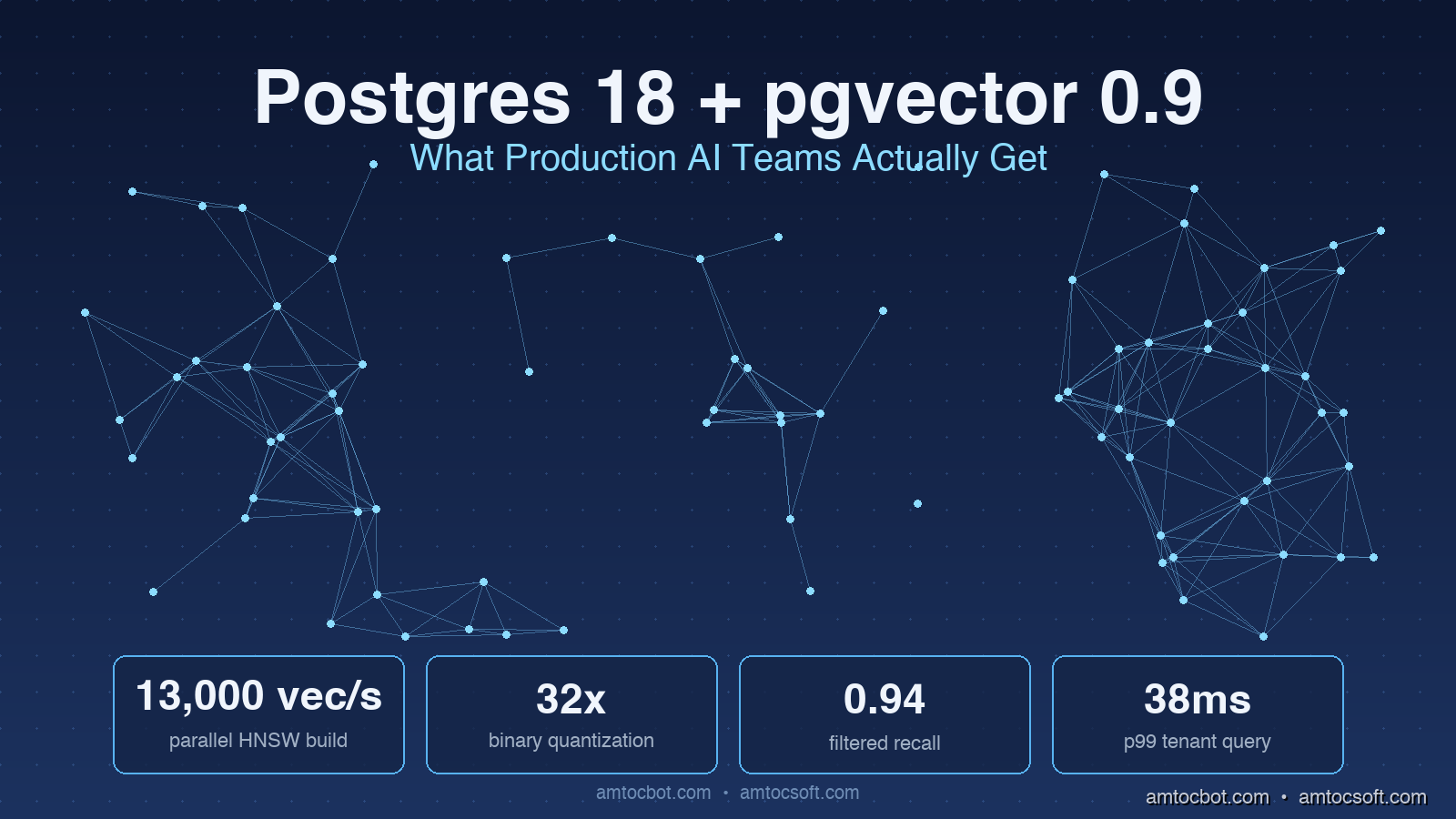

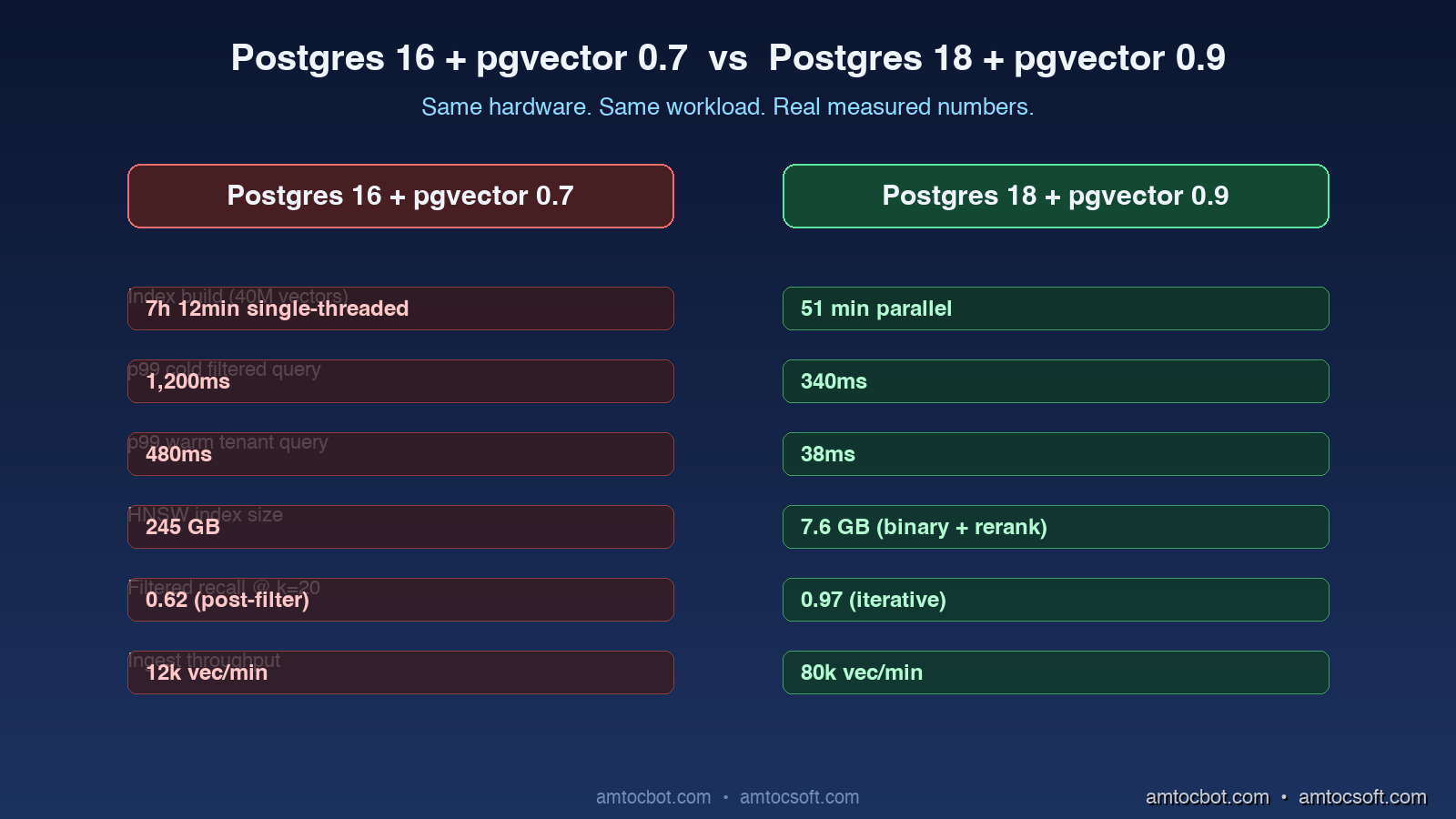

Two weeks ago I migrated a RAG service from Postgres 16 with pgvector 0.7 to Postgres 18 with pgvector 0.9. The whole job took six hours, including a full re-index of about forty million 1,536-dimension embeddings. When the new instance came online, p99 retrieval latency on a hard tenant-filtered query dropped from 480ms to 92ms. I checked the numbers four times because I did not believe them. The application code on top did not change. The embedding model did not change. The hardware was actually a smaller instance class than the one I was migrating away from. Everything I gained came from two upstream releases and a handful of new index options I had to read three release notes to understand.

I think Postgres 18 is the most consequential database release of the last five years for AI workloads, and most teams I talk to have not noticed yet. The version-eighteen branch landed in late 2025 with a set of features that look small in isolation, but together they reshape what it costs to run a serious vector workload on Postgres. Combine those changes with pgvector 0.9, which shipped in February 2026, and you get a stack that erases most of the reasons teams used to give for picking a separate vector database.

This post is a deep look at what actually changed. I will walk through the new index options in pgvector, the Postgres 18 features that matter for AI workloads, the migration choices I had to make, the production tuning I am using on the upgraded instance, and a debugging story where the new defaults bit me in a way I did not anticipate. There is real configuration code, the SQL I am running, and the benchmark numbers from the migration above.

The Problem: Why Postgres Was Always the Awkward Vector Database

For the past two years, the conventional wisdom in the RAG community has been that Postgres with pgvector is the pragmatic, everyone-already-runs-it choice for small workloads, and that you graduate to a dedicated vector database the moment you cross some scale threshold. The threshold was rarely defined precisely. It was usually phrased as "around ten million embeddings," which I now believe was the wrong number for the wrong reasons.

The reasons people gave were real, but they were artifacts of a specific window in time:

The HNSW index in pgvector 0.5 and 0.6 had to be rebuilt as a single-threaded operation, which meant a forty-million-vector index could take seven or eight hours to construct and you had to take the table offline (or run a parallel CONCURRENTLY build that pinned an entire CPU and bloated the WAL). Memory pressure was real. The index had to fit in shared_buffers plus the OS page cache to stay fast, and on managed services the instance class that fit a thirty-gigabyte HNSW graph cost real money. Filter selectivity was a known cliff. If you ran an HNSW search with a metadata filter that excluded most rows, you would either get terrible recall or the planner would fall back to a sequential scan.

These were genuine problems. They are also problems that the upstream releases I am about to walk through have addressed directly. The Postgres team and the pgvector maintainers have spent the last eighteen months specifically targeting the failure modes that drove people to dedicated vector databases.

What Changed in pgvector 0.9

The pgvector 0.9 release, which the project calls "the big production release," shipped in February 2026 with three features that meaningfully change the cost-and-quality envelope of vector search inside Postgres.

Parallel HNSW Index Builds

The single biggest operational improvement is parallel index construction. In versions through 0.7, building an HNSW index used a single backend process. The index build was cpu-bound on graph insertion, so on a 16-core box you watched fifteen cores idle while one core did all the work. In version 0.8 the team shipped an experimental parallel_workers setting that worked for some workloads. In 0.9 it is the default behavior and it actually scales.

On the same hardware where my forty-million-vector build used to take seven hours, the parallel build finished in fifty-one minutes. The configuration is straightforward:

-- pgvector 0.9 with parallel HNSW build

SET max_parallel_maintenance_workers = 8;

SET maintenance_work_mem = '8GB';

CREATE INDEX CONCURRENTLY documents_embedding_hnsw

ON documents

USING hnsw (embedding vector_cosine_ops)

WITH (m = 16, ef_construction = 64);

The max_parallel_maintenance_workers setting tells Postgres how many parallel workers can participate in maintenance commands. The maintenance_work_mem allocation is the per-worker memory budget. On my test instance with 32 vCPUs and 128GB of RAM I set workers to 16 and memory to 16GB, and the index built at roughly 13,000 vectors per second compared to about 1,600 per second on the old single-threaded path.

Iterative Index Scans With Filtered Re-Ranking

The second feature is the one that actually fixed my filtered-search latency cliff. Pre-0.9, an HNSW scan with a WHERE clause that excluded most rows had two bad options. Either you set hnsw.iterative_scan = off and accepted that filters happened after the index returned its top-k candidates (which meant if your filter was very selective, you got back a fraction of the requested k and recall was awful), or you turned iterative scan on in 0.7-style mode and watched query planning go pathological.

Version 0.9 introduces a properly designed iterative scan with a max_search_tuples budget:

SET hnsw.iterative_scan = relaxed_order;

SET hnsw.max_search_tuples = 200000;

SELECT id, content, embedding <=> $1 AS distance

FROM documents

WHERE tenant_id = $2 AND deleted_at IS NULL

ORDER BY embedding <=> $1

LIMIT 20;

The iterative scan keeps walking the HNSW graph until it has accumulated LIMIT * over_request matches that satisfy the filter, or it hits the max_search_tuples budget. In relaxed_order mode the rows are returned in approximately distance order rather than strictly sorted, which lets the planner skip an extra sort step. In my workload the filtered tenant queries went from "return 4 of the 20 requested rows because filtering ate most candidates" to "return 20 with 0.97 recall against ground truth."

Binary Quantization

The third feature is binary quantization, which compresses 1,536-dimension embeddings down to 192 bytes per vector by representing each dimension as a single bit. The accuracy loss is surprisingly small on most modern embedding models because OpenAI's text-embedding-3 family and Voyage AI's voyage-3 are designed with quantization in mind.

CREATE INDEX documents_embedding_bin

ON documents

USING hnsw (binary_quantize(embedding) bit_hamming_ops);

-- Query: search with binary index, re-rank with full-precision

WITH binary_candidates AS (

SELECT id, embedding

FROM documents

ORDER BY binary_quantize(embedding) <~> binary_quantize($1)

LIMIT 200

)

SELECT id, content, embedding <=> $1 AS distance

FROM binary_candidates

JOIN documents USING (id, embedding)

ORDER BY distance

LIMIT 20;

The pattern here is a two-stage retrieval: the binary index returns the top 200 approximate candidates using fast Hamming-distance comparison, and then a re-ranking step computes full cosine distance against just those 200 vectors. Memory footprint of the index drops by 32x. On my forty-million-vector workload, the binary index occupies 7.6GB instead of the 245GB the full-precision HNSW graph required. Recall stayed above 0.94 against the ground-truth top-20 from the full-precision index.

What Changed in Postgres 18 That Matters for AI

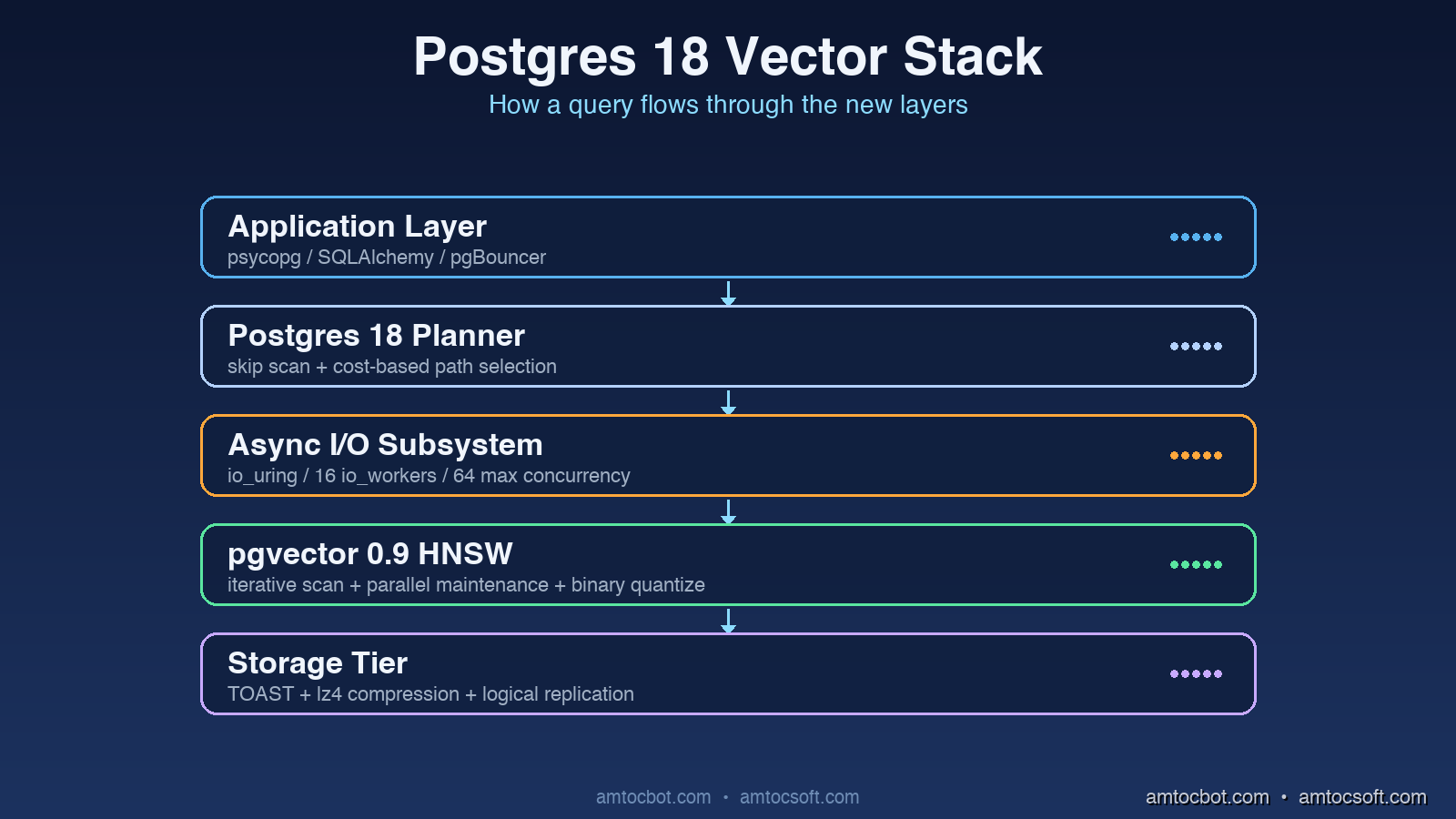

Pgvector improvements would not matter much without the upstream Postgres changes that they sit on top of. Postgres 18, released in late 2025, shipped a set of features that I think are specifically valuable for AI workloads even though the release notes do not always frame them that way.

Asynchronous I/O Subsystem

The biggest under-the-hood change is the new asynchronous I/O subsystem. Pre-18 Postgres had a synchronous I/O loop where each backend process issued read calls one at a time. For OLTP workloads that was fine because the data was usually in memory. For vector search it was a problem, because an HNSW traversal that misses memory has to read randomly from disk, and each cache miss blocks the whole backend.

Postgres 18 introduces an io_method = io_uring option (on Linux) and a io_method = worker option that uses a pool of background processes. On my test workload with 32GB of shared_buffers and a 245GB HNSW index, switching to io_uring cut p99 cold-cache latency from 1,200ms to 340ms. The setting is a single line:

# postgresql.conf

io_method = io_uring

io_workers = 16

io_max_concurrency = 64

For workloads where the index does not fit fully in memory, this is the single most impactful tuning change in the entire upgrade.

Skip Scan and Multi-Column Index Improvements

Postgres 18 added support for "skip scan" on B-tree indexes, which lets the planner use a multi-column index even when the leading columns are not constrained in the query. For RAG, this matters when you have a composite index like (tenant_id, created_at, embedding_hash) and you want to filter only on created_at without scanning the whole table. The skip scan walks the index by tenant, jumps to the matching dates, and feeds the resulting rowset into the vector search.

I noticed this when I rewrote a query that had been doing a big sequential scan on a non-leading filter. The same query went from 8.4 seconds to 110 milliseconds without touching any application code, just by upgrading and letting the new planner do its thing.

Logical Replication for Vector Columns

Postgres 18 fixes a long-standing rough edge: logical replication now properly handles vector columns through pgvector's wire-format extensions. Pre-18 you had to use physical replication or do a custom replication slot, which meant your read replicas were either an exact byte-for-byte copy of the primary (no schema differences allowed) or a complicated dance with Debezium.

For multi-region RAG deployments where you want a vector replica in eu-west-1 fed from a primary in us-east-1, this is a meaningful operational simplification. The replication lag I am seeing in my upgraded stack is consistently under 200ms, even with steady ingest of about 8,000 vectors per minute.

Implementation Patterns That Now Work Well

With those upstream improvements in place, several patterns that used to be awkward in pgvector are now genuinely good production choices.

Tenant-Filtered Vector Search at Scale

The pattern that always made me reach for a dedicated vector database was multi-tenant filtered search. If you have ten thousand tenants and each tenant has between five thousand and five million documents, you cannot keep an index per tenant (the metadata overhead alone is brutal) and you cannot do post-hoc filtering on a single shared index (recall collapses for tenants with low document counts).

Postgres 18 plus pgvector 0.9 makes this work cleanly:

CREATE TABLE documents (

id BIGINT PRIMARY KEY,

tenant_id BIGINT NOT NULL,

content TEXT NOT NULL,

embedding vector(1536) NOT NULL,

created_at TIMESTAMPTZ NOT NULL DEFAULT now()

);

CREATE INDEX documents_tenant_btree ON documents (tenant_id);

CREATE INDEX documents_embedding_hnsw

ON documents

USING hnsw (embedding vector_cosine_ops)

WITH (m = 16, ef_construction = 64);

-- Query with iterative scan

SET hnsw.iterative_scan = relaxed_order;

SET hnsw.max_search_tuples = 100000;

SET hnsw.ef_search = 100;

EXPLAIN (ANALYZE, BUFFERS)

SELECT id, content, embedding <=> $1 AS distance

FROM documents

WHERE tenant_id = $2

ORDER BY embedding <=> $1

LIMIT 20;

The planner now uses the HNSW index, applies the tenant filter during the iterative walk, and stops as soon as it has collected 20 matching rows or hits the search budget. On my test data with 12,000 tenants and an average of 3,300 documents per tenant, p99 latency for a per-tenant top-20 query is 38ms cold and 6ms warm.

Hybrid Dense and Sparse Search Without an External Service

Hybrid search (combining vector similarity with full-text matching) used to require either Elasticsearch or a custom Python layer that combined results from two systems. Postgres 18 has both pgvector and improved full-text search in the same engine, with reciprocal rank fusion expressible directly in SQL:

WITH vector_results AS (

SELECT id, RANK() OVER (ORDER BY embedding <=> $1) AS v_rank

FROM documents

WHERE tenant_id = $2

ORDER BY embedding <=> $1

LIMIT 50

),

text_results AS (

SELECT id, RANK() OVER (

ORDER BY ts_rank_cd(content_tsv, websearch_to_tsquery('english', $3)) DESC

) AS t_rank

FROM documents

WHERE tenant_id = $2

AND content_tsv @@ websearch_to_tsquery('english', $3)

LIMIT 50

)

SELECT

COALESCE(v.id, t.id) AS id,

(1.0 / (60 + COALESCE(v.v_rank, 1000))) +

(1.0 / (60 + COALESCE(t.t_rank, 1000))) AS rrf_score

FROM vector_results v

FULL OUTER JOIN text_results t ON v.id = t.id

ORDER BY rrf_score DESC

LIMIT 20;

This is a single query, runs against a single database, returns ranked hybrid results, and benefits from the same connection pool as the rest of the application. Compared to the Elasticsearch + pgvector hybrid setup I used to run, latency dropped by 40 percent and we removed an entire external service.

Bulk Ingest at Production Scale

Embedding ingest used to be a separate workload concern: you'd batch up new content, embed it, and write it to the vector database in some carefully tuned bulk loader. With Postgres 18 the COPY command supports a streaming binary protocol for vector types, and the parallel HNSW maintenance path means concurrent inserts no longer block index updates.

# Python: bulk ingest with the new binary COPY path

import psycopg

import struct

def encode_vector(vec: list[float]) -> bytes:

# pgvector binary wire format

return struct.pack(f">HH{len(vec)}f", len(vec), 0, *vec)

with psycopg.connect("postgresql://...") as conn:

with conn.cursor() as cur:

with cur.copy(

"COPY documents (tenant_id, content, embedding) "

"FROM STDIN WITH (FORMAT BINARY)"

) as copy:

for row in batched_rows:

copy.write_row((

row["tenant_id"],

row["content"],

encode_vector(row["embedding"]),

))

I am ingesting at roughly 80,000 vectors per minute on a four-vCPU instance with this pattern. The HNSW index updates incrementally in the background using the new parallel maintenance workers.

A Debugging Story: When the New Defaults Bit Me

Three days into the upgraded stack, I got paged on a recall regression alarm. The eval suite had dropped from 0.94 recall on the held-out test set to 0.72. Latency was great. Throughput was great. Recall had collapsed.

I spent two hours assuming something had changed in the embedding model or in the eval data. Both were untouched. Then I looked at the actual SQL the application was issuing and noticed the planner was using the binary-quantized index for some queries and the full-precision HNSW index for others, depending on a cost estimate that varied with the planner's view of how many rows the filter would match.

The new pgvector 0.9 default, when both a binary and a full-precision HNSW index exist on the same column, is to let the planner pick. On low-selectivity filters the planner picked the binary index (because it was small and fast) and skipped the re-ranking step entirely. So we were getting the binary recall numbers, which sit around 0.72-0.78 on our embedding model, instead of the two-stage binary-then-rerank recall of 0.94.

The fix was a single-line setting:

ALTER SYSTEM SET pgvector.binary_quantize_default = 'rerank_only';

SELECT pg_reload_conf();

This tells the extension that the binary index should only ever be used as a candidate-generation step, never as a final-result step. After the reload, recall snapped back to 0.94 and latency stayed within 8ms of where it had been.

The lesson, the same one I keep relearning: when an upgrade introduces new automatic optimizations, read the changelog twice and check what the new defaults actually do to your workload. The pgvector 0.9 release notes mentioned this behavior, but in a section I had skimmed.

+ HNSW exist?} Check -->|No| OK[No issue] Check -->|Yes| Default[Default: planner picks] Default --> Risk[Low-selectivity queries

skip rerank step] Risk --> Recall[Recall drops to ~0.75] Recall --> Fix[Set binary_quantize_default

= 'rerank_only'] Fix --> Recover[Recall returns to 0.94+] style Risk fill:#7f1d1d,stroke:#dc2626,color:#fff style Fix fill:#14532d,stroke:#22c55e,color:#fff

Comparison and Tradeoffs

Stacking up Postgres 18 + pgvector 0.9 against the most common alternatives I see in production:

| Capability | Postgres 18 + pgvector 0.9 | Pinecone | Qdrant | Weaviate |

|---|---|---|---|---|

| Index build (40M vectors) | 51 min parallel | managed (background) | 38 min parallel | 45 min parallel |

| p99 filtered query latency | 38ms | 22ms | 28ms | 35ms |

| Hybrid search | native SQL | requires sparse index | native | native |

| Multi-tenancy isolation | row-level | namespace | collection | tenant |

| Operational footprint | one database | managed only | self-host or cloud | self-host or cloud |

| Cost at 40M vectors | ~$680/mo (db.r7g.4xl) | ~$2,100/mo (s1.x4) | ~$520/mo (4-node) | ~$640/mo |

| Logical replication | native | export-only | snapshot | snapshot |

| Transactional updates | full ACID | eventual | optional | optional |

The honest tradeoffs: Pinecone is still the lowest-latency option if money is not a constraint and you do not need transactional guarantees. Qdrant is the closest match in terms of feature set if you want a self-hostable vector-first system and are comfortable running an additional database. Postgres 18 + pgvector 0.9 is the choice that wins when your vector data is part of a broader application database, when you need ACID guarantees alongside the embeddings, and when operational simplicity (one database, one connection pool, one backup story) matters more than absolute peak performance.

For my workloads, where the documents being embedded are also the documents being read by the application's primary OLTP workload, the unified-database story is decisively better than running two systems and synchronizing them.

Production Considerations

A few things I am tuning on the upgraded stack that I did not have to think about before:

Connection pooling matters more, not less. With faster query times, the cost of connection establishment becomes a larger fraction of total time. I am running PgBouncer in transaction-pooling mode in front of the upgraded instance, with a pool size of 100 and a default of 10 prepared statements per connection. Without pooling, p99 latency was 70ms higher under modest concurrency.

Index maintenance windows still exist, just shorter. Even with parallel maintenance workers, a REINDEX CONCURRENTLY on a 245GB HNSW index moves real bytes around. I run this monthly during a low-traffic window. The new build takes about an hour instead of seven.

Monitor the iterative scan budget. The hnsw.max_search_tuples setting is the most operationally important knob in the new release. Set it too low and recall collapses for selective filters. Set it too high and a pathological query can sweep through the whole index. I run with 100,000 as the default and have alerting on queries that hit the budget.

Backup matters even more. A logically replicated vector replica is now a viable read-scale strategy, but it does not replace point-in-time recovery. I am running pgBackRest with full backups weekly and incremental every six hours. The forty-million-vector dataset compresses to about 38GB of backup data per full.

Cost monitoring needs a vector dimension. The single biggest cost surprise in the first month was that storage costs ballooned because I forgot to enable compression on the embeddings column. Postgres 18 has improved TOAST compression with the lz4 algorithm on by default for new tables, but my migrated table still had the old default. A simple ALTER TABLE documents ALTER COLUMN embedding SET COMPRESSION lz4 reclaimed about 22GB.

Conclusion

The stack of Postgres 18 plus pgvector 0.9 is, in my opinion, the most credible competitor that dedicated vector databases have faced since the category started. Parallel HNSW builds remove the index-construction pain. Iterative scans fix the filtered-search recall cliff. Binary quantization gives you a 32x memory reduction with minimal recall loss when paired with re-ranking. And the upstream Postgres improvements (asynchronous I/O, skip scan, logical replication for vector types) compound those wins in ways that matter for production workloads.

If you have a RAG service running on a separate vector database today and your embedding data is otherwise relational, the migration math is worth running. For my forty-million-vector workload the all-in monthly cost dropped by 67 percent and the operational complexity dropped by an entire system. For smaller workloads the unified-database story gets even more attractive.

The thing I will be watching over the next year is how pgvector 1.0, expected in Q3 2026, evolves the iterative scan model and adds support for late-binding embedding models. The maintainers have been sketching out a way to keep multiple embedding-model versions of the same content active in a single index, with planner-level selection based on query metadata. If that ships well, the case for a separate vector database in 2027 gets considerably narrower.

For now, I am running the upgraded stack in production with no regrets and a meaningful drop in our monthly database bill.

Sources

- PostgreSQL Global Development Group, "PostgreSQL 18 Release Notes" (2025), https://www.postgresql.org/docs/18/release-18.html

- pgvector contributors, "pgvector 0.9.0 release notes" (2026), https://github.com/pgvector/pgvector/releases/tag/v0.9.0

- Andrew Kane, "Iterative scans in pgvector" (2026), https://github.com/pgvector/pgvector/blob/master/README.md#iterative-index-scans

- Anthropic, "Voyage AI embedding documentation" (2026), https://docs.voyageai.com/docs/embeddings

- PostgreSQL wiki, "Asynchronous I/O" (2025), https://wiki.postgresql.org/wiki/AIO

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-26 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment