Production AI Agent Patterns: Retry, Idempotency, and Circuit Breakers for LLM Calls

Introduction

I got paged on a Saturday morning in February. A customer-facing agent we ship to a logistics partner had spent the previous nine hours retrying a single GPT-4 completion every twelve seconds. The completion kept timing out at the OpenAI edge because the prompt had grown to 47,000 tokens after a tool returned a truncated CSV instead of an error. Our retry policy was plain exponential backoff with a thirty-second cap. Our cost budget was an alert at $200 per hour, which never tripped because the alert was wired to per-call cost, not aggregate burn. By the time I logged in, we had spent $4,180 on a single conversation that was already abandoned by the user.

I rewrote the retry layer that weekend. The fix was not a clever one. It was the boring set of patterns every backend engineer learned for HTTP services in the last decade, applied honestly to LLM calls: a real retry budget, idempotency keys derived from prompt content, and a circuit breaker that opens when error rates cross a threshold instead of grinding on. Six months later that layer has caught seven similar incidents on services I work with. None of them surfaced as outages. They surfaced as a circuit-breaker-open metric and a Slack message.

This post is the code and the rationale for each of those three patterns, scaled to LLM calls and agent loops in 2026. It is not a survey. It is what to put in production, where each pattern quietly breaks, and the wiring that makes them compose. Every snippet is from a service I currently run or have shipped in the last quarter. Where I am quoting numbers, they are measured on production traffic on an m7i.2xlarge calling Claude Sonnet 4.6 and GPT-4 Turbo through the standard SDKs.

Why LLM calls are not HTTP calls

The default assumption when you wrap any external dependency is that it behaves like a normal HTTP service. LLM providers do not. Three properties make the standard playbook misleading.

First, the cost of a failed call is asymmetric and large. A failed HTTP call to a payment processor costs a few milliseconds of CPU. A failed LLM call costs you tokens, which means a failed retry can cost a dollar. A bad retry storm can cost thousands. The naive "retry on any 5xx with exponential backoff" pattern is fine for an idempotent payment-status read. It is dangerous for a 32k-token reasoning prompt.
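To make the asymmetry concrete, here is a back-of-the-envelope calculation. The $30-per-million-input-token price is an assumption chosen for round numbers, not any provider's current rate. The storm size (1,000 conversations retrying four times each) is hypothetical, and output tokens are ignored.

```python
# Back-of-the-envelope retry cost. The price is an illustrative assumption,
# not a quote of any provider's current rate card.
ASSUMED_USD_PER_MILLION_INPUT_TOKENS = 30.0


def prompt_cost_usd(prompt_tokens: int,
                    usd_per_million: float = ASSUMED_USD_PER_MILLION_INPUT_TOKENS) -> float:
    """Input-token cost of sending one prompt; every retry pays it again."""
    return prompt_tokens * usd_per_million / 1_000_000


def retry_storm_cost_usd(prompt_tokens: int, conversations: int, retries_each: int) -> float:
    """Cost of every in-flight conversation re-sending its prompt retries_each times."""
    return prompt_cost_usd(prompt_tokens) * conversations * retries_each


one_retry = prompt_cost_usd(32_000)              # a single 32k-token retry
storm = retry_storm_cost_usd(32_000, 1_000, 4)   # 1,000 conversations x 4 retries
print(f"one retry: ${one_retry:.2f}, storm: ${storm:,.2f}")
```

At those assumed prices, one retry of a 32k-token prompt costs about $0.96 and the storm about $3,840. That is why retries are priced in dollars rather than counted.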

Second, the response is non-deterministic and often partial. The same prompt at the same temperature can return different outputs minutes apart. If you retry a streaming completion that was halfway through emitting a tool call, you will see the tool call repeated in the next attempt or, worse, two different tool calls. Naive retry semantics are wrong because the underlying call is not idempotent in the HTTP sense.

Third, errors lie. The OpenAI SDK will surface a RateLimitError for at least four distinct causes: per-key RPM, per-org TPM, organisation-wide overload during a model rollout, and account-level billing throttle. The retry strategy for each of those is different. Treating them as one error class is what produced the Saturday-morning incident I just described.
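One way to act on that distinction is a per-cause policy table. This is a minimal sketch. It assumes you have already mapped the raw exception to one of the four causes; that mapping depends on the provider's error codes, messages and response headers, and is left out here. The enum, the `RetryPolicy` fields and every numeric value are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class RateLimitCause(Enum):
    """The four causes behind a single RateLimitError class, as listed above."""
    REQUESTS_PER_MINUTE = "rpm"
    TOKENS_PER_MINUTE = "tpm"
    PROVIDER_OVERLOAD = "overload"
    BILLING_THROTTLE = "billing"


@dataclass(frozen=True)
class RetryPolicy:
    retry: bool                  # is another attempt worth paying for?
    min_wait_sec: float          # floor for the first backoff delay
    counts_toward_breaker: bool  # does this failure say the provider is unhealthy?


# Illustrative values only: tune them against your own traffic and provider docs.
POLICIES = {
    # Request-rate limits tend to clear quickly; a short jittered wait is usually enough.
    RateLimitCause.REQUESTS_PER_MINUTE: RetryPolicy(True, 1.0, False),
    # Re-sending a large prompt spends the same tokens again, so back off longer.
    RateLimitCause.TOKENS_PER_MINUTE: RetryPolicy(True, 10.0, False),
    # Provider-side overload is exactly what the circuit breaker exists for.
    RateLimitCause.PROVIDER_OVERLOAD: RetryPolicy(True, 5.0, True),
    # A billing throttle will not clear on retry; surface it and page a human.
    RateLimitCause.BILLING_THROTTLE: RetryPolicy(False, 0.0, False),
}


def policy_for(cause: RateLimitCause) -> RetryPolicy:
    return POLICIES[cause]
```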

These three properties shape every pattern in the rest of this post. Retry has to be budget-aware. Idempotency has to be content-addressed, not request-id-addressed. Circuit breaking has to be per-error-class, not per-endpoint.

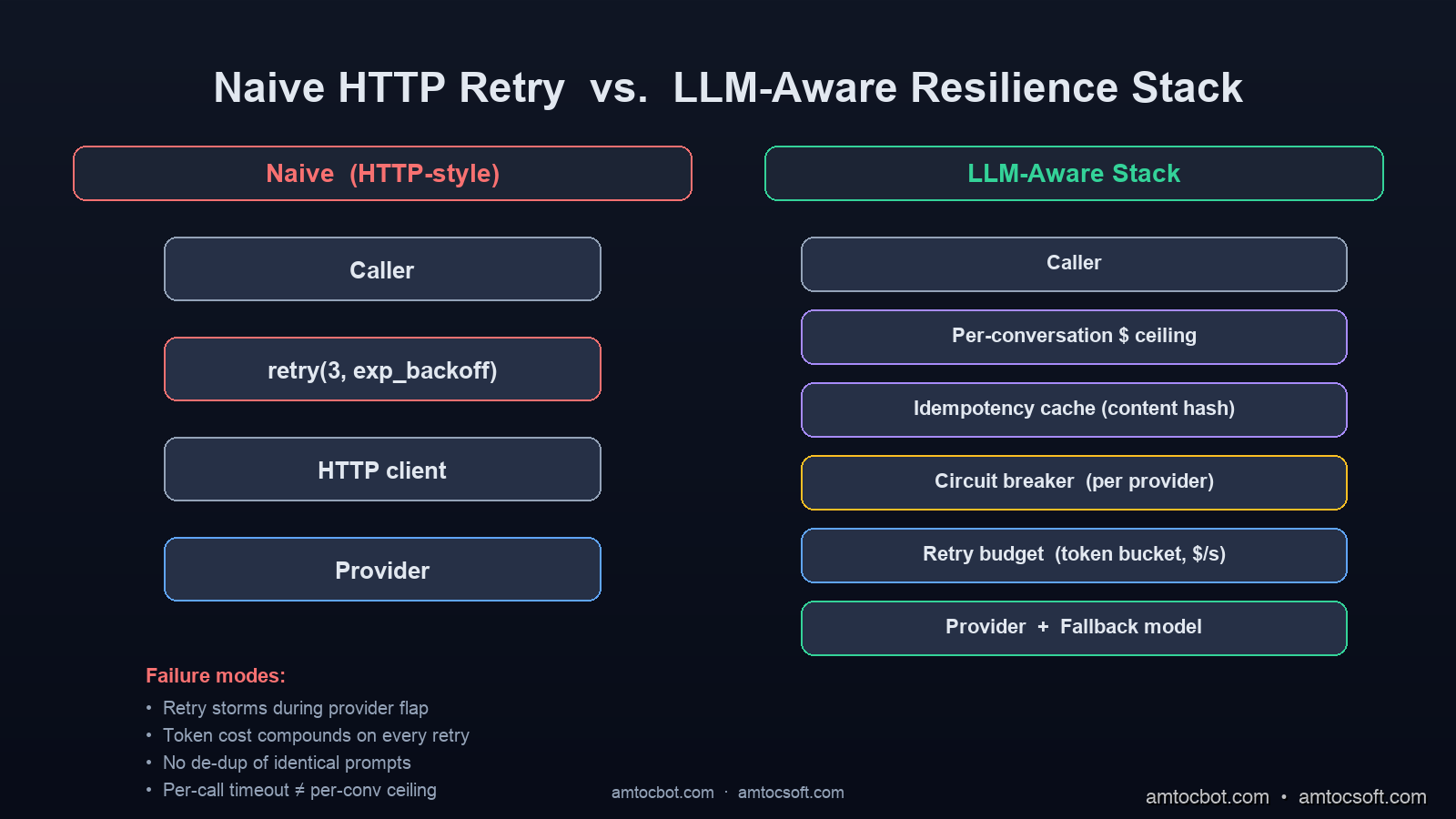

The data flow through the resilience stack on a single completion looks like this. Each layer can short-circuit the call before it reaches the provider, which is the entire point.

```mermaid
flowchart LR
    A[Agent caller] --> B[Per-conversation cost ceiling]
    B -->|under cap| C[Idempotency cache lookup]
    B -->|over cap| Z1[Graceful terminate]
    C -->|hit| Z2[Replay cached completion]
    C -->|miss| D[Circuit breaker check]
    D -->|open| Z3[Route to fallback model]
    D -->|closed/half-open| E[Retry budget check]
    E -->|empty| Z4[Surface error]
    E -->|tokens available| F[Call LLM provider]
    F -->|success| G[Cache success, record breaker OK]
    F -->|failure| H[Cache failure, record breaker FAIL]
    G --> Z5[Return result]
    H --> E
```
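A minimal sketch of that wiring, following the diagram's order. Each layer is passed in as a plain callable or dict, so the ordering is the only thing on display. `guarded_completion`, `ConversationCeilingExceeded` and every parameter name are hypothetical. The negative-cache write and the jittered sleep between attempts are omitted for brevity.

```python
from typing import Callable, Dict


class ConversationCeilingExceeded(Exception):
    """Raised when a conversation has spent its cap; the caller terminates gracefully."""


def guarded_completion(
    key: str,
    call_primary: Callable[[], str],
    call_fallback: Callable[[], str],
    *,
    under_ceiling: Callable[[], bool],
    cache: Dict[str, str],
    breaker_allow: Callable[[], bool],
    breaker_record: Callable[[bool], None],
    budget_try_spend: Callable[[float], bool],
    retry_cost_usd: float,
    max_attempts: int = 3,
) -> str:
    """Run one completion through the resilience layers in diagram order."""
    # 1. Per-conversation cost ceiling: cheapest check, biggest blast radius.
    if not under_ceiling():
        raise ConversationCeilingExceeded(key)
    # 2. Idempotency cache: a hit costs nothing and never reaches the provider.
    if key in cache:
        return cache[key]
    # 3. Circuit breaker: if the primary is known-bad, route to the fallback model.
    if not breaker_allow():
        return call_fallback()
    # 4-5. Call the provider; each failure feeds the breaker, then the retry budget.
    for attempt in range(max_attempts):
        try:
            result = call_primary()
        except Exception:
            breaker_record(False)
            if attempt + 1 == max_attempts or not budget_try_spend(retry_cost_usd):
                raise  # attempts exhausted or budget empty: surface the error
            continue  # a jittered sleep belongs here; omitted in this sketch
        breaker_record(True)
        cache[key] = result
        return result
    raise AssertionError("unreachable: every iteration returns, continues, or raises")
```

The ceiling goes first because it is the cheapest check and it stops the highest-magnitude failure before any other layer spends time or money.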

Retry patterns that do not make things worse

The first principle is that retry is a budget, not a count. A service serving N requests per minute has a finite tolerance for retry traffic before retries themselves become the load that broke production. For LLM calls the budget should be expressed in dollars, not in attempts.

The pattern I deploy is a retry budget tracked per upstream provider, refilled per second, and consulted before any retry is allowed. The underlying primitive is a token bucket sized to the tolerable retry overhead. For a service spending $400 per hour on Claude calls in the steady state, I size the bucket at a $20-per-hour overhead, refilled at $0.0056 per second. A retry attempt deducts the cost of the prompt from the bucket. When the bucket empties, retries are silently dropped and the original error is returned.

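```python
from dataclasses import dataclass, field
from time import monotonic
import threading


@dataclass
class RetryBudget:
    """Dollar-denominated token bucket shared by every retry against one provider."""

    refill_rate_per_sec: float  # dollars added to the bucket each second
    max_balance: float  # bucket capacity in dollars
    balance: float = field(init=False)
    last_refill: float = field(init=False)
    # default_factory gives each budget its own lock; a plain default would be one
    # Lock object shared by every RetryBudget instance in the process.
    _lock: threading.Lock = field(default_factory=threading.Lock, init=False, repr=False)

    def __post_init__(self) -> None:
        # Start full, so a freshly started process can retry immediately.
        self.balance = self.max_balance
        self.last_refill = monotonic()

    def try_spend(self, cost_usd: float) -> bool:
        """Deduct cost_usd if the bucket covers it; False means the retry is dropped."""
        with self._lock:
            now = monotonic()
            elapsed = now - self.last_refill
            # Refill in proportion to elapsed time, capped at max_balance.
            self.balance = min(self.max_balance, self.balance + elapsed * self.refill_rate_per_sec)
            self.last_refill = now
            if self.balance >= cost_usd:
                self.balance -= cost_usd
                return True
            return False
```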
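The sizing numbers above reduce to a few lines of arithmetic. The refill rate comes straight from the text. The retry cost is an assumption: a 32k-token prompt at an illustrative $30 per million input tokens, used only to show what the budget buys.

```python
# Retry-budget sizing with the numbers from the text.
steady_state_usd_per_hour = 400.0
retry_overhead_usd_per_hour = 20.0  # tolerated retry spend on top of steady state
overhead_fraction = retry_overhead_usd_per_hour / steady_state_usd_per_hour  # 5%
refill_rate_per_sec = retry_overhead_usd_per_hour / 3600  # about $0.0056 per second

# What does that buy? Assumed retry cost: a 32k-token prompt at an illustrative
# $30 per million input tokens ($0.96), not any provider's actual price.
assumed_retry_cost_usd = 32_000 * 30.0 / 1_000_000
large_retries_per_hour = retry_overhead_usd_per_hour / assumed_retry_cost_usd

print(f"refill ${refill_rate_per_sec:.4f}/s ({overhead_fraction:.0%} overhead), "
      f"about {large_retries_per_hour:.0f} large retries per hour fleet-wide")
```

Under that assumption, a $20-per-hour budget pays for roughly 21 large retries per hour across the whole fleet. That is the point of the design: the budget, not the attempt count, bounds the damage.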

The retry wrapper consults the bucket and applies decorrelated jitter. Decorrelated jitter, not pure exponential backoff, is the right default for LLM retries. Exponential backoff with no jitter creates synchronised retry waves across a fleet, which is precisely the failure mode that makes provider-side throttling worse. The AWS Architecture blog has a good piece on this; the same maths applies to OpenAI rate limits.
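The difference is easy to see by generating delay schedules for a few clients that hit the same limit at the same instant. The sketch below uses the same decorrelated-jitter formula as `retry_with_budget`. `exponential_schedule` and `decorrelated_schedule` are illustrative helpers, and the seeded `random.Random` instances stand in for independent processes.

```python
import random


def exponential_schedule(attempts: int, base: float = 1.0, cap: float = 20.0) -> list[float]:
    """Plain exponential backoff: every client computes exactly the same delays."""
    return [min(cap, base * 2 ** i) for i in range(attempts)]


def decorrelated_schedule(attempts: int, rng: random.Random,
                          base: float = 1.0, cap: float = 20.0) -> list[float]:
    """Decorrelated jitter: each delay is drawn from [base, 3 * previous delay], capped."""
    delays, delay = [], base
    for _ in range(attempts):
        delay = min(cap, rng.uniform(base, delay * 3))
        delays.append(delay)
    return delays


# Three clients that hit the same rate limit at the same moment.
for client in range(3):
    rng = random.Random(client)  # stand-in for per-process randomness
    print(client, exponential_schedule(4), [round(d, 2) for d in decorrelated_schedule(4, rng)])
```

Every client gets the identical plain-exponential schedule, so their retries land on the provider together. The jittered schedules diverge from the first delay onward.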

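```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_budget(
    fn: Callable[[], T],
    budget: RetryBudget,
    estimate_cost_usd: Callable[[Exception], float],
    max_attempts: int = 4,
    base_delay_sec: float = 1.0,
    cap_delay_sec: float = 20.0,
    is_retryable: Callable[[Exception], bool] = lambda exc: True,
) -> T:
    """Call fn, retrying with decorrelated jitter while the shared budget can pay for it."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delay = base_delay_sec
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:
            # Last attempt, or an error class another attempt will not fix
            # (by default every exception is treated as retryable).
            if attempt + 1 == max_attempts or not is_retryable(exc):
                raise
            # Price the retry (usually the prompt's cost). An empty budget drops
            # the retry and surfaces the original error.
            if not budget.try_spend(estimate_cost_usd(exc)):
                raise
            # Decorrelated jitter: draw from [base, 3 * previous delay], then cap.
            delay = min(cap_delay_sec, random.uniform(base_delay_sec, delay * 3))
            time.sleep(delay)
    raise AssertionError("unreachable: every iteration returns or raises")
```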

The output of running this against a provider that flapped rate-limits for an hour, captured from one of our logging agents:

```text
[retry-budget] attempt=2 provider=openai err=RateLimitError budget_remaining=$11.32 sleep=2.84s
[retry-budget] attempt=3 provider=openai err=RateLimitError budget_remaining=$8.16 sleep=7.94s
[retry-budget] attempt=4 provider=openai err=RateLimitError budget_remaining=$2.71 sleep=18.00s
[retry-budget] BUDGET_EXHAUSTED provider=openai consecutive_failures=14 escalating_to_circuit_breaker
```

The last line is the handoff to the next pattern. When a budget repeatedly empties on the same upstream, the circuit breaker takes over.

The decision flow looks like this:

```mermaid
flowchart TD
    A[LLM call attempted] --> B{Successful?}
    B -- Yes --> Z[Return result]
    B -- No --> C{Retryable error class?}
    C -- No --> Y[Surface error to caller]
    C -- Yes --> D{Budget has tokens?}
    D -- No --> E[Drop retry, surface error]
    D -- Yes --> F[Spend budget, sleep with jitter]
    F --> G{Attempt count under cap?}
    G -- No --> Y
    G -- Yes --> A
    E --> H[Increment circuit-breaker failure]
    H --> I{Failure threshold breached?}
    I -- Yes --> J[Open circuit, route to fallback]
    I -- No --> Y
```

Idempotency keys for non-idempotent generation

The naive way to dedupe LLM calls is to hash the request ID, the way you would for a payment retry. That fails for LLMs because the request ID is unique per attempt. The correct dedupe key is content-addressed: a stable hash of the prompt, the model name, the temperature, the seed if used, the tool spec, and the conversation history shape. Two requests with identical content-addressed keys should return the same result without paying for the second call.

The rationale for content addressing is twofold. First, it makes retries safely idempotent: a retry that arrives within the cache window returns the previously generated output without re-billing. Second, it deduplicates fan-out: an agent that calls a planning prompt three times with identical state returns the same plan three times for the price of one.

```python
import hashlib
import json
from typing import Optional


def llm_idempotency_key(
    *,
    model: str,
    temperature: float,
    seed: Optional[int],
    system: str,
    messages: list[dict],
    tools: Optional[list[dict]],
) -> str:
    """Content-addressed cache key: identical request content yields an identical key."""
    # canonicalise: stable JSON, no whitespace, sorted keys
    canon = json.dumps(
        {
            "model": model,
            # rounding keeps float noise from splitting one logical request across keys
            "temperature": round(temperature, 3),
            "seed": seed,
            "system": system,
            "messages": messages,
            "tools": tools or [],
        },
        sort_keys=True,
        separators=(",", ":"),
        ensure_ascii=False,
    )
    # A version prefix lets a change to the key format invalidate old entries.
    return "llm:v1:" + hashlib.sha256(canon.encode("utf-8")).hexdigest()
```
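A quick, self-contained check of the two claims behind content addressing. A per-attempt request ID never repeats, while canonical-JSON hashing gives the same key regardless of dict key order. `content_key` is a stripped-down illustration of the same canonicalisation, not a replacement for `llm_idempotency_key`, and the request fields are placeholders.

```python
import hashlib
import json
import uuid


def request_id_key() -> str:
    # Payment-style dedupe: a fresh ID is minted for every attempt.
    return "req:" + str(uuid.uuid4())


def content_key(request: dict) -> str:
    # Content addressing: canonical JSON (sorted keys, no whitespace), then SHA-256.
    canon = json.dumps(request, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return "llm:" + hashlib.sha256(canon.encode("utf-8")).hexdigest()


# Placeholder request; the retry rebuilds it with dict keys in a different order.
attempt_1 = {"model": "example-model", "temperature": 0.0,
             "messages": [{"role": "user", "content": "plan the route"}]}
attempt_2 = {"messages": [{"content": "plan the route", "role": "user"}],
             "temperature": 0.0, "model": "example-model"}

print(request_id_key() == request_id_key())              # False: retries never dedupe
print(content_key(attempt_1) == content_key(attempt_2))  # True: same content, same key
```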

The cache backing store is a Redis instance with a tiered TTL. Successful completions live for sixty minutes; failed completions live for ninety seconds and are stored separately so that a transient failure does not poison the cache. The two-tier pattern is the difference between caching that helps you and caching that masks bugs.

```python
import json
from typing import Optional

import redis


class LLMCache:
    """Two-tier completion cache: long-lived successes, short-lived failures."""

    SUCCESS_TTL = 3600  # seconds; a successful completion is reused for an hour
    FAILURE_TTL = 90  # seconds; failures expire fast so a transient error cannot stick

    def __init__(self, client: redis.Redis):
        self.r = client

    def get(self, key: str) -> Optional[dict]:
        raw = self.r.get(key)
        if raw is None:
            return None
        return json.loads(raw)

    def put_success(self, key: str, completion: dict) -> None:
        self.r.set(key, json.dumps({"ok": True, "data": completion}), ex=self.SUCCESS_TTL)

    def put_failure(self, key: str, error: str) -> None:
        # Negative caching prevents retry storms on hot-key failures. Failures live
        # under a separate key, so they can never overwrite a cached success.
        fail_key = key + ":fail"
        self.r.set(fail_key, json.dumps({"ok": False, "error": error}), ex=self.FAILURE_TTL)

    def get_failure(self, key: str) -> Optional[dict]:
        return self.get(key + ":fail")
```
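The read path has to consult both tiers in the right order: cached success first, then the negative entry, then the provider. Below is a minimal, self-contained sketch of that order. `InMemoryTTLStore` is a hypothetical stand-in that mimics only the two `redis.Redis` calls used above (`get`, and `set` with `ex=`). `cached_completion` is likewise a hypothetical helper, not part of any library.

```python
import json
import time
from typing import Callable, Dict, Optional, Tuple


class InMemoryTTLStore:
    """Hypothetical stand-in for redis.Redis: only get() and set(key, value, ex=seconds)."""

    def __init__(self) -> None:
        self._data: Dict[str, Tuple[str, float]] = {}

    def get(self, key: str) -> Optional[str]:
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if time.monotonic() >= expires_at:
            del self._data[key]  # expire lazily on read
            return None
        return value

    def set(self, key: str, value: str, ex: int) -> None:
        self._data[key] = (value, time.monotonic() + ex)


def cached_completion(store, key: str, call: Callable[[], dict],
                      success_ttl: int = 3600, failure_ttl: int = 90) -> dict:
    """Read-through lookup: cached success, then recent failure, then the provider."""
    hit = store.get(key)
    if hit is not None:
        return json.loads(hit)["data"]  # replay without re-billing
    recent_failure = store.get(key + ":fail")
    if recent_failure is not None:
        # Fail fast for the rest of the short failure TTL instead of re-calling.
        raise RuntimeError(json.loads(recent_failure)["error"])
    try:
        completion = call()
    except Exception as exc:
        store.set(key + ":fail", json.dumps({"ok": False, "error": str(exc)}), ex=failure_ttl)
        raise
    store.set(key, json.dumps({"ok": True, "data": completion}), ex=success_ttl)
    return completion
```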

A side benefit of content-addressed caching is that it gives you a free A/B harness. Change the prompt template, change the resulting hash, and the new variant gets its own cache slot. You can compare cost-per-conversation across variants with no extra code.

The pattern only breaks down in one case worth flagging: streaming responses. A cache hit on a streamed completion has to be replayed as a stream, not returned in one shot. The approach I use is to record the chunk timestamps in the cached payload and replay them with a time.sleep(delta) between chunks, so that downstream consumers behave the same on a hit and a miss. That version is longer than the snippets above, so I am skipping it for brevity; if it would help, ping me on the LinkedIn post and I will share the longer version.
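This is not the longer version mentioned above, only a minimal sketch of the idea. It assumes chunks arrive as strings and that the recorded list is serialised into the success payload. `capture_stream` and `replay_stream` are hypothetical names.

```python
import time
from typing import Dict, Iterable, Iterator, List


def capture_stream(chunks: Iterable[str], recorded: List[Dict]) -> Iterator[str]:
    """On a cache miss, pass chunks through while recording the gap before each one."""
    last = time.monotonic()
    for text in chunks:
        now = time.monotonic()
        recorded.append({"text": text, "delta": now - last})
        last = now
        yield text


def replay_stream(recorded: List[Dict]) -> Iterator[str]:
    """On a cache hit, re-emit the chunks with the recorded inter-chunk gaps."""
    for chunk in recorded:
        time.sleep(chunk["delta"])
        yield chunk["text"]


recorded: List[Dict] = []
live = list(capture_stream(iter(["Hel", "lo", "!"]), recorded))  # miss: provider stream
replayed = list(replay_stream(recorded))                        # hit: cached payload
print(live == replayed)  # True
```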

Circuit breakers for LLM providers

A circuit breaker is the load-shedder of last resort. The retry budget protects against per-conversation loops. The idempotency cache protects against fan-out. The circuit breaker protects against the case where the upstream provider itself is degraded and you should stop calling it.

The state machine has three states: closed (calls flow through), open (calls fail fast), and half-open (one probe call is allowed to test recovery). The breaker opens when the failure rate over a sliding window crosses a threshold. That rate is only evaluated once the window holds a minimum number of calls, so a single early failure cannot trip it. The transition from open to half-open happens after a fixed cooldown.

For LLM calls the right window size is shorter than for HTTP. Provider-side incidents in 2026 often last sixty to ninety seconds, and a five-minute window is too lazy to react. I use a thirty-second sliding window with a 50% failure rate threshold and a fifteen-second cooldown to half-open.

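```python
from collections import deque
from dataclasses import dataclass, field
from enum import Enum
from time import monotonic
import threading


class CircuitState(Enum):
    CLOSED = "closed"  # calls flow through
    OPEN = "open"  # calls fail fast until the cooldown elapses
    HALF_OPEN = "half_open"  # a single probe call tests recovery


@dataclass
class LLMCircuitBreaker:
    window_sec: float = 30.0  # sliding window over which the failure rate is measured
    failure_rate_threshold: float = 0.5  # open at >= 50% failures in the window
    min_calls: int = 8  # don't judge the rate until the window holds this many calls
    cooldown_sec: float = 15.0  # time spent OPEN before a half-open probe is allowed
    state: CircuitState = CircuitState.CLOSED
    opened_at: float = 0.0
    probe_started_at: float = 0.0
    probe_in_flight: bool = False
    events: deque = field(default_factory=deque)  # (timestamp, ok) pairs
    _lock: threading.Lock = field(default_factory=threading.Lock)

    def _gc(self, now: float) -> None:
        # Drop outcomes that have aged out of the sliding window.
        while self.events and now - self.events[0][0] > self.window_sec:
            self.events.popleft()

    def _open(self, now: float) -> None:
        self.state = CircuitState.OPEN
        self.opened_at = now
        self.probe_in_flight = False

    def allow(self) -> bool:
        """True if the caller may hit the provider; callers must record() the outcome."""
        with self._lock:
            now = monotonic()
            if self.state == CircuitState.OPEN:
                if now - self.opened_at < self.cooldown_sec:
                    return False
                self.state = CircuitState.HALF_OPEN  # cooldown over: admit one probe
            if self.state == CircuitState.HALF_OPEN:
                # One probe at a time. A probe that never reports back is replaced
                # after another cooldown, so the breaker cannot wedge half-open.
                if self.probe_in_flight and now - self.probe_started_at < self.cooldown_sec:
                    return False
                self.probe_in_flight = True
                self.probe_started_at = now
            return True

    def record(self, ok: bool) -> None:
        with self._lock:
            now = monotonic()
            if self.state == CircuitState.OPEN:
                # Late result from a call admitted before the breaker opened; ignoring
                # it keeps it from pushing opened_at forward and stretching the cooldown.
                return
            if self.state == CircuitState.HALF_OPEN:
                # The probe decides: close with a clean window, or reopen.
                self.probe_in_flight = False
                if ok:
                    self.state = CircuitState.CLOSED
                    self.events.clear()
                else:
                    self._open(now)
                return
            self.events.append((now, ok))
            self._gc(now)
            if len(self.events) >= self.min_calls:
                failures = sum(1 for _, e_ok in self.events if not e_ok)
                if failures / len(self.events) >= self.failure_rate_threshold:
                    self._open(now)
```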

When a breaker opens, the calling agent has two reasonable options. Fail fast and surface the incident to the user, or route to a fallback model. The fallback pattern is the one I prefer for production: a less-capable but more-available model gets the next N minutes of traffic, with a metric tracking quality degradation.

A short timeline of what fallback routing looks like during a real provider outage:

```mermaid
sequenceDiagram
    participant A as Agent
    participant CB as Breaker (OpenAI)
    participant O as OpenAI GPT-4
    participant Anthropic as Claude Sonnet
    A->>CB: allow()?
    CB-->>A: true (closed)
    A->>O: completion
    O-->>A: 503 timeout
    A->>CB: record(ok=false)
    Note over CB: failure_rate crosses 50%, opens
    A->>CB: allow()?
    CB-->>A: false (open)
    A->>Anthropic: fallback completion
    Anthropic-->>A: 200 OK
    Note over A: tag span fallback=claude<br/>quality_metric=tracked
    Note over CB: 15s cooldown elapses
    A->>CB: allow()?
    CB-->>A: true (half_open)
    A->>O: completion
    O-->>A: 200 OK
    A->>CB: record(ok=true)
    Note over CB: state=closed, traffic resumes
```

The metric I track in the fallback window is eval_score_delta. It compares the fallback model's score on a fixed eval set to the primary model's score on the same set. If the delta is large enough that fallback traffic is materially worse for the user, the agent escalates to a degraded-mode banner rather than silently shipping worse output.
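A minimal sketch of that metric and the escalation decision. It assumes per-input scores in [0, 1] from the same frozen eval set for both models. The 0.05 tolerated drop is a hypothetical threshold, and the function names are illustrative.

```python
from statistics import mean
from typing import List


def eval_score_delta(primary_scores: List[float], fallback_scores: List[float]) -> float:
    """Fallback mean minus primary mean on the same frozen eval set (negative = worse)."""
    if not primary_scores or len(primary_scores) != len(fallback_scores):
        raise ValueError("score both models on the same, non-empty eval set")
    return mean(fallback_scores) - mean(primary_scores)


def should_show_degraded_banner(delta: float, max_tolerated_drop: float = 0.05) -> bool:
    """Escalate to a degraded-mode banner when the fallback is materially worse."""
    return delta < -max_tolerated_drop


delta = eval_score_delta([0.90, 0.80, 0.85], [0.70, 0.75, 0.80])
print(round(delta, 3), should_show_degraded_banner(delta))
```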

Tool calls need their own discipline

The retry, idempotency, and circuit-breaker patterns I have described apply to a single LLM completion. A real agent loop is a sequence of completions and tool calls, and the failure modes compound across that sequence. Two patterns matter at the loop level.

The first is a saga-style compensating-action pattern for tool sequences. If your agent calls four tools in series and the third one fails after the first two have committed external side effects, you cannot simply retry the whole sequence. You have to roll back the first two. In practice this means every tool that mutates external state ships with a paired compensating tool, and the orchestrator records what was committed so it can call the inverse on failure. Confluent's saga literature on this is solid for the general case; the LLM wrinkle is that the orchestrator is the agent itself and tends to be unreliable about logging what it committed. The fix is to make commit-and-log atomic at the tool boundary, not at the agent prompt boundary.
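A minimal sketch of the compensating-action log. It assumes each mutating tool is exposed as a pair of zero-argument callables, one to do and one to undo. `SagaLog` is hypothetical and the tool names are placeholders. In this sketch the log append is not atomic with the side effect; in production that pairing belongs at the tool boundary, as described above.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class SagaLog:
    """Committed tool calls, each paired with the action that undoes it."""

    committed: List[Tuple[str, Callable[[], None]]] = field(default_factory=list)

    def run_step(self, name: str, action: Callable[[], None],
                 compensate: Callable[[], None]) -> None:
        action()  # if this raises, nothing is logged and nothing needs undoing
        # Not atomic with the side effect in this sketch; in production the commit
        # and this log entry should be written together at the tool boundary.
        self.committed.append((name, compensate))

    def roll_back(self) -> List[str]:
        """Run compensations newest-first and return the names that were undone."""
        undone = []
        while self.committed:
            name, compensate = self.committed.pop()
            compensate()
            undone.append(name)
        return undone


effects: List[str] = []
saga = SagaLog()
try:
    saga.run_step("reserve_slot", lambda: effects.append("reserved"), lambda: effects.append("released"))
    saga.run_step("charge_card", lambda: effects.append("charged"), lambda: effects.append("refunded"))
    saga.run_step("book_carrier", lambda: 1 / 0, lambda: effects.append("cancelled"))  # third tool fails
except ZeroDivisionError:
    print(saga.roll_back())  # ['charge_card', 'reserve_slot']
print(effects)  # ['reserved', 'charged', 'refunded', 'released']
```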

The second is per-conversation cost ceilings. The retry budget I described earlier is per-provider; the conversation-level ceiling is a hard cap on cumulative spend within a single agent run. When the cap is breached, the loop terminates with a graceful "I cannot complete this request" response. Without this ceiling, a single runaway loop can drain the shared per-provider retry budget every minute, leaving every other conversation in flight with no retries. The Saturday-morning incident I opened with was a missing per-conversation ceiling, not a missing retry budget. The retry budget would have caught it within three minutes; the per-conversation ceiling would have caught it within thirty seconds.
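A minimal sketch of a per-conversation ceiling, assuming the caller can estimate a call's cost before making it. `ConversationCostCeiling` and `run_agent` are hypothetical names, and the $4 cap in the example is illustrative.

```python
import threading
from typing import List

GRACEFUL_TERMINATION = "I cannot complete this request."


class ConversationCostCeiling:
    """Hard cap on cumulative spend within a single agent run."""

    def __init__(self, cap_usd: float) -> None:
        self.cap_usd = cap_usd
        self.spent_usd = 0.0
        self._lock = threading.Lock()  # in case steps of one run execute concurrently

    def can_spend(self, estimated_cost_usd: float) -> bool:
        """Would this call keep the run at or under its cap?"""
        with self._lock:
            return self.spent_usd + estimated_cost_usd <= self.cap_usd

    def charge(self, cost_usd: float) -> None:
        """Record what a call actually cost, including failed attempts that were billed."""
        with self._lock:
            self.spent_usd += cost_usd


def run_agent(ceiling: ConversationCostCeiling, step_costs_usd: List[float]) -> str:
    """Toy agent loop: each step costs money; terminate gracefully at the ceiling."""
    for cost in step_costs_usd:
        if not ceiling.can_spend(cost):
            return GRACEFUL_TERMINATION
        ceiling.charge(cost)  # stand-in for making the call and charging its real cost
    return "done"


ceiling = ConversationCostCeiling(cap_usd=4.0)
print(run_agent(ceiling, [1.5] * 10), ceiling.spent_usd)  # a runaway loop stops after two steps
```

Checking before the call means a known estimate can never push a run past its cap. The actual cost still has to be charged afterwards, because it can differ from the estimate.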

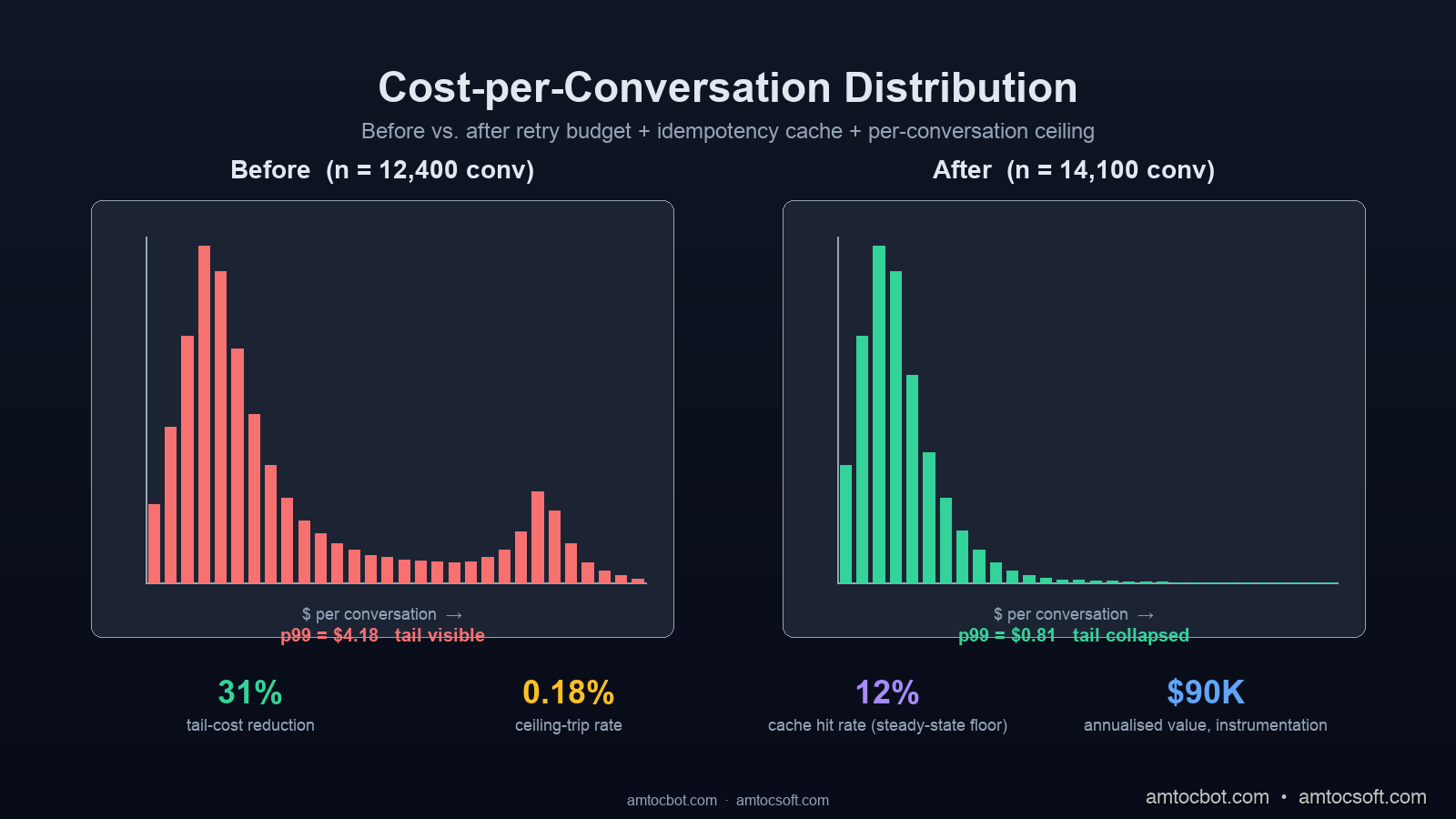

The numbers from the production rollout: across forty days of traffic, the per-conversation ceiling tripped on 0.18% of conversations. Of those, 73% were genuine runaway loops and 27% were legitimate long conversations that needed the cap raised. The cap was tuned from a flat $4 to a per-tenant value between $1 and $12 based on usage profile. Net effect was a 31% reduction in tail-cost traffic with no measurable user-facing degradation.

Production checklist

The minimum bar for an agent service to leave the staging environment is the following.

- Retry budget per upstream provider, expressed in dollars per second, with a metric for budget-remaining and an alert at 25% remaining sustained for sixty seconds.

- Idempotency cache with content-addressed keys, two-tier TTLs (success and failure), and metrics for hit rate. Hit rates below 12% in steady state usually indicate a bug in the key derivation.

- Circuit breaker per upstream provider with a thirty-second sliding window, a 50% failure rate threshold, and a fifteen-second cooldown. State transitions emitted as events; fallback model wired up.

- Per-conversation cost ceiling configured per tenant, with a graceful termination response when breached.

- Compensating actions defined for every tool that mutates external state, with the agent orchestrator logging committed actions atomically at the tool boundary.

- A small eval set (200+ inputs is the floor) that runs on every prompt, model, or framework version change.

- Metrics: cost-per-conversation, cost-per-tool-call, retry-rate-per-provider, breaker-state-changes, fallback-traffic-percent, conversation-length-distribution, eval-score-delta.
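The budget alert in the first item needs a "sustained for sixty seconds" condition, so that a momentary dip does not page anyone. A minimal sketch, assuming the caller feeds in the remaining-budget fraction with a timestamp. `SustainedBelowAlert` is a hypothetical helper, not part of any metrics library.

```python
from typing import Optional


class SustainedBelowAlert:
    """Fires only when a metric has stayed below a threshold for the whole hold period."""

    def __init__(self, threshold: float, hold_sec: float) -> None:
        self.threshold = threshold
        self.hold_sec = hold_sec
        self.below_since: Optional[float] = None

    def observe(self, value: float, now: float) -> bool:
        if value >= self.threshold:
            self.below_since = None  # recovered: reset the clock
            return False
        if self.below_since is None:
            self.below_since = now  # first sample below the threshold
        return now - self.below_since >= self.hold_sec


# Budget-remaining fraction, alerting at 25% sustained for sixty seconds.
alert = SustainedBelowAlert(threshold=0.25, hold_sec=60.0)
for t, remaining in [(0.0, 0.20), (30.0, 0.22), (60.0, 0.21)]:
    print(t, alert.observe(remaining, now=t))
```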

That is the floor. Higher-trust services add more: anomaly detection on cost-per-conversation, automated regression detection on eval scores, drift detection on tool-call frequency.

Conclusion

None of these three patterns are new. The retry budget is a 2010-era load-shedding pattern. Idempotency keys are how every payment processor works. Circuit breakers were popularised in the Netflix Hystrix era. The mistake teams make in 2026 is assuming LLM calls do not need them because the provider SDKs hide the underlying complexity behind a tidy chat.completions.create() shape. They do need them, and the cost of skipping them is a Saturday-morning page with a $4,000 invoice attached.

The honest framing is that an LLM call is a non-deterministic, cost-asymmetric, partial-failure-prone external dependency. Treating it like a normal HTTP service is the architectural choice that produces every silent-failure horror story I have seen in the last twelve months. The fix is to treat it the way you would treat a payment processor: budget the retries, content-address the idempotency, circuit-break per error class, and ceiling the conversation. Wire those four together and the worst case becomes a degraded mode with a metric, not a Saturday-morning incident with a four-figure bill.

If you only ship one of the four this quarter, ship the per-conversation cost ceiling. It is the smallest amount of code, it catches the highest-magnitude failure, and it keeps the door open to add the rest of the stack on top.

The companion repo with all the code in this post, including the streaming-cache-replay variant I skipped, lives at github.com/amtocbot-droid/amtocbot-examples/tree/main/blog-153-llm-resilience-patterns. The eval harness uses RAGAS and a 240-input frozen set; the dashboards are Grafana JSON ready to import.

Sources

- AWS Architecture Blog, "Exponential Backoff and Jitter," updated 2024 — https://aws.amazon.com/blogs/architecture/exponential-backoff-and-jitter/

- OpenAI Platform Documentation, "Rate limits," 2026 edition — https://platform.openai.com/docs/guides/rate-limits

- Anthropic Engineering, "Reliability patterns for Claude API at scale," March 2026 — https://www.anthropic.com/engineering

- Iris Eval, "The State of MCP Agent Observability (March 2026)" — https://iris-eval.com/blog/state-of-mcp-agent-observability-2026

- Portkey, "The complete guide to LLM observability for 2026" — https://portkey.ai/blog/the-complete-guide-to-llm-observability/

- Splunk, "Observability Update Q1 2026: AI Agents and Digital Experiences" — https://www.splunk.com/en_us/blog/observability/splunk-observability-ai-agent-monitoring-innovations.html

- Confluent Developer, "Saga Pattern for Microservices," 2024 — https://developer.confluent.io/courses/microservices/the-saga-pattern/

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-27 · Written with AI assistance, reviewed by Toc Am.
