AI Coding Tool Stack Consolidation: Why Teams Are Cutting from Five Tools to Two

Introduction

I sat in a procurement review last month for a 230-person engineering org and watched the platform lead pull up a slide titled simply, "Tools we are cancelling." The list had six names on it, five of them AI coding tools. The next slide showed what survived: a single combined stack of Cursor and Claude Code. The CFO asked, slightly amused, why the company had been paying for so many AI coding products at once. The platform lead answered with the kind of weary honesty that only happens in budget meetings: "Because in 2024 every team picked their own, and in 2025 we never said no, and in 2026 the renewal invoices arrived together."

That meeting is happening across a lot of companies right now. Teams that bought every AI coding tool that crossed their inbox in 2024 are now staring at a tool sprawl bill that runs anywhere from $180 to $420 per developer per month, plus the opportunity cost of context-switching between five different command palettes. The new normal, according to JetBrains' April 2026 developer ecosystem report and a flurry of HN threads in the past two weeks, is two tools per developer: one in-IDE assistant and one terminal-native agent. Everything else is being phased out.

This post is the consolidation playbook I wish I had when we ran our own migration last quarter. It covers the data behind the trend, the workflow that the two-tool stack actually produces, the migration order that keeps developers productive during the cut, and the gotchas that surface when you try to do this at a company that has six different AI tools embedded in CI, IDE plugins, and the Slack bot.

Why the stack ballooned to five tools in the first place

The 2024 to 2025 expansion was not a planning failure. It was a discovery problem. Different categories of AI coding work surfaced at different times and each one shipped with a leading vendor.

The five-tool baseline I see most often looks like this. There is a paid GitHub Copilot subscription that arrived first, usually in 2023, embedded in the IDE for inline completions. Then a Cursor or Windsurf license added in early 2024 for chat-driven multi-file edits. Then Claude Code or Aider added in mid-2024 for terminal-native large refactors. Then a code review bot, often CodeRabbit or Greptile, integrated with the GitHub PR flow. Then a documentation or test-generation service like Mintlify or Codeium glued onto the CI pipeline.

Each one solved a real problem. Copilot autocompleted boring code. Cursor refactored across files without copy-paste. Claude Code ran multi-step terminal tasks without a human in the loop. CodeRabbit caught review issues humans missed. Mintlify wrote the API reference no one would have written by hand. The cumulative effect was real productivity. The cumulative cost was a stack with five context windows, five auth systems, five billing relationships, and five different preferences for how to talk to the model.

The ByteByteGo April 2026 newsletter measured this directly. Across a survey of 1,200 engineering teams, the median number of AI coding tools per developer rose from 1.4 in Q3 2023 to 4.2 in Q4 2025. That is a threefold increase in about 27 months without a corresponding 3x productivity uplift. The same survey found that the marginal productivity contribution of the fifth tool was indistinguishable from zero within the margin of error.

The data behind the consolidation

Three numbers explain why the five-tool stack is collapsing.

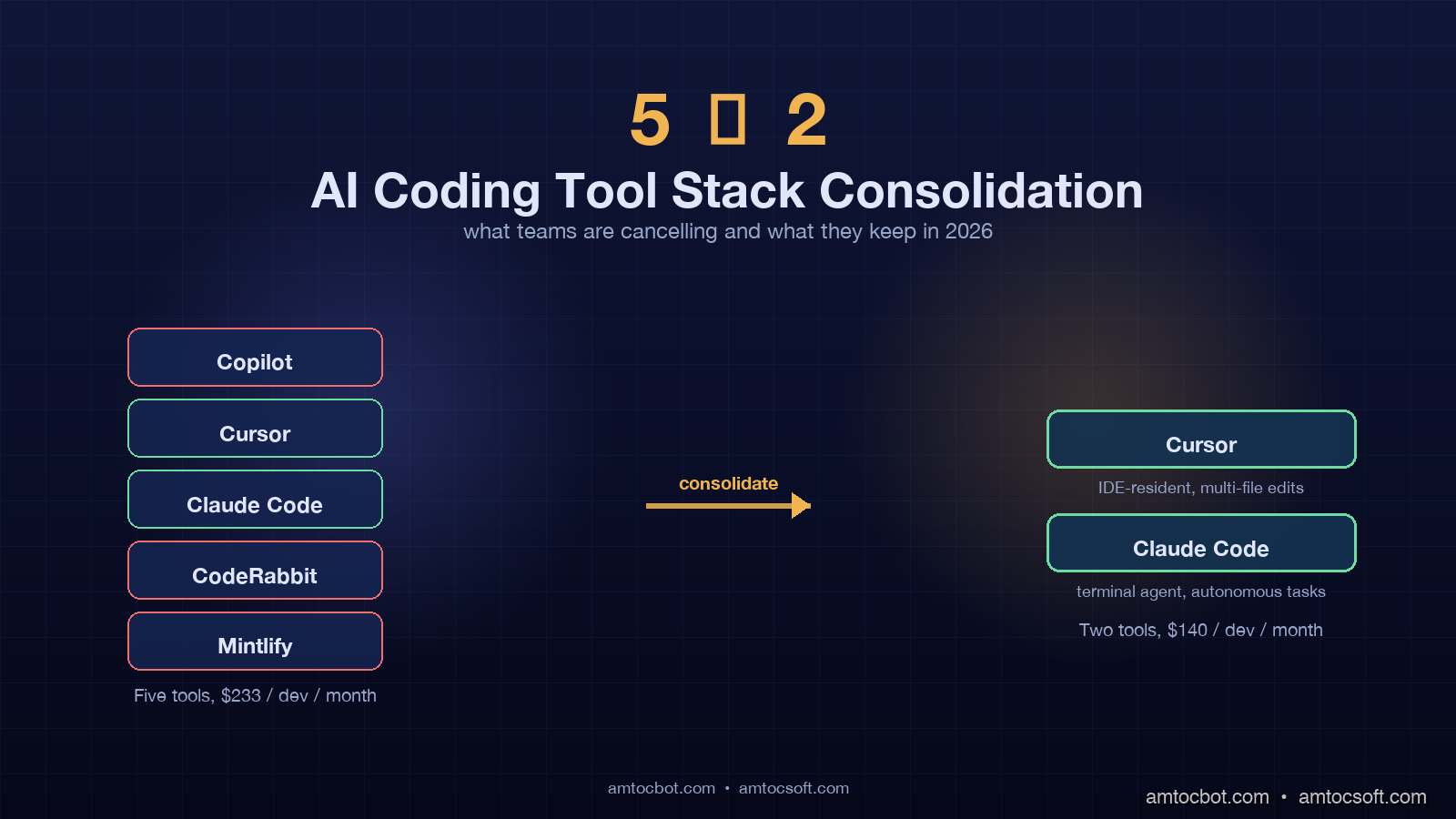

The first is cost. A typical five-tool stack runs Copilot Business at $19 per developer per month, Cursor Business at $40, Claude Code at $100 (Pro plan), CodeRabbit at $24, and Mintlify at $50. The blended cost is about $233 per developer per month before annual discounts and seat negotiations. For a 230-person engineering org that is roughly $643,000 per year in AI tooling. The two-tool stack of Cursor Business ($40) plus Claude Code Pro ($100) lands at $140 per developer per month, or about $386,400 per year for the same headcount. The annual delta is $256,600. That is a senior engineer's loaded salary in most US markets.
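The cost arithmetic above is easy to re-run with your own seat prices and headcount. This is a minimal sketch using the list prices quoted in this section; real invoices will differ after annual discounts and seat negotiations, and the dictionary keys are only labels.

```python
# Per-developer monthly list prices quoted in this section (USD).
FIVE_TOOL_STACK = {
    "Copilot Business": 19,
    "Cursor Business": 40,
    "Claude Code": 100,
    "CodeRabbit": 24,
    "Mintlify": 50,
}
TWO_TOOL_STACK = {
    "Cursor Business": 40,
    "Claude Code": 100,
}


def monthly_cost_per_dev(stack: dict[str, int]) -> int:
    """Sum of per-seat monthly prices for one developer."""
    return sum(stack.values())


def annual_cost(stack: dict[str, int], headcount: int) -> int:
    """Yearly spend for the whole org at list price, ignoring discounts."""
    return monthly_cost_per_dev(stack) * headcount * 12


HEADCOUNT = 230
old = annual_cost(FIVE_TOOL_STACK, HEADCOUNT)
new = annual_cost(TWO_TOOL_STACK, HEADCOUNT)
print(f"five-tool: ${old:,}/yr, two-tool: ${new:,}/yr, saved: ${old - new:,}/yr")
# → five-tool: $643,080/yr, two-tool: $386,400/yr, saved: $256,680/yr
```

The unrounded saving is $256,680; the $256,600 figure in the text comes from subtracting $386,400 from the rounded $643,000.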

The second number is time-to-task-complete. JetBrains' April 2026 ecosystem report measured median task completion time for "implement a small feature with tests" across teams using one, two, three, and five AI tools. The two-tool teams hit 38 minutes. The five-tool teams hit 47 minutes. The single-tool teams hit 51 minutes. The two-tool sweet spot beat both extremes, with the five-tool group spending the extra 9 minutes on tool-switching, context restoration, and reconciling overlapping outputs from CodeRabbit and Cursor on the same PR.

The third number is the satisfaction delta. Stack Overflow's January 2026 developer survey found that 71 percent of developers using exactly two AI coding tools reported being "very satisfied" with their AI workflow, compared with 49 percent of five-tool users and 54 percent of one-tool users. The pattern suggests that five tools add real cognitive overhead, while a single tool leaves capability gaps that a second tool fills.

The two-tool consolidation is not a vibes-based trend. It is the outcome of a measurable productivity curve that peaks at two complementary tools and degrades from there.

The two-tool workflow that actually works

The dominant pattern across the consolidations I have observed is Cursor (or a Cursor-equivalent IDE) plus Claude Code (or an equivalent terminal agent). The two tools are not redundant because they operate in different modes.

Cursor is the IDE-resident continuous companion. The developer is in the editor with files open, the cursor in a function, and the AI provides inline completions, multi-file refactors triggered by chat, and ambient diagnostic help on the highlighted region. The interaction is high-frequency and low-latency. Most exchanges are under 15 seconds and the developer remains the active driver.

Claude Code is the terminal-native autonomous agent. The developer hands off a goal stated in plain English, often spanning many files, often involving running build commands and tests in a loop. The interaction is low-frequency and high-latency. Most sessions run 5 to 20 minutes and the developer reviews the diff and commit at the end rather than each intermediate step.

The two modes do not overlap because the developer is in a different cognitive state for each. Cursor is for active coding, when the developer has the code's structure loaded in their head. Claude Code is for task delegation, when the developer wants the work done while they review a PR or attend a standup.

```python
# A typical day with the two-tool stack

# 9:30 implementation phase (Cursor)
# Inline completion suggests the function signature
def calculate_eligibility(user, plan, region):
    # Cursor inline-completes the body based on adjacent code
    pass

# 10:15 Cursor chat triggers a multi-file refactor
# "Replace all calls to legacy_eligibility_check with calculate_eligibility
#  in the billing module and update the tests."
# Two minutes later the refactor lands across 7 files

# 11:00 switch to Claude Code for an autonomous task
# claude "Add per-region rate limiting to the eligibility endpoint.
#  Use the existing redis client. Add tests. Update the README."
# 18 minutes later: 9 files changed, 4 new tests, all passing

# 14:00 back to Cursor for ambient help while reviewing PRs
# Highlight a function, ask "what does this do?", get a 3-line answer
```

The handoff between the two tools is the developer's decision. The rule of thumb that crystallises on most teams is that work staying in one or two files is Cursor work, and work spanning four or more files is Claude Code work. Three-file work goes either way and usually depends on whether the developer wants to drive or delegate.
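The rule of thumb is simple enough to write down, for example in an onboarding doc or a team cheat sheet. This is a hypothetical sketch: the function name, the `Tool` enum, and the `prefer_to_drive` flag are illustrative, and the thresholds are the ones quoted above.

```python
from enum import Enum


class Tool(Enum):
    CURSOR = "Cursor (drive it yourself in the IDE)"
    CLAUDE_CODE = "Claude Code (delegate it in the terminal)"


def pick_tool(files_touched: int, prefer_to_drive: bool = True) -> Tool:
    """Apply the team rule of thumb: 1-2 files -> Cursor, 4+ -> Claude Code.

    Three-file work is a judgment call, so it follows the developer's
    preference to drive (Cursor) or delegate (Claude Code).
    """
    if files_touched < 1:
        raise ValueError("a task touches at least one file")
    if files_touched <= 2:
        return Tool.CURSOR
    if files_touched >= 4:
        return Tool.CLAUDE_CODE
    return Tool.CURSOR if prefer_to_drive else Tool.CLAUDE_CODE


for n in (1, 3, 9):
    print(n, pick_tool(n, prefer_to_drive=False).name)
```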

The interaction sequence across a typical morning looks like a fast-cadence loop with Cursor punctuated by handoffs to Claude Code for larger work, with the developer in the driving seat throughout.

```mermaid
sequenceDiagram
    participant Dev as Developer
    participant IDE as Cursor (IDE)
    participant CC as Claude Code (Terminal)
    participant Repo as Git Repo
    Dev->>IDE: Open file, type partial function
    IDE->>Dev: Inline completion (under 2s)
    Dev->>IDE: Accept, continue editing
    Dev->>IDE: /chat refactor across 7 files
    IDE->>Repo: Multi-file edit applied
    Dev->>Repo: Review diff, commit
    Dev->>CC: claude "add rate limiting + tests"
    CC->>Repo: Plan-edit-test loop (18 min)
    CC->>Dev: Diff ready for review
    Dev->>Repo: Review, commit, push
    Dev->>IDE: Highlight unfamiliar fn, ask
    IDE->>Dev: 3-line explanation
```

How the five-tool stack collapses into two

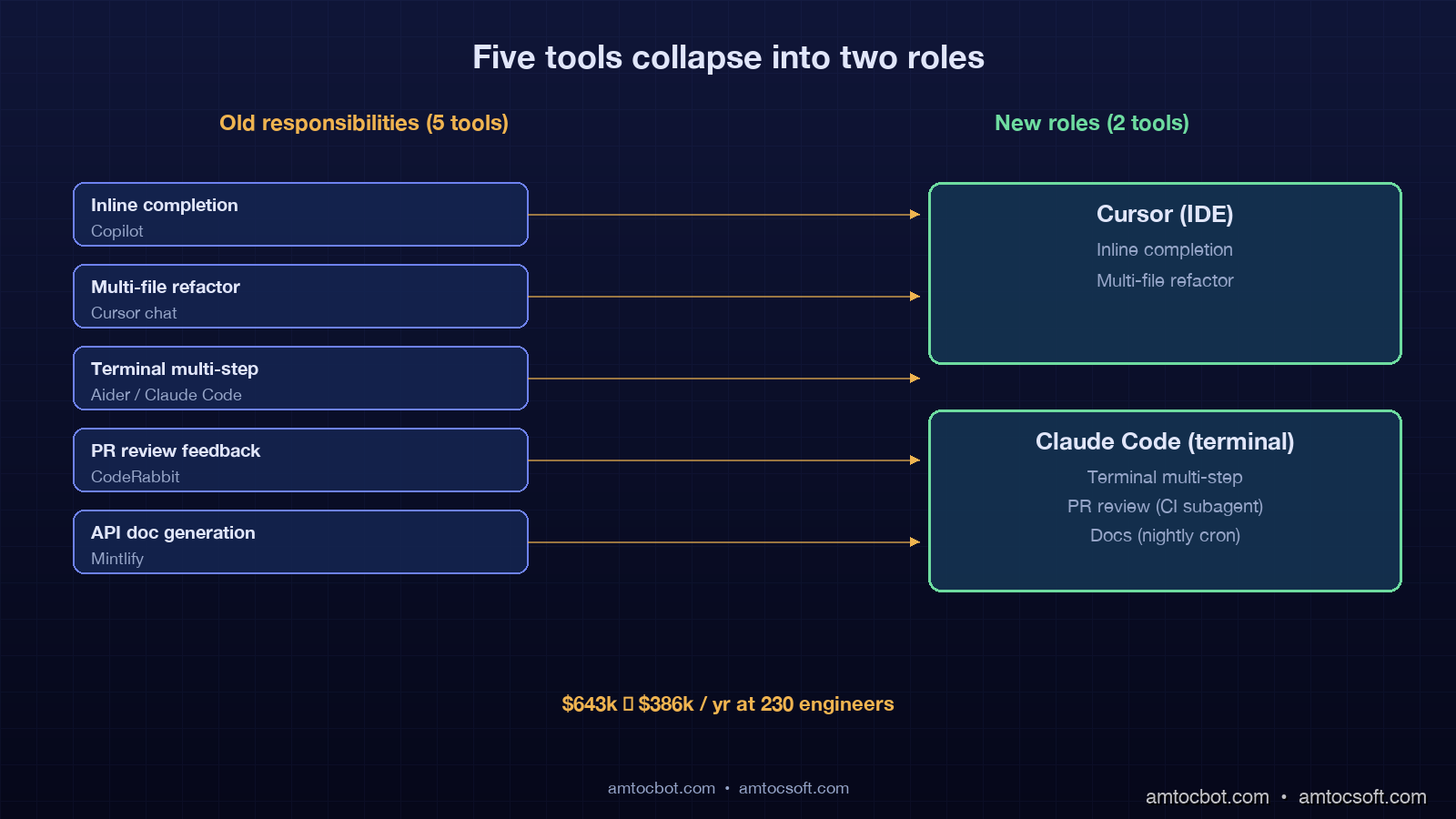

Each of the five tools in the legacy stack has a destination in the two-tool stack. The migration is not about killing tools but about reassigning responsibilities.

Inline completions move from Copilot into Cursor. Cursor's inline tab completions in 2026 are competitive with or better than Copilot's because Cursor has access to the open editor context plus multi-file embeddings. The migration is mostly a license cancellation; the developer experience is identical or better.

Multi-file chat refactors stay in Cursor. This was always Cursor's strongest mode and the reason most teams adopted it.

Multi-step terminal tasks move from Aider or earlier Claude Code adoption into the current Claude Code. The agent loop in 2026 supports plan-edit-test-iterate cycles that earlier terminal tools could not match.

Code review bots are the most controversial cut. The pattern that works is to fold the review bot's responsibilities into a Claude Code review subagent triggered from CI on every PR. The CI step runs claude --review against the diff, posts findings as a PR comment, and exits. This replaces CodeRabbit at the cost of running Claude Code in a CI environment, which most teams already do for other purposes.
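A minimal sketch of such a CI review step, assuming the runner has `git` and an authenticated Claude Code CLI installed and that the base branch has been fetched. It pipes the diff into Claude Code's non-interactive print mode (`claude -p`) and does not depend on a dedicated review flag. The prompt text, the size cap, and the function names are hypothetical; posting the result as a PR comment is left to a later CI step (for example `gh pr comment`).

```python
import subprocess

# Hypothetical review prompt; tune it to the review style the team expects.
REVIEW_PROMPT = (
    "Review the unified diff on stdin. List correctness bugs, missing tests, "
    "and convention violations as a short Markdown bullet list, citing "
    "file and line. Reply 'No findings.' if there is nothing to report."
)
# Assumption: cap very large diffs so the prompt stays a manageable size.
MAX_DIFF_CHARS = 200_000


def build_review_command(prompt: str = REVIEW_PROMPT) -> list[str]:
    """argv for Claude Code's non-interactive print mode."""
    return ["claude", "-p", prompt, "--output-format", "text"]


def truncate_diff(diff: str, limit: int = MAX_DIFF_CHARS) -> str:
    """Keep the first `limit` characters and mark the cut, if any."""
    if len(diff) <= limit:
        return diff
    return diff[:limit] + "\n[diff truncated for review]\n"


def pr_diff(base_ref: str = "origin/main") -> str:
    """Changes on HEAD since it diverged from base_ref (three-dot diff)."""
    return subprocess.run(
        ["git", "diff", f"{base_ref}...HEAD"],
        check=True, capture_output=True, text=True,
    ).stdout


def run_review(diff: str, timeout_s: int = 600) -> str:
    """Pipe the diff to Claude Code and return its findings as text."""
    if not diff.strip():
        return "No findings."  # nothing changed, skip the model call
    result = subprocess.run(
        build_review_command(),
        input=truncate_diff(diff),
        check=True, capture_output=True, text=True, timeout=timeout_s,
    )
    return result.stdout.strip()
```

In a CI job this would be called as `print(run_review(pr_diff()))`, with the printed text handed to whatever step posts PR comments. Keeping command construction in its own function makes the wrapper unit-testable without calling the model.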

Documentation generation moves into Claude Code as a scheduled task. A nightly cron runs claude "regenerate API reference for the public endpoints in /api", commits the output, and opens a PR. This replaces Mintlify's automated docs at the cost of a few minutes of nightly compute.
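The nightly docs job can be sketched the same way. This assumes a cron or scheduled CI trigger, an authenticated `gh` CLI for opening the PR, and that headless Claude Code is allowed to edit files (here via `--permission-mode acceptEdits`; confirm with `claude --help` on your version). The branch naming scheme and the function names are hypothetical.

```python
import datetime
import subprocess

DOCS_PROMPT = "regenerate API reference for the public endpoints in /api"


def docs_branch(today: datetime.date) -> str:
    """Hypothetical naming scheme: one branch per nightly run."""
    return f"docs/nightly-{today.isoformat()}"


def regenerate_command() -> list[str]:
    # Headless run; acceptEdits lets the agent write files without prompting.
    return ["claude", "-p", DOCS_PROMPT, "--permission-mode", "acceptEdits"]


def publish_commands(branch: str) -> list[list[str]]:
    """git/gh steps that commit the regenerated docs and open a PR."""
    title = f"Nightly API reference refresh ({branch})"
    return [
        ["git", "checkout", "-b", branch],
        ["git", "add", "-A"],  # stages everything the agent touched
        ["git", "commit", "-m", title],
        ["git", "push", "-u", "origin", branch],
        ["gh", "pr", "create", "--title", title,
         "--body", "Generated by the nightly Claude Code docs job."],
    ]


def working_tree_dirty() -> bool:
    """True if the regeneration changed any tracked or untracked file."""
    out = subprocess.run(["git", "status", "--porcelain"],
                         check=True, capture_output=True, text=True).stdout
    return bool(out.strip())


def nightly_docs_job(today: datetime.date) -> bool:
    """Run once per night; returns True if a PR was opened."""
    subprocess.run(regenerate_command(), check=True)
    if not working_tree_dirty():
        return False  # docs already up to date, nothing to review
    for cmd in publish_commands(docs_branch(today)):
        subprocess.run(cmd, check=True)
    return True
```

A scheduler would call `nightly_docs_job(datetime.date.today())`. Skipping the PR when `git status --porcelain` is empty keeps the job from opening empty pull requests on quiet nights.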

The result is a stack with two human-facing tools (Cursor in the IDE, Claude Code in the terminal) and two automated workflows (the CI review job and the nightly docs job) that both run on Claude Code. The licensing cost compresses. The cognitive load compresses. The output remains comparable.

```mermaid
flowchart LR
    A[Old Stack: 5 Tools] --> B[Cursor]
    A --> C[Claude Code]
    D[Copilot inline] --> B
    E[Cursor chat] --> B
    F[Claude Code terminal] --> C
    G[CodeRabbit reviews] --> H[Claude Code CI subagent]
    I[Mintlify docs] --> J[Claude Code nightly cron]
    H --> C
    J --> C
```

The migration order that keeps the team productive

Cutting over all five tools at once is a productivity catastrophe. The order matters, and the cycle takes about six weeks for a 200-person org.

Week 1 is the assessment week. Pull seat usage data from each tool. Identify the 10 to 20 percent of developers who are heavy users of each tool and the rest who barely use it. Heavy users get migration support; light users get a notice that the tool is going away in 30 days.
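The week-1 audit boils down to ranking developers by usage for each tool. The sketch below assumes per-developer activity counts (sessions, accepted completions, or whatever each vendor's admin console reports) have already been exported into a plain dictionary; the export format, the 20 percent cut-off, and the function name are assumptions.

```python
import math


def split_heavy_light(usage: dict[str, int], heavy_share: float = 0.2):
    """Return (heavy, light) developer lists for one tool.

    The top `heavy_share` of active users by activity count are "heavy";
    everyone else, including zero-usage seats, is "light".
    """
    ranked = sorted(usage, key=lambda dev: usage[dev], reverse=True)
    active = [dev for dev in ranked if usage[dev] > 0]
    n_heavy = math.ceil(len(active) * heavy_share)
    heavy = active[:n_heavy]
    light = [dev for dev in ranked if dev not in heavy]
    return heavy, light


copilot_usage = {"ana": 412, "bo": 3, "chen": 0, "dee": 97, "eli": 12}
heavy, light = split_heavy_light(copilot_usage)
print("migration support:", heavy)
print("30-day notice:", light)
```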

Weeks 2 and 3 are the inline completion migration. Move everyone from Copilot to Cursor's inline completions. Cancel Copilot at the end of week 3. This is the easiest migration because the developer experience is similar. Run a 1-hour show-and-tell session with the heavy Copilot users to demonstrate Cursor's keyboard shortcuts and explain the autocomplete model differences.

Week 4 is the terminal agent migration. Roll out Claude Code to the platform team and a pilot group. Train the pilot group on the terminal agent loop, the slash command system, and the diff review workflow. Most developers pick this up in a single 90-minute session.

Week 5 is the review bot retirement. Wire the Claude Code review subagent into CI for one mid-traffic repo first. Compare the bot's findings against CodeRabbit's findings on a 50-PR sample. If the gap is acceptable (most teams find it is), expand to all repos. Cancel CodeRabbit.
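One way to make "acceptable gap" concrete is to measure how many of CodeRabbit's findings the Claude Code subagent also raised on the same PRs. This is a hypothetical sketch: it assumes both bots' comments have been exported and normalised to (file, line) locations keyed by PR number, and it treats two findings as the same if they land within a few lines of each other, a deliberately crude matching rule.

```python
from typing import NamedTuple


class Finding(NamedTuple):
    path: str
    line: int


def matches(a: Finding, b: Finding, slack: int = 3) -> bool:
    """Same file and within `slack` lines counts as the same finding."""
    return a.path == b.path and abs(a.line - b.line) <= slack


def coverage_of_baseline(baseline: dict[int, list[Finding]],
                         candidate: dict[int, list[Finding]]) -> float:
    """Fraction of baseline findings (per PR number) the candidate also raised."""
    total = hit = 0
    for pr, base_findings in baseline.items():
        cand = candidate.get(pr, [])
        for f in base_findings:
            total += 1
            if any(matches(f, c) for c in cand):
                hit += 1
    return hit / total if total else 1.0


coderabbit = {101: [Finding("billing/api.py", 40), Finding("billing/db.py", 7)],
              102: [Finding("auth/session.py", 88)]}
claude = {101: [Finding("billing/api.py", 42)],
          102: [Finding("auth/session.py", 90), Finding("auth/tokens.py", 5)]}
print(f"{coverage_of_baseline(coderabbit, claude):.0%} of CodeRabbit findings matched")
# → 67% of CodeRabbit findings matched
```

What counts as acceptable is a team decision. Running the same function with the arguments swapped shows what the subagent caught that CodeRabbit did not.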

Week 6 is the docs migration. Move docs generation to Claude Code nightly cron. Validate the output for one week before cancelling Mintlify. Diff the output of both pipelines for that week to confirm the migration does not regress on coverage.
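The coverage comparison can be as simple as checking which public endpoints each pipeline documented. The sketch assumes each pipeline's output has already been reduced to a set of endpoint identifiers such as `GET /api/users`; extracting those from Mintlify's and Claude Code's output depends on the docs format and is not shown.

```python
def coverage_regressions(old_docs: set[str], new_docs: set[str]) -> dict[str, set[str]]:
    """Endpoints the old pipeline documented that the new one dropped, and vice versa."""
    return {
        "missing_in_new": old_docs - new_docs,
        "new_only": new_docs - old_docs,
    }


mintlify = {"GET /api/users", "POST /api/users", "GET /api/plans"}
claude_nightly = {"GET /api/users", "POST /api/users", "GET /api/regions"}
report = coverage_regressions(mintlify, claude_nightly)
if report["missing_in_new"]:
    print("coverage regression:", sorted(report["missing_in_new"]))
```

Running this once per night of the validation week, and blocking the Mintlify cancellation on any non-empty `missing_in_new`, turns "does not regress on coverage" into a yes/no check.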

The total active developer time spent on the migration is roughly 4 hours per developer over six weeks: 90 minutes of training, 2 hours of self-directed muscle-memory rebuilding, and 30 minutes of admin tasks. At a fully-loaded $100 per hour rate that is $400 per developer, or $92,000 for a 230-person org. Set against the $256,600 annual savings from the consolidation (about $21,400 per month), the migration breaks even in about 4.3 months and is pure savings after that.
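The break-even figure is the one-time migration cost divided by the monthly savings. A sketch using the numbers in this paragraph (4 hours per developer, $100 per hour, 230 developers, $256,600 per year saved):

```python
def migration_cost(hours_per_dev: float, hourly_rate: float, headcount: int) -> float:
    """One-time cost of developer time spent on the migration."""
    return hours_per_dev * hourly_rate * headcount


def payback_months(one_time_cost: float, annual_savings: float) -> float:
    """Months until cumulative savings cover the one-time cost."""
    return one_time_cost / (annual_savings / 12)


# 90 min training + 2 h practice + 30 min admin = 4 h per developer
cost = migration_cost(hours_per_dev=1.5 + 2 + 0.5, hourly_rate=100, headcount=230)
months = payback_months(cost, annual_savings=256_600)
print(f"migration cost ${cost:,.0f}, break-even after {months:.1f} months")
# → migration cost $92,000, break-even after 4.3 months
```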

```mermaid
flowchart TD
    A[Week 1: Assessment + seat audit] --> B[Week 2-3: Migrate Copilot to Cursor inline]
    B --> C[Cancel Copilot]
    C --> D[Week 4: Roll out Claude Code to pilot group]
    D --> E[Week 5: Wire Claude Code review subagent into CI]
    E --> F{Acceptable gap vs CodeRabbit?}
    F -->|Yes| G[Cancel CodeRabbit]
    F -->|No| H[Tune subagent prompts, retest]
    H --> F
    G --> I[Week 6: Move docs to Claude Code nightly cron]
    I --> J[Validate one week, cancel Mintlify]
```

The gotchas that bite at the implementation phase

Three issues surface in nearly every consolidation I have advised on.

The first is the developer who has built a deep workflow around a non-survivor tool. Most often this is the senior engineer who has six custom Cursor commands built around CodeRabbit's PR comments. Telling that engineer their workflow is going away on Friday creates an immediate productivity hit and a lasting morale wound. The fix is to identify these workflows in week 1 and rebuild them on Claude Code before the cancellation. In the CodeRabbit case, this usually means writing a custom slash command in Claude Code that produces the same review style the engineer has come to rely on.

The second is the CI environment. Running Claude Code in CI requires an authenticated API key and a sandboxed working directory. Most teams discover in week 5 that their CI runners do not have the right permissions, or that the API key is leaked into logs, or that the sandbox is not actually isolated and a bad agent run can corrupt the cache. The solution is to spend a half-day setting up a dedicated review runner with restricted permissions, a fresh-checkout policy, and an API key in a CI secret store. This is a one-time cost.
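For the log-leak failure mode, one cheap safeguard in a Python CI wrapper is a logging filter that scrubs the key before anything reaches the runner's output. This sketch assumes the key arrives through the `ANTHROPIC_API_KEY` environment variable from the CI secret store; many CI systems also mask registered secrets themselves, so treat this as a second layer rather than the fix. The class and function names are hypothetical.

```python
import logging
import os


class RedactSecrets(logging.Filter):
    """Replace any configured secret value in log records with a placeholder."""

    def __init__(self, secrets: list[str]):
        super().__init__()
        # Ignore empty values so an unset variable does not redact everything.
        self.secrets = [s for s in secrets if s]

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()
        for secret in self.secrets:
            message = message.replace(secret, "[REDACTED]")
        # Freeze the scrubbed text so formatters cannot re-insert the args.
        # Note: exception tracebacks (exc_info) are formatted later and are
        # not scrubbed by this filter.
        record.msg, record.args = message, None
        return True  # keep the record, just scrubbed


def make_ci_logger(name: str = "claude-review") -> logging.Logger:
    """Logger whose console output has the Anthropic API key scrubbed."""
    logger = logging.getLogger(name)
    if not logger.handlers:  # avoid stacking handlers on repeated calls
        handler = logging.StreamHandler()
        handler.addFilter(RedactSecrets([os.environ.get("ANTHROPIC_API_KEY", "")]))
        logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```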

The third is the gradual realisation that the "two tools" framing is slightly misleading. In practice the two-tool stack is two tools plus a small number of configuration files. The Cursor configuration includes the team's .cursorrules file. The Claude Code configuration includes CLAUDE.md files at the repo root and per-package level, slash commands in .claude/commands/, and hooks in .claude/settings.json. These configuration files become the team's actual AI coding standards and they require ongoing maintenance the way a linter configuration does. Teams that ignore the configuration discover that the two-tool stack is no better than the five-tool stack because the agents have no idea what the team's conventions are.
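Treating those files like linter configuration suggests checking them in CI as well. A minimal sketch, assuming the file layout named in this paragraph; which files are required, and the decision to check only that `.claude/settings.json` parses as JSON and that slash commands are non-empty, are assumptions a team would adjust.

```python
import json
from pathlib import Path

# Files this team treats as required AI-tooling configuration (assumption).
REQUIRED_FILES = [".cursorrules", "CLAUDE.md"]


def check_ai_config(repo_root: str) -> list[str]:
    """Return human-readable problems; an empty list means the config passes."""
    root = Path(repo_root)
    problems = []
    for name in REQUIRED_FILES:
        path = root / name
        if not path.is_file():
            problems.append(f"missing {name}")
        elif not path.read_text(encoding="utf-8").strip():
            problems.append(f"{name} is empty")
    settings = root / ".claude" / "settings.json"
    if settings.is_file():
        try:
            json.loads(settings.read_text(encoding="utf-8"))
        except json.JSONDecodeError as exc:
            problems.append(f".claude/settings.json is not valid JSON: {exc}")
    commands = root / ".claude" / "commands"
    if commands.is_dir():
        for cmd in sorted(commands.glob("*.md")):
            if not cmd.read_text(encoding="utf-8").strip():
                problems.append(f"slash command {cmd.name} is empty")
    return problems
```

A CI step could fail the build whenever `check_ai_config(".")` returns anything, which gives the configuration owner a natural review point when someone deletes or breaks one of these files.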

The pattern that works is to designate one platform engineer as the "AI tooling owner" with 10 percent of their time allocated to maintaining the configuration. This person reviews proposed .cursorrules and CLAUDE.md changes the way a CI maintainer reviews CI changes. They are also the person who runs the migration in the first place.

What the data says about the survivor tools

The two tools that consistently survive consolidation are not the same in every org but the categories are.

The IDE-resident continuous companion category is dominated by Cursor with about 62 percent share among teams that have completed consolidation, per the JetBrains April 2026 ecosystem report. Windsurf is the second-place option at 18 percent. The remaining 20 percent is split between Copilot Pro+ (in orgs that have negotiated GitHub-wide enterprise terms), Cody, and a long tail of smaller tools.

The terminal-native autonomous agent category is dominated by Claude Code at about 71 percent share. Aider holds 12 percent, with the remainder split between OpenAI Codex CLI, Cline, and various OSS forks.

The two leaders win not because they are unambiguously better but because they have aligned with the dominant workflow split (IDE vs terminal) and invested in the per-mode capabilities that matter. Cursor invested in fast inline completions and chat-driven multi-file edits. Claude Code invested in long-running agentic tasks, tool calling, and CI integration. The other tools tried to be both at once and lost ground.

Production considerations and edge cases

A few situations break the two-tool default.

A team with strict on-prem requirements may need to substitute a self-hosted setup, such as the Continue extension with a local Ollama backend, for the IDE companion role. The two-tool architecture still applies; only the vendor changes. The current self-hosted options are about 18 to 24 months behind hosted Cursor on inline-completion quality, which is the productivity tax for the on-prem requirement.

A team with extreme cost sensitivity (an early-stage startup with under 10 engineers) may collapse to one tool, usually Claude Code, and skip the IDE companion entirely. The single-tool data shows a productivity hit of about 13 minutes per task versus the two-tool optimum (51 minutes against 38), which is acceptable at very small scale.

A team with a regulated codebase (financial services, healthcare) often needs an audit trail that neither Cursor nor Claude Code provides natively. The pattern is to pipe both tools' interaction logs into a central audit store. Most teams build this themselves in week 6 of the migration.
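A minimal sketch of such a central audit store as an append-only JSON Lines file. It assumes each tool's interaction events can already be captured somehow (for Claude Code, hooks are one candidate); the field names and function names are hypothetical. Chaining each entry to the previous entry's SHA-256 hash is a tamper-evidence technique added for illustration, not something either tool provides, and it only detects edits if the latest hash is also recorded somewhere the editor cannot reach.

```python
import hashlib
import json
import time
from pathlib import Path

GENESIS = "0" * 64  # chain anchor for the first entry


def _last_hash(log: Path) -> str:
    # Reads the whole file: fine for a sketch, slow for very large logs.
    if not log.exists():
        return GENESIS
    lines = log.read_text(encoding="utf-8").splitlines()
    return json.loads(lines[-1])["hash"] if lines else GENESIS


def append_event(log: Path, tool: str, user: str, action: str, detail: str) -> dict:
    """Append one event, chained to the previous entry's hash."""
    entry = {"ts": time.time(), "tool": tool, "user": user,
             "action": action, "detail": detail, "prev": _last_hash(log)}
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    with log.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry, sort_keys=True) + "\n")
    return entry


def verify_chain(log: Path) -> bool:
    """Recompute every hash; an entry edited without rewriting later hashes fails."""
    prev = GENESIS
    for line in log.read_text(encoding="utf-8").splitlines():
        entry = json.loads(line)
        claimed = entry.pop("hash")
        if entry["prev"] != prev:
            return False
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        if hashlib.sha256(payload).hexdigest() != claimed:
            return False
        prev = claimed
    return True
```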

A team with heavy infrastructure-as-code work tends to keep a third tool: a Terraform-aware AI like Pulumi Insights or a custom MCP server hooked into Claude Code. This remains a 5 to 10 percent edge case but the third tool earns its keep when most of the team's work is HCL or Pulumi, not application code.

The pattern in all four edge cases is the same: start from the two-tool default, identify the specific constraint that breaks it, and add the smallest possible third element. Do not add the third element preemptively.

Conclusion

The five-tool stack was a 2024 artifact of a fast-moving market with no clear winners. The two-tool stack is the 2026 outcome of a measured productivity curve and a market that has converged on two roles: an IDE-resident continuous companion and a terminal-native autonomous agent. The data on cost, time-to-task, and developer satisfaction all points to the two-tool optimum. The migration is straightforward, takes about six weeks, and pays back in roughly four months for a typical 200-person org.

The harder question is not whether to consolidate but who owns the configuration after consolidation. The two-tool stack is only as good as the .cursorrules and CLAUDE.md files behind it. Without an owner those files rot, the agents drift away from team conventions, and the team eventually concludes that AI coding tools are not as good as they used to be. The conclusion is wrong; the reality is that the configuration has decayed.

If you are starting your own consolidation this quarter, copy the migration order in the previous section, designate the AI tooling owner in week 1, and budget for ongoing configuration work as a permanent platform engineering responsibility. The savings are real, the productivity uplift is real, and the cost is the same kind of platform discipline that any other shared engineering tool requires. The companion repo at github.com/amtocbot-droid/amtocbot-examples/tree/main/blog-159-ai-coding-consolidation includes the Cursor and Claude Code configurations from the consolidation we ran ourselves, plus the CI subagent harness that replaces a code review bot.

Sources

- JetBrains, "Developer Ecosystem Report: Spring 2026," April 2026. https://www.jetbrains.com/lp/devecosystem-2026

- ByteByteGo Newsletter, "AI Coding Tool Sprawl: A Survey of 1,200 Engineering Teams," April 2026. https://blog.bytebytego.com/p/ai-coding-tool-sprawl-2026

- Stack Overflow, "2026 Developer Survey: AI Coding Section," January 2026. https://survey.stackoverflow.co/2026

- GitHub, "GitHub Copilot Business Pricing," 2026. https://github.com/features/copilot/plans

- Cursor, "Cursor Business Plan," 2026. https://cursor.sh/pricing

- Anthropic, "Claude Code Documentation and Pricing," 2026. https://docs.claude.com/en/docs/claude-code

- The New Stack, "From Five to Two: How Engineering Teams Are Cutting AI Tool Sprawl," March 2026. https://thenewstack.io/from-five-to-two-ai-coding-consolidation-2026

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-28 · Written with AI assistance, reviewed by Toc Am.
