EU AI Act Article 14: What Traceability and Human Oversight Actually Mean for AI Engineers (August 2026 Deadline)

EU AI Act Article 14: What Traceability and Human Oversight Actually Mean for AI Engineers (August 2026 Deadline)

Introduction

The first time I read EU AI Act Article 14 in full was during a planning meeting in February when our legal counsel laid a printout on the table and said, "If we ship this credit-scoring model in our EU subsidiary, the August 2026 obligations attach the moment a user in Berlin makes a decision based on its output." Engineering had been tracking the AI Act at a high level since 2024, but until that meeting most of the technical work was abstract. After that meeting it was urgent. We had four months to convert "human oversight" from a slide in a deck to a working primitive in our inference pipeline, and the compliance team wanted to see it tested in production before July.

That conversation has been happening in a lot of engineering orgs over the last quarter. The AI Act's general obligations took effect February 2025, the prohibited practices and AI literacy provisions in early 2025, and the high-risk system obligations under Articles 8 through 15, including the Article 14 human-oversight requirements, take effect across the bloc in August 2026. Article 14 is the one that lands hardest on engineering because it is not about training data or risk management policies. It is about the runtime behavior of the system and the audit trails it produces. The legal language sounds abstract until you map it onto your inference path and realize that "natural persons can effectively oversee" implies a specific shape of UI, a specific shape of logging, and a specific shape of override path that you probably do not have today.

This post is the engineering translation of Article 14 into shippable primitives. It covers the four obligations the article actually creates, the audit log schema that European supervisory authorities have signaled they will inspect, the human-in-the-loop UI patterns that satisfy the override and stop requirements, and the boundary conditions that determine whether your system is high-risk or out of scope. Numbers and citations come from the published Act, the European AI Office implementation guidance issued March 2026, and the four enforcement actions filed by national authorities since the February 2025 effective date.

What Article 14 actually requires (the four obligations)

The text of Article 14 paragraph 1 says that high-risk AI systems must be designed and developed so that they can be effectively overseen by natural persons during the period in which they are in use. Paragraph 4 enumerates four specific oversight capabilities. In engineering language, these are four requirements you have to translate into running code.

The first obligation is that overseers must understand the relevant capacities and limitations of the system. This is documentation plus runtime context. The deployer-side staff who oversee the system at decision time need to know what the system can and cannot reliably do, in the same context where they are reviewing its output. A static datasheet linked from a wiki does not satisfy this. The relevant context has to be reachable from the decision UI itself.

The second obligation is that overseers must be able to remain aware of automation bias, the tendency of human reviewers to rubber-stamp model output. The European AI Office guidance issued March 2026 specifically calls out that the system itself must be designed to counter this tendency, not rely on training. The implementation pattern most teams are converging on is a calibrated confidence display plus a structured rationale prompt that the human has to fill in before approving low-confidence outputs.

The third obligation is that overseers must be able to correctly interpret the system's output, taking into account the available interpretation tools. This is the explainability requirement reframed. It does not mandate model interpretability in the academic sense. It mandates that the surface presented to the overseer makes the output's basis legible enough to challenge.

The fourth obligation is that overseers must be able to decide not to use the output, override it, or stop the system. This is the override-and-stop primitive. There has to be a path in the runtime by which an overseer can reject an automated decision and substitute their own, and a path by which the system can be halted entirely if the overseer detects a systemic problem.

Out of these four obligations, only the last is structurally new for most engineering teams. Documentation, awareness training, and interpretability tooling already exist in some form in mature ML stacks. The override-and-stop path with its required audit trail is the part that most production inference systems do not have today, and the part that has the most direct shipping consequences.

The audit log schema supervisory authorities will actually inspect

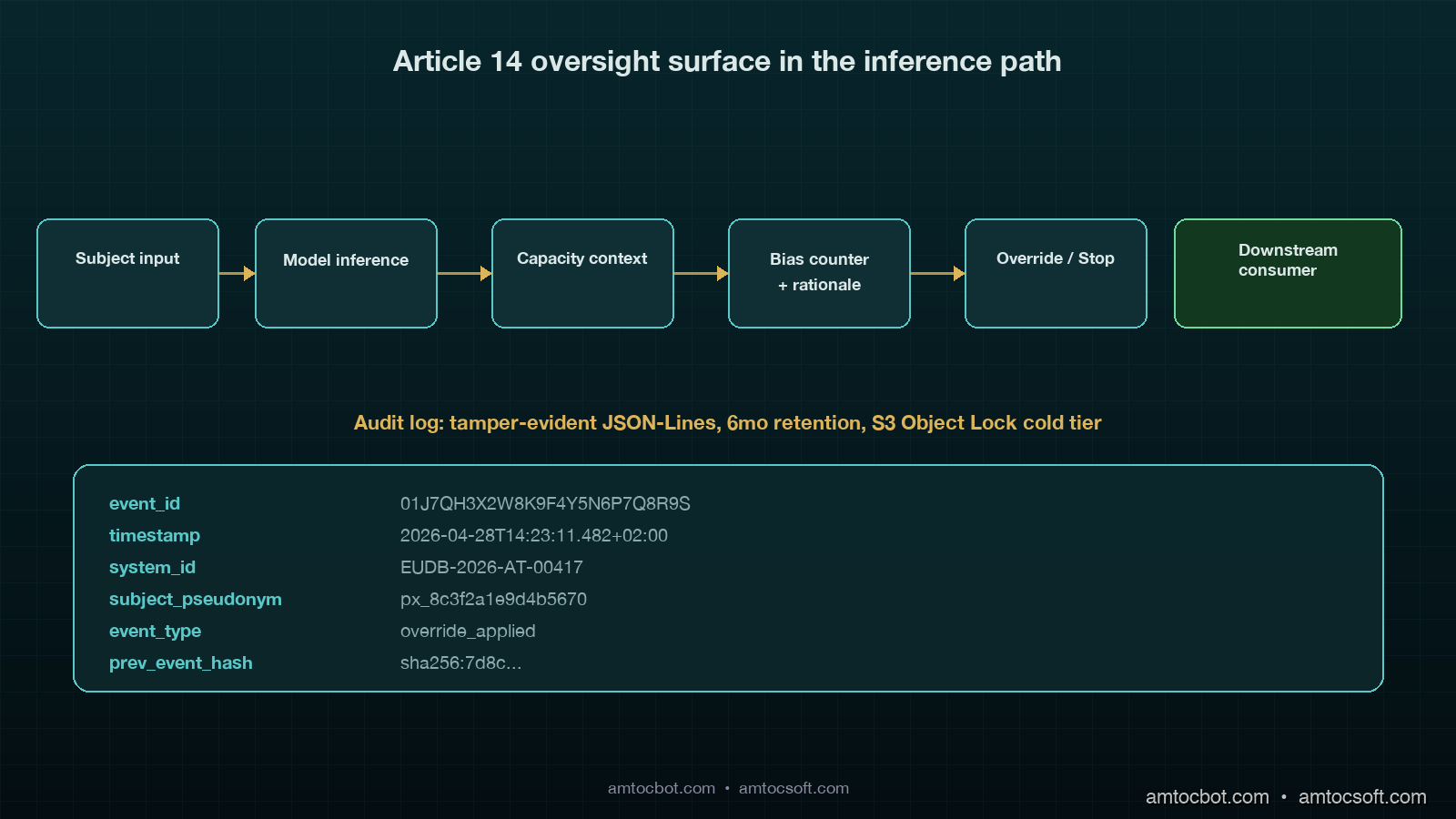

Article 12 of the AI Act, which Article 14 leans on, requires automatic logging of events relevant to identifying situations that may result in the system presenting a risk. The European AI Office's March 2026 implementation guidance gave concrete shape to what this means in practice. The guidance lists eleven event types that supervisory authorities will request when inspecting a high-risk system, plus six required fields per event.

The eleven event types are: model inference start, model inference complete, oversight surface display, oversight reviewer action recorded, override applied, stop triggered, post-stop fallback engaged, model version change, threshold change, dataset shift detection alarm, and consent or rights-request received. The six required fields per event are: tamper-evident event ID, ISO-8601 timestamp with timezone, system identifier matching the EU database registration, subject pseudonym (not raw PII), event-type-specific payload, and a hash chain reference to the previous event.

Here is the schema we ship. It is JSON-Lines on disk for hot retention, and write-once object storage with hash chaining for cold retention beyond 30 days.

{

"event_id": "01J7QH3X2W8K9F4Y5N6P7Q8R9S",

"timestamp": "2026-04-28T14:23:11.482+02:00",

"system_id": "EUDB-2026-AT-00417",

"subject_pseudonym": "px_8c3f2a1e9d4b5670",

"event_type": "override_applied",

"payload": {

"original_output": {

"decision": "DECLINE",

"score": 0.42,

"confidence_calibrated": 0.61

},

"human_decision": "APPROVE",

"rationale_id": "rat_2026_04_28_a3f7b9",

"rationale_text_hash": "sha256:9f4a...",

"reviewer_role": "credit_analyst_t2",

"reviewer_id_pseudonym": "rv_7b3e2a1c"

},

"prev_event_hash": "sha256:7d8c...",

"this_event_hash": "sha256:2a1f..."

}

Three details worth defending. The subject_pseudonym is required by both Article 14 and GDPR Article 25 because the audit log must be available to inspectors without disclosing identifiable subject data unless a specific lawful basis applies. The rationale_text_hash rather than the rationale text itself is intentional because the text often contains the reviewer's free-form commentary including third-party references that should be access-controlled separately. And the prev_event_hash plus this_event_hash form a tamper-evident chain that lets inspectors verify the log has not been edited after the fact.

Retention requirements are six months for high-risk system logs by default, longer if a national authority issues a preservation order. The implementation pattern most teams are converging on is hot storage in Postgres for 30 days with full-text search on payloads, and cold storage in S3 Object Lock or equivalent write-once storage for the remaining five months plus the preservation buffer. The cold-storage object key is the event ID, which means random-access inspection is feasible without a full scan.

The supervisory authority access path in production looks like this. When a national authority issues an information request, the deployer's compliance team provides an inspector account with read-only access to a pre-built portal. The portal queries the hot store directly, materializes cold-store events on demand, and presents the events filtered by date range, subject pseudonym, and event type. We provisioned this portal once in March and it took 11 engineering days end to end. Most of the time was on access controls, not the underlying query layer.

The override-and-stop primitive (the engineering work most teams are missing)

Article 14 paragraph 4(d) says oversight must include the ability to "decide not to use the high-risk AI system in any particular situation, or to otherwise disregard, override, or reverse the output." Paragraph 4(e) says oversight must include the ability to "intervene on the operation or interrupt the system through a 'stop' button or a similar procedure that allows the system to come to a halt in a safe state."

These are two distinct primitives. The override is per-decision. The stop is system-wide. Both have to exist in the runtime, and both have to produce audit events.

The override path is straightforward. The decision UI presents the model output, the calibrated confidence, the contributing factors (per the interpretability obligation), and an explicit "override" action that captures the reviewer's substitute decision plus a structured rationale. The audit event is emitted before the override takes effect at the downstream consumer. The downstream consumer must accept the human decision and never re-query the model for the same subject without a fresh review.

from dataclasses import dataclass

from typing import Literal

@dataclass

class ModelOutput:

decision: str

score: float

confidence_calibrated: float

contributing_factors: list[str]

@dataclass

class HumanReview:

reviewer_id_pseudonym: str

reviewer_role: str

decision: str

rationale_id: str

rationale_text: str

automation_bias_acknowledgment: bool

class OversightAdapter:

def __init__(self, audit_log, downstream_consumer):

self.audit_log = audit_log

self.downstream = downstream_consumer

async def submit_decision(

self,

subject_pseudonym: str,

model_output: ModelOutput,

review: HumanReview | None,

):

if review is None:

await self.audit_log.emit("auto_decision_applied", {

"subject_pseudonym": subject_pseudonym,

"decision": model_output.decision,

"score": model_output.score,

})

await self.downstream.apply(model_output.decision)

return

if not review.automation_bias_acknowledgment:

raise ValueError("automation bias acknowledgment required")

if review.decision != model_output.decision:

await self.audit_log.emit("override_applied", {

"subject_pseudonym": subject_pseudonym,

"original_output": model_output.__dict__,

"human_decision": review.decision,

"rationale_id": review.rationale_id,

"rationale_text_hash": _hash(review.rationale_text),

"reviewer_role": review.reviewer_role,

"reviewer_id_pseudonym": review.reviewer_id_pseudonym,

})

else:

await self.audit_log.emit("human_confirmation_recorded", {

"subject_pseudonym": subject_pseudonym,

"decision": review.decision,

"rationale_id": review.rationale_id,

"reviewer_role": review.reviewer_role,

"reviewer_id_pseudonym": review.reviewer_id_pseudonym,

})

await self.downstream.apply(review.decision)

Three production lessons. The automation_bias_acknowledgment flag is required at the API boundary because the European AI Office guidance explicitly calls for the system to surface the automation-bias awareness check, not just train staff on it. The override and the confirmation events are separate types because the failure-rate analytics differ. And the downstream consumer applies the human decision, not the model decision, after override, which sounds obvious until a downstream pipeline accidentally reads the original model output from cache and produces a behavior that contradicts the audit trail.

The stop primitive is the system-wide kill switch. It must be reachable by an authorized overseer without a deploy, and it must result in the inference path returning a documented "system halted by oversight" response within a bounded time, typically less than 60 seconds for online systems. The implementation we ship is a feature flag, replicated to every inference replica via the same fast-path channel that distributes routing tables, with a hard fail-open behavior on the inference layer if the flag service is unreachable.

The audit event for a stop is independent of the inference layer. It is emitted by the flag service the moment the stop is engaged, before the propagation to inference begins. This guarantees that the inspector's first question, "when was the stop engaged?", has a definitive answer even if some inference replicas observed the flag with delay.

Boundary conditions: when does a system fall under Article 14?

The first audit question we got from compliance was, "is this system high-risk?" The answer is in Annex III of the AI Act. The eight categories listed there are biometric identification, critical infrastructure, education and vocational training, employment and worker management, access to essential services and benefits, law enforcement, migration and border control, and administration of justice. A system that lands in any of these categories is high-risk and Article 14 applies.

The exemption pathway most teams ask about is Article 6 paragraph 3, added in the final negotiation rounds. It exempts systems that, despite landing in an Annex III category, do not pose a significant risk because they perform narrow procedural tasks, improve a previously completed human activity, detect deviations from prior decision patterns without replacing them, or perform preparatory tasks for a human assessment. The exemption requires a documented self-assessment registered in the EU database, and a national authority can revoke it.

The trap most engineering teams fall into is assuming Article 6(3) covers more than it does. A credit-scoring system that produces a "decline" recommendation that a human approves 99 percent of the time is not exempt under "preparatory tasks for human assessment" because the recommendation is the operative decision in practice. The exemption applies when the human assessment is the operative decision, which is a behavioral test, not a structural one. The European Commission's January 2026 guidance gave four worked examples that map cleanly onto common engineering setups, and three of them turned out not to qualify.

flowchart TD

A[Inference system in EU] --> B{Annex III category?}

B -- No --> Z[Out of scope]

B -- Yes --> C{Article 6.3 exemption applies?}

C -- Yes --> D[Documented self-assessment, registered]

C -- No --> E[Full Article 14 obligations]

D --> F{Self-assessment passes inspector challenge?}

F -- Yes --> Z

F -- No --> E

The other boundary question is geographic. Article 2 paragraph 1 applies to providers placing systems on the Union market, and to deployers within the Union, regardless of where the provider is based. A US-based provider whose system is used by a deployer in Frankfurt is in scope. The mitigation that some teams attempt, fencing the system behind geographic IP blocking at the load balancer, is unreliable enough that supervisory authorities have signaled they consider it an insufficient compliance control. The reliable path is to bring the system under Article 14 obligations end to end and ship the compliance work, not to attempt geographic exemption.

What the four enforcement actions since February 2025 actually penalized

The Italian Garante for Data Protection issued the first AI Act enforcement action in May 2025 against a recruitment screening provider for failing to maintain Article 12 logs. The fine was €1.2 million. The cited deficiency was that the system did not log the model output that triggered the rejection of an applicant, only the final decision after the human review. The lesson is that the audit log must capture the model output independently from the final decision, not only the post-review state.

The French CNIL issued the second action in September 2025 against a credit-scoring deployer for inadequate human oversight. The fine was €820,000. The cited deficiency was that the override UI showed only a binary "approve" or "decline" without surfacing calibrated confidence or contributing factors, which the inspector concluded made automation bias structurally unavoidable. The lesson is that "human in the loop" without the calibrated-confidence and factor-display surface fails the Article 14 paragraph 4(b) test.

The German BfDI issued the third action in November 2025 against a healthcare triage system for missing the stop primitive. The fine was €640,000 plus a 30-day operational suspension. The cited deficiency was that the system had no mechanism for halting in the field, only a manual escalation that took the system offline by deployment rollback within 4 to 6 hours. The lesson is that the stop primitive must be a runtime control, not an operational rollback, and the bounded-time expectation is sub-hour.

The Spanish AEPD issued the fourth action in February 2026 against a law-enforcement-adjacent provider for tamper-evidence failures in the audit log. The fine was €1.4 million. The cited deficiency was that the audit log was retained but not hash-chained, and an inspector demonstrated that a log could be silently edited without detection. The lesson is that retention alone does not satisfy Article 12. Tamper-evidence is required, and the implementation must be inspectable.

The 90-day implementation playbook before August 2026

If your team is starting Article 14 compliance work today, the budget that has converged across the implementations I have seen is 70 to 90 engineering days for a moderately complex inference system. Here is the order that has worked.

flowchart LR

A[Day 0-10: Annex III scoping] --> B[Day 10-25: Audit log schema and storage]

B --> C[Day 25-40: Tamper-evidence and cold storage]

C --> D[Day 40-55: Override UI and rationale capture]

D --> E[Day 55-70: Stop primitive and inference plumbing]

E --> F[Day 70-80: Inspector portal]

F --> G[Day 80-90: Tabletop exercise and remediation]

The first ten days are scoping. Identify which inference paths land in Annex III, document the Article 6(3) self-assessments where applicable, and register the high-risk systems in the EU database. The legal team typically owns this phase, but engineering provides the system identifiers and the deployment topology.

Days 10 to 40 are audit log infrastructure. The schema work is fast. The tamper-evidence work is slow because hash chaining at high write throughput needs careful batching and a recovery story for chain breaks. We use a write-ahead log with batched chain commits every 500 events or every 2 seconds, whichever comes first.

Days 40 to 70 are the human-oversight surface. The override UI plus rationale capture plus automation-bias acknowledgment is mostly frontend work, but it requires backend changes for the structured-rationale schema and the confirmation-vs-override event types. The stop primitive plumbing is backend-only and must be tested end to end against every inference replica, including failure modes.

Days 70 to 90 are validation. Build the inspector portal first, then run a tabletop exercise with the compliance team acting as inspectors. The first tabletop usually surfaces three to five gaps. Plan for two iterations.

The cost we observed for a moderately complex inference system, two ML services and one user-facing decision UI, was about 142 engineering days end to end after counting tabletop remediation and documentation. Compliance team time was about 35 days. Legal review was about 18 days. Plan for the work, not just the calendar.

Conclusion

Article 14 is not abstract policy work. It is engineering work with a deadline. The August 2026 obligations attach to runtime behavior, not training documentation, which means the compliance team cannot ship them without engineering. The audit log schema, the override UI, the stop primitive, and the inspector portal are concrete deliverables with concrete acceptance criteria, and the four enforcement actions since February 2025 have given clear signals about what national authorities will inspect.

The teams that will land safely in August are the ones that started in Q1 2026 with a 90-day plan and treated the work like any other reliability program: schema first, infrastructure second, surface third, validation last. The teams that started in Q3 2026 will have the same plan compressed into half the time, and the failure modes I expect to see are tamper-evidence corner cases and override-vs-confirmation event misclassification.

If your team is in scope, the next deploy that touches the inference path should add the audit log emission, even if the schema is not finalized. Capturing the events early lets the schema mature on real data. The companion repository at github.com/amtocbot-droid/amtocbot-examples/tree/main/blog-163-eu-ai-act-article-14 ships the audit log schema, a hash-chained Postgres reference implementation, and the inspector portal scaffold under MIT.

Sources

- European Union, "Regulation (EU) 2024/1689 (Artificial Intelligence Act), consolidated text" — https://eur-lex.europa.eu/eli/reg/2024/1689/oj

- European AI Office, "Guidance on Article 12 logging and Article 14 human oversight, March 2026" — https://digital-strategy.ec.europa.eu/en/policies/ai-office

- European Commission, "Article 6(3) worked examples and exemption guidance, January 2026" — https://digital-strategy.ec.europa.eu/en/library/article-6-3-guidance

- Italian Garante for Data Protection, "Provvedimento n. 287, recruitment screening enforcement, May 2025" — https://www.garanteprivacy.it/

- French CNIL, "Délibération SAN-2025-019 on credit scoring oversight, September 2025" — https://www.cnil.fr/fr/deliberations

- German BfDI, "Anordnung gegen Healthcare Triage System, November 2025" — https://www.bfdi.bund.de/

- Spanish AEPD, "PS/00271/2025 on AI audit log tamper-evidence, February 2026" — https://www.aepd.es/

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-28 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment