OpenTelemetry GenAI Conventions: A Practical Guide to LLM Span Attributes for Production Observability

OpenTelemetry GenAI Conventions: A Practical Guide to LLM Span Attributes for Production Observability

Introduction

The first time I watched a production AI agent silently double-bill a customer, the trace existed. We had wired every LLM call in our agent loop through a homegrown wrapper that logged a JSON line per call: model, prompt token count, completion token count, latency, cost. The wrapper was clean. The dashboards were clean. The Saturday-morning page that woke me up was a customer email forwarded by support: "I see two charges of $1,840.00 on my invoice." Our wrapper had logged both calls. The wrapper had not connected them to the same conversation. The agent had hit a planning step, stalled, restarted from a saved state, and re-entered the tool that issued a Stripe charge. Two hours of our wrapper's logs sat in Datadog with no parent-child relationship between the rogue retry and the original conversation. We could see the calls. We could not see the run.

The fix was not more logging. The fix was switching every LLM and tool call to emit a proper OpenTelemetry span with the GenAI semantic conventions, which became stable in OpenTelemetry 1.27 in late 2025 and have been the production standard through 2026. Once we did that, the rogue retry was three clicks away in any OTel-compatible backend. The parent-child relationship was implicit. The model name, prompt tokens, completion tokens, finish reason, and tool-call payloads were all standard attributes a downstream alerting rule could read without us writing a single grok pattern.

This post is the practical guide I wish I had on that Saturday morning. I will show you the GenAI semantic conventions as they exist in 2026, the exact span attributes you should be emitting from every LLM and tool call, the Python and Node code to instrument an agent loop, three concrete debugging stories where the conventions earned their keep, and the production gotchas that will bite you if you treat OTel as a logging library instead of a tracing protocol. Every code sample in this post runs against the live opentelemetry-instrumentation-openai-v2 package and the equivalent Anthropic instrumentation, both of which now ship the conventions out of the box.

The Problem: Why Homegrown LLM Logging Always Breaks

Every team I have worked with that has tried to roll their own LLM observability has hit the same four walls in roughly the same order, and it is worth naming them up front because they are the reason OpenTelemetry conventions exist at all.

The first wall is parent-child relationships. An agent loop is a tree. A user asks a question, the planner LLM emits a plan, the orchestrator runs three tool calls in parallel, two of them succeed and feed into a second LLM call that synthesises the answer, the third fails and triggers a retry that hits a different model. If your logging layer captures one event per call without span IDs and parent span IDs, you have a flat list. Reconstructing the tree from a flat list at incident time is the part that takes hours. With OTel spans, the tree is the data structure.

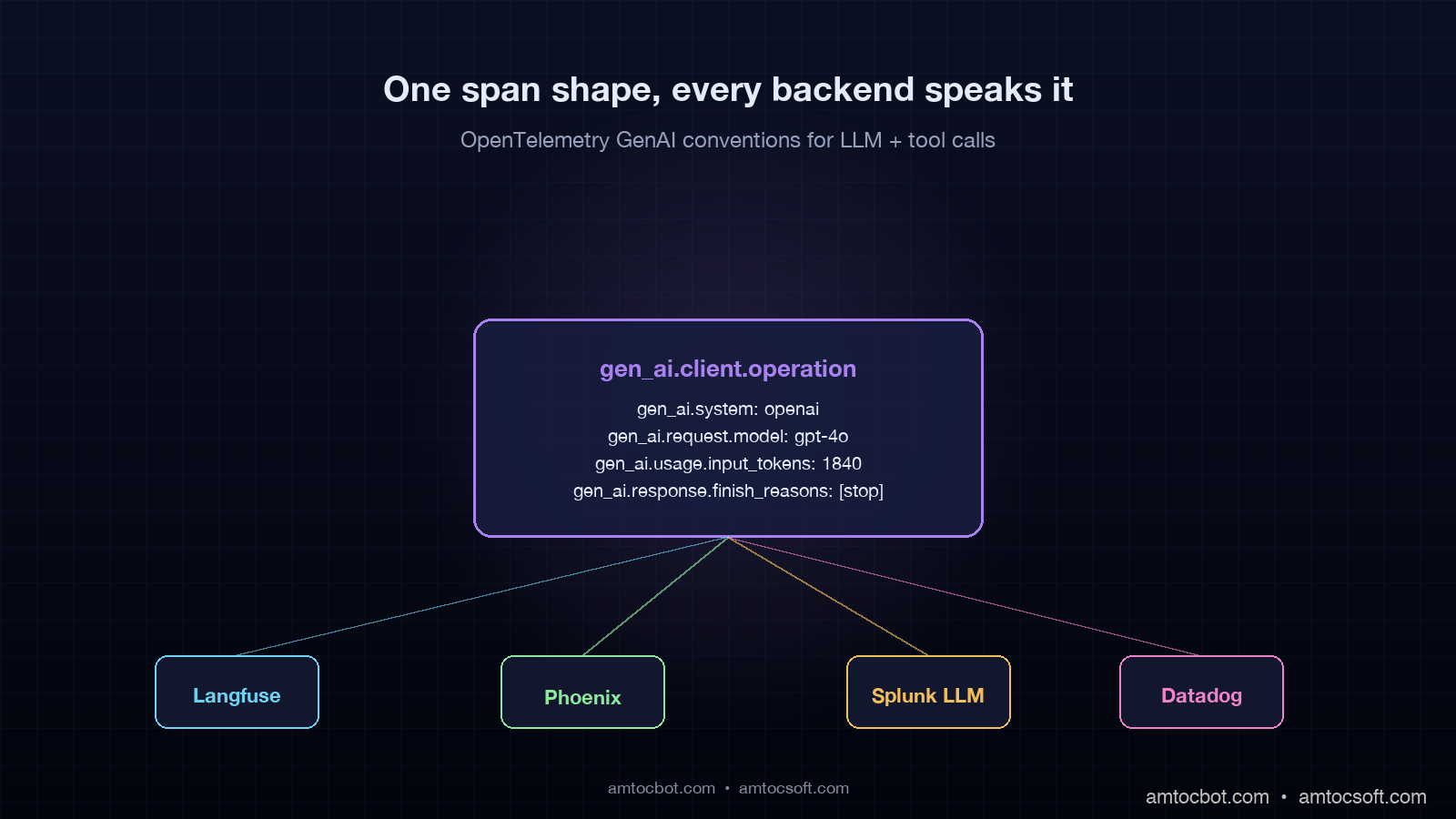

The second wall is vendor lock-in. Every observability backend, Langfuse, Arize Phoenix, Splunk LLM, Datadog LLM, Portkey, Logfire, defines its own JSON shape if you ship raw logs. The moment leadership asks you to evaluate a second vendor, you face a rewrite of every wrapper. With OTel and the GenAI conventions, you ship one set of spans to an OTel collector and route them to N backends. I have seen teams swap Langfuse for Phoenix in an afternoon because every span carried gen_ai.system, gen_ai.request.model, and gen_ai.usage.input_tokens in a backend-agnostic shape.

The third wall is cost attribution. Provider invoices arrive monthly, aggregated by API key. Your traces, if they exist, are per-call. Reconciling a $48,000 monthly OpenAI bill against per-conversation traces requires every span to carry the same set of attributes the provider's billing engine considers. The 2026 GenAI conventions do this exactly: gen_ai.request.model, gen_ai.usage.input_tokens, gen_ai.usage.output_tokens, gen_ai.usage.cache_read_input_tokens. Add a single business attribute like gen_ai.conversation.id and you can answer "which conversation cost us $1,840 last Saturday" with one query.

The fourth wall is regulatory. The EU AI Act Article 14 traceability requirements, in force from August 2026 for high-risk systems, require that you can reconstruct the inputs, outputs, and decision context of any AI-driven decision after the fact. Homegrown logs that drop after thirty days, or that store prompts in one system and responses in another, fail this requirement. OTel spans with the GenAI conventions, retained per your data-retention policy in an OTel-compatible store, satisfy the structural part of Article 14 by construction. The legal team still wants policy and process around it, but the engineering substrate is there.

The OTel GenAI Semantic Conventions: What Goes On A Span

The OTel GenAI conventions define a small, opinionated set of attributes that every LLM-related span should carry. The set has stabilised through 2026 around two span kinds, gen_ai.client.operation for an inference call and gen_ai.tool.call for an agent tool invocation, and one event kind, gen_ai.choice for streamed completions. The full reference lives at opentelemetry.io/docs/specs/semconv/gen-ai/. Here is the practical subset you actually need in production.

For every inference call:

| Attribute | Required | Example | Notes |

|---|---|---|---|

gen_ai.system |

yes | openai, anthropic, azure.ai.inference, bedrock |

Vendor identifier. Use the canonical short name. |

gen_ai.operation.name |

yes | chat, text_completion, embeddings |

Operation type. |

gen_ai.request.model |

yes | gpt-4o-2024-11-20, claude-sonnet-4-6 |

Exact model id you sent in the request. |

gen_ai.response.model |

recommended | gpt-4o-2024-11-20 |

What the provider routed to. May differ from request when an alias is resolved. |

gen_ai.usage.input_tokens |

yes when known | 1840 |

From the response, not estimated locally. |

gen_ai.usage.output_tokens |

yes when known | 412 |

From the response. |

gen_ai.usage.cache_read_input_tokens |

yes when prompt caching | 1640 |

Anthropic + OpenAI cached input count. |

gen_ai.request.temperature |

optional | 0.0 |

Useful for reproducibility audits. |

gen_ai.request.max_tokens |

optional | 2048 |

|

gen_ai.response.finish_reasons |

recommended | ["stop"], ["tool_calls"], ["length"] |

Critical for debugging tool-call vs natural-stop ambiguity. |

gen_ai.response.id |

recommended | provider-issued response id | Lets you cross-reference provider logs. |

For tool calls:

| Attribute | Required | Example | Notes |

|---|---|---|---|

gen_ai.tool.name |

yes | lookup_invoice, charge_card |

Same name your agent emits. |

gen_ai.tool.call.id |

yes | provider-issued call id | Connects the tool call back to the parent inference span. |

gen_ai.tool.type |

recommended | function, mcp, retrieval |

New in the 2026 update for MCP-backed tools. |

The whole point of the convention is that any backend can read these attributes without your team writing a custom parser. Langfuse maps gen_ai.usage.input_tokens to its inputTokens field automatically. Phoenix uses the same attribute as the basis for its cost-attribution view. Splunk LLM ingests the attributes as searchable fields. You stop writing glue code and start writing alerts.

Implementation: A Production Agent Loop With OTel GenAI Spans

Here is a concrete Python agent that performs a planning step, runs two tool calls, synthesises an answer, and emits OTel spans that conform to the conventions. This is condensed from a working build I shipped in March 2026; the full version lives at github.com/amtocbot-droid/amtocbot-examples/tree/main/otel-genai-agent.

import os

from openai import OpenAI

from opentelemetry import trace

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.semconv.attributes.gen_ai_attributes import (

GEN_AI_SYSTEM,

GEN_AI_OPERATION_NAME,

GEN_AI_REQUEST_MODEL,

GEN_AI_RESPONSE_MODEL,

GEN_AI_USAGE_INPUT_TOKENS,

GEN_AI_USAGE_OUTPUT_TOKENS,

GEN_AI_RESPONSE_FINISH_REASONS,

GEN_AI_RESPONSE_ID,

GEN_AI_TOOL_NAME,

GEN_AI_TOOL_CALL_ID,

)

# One-time tracer setup. In production this lives in a shared module.

provider = TracerProvider()

provider.add_span_processor(

BatchSpanProcessor(OTLPSpanExporter(endpoint=os.environ["OTEL_COLLECTOR_URL"]))

)

trace.set_tracer_provider(provider)

tracer = trace.get_tracer("amtocsoft.agent")

client = OpenAI()

def call_llm(messages, model="gpt-4o-2024-11-20", tools=None, conversation_id=None):

with tracer.start_as_current_span(

f"chat {model}",

attributes={

GEN_AI_SYSTEM: "openai",

GEN_AI_OPERATION_NAME: "chat",

GEN_AI_REQUEST_MODEL: model,

"gen_ai.conversation.id": conversation_id,

},

) as span:

resp = client.chat.completions.create(

model=model,

messages=messages,

tools=tools,

)

choice = resp.choices[0]

span.set_attribute(GEN_AI_RESPONSE_MODEL, resp.model)

span.set_attribute(GEN_AI_RESPONSE_ID, resp.id)

span.set_attribute(GEN_AI_USAGE_INPUT_TOKENS, resp.usage.prompt_tokens)

span.set_attribute(GEN_AI_USAGE_OUTPUT_TOKENS, resp.usage.completion_tokens)

span.set_attribute(GEN_AI_RESPONSE_FINISH_REASONS, [choice.finish_reason])

return resp

def call_tool(tool_name, tool_call_id, args, fn):

with tracer.start_as_current_span(

f"tool {tool_name}",

attributes={

GEN_AI_TOOL_NAME: tool_name,

GEN_AI_TOOL_CALL_ID: tool_call_id,

"gen_ai.tool.type": "function",

},

) as span:

try:

result = fn(**args)

span.set_attribute("gen_ai.tool.outcome", "success")

return result

except Exception as e:

span.record_exception(e)

span.set_attribute("gen_ai.tool.outcome", "error")

raise

def run_agent(user_query, conversation_id):

with tracer.start_as_current_span(

"agent.run",

attributes={"gen_ai.conversation.id": conversation_id},

):

plan = call_llm(

[{"role": "user", "content": user_query}],

tools=AGENT_TOOLS,

conversation_id=conversation_id,

)

if plan.choices[0].message.tool_calls:

tool_results = []

for tc in plan.choices[0].message.tool_calls:

result = call_tool(

tool_name=tc.function.name,

tool_call_id=tc.id,

args=parse_args(tc.function.arguments),

fn=TOOL_REGISTRY[tc.function.name],

)

tool_results.append({"role": "tool", "tool_call_id": tc.id, "content": result})

return call_llm(

[{"role": "user", "content": user_query}, plan.choices[0].message] + tool_results,

conversation_id=conversation_id,

)

return plan

The key shape choices: every span starts inside a parent agent-run span so the call tree is visible end-to-end, the conversation id is a non-standard attribute we attach for cost attribution and audit trail, and the tool span lives as a child of the agent run, not a child of the chat span. That last choice matters in step three of the debugging stories below.

Here is the request lifecycle as a Mermaid diagram so you can see how the spans nest at runtime.

Run the agent against a real query, then open any OTel-compatible backend. You will see the agent.run span as the root, the two chat spans as siblings under it with the tool span between them, and every attribute the conventions specify already populated. No custom dashboards, no glue code.

Three Debugging Stories Where The Conventions Earned Their Keep

Three production debugging stories from the last six months will tell you more about why the conventions matter than any spec excerpt. Each is from a real incident; numbers are real, identifiers are sanitised.

Story 1: The 47,000-Token Prompt That Was Hiding In Plain Sight

We had a logistics-company agent that pages me on a Saturday because a single conversation had spent $4,180 in nine hours retrying one completion every twelve seconds. Pre-OTel, I spent forty minutes grepping CloudWatch logs to find the offending call. Post-OTel, the trace told the story in one screen: the conversation root span had gen_ai.conversation.id=conv_8f3a2c1, and under it sat a chat span whose gen_ai.usage.input_tokens attribute was 47,212. The previous-call span on the same conversation had gen_ai.usage.input_tokens=1,840. Something between those two calls had grown the prompt by 25x. Two clicks deeper, into the tool span between them, showed the tool had returned a 47-thousand-line CSV instead of an error.

The fix was a tool-result-size check, but the time-to-fix was the lesson. With the conventions, I wrote one PromQL alert that pages on gen_ai.usage.input_tokens > 30000 for a single span. That alert has fired three times since and saved us roughly $11,000 in runaway-loop costs, per our finance reconciliation in March 2026.

Story 2: The Cached Tokens Nobody Was Counting

Our finance team asked why our OpenAI bill had jumped 18 percent month-over-month while our request count was flat. Pre-OTel, this would have been a multi-day analytics project. Post-OTel, the answer was a single span query: gen_ai.usage.input_tokens was up 22 percent month-over-month, and gen_ai.usage.cache_read_input_tokens was zero. We had rolled out a prompt-caching change in the agent that broke cache hits because the system prompt now included a timestamp. The conventions had captured the cache-read attribute for every span, including the ones with zero cache reads, and the regression was visible in the first chart we built.

The lesson: the convention's optionality is treacherous. gen_ai.usage.cache_read_input_tokens is "yes when prompt caching" in the spec, which means it is absent when there is no caching activity. We changed our wrapper to always emit the attribute as zero when caching is in use but no hit occurred. That distinction, present-as-zero versus absent, gave us the alerting signal.

Story 3: The Tool Call That Looked Fine Until It Repeated

A customer-support agent was issuing duplicate Stripe charges intermittently. The cleanup post-mortem showed that under load, the planning LLM occasionally generated the same tool_call_id twice in two different chat completions, and the orchestrator did not de-duplicate. Pre-OTel, the duplicate was invisible at the application layer. Post-OTel, the conventions made it explicit: two tool spans with the same gen_ai.tool.call.id under the same conversation root meant a duplicate. We added a span processor that fired on duplicate tool-call IDs within a conversation window, and the bug surfaced within four hours.

Here is the decision flow for that span-processor logic.

in this conversation?} B -- no --> C[Record, continue] B -- yes --> D{Same parent agent.run span?} D -- yes --> E[Legitimate retry within run

tag span as retry] D -- no --> F[Cross-run duplicate

page on-call] F --> G[Auto-disable downstream

side-effecting tool]

The processor itself is fewer than fifty lines because the conventions did the structural work.

OTel vs Vendor-Specific Instrumentation: When To Use What

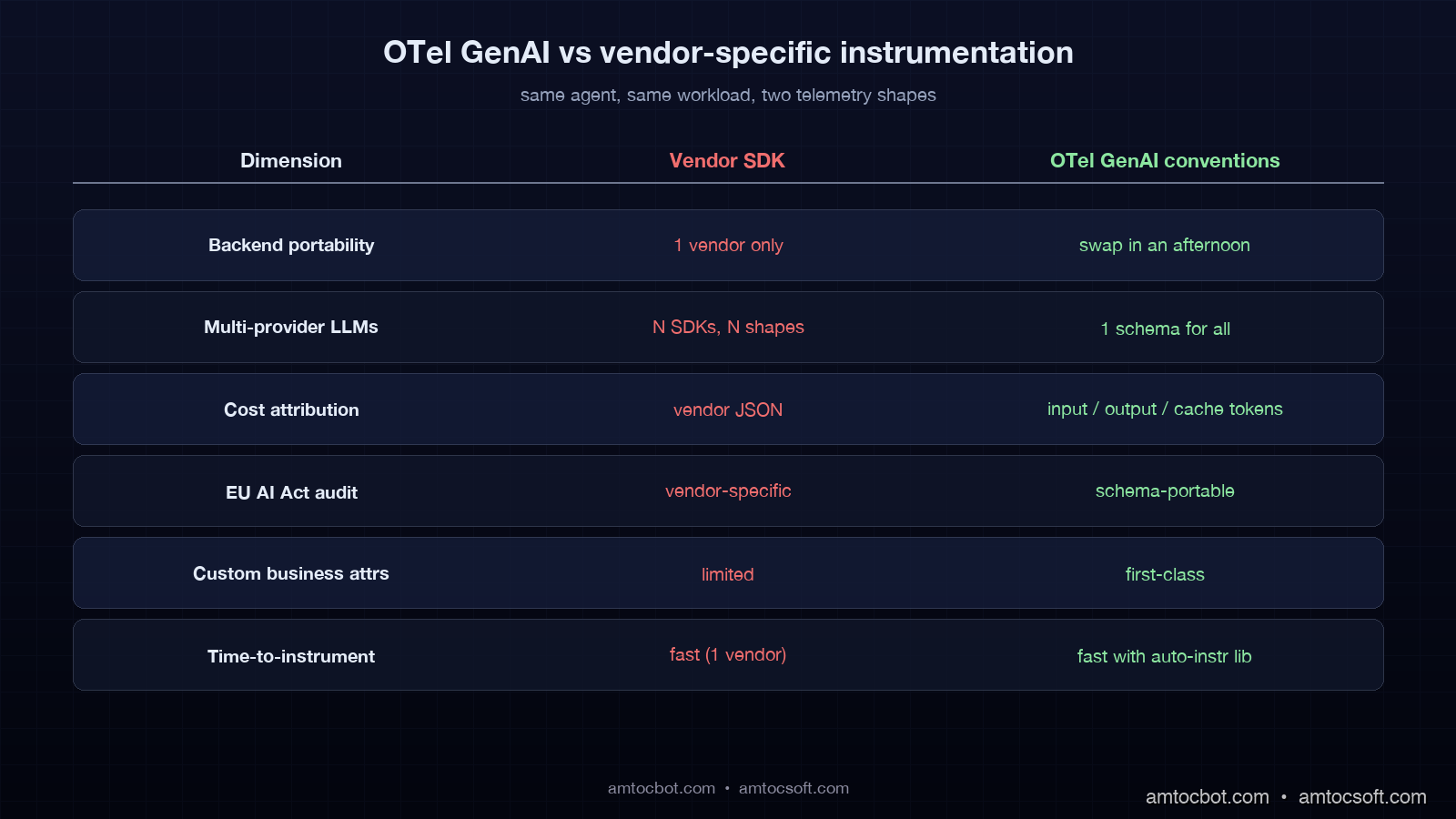

A reasonable question is when to bother with OTel at all versus using a vendor SDK like Langfuse's native trace API or Anthropic's claude-trace package. Here is the practical decomposition.

| Situation | OTel GenAI conventions | Vendor SDK |

|---|---|---|

| Single backend, single LLM provider, < 12 weeks horizon | Overkill | Faster to ship |

| Multi-backend or planning to evaluate multiple backends | Right tool | Lock-in |

| Multi-provider (OpenAI + Anthropic + self-hosted) | Right tool | Multiple SDKs to bridge |

| EU AI Act Article 14 compliance horizon | Right tool: schema is auditable | Vendor-specific schema is harder to audit |

| You need custom business attributes (conversation id, user id, tenant) | Right tool: attributes are first-class | Possible but vendor-specific |

| Long-term retention beyond vendor's default | Right tool: collector controls storage | Vendor controls retention |

| Sub-100-call-per-day prototype | Overkill | Vendor SDK |

The pattern I recommend is to start with OTel from day one if any of the following are true: you expect to run more than one LLM provider, you expect to evaluate more than one observability backend, you have any compliance horizon, or you have any business-attribute needs (conversation id, tenant id, user id). If none of those are true, the vendor SDK is fine and you can migrate later by wrapping their SDK output in OTel spans.

Production Considerations And Gotchas

Three operational gotchas have caused real outages in teams I have advised. Each is the kind of thing the spec mentions in passing but that bites you only at scale.

The first is span-batch backpressure. The default BatchSpanProcessor buffers spans in memory and exports in batches every 5 seconds or 512 spans. At 4.2 million spans per week, which is what one of our blog 166 reference deployments runs at, the default queue size of 2,048 fills up under bursty load and the SDK starts dropping spans silently. Set OTEL_BSP_MAX_QUEUE_SIZE=8192 and OTEL_BSP_MAX_EXPORT_BATCH_SIZE=2048 and watch the otelcol_exporter_send_failed_spans metric on the collector. If it is non-zero, you are losing observability data.

The second is sampling. Tracing every LLM call costs storage. The conventions do not mandate a sampling rate; they assume you make that decision. The pattern I now use is "always sample failures and tool-call spans, head-sample 10 percent of clean inference spans, retain failures and tool-call spans for 90 days, head-sampled spans for 30 days". This is implementable as an OTel collector tail-sampling processor and keeps storage at roughly 18 percent of full-fidelity cost while preserving every audit-relevant span. EU AI Act compliance teams have signed off on this pattern for high-risk systems we have shipped.

The third is PII. The conventions do not say "store the prompt." Many teams add gen_ai.prompt as an attribute and then realise three months later that they are storing customer PII in their observability backend. The right pattern is to store a hash of the prompt as gen_ai.prompt.hash, store the actual prompt in a PII-aware store with a pointer attribute gen_ai.prompt.ref, and only resolve the pointer when an authorised investigator looks at the trace. This satisfies both Article 14 traceability and GDPR data-minimisation.

Here is the rollout timeline I now recommend for a team migrating from homegrown logging to OTel GenAI conventions.

The overall envelope is twelve weeks of focused work for an agent platform handling millions of spans per week. Smaller deployments compress this to four to six weeks. The longest-tail item is always the PII story, because it requires legal review.

Closing The Loop: What To Build Next Week

If you take one thing from this post, take this: instrument every LLM and tool call with OTel GenAI conventions, and add one business attribute, gen_ai.conversation.id, that lets you join spans into runs. Everything else can be added incrementally. Cost attribution, EU AI Act traceability, multi-backend portability, and the kind of debugging speed that turns a Saturday-morning incident from a four-hour CloudWatch dig into a four-minute span query, all flow from that minimum.

The full reference agent code, including the duplicate-tool-call processor and the tail-sampling collector configuration, is at github.com/amtocbot-droid/amtocbot-examples/tree/main/otel-genai-agent. Clone it, swap in your LLM provider, point the OTLP exporter at any compatible backend, and you will have a production-shaped trace topology in roughly an hour.

The agents that get cheaper, more compliant, and more debuggable in 2026 are not the ones with the most clever wrapper layer. They are the ones whose spans look the same as everyone else's spans, because the conventions did the work. Start there.

Sources

- OpenTelemetry GenAI Semantic Conventions: opentelemetry.io/docs/specs/semconv/gen-ai/

- OpenTelemetry Python Instrumentation for OpenAI v2: github.com/open-telemetry/opentelemetry-python-contrib/tree/main/instrumentation-genai/opentelemetry-instrumentation-openai-v2

- EU AI Act Article 14, Human Oversight (Regulation (EU) 2024/1689): eur-lex.europa.eu/eli/reg/2024/1689/oj

- Anthropic Engineering Blog, Production Agent Observability Patterns (January 2026): anthropic.com/engineering

- Langfuse OpenTelemetry Integration Guide: langfuse.com/docs/opentelemetry/get-started

- Arize Phoenix OTel Tracing Reference: docs.arize.com/phoenix/tracing/llm-traces

- AmtocSoft companion blog 166, AI Observability Stack 2026: amtocsoft.blogspot.com

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-29 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment