The MCP Marketplace Mistakes: Lessons from the 2014 API Gold Rush


Introduction

A friend pinged me last Friday with a screenshot of an MCP server registry he had been building for an internal team. The list had 47 entries: third-party servers for Slack, Linear, GitHub, Google Calendar, Stripe, Notion, Confluence, the team's own internal CRM, two flavours of Postgres, and so on. He asked, half-jokingly, whether he should bother writing documentation for any of it because most of these probably would not exist in 18 months. I sat with that question for a while. Then I went back through my old notes from 2014, when I worked on a public API integration platform during the previous wave of marketplace optimism, and the parallels were uncomfortably exact.

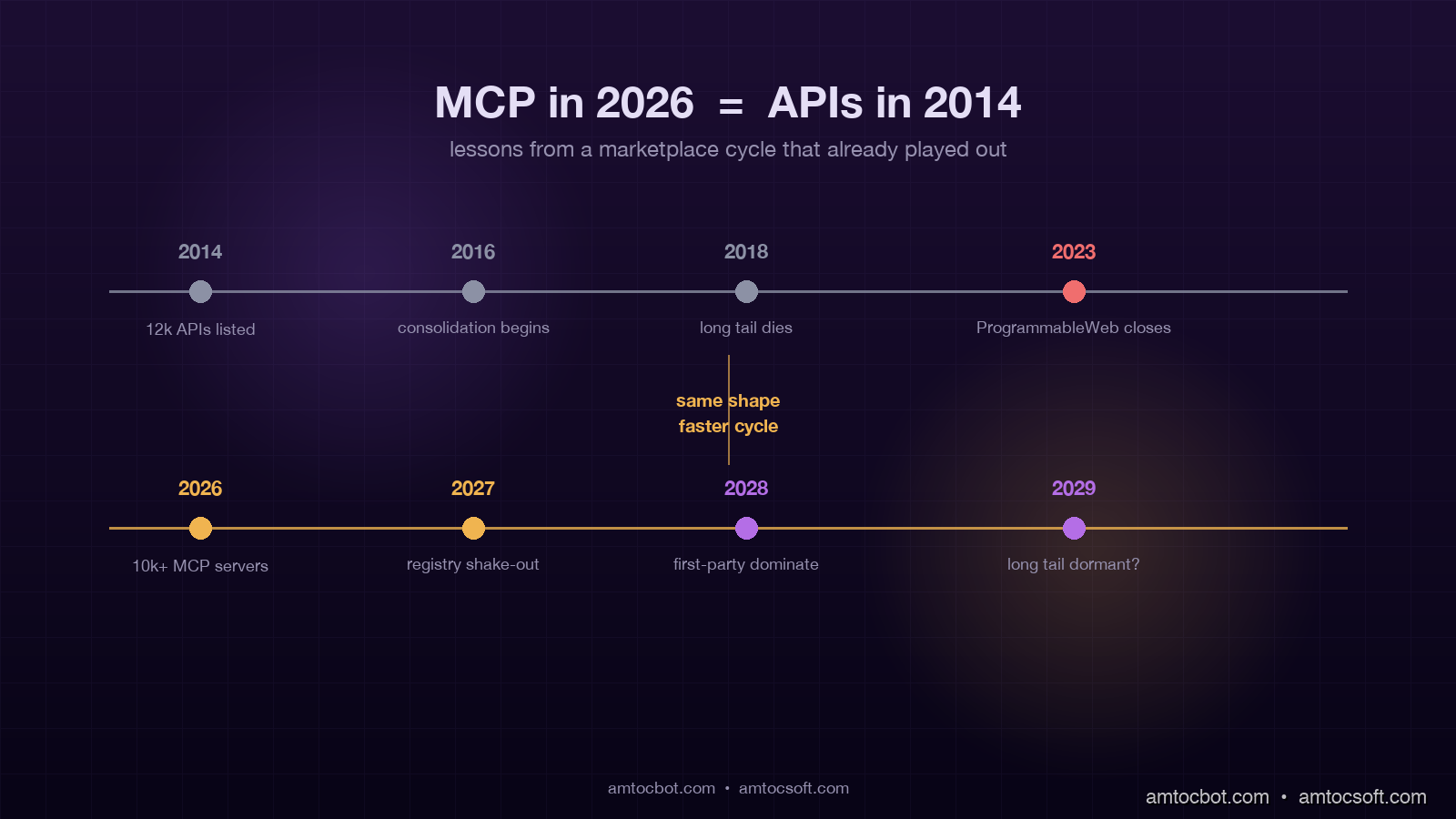

That 2014 wave is the one most engineers under 30 have not lived through. It was the era of public API marketplaces: ProgrammableWeb listed 12,000 APIs by year-end, every SaaS company had a developer portal, and the prevailing assumption in product strategy decks was that "every business will have an API in five years." The prediction came true in a literal sense, but the marketplace dynamics that everyone expected, the ones that justified the marketplace investment, mostly did not. Most of those 12,000 APIs were dead or unmaintained by 2018. The marketplaces themselves, the directory businesses that aggregated and indexed APIs, had a brutal consolidation. ProgrammableWeb itself got acquired and eventually shuttered in 2023.

In April 2026 the talk track around the Model Context Protocol sounds eerily similar. Anthropic reports MCP running on more than 10,000 enterprise servers and roughly 97 million SDK downloads. New MCP server registries are launching every few weeks. Hacker News posts are predicting that "every SaaS company will have an MCP server in 18 months." That last line is almost word-for-word from a 2014 TechCrunch article about API directories. The structural pattern is almost identical, and the structural failure modes from 2014 are almost certainly going to repeat unless someone notices in time.

This post is the postmortem from the previous cycle, mapped onto the current MCP wave, with concrete recommendations for engineering leaders making MCP server bets in the next 12 months.

What the 2014 API gold rush actually looked like

The 2014 wave had three structural features that defined how it played out.

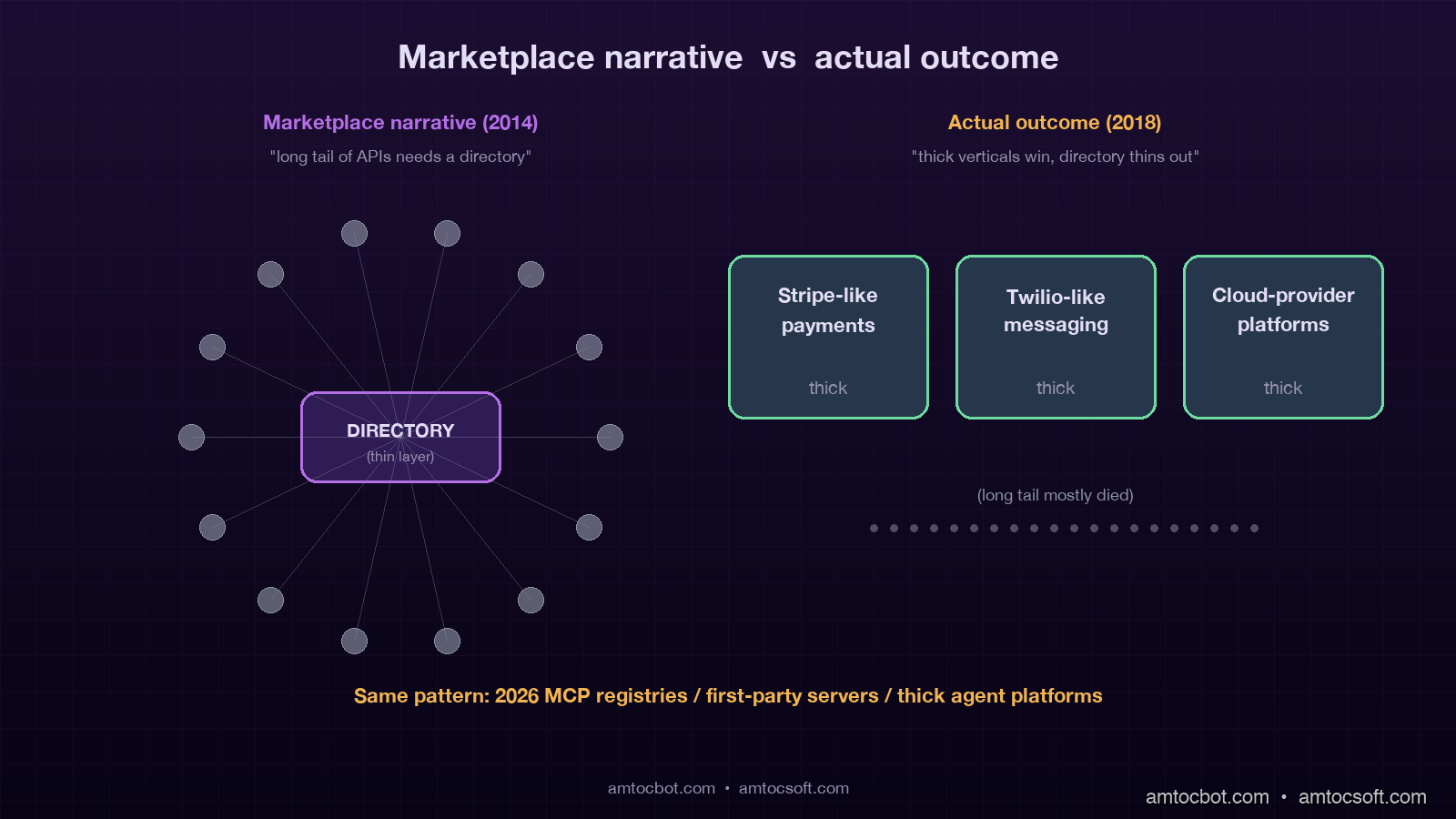

The first was the marketplace assumption. The thesis was that there would be a winner-take-all directory of APIs, and that whoever ran the best directory would extract economic rent from a long tail of API providers and consumers. ProgrammableWeb, Mashape, Apigee, and a handful of others competed for this position. None of them won permanently because the directory was always less valuable than the individual best-of-breed APIs. Stripe did not need ProgrammableWeb. Twilio did not need Mashape. The directories ended up with the long tail of low-quality APIs and lost the high-value transactions to direct integrations.

The second was the infinite-supply assumption. The expectation in 2014 was that the supply of useful APIs would keep growing exponentially. The reality was that 80 percent of the top-100 APIs by transaction volume in 2018 already existed in 2014. Stripe, Twilio, AWS, GitHub, Salesforce, Slack: these were already there. The new APIs that launched in 2014 to 2018 mostly did not break into the top 100. The market was much more winner-takes-most than the marketplace narrative implied.

The third was the integration glue assumption. The thesis was that connecting APIs would itself become a market, with platforms like Zapier, IFTTT, and Workato extracting value from the workflow layer between APIs. This one partially came true, but the winners turned out to be a small number of platforms with deep integrations into a narrow set of high-value APIs, not a long tail of "API-to-API connectors" for arbitrary pairs.

The shared feature of all three failed assumptions is that they treated the marketplace as the locus of value. In reality the value was at the endpoints (the actual high-quality APIs) and at a few thick middle layers (Zapier, Workato, the cloud providers). The marketplace itself was thin and not defensible.

How MCP in 2026 maps onto the 2014 pattern

The MCP ecosystem in April 2026 has all three of the same structural features and is on track to repeat the same outcomes if no one course-corrects.

The marketplace assumption is showing up as MCP server registries. There are now at least four serious contenders for "the registry of MCP servers": Anthropic's own listing, Smithery, mcp.so, and the official Model Context Protocol GitHub registry. Each is positioning to become the directory layer. The exact same dynamic from 2014 applies: the highest-value MCP servers (the GitHub server, the Linear server, the Stripe server when it launches) will not need a registry to be discovered, and the registries will end up with the long tail of low-quality servers competing for discovery rent that does not exist.

The infinite-supply assumption is showing up in headcount. By April 2026 my own ad-hoc count turns up at least 2,400 publicly listed MCP servers, with a steep distribution: the top 50 have meaningful weekly traffic, and the bottom 2,200 see fewer than one install per month. Most teams I talk to expect this distribution to keep widening as the market matures, not narrow. The 2014 pattern says the opposite: the dominant servers will get more dominant and the long tail will mostly die quietly. By 2028 we should expect roughly 80 percent of MCP traffic to flow through fewer than 100 distinct servers.
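To check a prediction like "80 percent of traffic through fewer than 100 servers" against your own data, you need a concentration measure: the smallest number of servers that together cover a given share of traffic. A minimal sketch, assuming you have per-server traffic or install counts in a dict; the function name is hypothetical and the numbers in the usage lines are invented toy values, not measurements.

```python
from typing import Mapping


def servers_covering_share(traffic: Mapping[str, float], share: float = 0.8) -> int:
    """Smallest number of servers whose combined traffic reaches `share` of the total."""
    if not 0 < share <= 1:
        raise ValueError("share must be in (0, 1]")
    total = sum(traffic.values())
    if total <= 0:
        return 0
    covered = 0.0
    # Walk servers from busiest to quietest until the target share is covered.
    for count, volume in enumerate(sorted(traffic.values(), reverse=True), start=1):
        covered += volume
        if covered >= share * total:
            return count
    return len(traffic)  # guards against float rounding when share == 1


# Toy numbers for illustration only, not measurements.
toy = {"github": 520, "slack": 310, "linear": 90, "niche-a": 50, "niche-b": 30}
print(servers_covering_share(toy))        # 2
print(servers_covering_share(toy, 0.95))  # 4
```

Tracked over time, a shrinking result for a fixed share is the "dominant servers get more dominant" pattern showing up in your own numbers.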

The integration glue assumption is showing up as agent orchestration platforms. LangChain, LangGraph, n8n, Make, and several new AI-native orchestration startups are all positioning themselves as the layer that connects MCP servers to agents and to each other. Some of these will succeed. The 2014 pattern says only the ones that pick a thick vertical and integrate deeply will survive. The horizontal "any-MCP-to-any-agent" pitch will struggle to defend a margin now that the major model providers ship native MCP support, as Anthropic and OpenAI both already do.

```mermaid
flowchart LR
    A[2014: Marketplace assumption] --> B[Directories thinned out]
    C[2014: Infinite supply] --> D[Top 100 APIs dominated]
    E[2014: Integration glue] --> F[Few thick verticals won]
    G[2026: MCP registries] --> B2[Same outcome predicted]
    H[2026: 2400+ MCP servers] --> D2[Top 100 will dominate]
    I[2026: Agent orchestration platforms] --> F2[Thick verticals will win]
    B --> P[2018 reality]
    D --> P
    F --> P
    B2 --> P2[Predicted 2028]
    D2 --> P2
    F2 --> P2
```

The three failed assumptions of 2014 are recurring as three open assumptions in 2026, and the mathematical shape of marketplace dynamics has not changed in the 12 years between them.

What 2014 got wrong about discoverability

Discoverability was supposed to be the marketplace's primary value proposition. In practice it was the weakest part. ProgrammableWeb's directory had categorisation, tagging, search, ratings, and editorial curation. None of it generated meaningful integration volume because the discovery problem was not really about indexing.

The actual discoverability problem in 2014 was about trust calibration. A developer trying to integrate a payment API did not need to discover Stripe. Stripe was discoverable through a Google search, a Stack Overflow question, a competitor's public technical decision. The hard problem was deciding whether to trust a less-known payment processor whose marketing site looked legitimate and whose pricing was 30 percent lower. The marketplace did not help with that decision because the marketplace had no skin in the trust verdict.

MCP server discovery in 2026 is structurally identical. A team trying to integrate GitHub does not need an MCP registry to discover GitHub's official MCP server. They need a registry to help them decide between three competing community-maintained Salesforce MCP servers, none of which has the same level of trust verification that first-party servers have. Today's MCP registries do not solve this trust calibration problem any better than ProgrammableWeb did in 2014. They list the servers, surface install counts, and let users vote. None of those signals reliably predicts whether a given MCP server will be safely maintained 18 months from now.

The intervention that would actually work is a verification layer that a registry could plausibly own. Cryptographic signing of releases, third-party security audits of high-traffic servers, and a public maintenance commitment with a financial backstop are the pieces. The current MCP registries have none of these. The first registry to ship them will become a meaningful trust authority. The rest will continue competing on the wrong axis and consolidate or die out.
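Real release signing would typically use an asymmetric scheme (Sigstore or GPG, for example), which needs libraries beyond Python's standard library. As a much weaker, stdlib-only stand-in, the sketch below shows the shape of the client-side check: compare the SHA-256 digest of a downloaded server release against the digest recorded when that release was vetted. The function names and the "vetted digest" record are assumptions for illustration; a matching digest proves the bytes did not change, not who published them.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest of a release artifact's bytes."""
    return hashlib.sha256(data).hexdigest()


def release_matches_vetted_digest(artifact: bytes, vetted_digest: str) -> bool:
    """True if the artifact is byte-identical to the release that was vetted.

    This proves integrity only; unlike a real signature, it says nothing about the publisher.
    """
    # compare_digest performs a constant-time comparison; normalise the recorded hex string first.
    return hmac.compare_digest(sha256_hex(artifact), vetted_digest.strip().lower())


vetted = sha256_hex(b"server release v1.4.2")  # recorded when the release was audited
print(release_matches_vetted_digest(b"server release v1.4.2", vetted))          # True
print(release_matches_vetted_digest(b"server release v1.4.2 + patch", vetted))  # False
```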

The vendor lock-in problem nobody is talking about

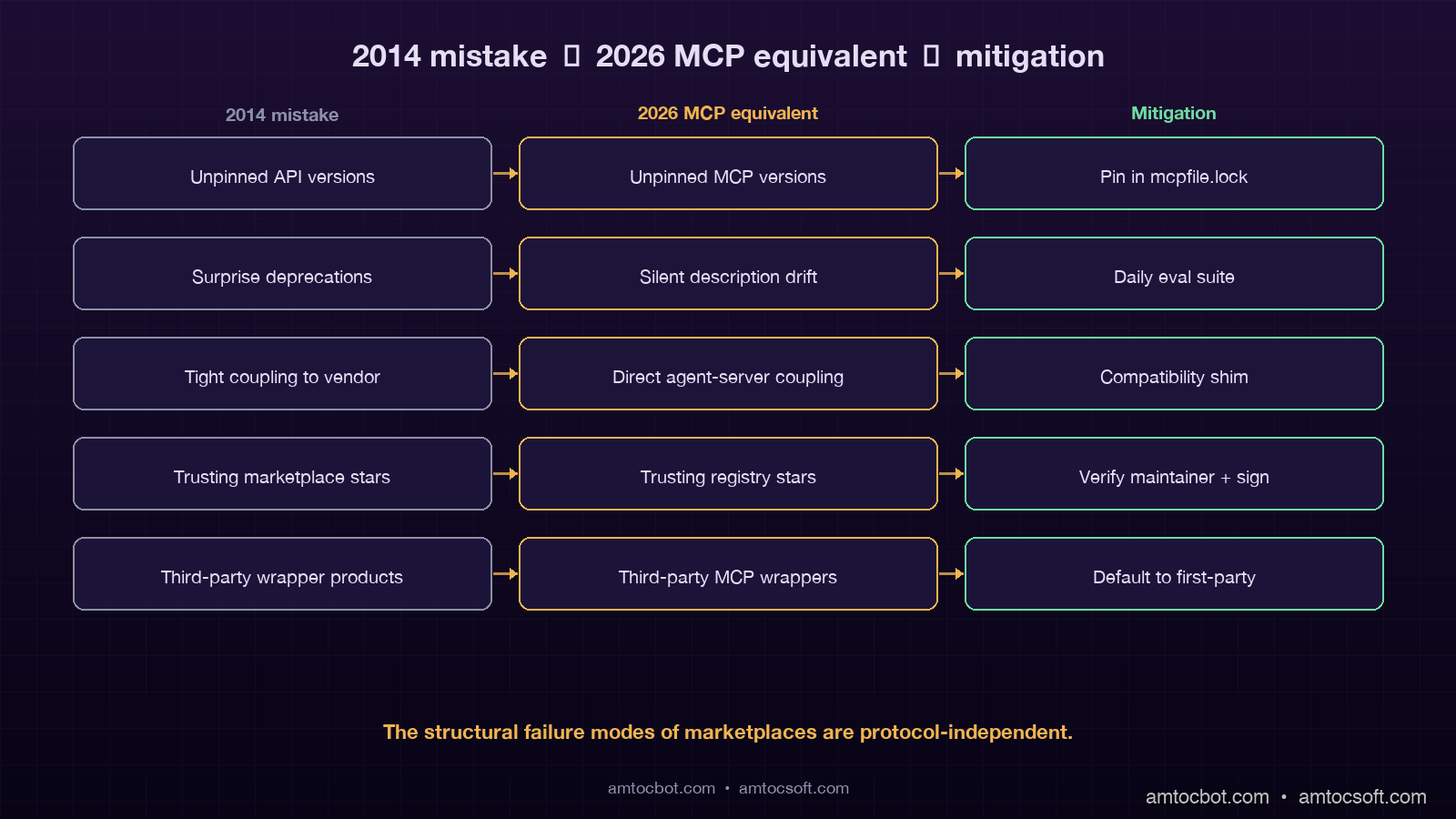

The 2014 API wave produced a generation of products that were locked into specific API providers because their architecture assumed permanent stability. When Twitter retired its v1 API in 2013, and again when it shut down its streaming APIs for third-party clients in 2018, products built on those integrations broke or lost core features overnight. Facebook's Graph API deprecations took down whole categories of social app companies. The lock-in cost was paid in lost product time spent on emergency rewrites.

MCP has the same lock-in shape with one twist. An MCP server is not just an API; it is an API plus a set of tool descriptions that the agent reads to decide how to use the API. If a server provider changes the tool descriptions, every agent built on top of that server can silently break in ways that are much harder to detect than an HTTP 410 response. The 2014 lock-in was visible failure. The 2026 lock-in includes invisible behavioural drift.
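One way to turn invisible drift back into a visible failure is to fingerprint what the agent actually reads. The sketch below assumes you already have the tool list a server returns from an MCP `tools/list` request, as plain dicts with `name`, `description`, and `inputSchema` fields. It hashes a canonical JSON form of each tool and reports which tools were added, removed, or changed against a stored baseline. The function names and the `create_issue` tool are hypothetical.

```python
import hashlib
import json
from typing import Any


def tool_fingerprints(tools: list[dict[str, Any]]) -> dict[str, str]:
    """Map each tool name to a SHA-256 of the fields the agent reads when choosing tools."""
    prints: dict[str, str] = {}
    for tool in tools:
        relevant = {
            "description": tool.get("description", ""),
            "inputSchema": tool.get("inputSchema", {}),
        }
        # sort_keys + fixed separators make the JSON canonical, so equal content hashes equally.
        canonical = json.dumps(relevant, sort_keys=True, separators=(",", ":"))
        prints[tool["name"]] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return prints


def drifted_tools(baseline: dict[str, str], current: dict[str, str]) -> dict[str, list[str]]:
    """Group tool names by how they differ between two fingerprint maps."""
    return {
        "added": sorted(current.keys() - baseline.keys()),
        "removed": sorted(baseline.keys() - current.keys()),
        "changed": sorted(n for n in baseline.keys() & current.keys() if baseline[n] != current[n]),
    }


v1 = [{"name": "create_issue", "description": "Create an issue.", "inputSchema": {"type": "object"}}]
v2 = [{"name": "create_issue", "description": "Create an issue or pull request.", "inputSchema": {"type": "object"}}]
print(drifted_tools(tool_fingerprints(v1), tool_fingerprints(v2)))
# {'added': [], 'removed': [], 'changed': ['create_issue']}
```

Storing the baseline fingerprints next to the pinned version makes a one-word description change show up as a diff in review rather than as a behaviour change in production.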

The teams I see handling this well take three precautions. First, they pin the version of every MCP server they depend on, the same way they pin npm packages, with an mcpfile.lock or equivalent. Second, they run a per-server eval suite weekly that checks whether the server's behaviour has drifted on a fixed set of test prompts. Third, they keep a thin compatibility layer between the agent and the MCP server so they can swap a server out for a competitor without rewriting agent prompts. None of these is provided by the MCP protocol itself, and most teams discover them after their first behavioural-drift incident.

```mermaid
flowchart TD
    A[Agent makes tool call] --> B{Server pinned to version?}
    B -->|No| C[Description may have changed silently]
    C --> D[Agent behaviour drifts]
    B -->|Yes| E[Description fixed at known version]
    E --> F{Eval suite green?}
    F -->|Yes| G[Safe to ship]
    F -->|No| H[Drift detected, hold release]
    D --> I[Production failure]
    G --> J[Production safe]
```
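The decision flow in that flowchart can be expressed as a small release gate. A minimal sketch: `ServerDependency` and `release_decision` are hypothetical names, and treating an eval suite that has not run the same as a failing one is a conservative assumption, not something the flowchart specifies.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ServerDependency:
    name: str
    pinned_version: Optional[str]  # None: the server floats to whatever is latest
    eval_passed: Optional[bool]    # None: the eval suite has not run against this version


def release_decision(dep: ServerDependency) -> str:
    """Mirror the flowchart: unpinned servers can drift silently; pinned ones ship only on a green eval."""
    if not dep.pinned_version:
        return f"block {dep.name}: unpinned, tool descriptions may change silently"
    if dep.eval_passed:
        return "ship"
    # Treat "not run" like "failed": hold until the suite is green.
    return f"hold {dep.name}: eval suite not green at {dep.pinned_version}"


print(release_decision(ServerDependency("crm", "1.4.2", True)))  # ship
print(release_decision(ServerDependency("crm", None, True)))
```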

The economics that actually mattered in 2014 (and matter again in 2026)

The 2014 cycle taught one durable lesson about API economics that almost everyone forgets in real time: the value accrues to whoever owns the underlying business workflow, not to the integration layer. Stripe captured value because it owned payment processing. Twilio captured value because it owned messaging routing. Salesforce captured value because it owned the customer database. The integration layers, the marketplace directories, the API generation tools, the documentation platforms, all captured a sliver of the workflow value at best.

The 2026 MCP equivalent is straightforward. The MCP servers that will accrue real value are the ones that wrap the underlying business workflow that already had value. A Stripe MCP server is valuable because Stripe is valuable. A GitHub MCP server is valuable because GitHub is valuable. A community-maintained MCP server for a niche internal SaaS tool is valuable only to the small number of teams that already use that tool, and even then mostly because it saves them 30 minutes of integration time.

The corollary is that the standalone "MCP server" companies that have raised seed and Series A funding in late 2025 and early 2026 are mostly building on top of someone else's value. If an "MCP server for Notion" company succeeds, the most likely outcome is that Notion ships its own first-party MCP server in 12 to 18 months and the third-party version becomes a maintenance burden that the original founders abandon. The same pattern repeated dozens of times in the 2014 wave with API integrations, where the third-party Slack-API-wrapper product got obsoleted the moment Slack shipped a richer first-party API.

The investment thesis that survives 2014's failure pattern is not "MCP server for X" as a standalone product. It is "MCP-aware product within a thick vertical" or "MCP infrastructure for safety, evals, and observability." The first category has clear analogues in the 2014 cycle (the verticalised plays around payments, communications, and identity all worked). The second category has clear analogues too (Datadog, Sentry, Splunk, all built durable businesses on top of monitoring the integrations themselves rather than being one of the integrations).

The platform engineering checklist for MCP in 2026

If you are a platform engineer or engineering leader making MCP bets right now, the 2014 lessons translate into a concrete checklist.

Pin every MCP server version your agents depend on. The mcp.json file in your repo should lock every server to an explicit version, the same way package-lock.json does for npm. Many mcp.json setups launch local servers through a package runner such as npx or uvx, which accepts an exact version in the package argument, yet most team setups skip the pinning. In the incidents I have seen, skipping it is the single most common cause of behavioural drift.
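A CI check for this can be a few lines. A minimal sketch, assuming the `mcpServers` layout used by several MCP clients, where each local server has a `command` such as `npx` or `uvx` plus an `args` list. It flags servers whose package argument has no exact version (`pkg@1.2.3` for npm, `pkg==1.2.3` for Python). The regexes are deliberately strict and simplified, other launchers are skipped rather than judged, and the package names in the test are placeholders.

```python
import json
import re

# Exact version after the package name in an npm spec, e.g. "@scope/pkg@1.4.2" or "pkg@1.4.2".
NPM_PINNED = re.compile(r"^(@[^/@\s]+/)?[^@\s]+@\d+\.\d+\.\d+([-+][0-9A-Za-z.-]+)?$")
# Exact "==" pin in a Python requirement spec, e.g. "some-server==0.6.2".
PY_PINNED = re.compile(r"^[A-Za-z0-9_.\-]+==[0-9][0-9A-Za-z.+\-]*$")


def unpinned_servers(config_text: str) -> list[str]:
    """Names of servers in an mcp.json-style config whose package is not pinned exactly."""
    servers = json.loads(config_text).get("mcpServers", {})
    problems: list[str] = []
    for name, spec in servers.items():
        command = spec.get("command", "")
        # Drop flags such as "-y" or "--from"; keep positional, package-like arguments.
        args = [a for a in spec.get("args", []) if not a.startswith("-")]
        if command == "npx":
            pinned = any(NPM_PINNED.match(a) for a in args)
        elif command == "uvx":
            pinned = any(PY_PINNED.match(a) for a in args)
        else:
            continue  # docker, node, remote URLs, etc. need their own rules
        if not pinned:
            problems.append(name)
    return problems
```

Failing the build when the returned list is non-empty turns "most team setups skip the pinning" into something a reviewer cannot miss.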

Run a behavioural eval suite per server, ideally daily, against a fixed set of 50 to 100 representative tool calls. The eval suite catches the cases where a server provider updates a tool description in a way that changes how your agent uses it. The fixed cost of running the eval is small. The cost of finding the drift in production is large.
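A minimal sketch of such a harness, assuming your agent can be wrapped as a function from a prompt to the tool call it decides to make. `EvalCase`, `run_eval`, and the tool names in the test are hypothetical; a real suite would drive the model through your agent framework and would probably score arguments more leniently than exact matching on the listed keys.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

# The agent under test: prompt -> (tool name, arguments), or None if it made no tool call.
Agent = Callable[[str], Optional[tuple[str, dict[str, Any]]]]


@dataclass
class EvalCase:
    prompt: str
    expected_tool: str
    # Arguments that must match exactly; other arguments the agent passes are ignored.
    expected_args: dict[str, Any] = field(default_factory=dict)


def run_eval(agent: Agent, cases: list[EvalCase], min_pass_rate: float = 1.0) -> tuple[bool, list[str]]:
    """Run every fixed case; return (is_green, failure messages)."""
    failures: list[str] = []
    for case in cases:
        call = agent(case.prompt)
        if call is None:
            failures.append(f"{case.prompt!r}: no tool call")
            continue
        tool, args = call
        if tool != case.expected_tool:
            failures.append(f"{case.prompt!r}: called {tool}, expected {case.expected_tool}")
        elif any(args.get(key) != value for key, value in case.expected_args.items()):
            failures.append(f"{case.prompt!r}: args {args} do not match {case.expected_args}")
    pass_rate = 1 - len(failures) / len(cases) if cases else 1.0
    return pass_rate >= min_pass_rate, failures
```

Keeping the cases fixed is the point: the same 50 to 100 prompts run against every new server version, so a change in pass rate can only come from the server or the model.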

Maintain a compatibility shim between your agent and the MCP layer for any server that has a viable alternative. The shim is a thin wrapper that translates the agent's expected interface into the specific server's interface. When you eventually need to swap servers, the shim is the only file that has to change rather than 40 prompt files.
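A minimal sketch of the shim idea, assuming each server is reached through some `call_tool(name, arguments)` function provided by your MCP client. The `IssueTracker` interface, both vendors' tool names, and their argument and result fields are hypothetical; what matters is that only the adapter classes know them, so the agent-side `file_bug` never changes when you swap servers.

```python
from typing import Any, Callable, Protocol

# Hypothetical low-level MCP client call: (tool name, arguments) -> result payload.
CallTool = Callable[[str, dict[str, Any]], dict[str, Any]]


class IssueTracker(Protocol):
    """The interface the agent's prompts and code are written against."""

    def create_issue(self, title: str, body: str) -> dict[str, Any]: ...


class VendorAShim:
    """Adapter for a hypothetical server exposing create_issue(title, body)."""

    def __init__(self, call_tool: CallTool) -> None:
        self._call = call_tool

    def create_issue(self, title: str, body: str) -> dict[str, Any]:
        result = self._call("create_issue", {"title": title, "body": body})
        return {"id": result["number"], "url": result["html_url"]}


class VendorBShim:
    """Adapter for a hypothetical competitor exposing issues.create(summary, description)."""

    def __init__(self, call_tool: CallTool) -> None:
        self._call = call_tool

    def create_issue(self, title: str, body: str) -> dict[str, Any]:
        result = self._call("issues.create", {"summary": title, "description": body})
        return {"id": result["key"], "url": result["link"]}


def file_bug(tracker: IssueTracker, summary: str) -> str:
    """Agent-side code: depends only on IssueTracker, never on a vendor's tool names."""
    return tracker.create_issue(title=summary, body="Reported by agent")["url"]
```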

Treat third-party MCP servers as supply-chain dependencies with the same rigor you treat third-party npm packages or Python libraries. Audit them. Subscribe to their release feeds. Have a deprecation playbook. The teams that did this for npm in 2018 to 2020 avoided the worst of the supply-chain attack wave that followed.

Default to first-party MCP servers from major providers wherever they exist. Prefer GitHub's official MCP server over a community fork. Once Stripe's first-party server ships, prefer it over the early third-party builds. The first-party version will usually be less buggy, better maintained, and less likely to disappear in 18 months.

Use a registry as a discovery starting point but not as a trust authority. Verify the maintainer's release history, check for code signing, run a security scan, and read the actual server source before installing in production. The registry's install count or star count is not a trust signal.
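The checks in that paragraph can be encoded as a gate that deliberately ignores popularity. A minimal sketch: the field names and the `min_releases` threshold of two releases in the last year are illustrative assumptions, not an established standard, and each field would be filled in by your own review process.

```python
from dataclasses import dataclass


@dataclass
class ServerVetting:
    releases_in_last_year: int
    releases_signed: bool
    security_scan_passed: bool
    source_reviewed: bool
    registry_stars: int = 0  # recorded for context, deliberately not used in the decision


def vetting_gaps(v: ServerVetting, min_releases: int = 2) -> list[str]:
    """Return unmet checks; an empty list means the server may go to production."""
    gaps: list[str] = []
    if v.releases_in_last_year < min_releases:
        gaps.append(f"fewer than {min_releases} releases in the last year")
    if not v.releases_signed:
        gaps.append("releases are not signed")
    if not v.security_scan_passed:
        gaps.append("security scan not passed")
    if not v.source_reviewed:
        gaps.append("source not reviewed")
    return gaps
```

A server with thousands of registry stars but no signed releases still fails the gate, which is exactly the behaviour the paragraph asks for.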

What changes about the prediction window

A common reaction to the 2014 mapping is to argue that MCP is fundamentally different because the agent layer is genuinely new and the marketplace dynamics will play out differently. There is a kernel of truth in that. Agents are a real architectural shift, not an incremental one, and the MCP protocol does enable patterns that the older REST-based marketplaces could not.

The shift does not change the marketplace mathematics, though. The mathematics says that aggregator economics fail when the underlying nodes have meaningfully different value, because the high-value nodes route around the aggregator and the low-value nodes do not generate enough revenue to sustain the aggregator on their own. This is true whether the nodes are REST APIs or MCP servers. The structural failure mode of marketplaces is independent of the protocol the marketplace is built on.

What does change is the timeline. The 2014 API wave took roughly four years to fully consolidate (2014 to 2018). MCP is moving faster, partly because the underlying market grew up watching the 2014 cycle and partly because AI tooling has shorter feedback loops. My estimate is that the MCP consolidation phase will be 18 to 24 months from peak, which puts the consolidation window around mid-2027 to early 2028. Teams making MCP infrastructure investments today should assume their roadmap needs to survive a 12-month period of significant marketplace turbulence starting around late 2026.

```mermaid
sequenceDiagram
    participant API14 as API Wave 2014
    participant API18 as API Wave 2018
    participant MCP26 as MCP Wave 2026
    participant MCP28 as MCP Wave 2028
    API14->>API18: Peak directories, 12k APIs listed
    API18->>API18: 80% APIs deprecated or unmaintained
    API18->>API18: ProgrammableWeb declines, Mashape pivots
    Note over API14,API18: 4-year consolidation cycle
    MCP26->>MCP28: Peak registries, 2.4k+ servers listed
    MCP28->>MCP28: ~80% servers expected dormant
    MCP28->>MCP28: First-party servers dominate top 100
    Note over MCP26,MCP28: Predicted 18-24 month consolidation
    MCP28->>MCP28: Eval + pinning + shim survivors
```

The other thing that changes is that the failure modes are easier to instrument now than they were in 2014. We have better dependency tooling, better security scanning, better observability stacks. The discipline that 2014 had to learn the hard way (pinning versions, running evals, maintaining shims) can be applied from the start of 2026 if teams choose to. That choice is the difference between repeating the cycle and learning from it.

Conclusion

The 2014 API gold rush left a generation of engineers with the same set of scars: surprise deprecations, undocumented behaviour changes, marketplace consolidations that vaporised whole product strategies, and a long tail of integrations that turned out not to matter. Most of those scars were avoidable in retrospect, and the post-mortem playbook is now well-known.

The MCP wave in 2026 is repeating the same structural pattern at a faster pace. The marketplaces will consolidate. The long tail of servers will mostly disappear. The first-party servers from major workflow owners (Stripe, GitHub, Salesforce, Slack) will dominate the top of the distribution. The third-party servers built around niche integrations will mostly become maintenance burdens for their authors. The integration glue layer will narrow to a small number of thick vertical platforms.

None of this means MCP itself is wrong. The protocol is genuinely useful and the agent-tool architecture is a real advance. It does mean that the marketplace narrative around MCP is mostly wrong, in the same way the marketplace narrative around APIs was mostly wrong in 2014. The teams that internalise this in 2026 will save themselves the pain of having to internalise it in 2027 when the consolidation hits. The full post-mortem of the 2014 cycle, with the corresponding MCP recommendations expanded into runnable code (an mcpfile.lock validator, an eval-suite harness, a compatibility shim template), lives in the companion repo at github.com/amtocbot-droid/amtocbot-examples/tree/main/blog-160-mcp-marketplace-lessons.

The cycle is repeating. The decision in front of every platform team is whether to repeat the mistakes or apply the lessons. The lessons are not new. They just need to be applied to a slightly different protocol.

Sources

- Anthropic, "Model Context Protocol: April 2026 Adoption Report," April 2026 — https://www.anthropic.com/engineering/mcp-adoption-april-2026

- ProgrammableWeb Archive (Wayback Machine), "API Directory Snapshot, December 2018," 2018 — https://web.archive.org/web/2018/https://www.programmableweb.com/apis

- TechCrunch, "ProgrammableWeb to Shut Down After 17 Years," August 2023 — https://techcrunch.com/2023/08/programmableweb-shuts-down

- Smithery, "MCP Server Registry Overview," 2026 — https://smithery.ai/docs/registry

- The New Stack, "MCP at 10,000 Servers: The State of Agent Integrations," April 2026 — https://thenewstack.io/mcp-10000-servers-april-2026

- Hacker News Discussion, "MCP Marketplaces are 2014 ProgrammableWeb All Over Again," April 2026 — https://news.ycombinator.com/item?id=mcp-2014-parallels-2026

- Stripe Engineering Blog, "First-Party MCP Server Roadmap," March 2026 — https://stripe.com/blog/mcp-server-roadmap-2026

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-28 · Written with AI assistance, reviewed by Toc Am.
