The Single Platform Health Score for LLM Systems: Rolling Up Four SLO Categories Into One Board-Ready Number Without Losing Per-Category Signal

Introduction

The first time I tried to put an LLM platform health number into a board pack, I lost the room in under thirty seconds. The board chair looked at the slide, which had four separate SLO bars (latency 99.2 percent, quality 96.4 percent, cost 91.0 percent, availability 99.97 percent), and asked one question: "Is the platform healthy or not, yes or no?" The CFO followed with: "If only one number ever made it to this meeting, which one would it be?" I did not have a good answer. Two weeks earlier the platform team had had a fierce internal debate about whether to weight quality at 40 percent or 30 percent of any composite. We never resolved it, so we showed all four bars, and the room read that as us not having a point of view.

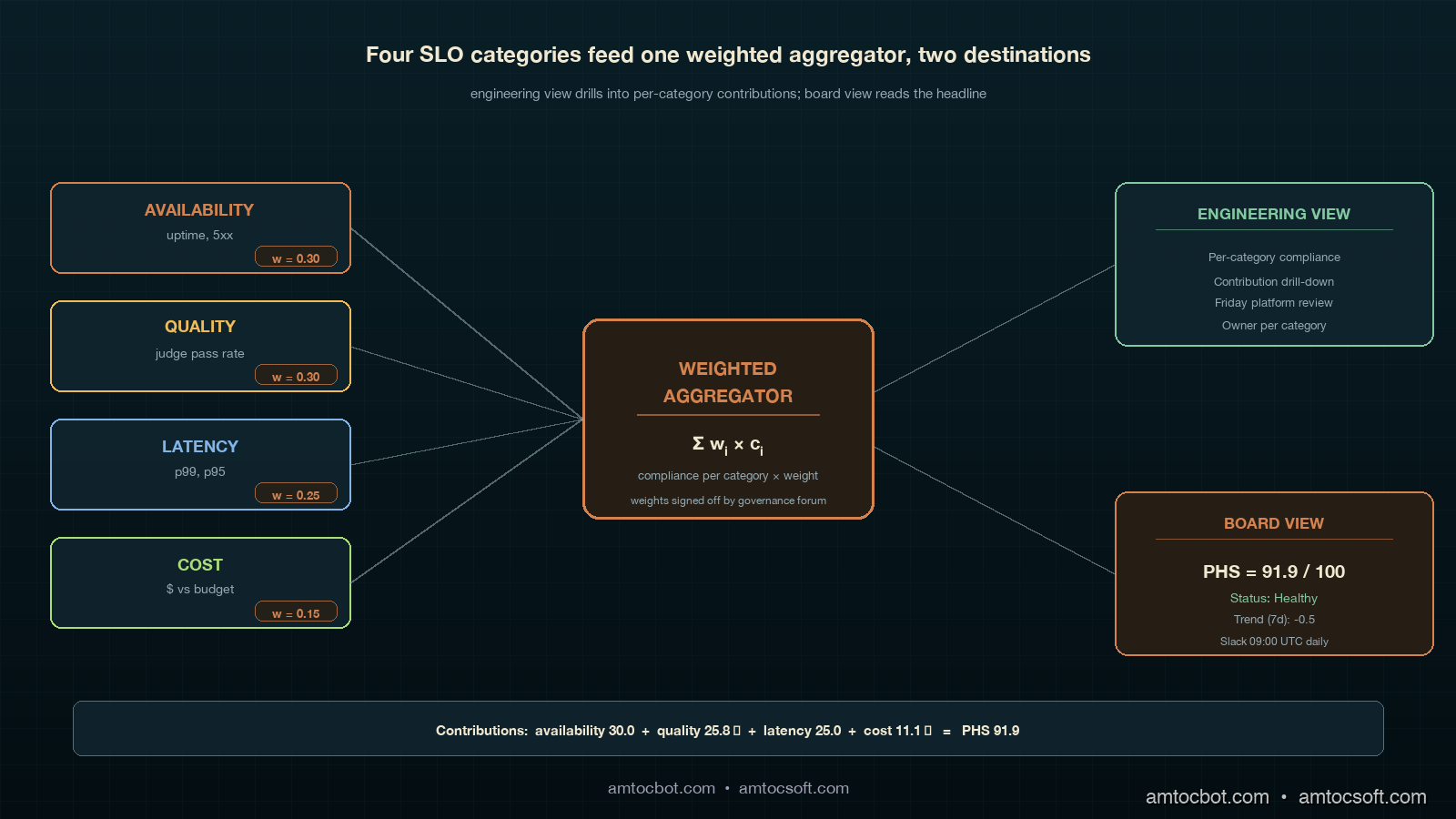

I went back to the office and built the rollup we now ship. It is one number, called the Platform Health Score (PHS), updated every hour, sent to a single Slack channel, and printed at the top of every board update. Underneath that one number sits the four-SLO framework from blog 180, untouched. The trick was not picking weights. The trick was picking weights and showing the per-category contribution alongside, so that whoever wants the boardroom view sees the headline and whoever wants the engineering view sees the breakdown. Neither audience loses information. Both audiences are looking at the same source.

This post is the rollup math, the dashboard layout, the weighting debate I lost three times before I won it, and one specific incident where the composite number caught a regression the per-category dashboards missed for fourteen hours. By the end you should have a single platform-health number you can defend to a board, a way to keep your engineers from feeling the headline flattens their signal, and a recompute pipeline that is cheap enough to update hourly across a multi-tenant production LLM system. Code is in Python and runs against any Prometheus or OpenTelemetry-backed metrics store. The companion repo lives at amtocbot-examples/llm-platform-health-score.

The Problem: Why Per-Category SLO Dashboards Fail at the Board Level

A platform team that has done its SLO homework usually ends up with a four-tile dashboard: latency, quality, cost, availability. Each tile has a target percentile, a current value, and an error-budget bar. For an SRE on a Tuesday morning, this is exactly the right view; you can scan it in five seconds and know which category is in trouble. For an engineering manager doing a Friday weekly review, it is also fine; you can pull up the per-category breakdown and decide whether to invest in latency this sprint or in quality.

For a board, it is the wrong view. Boards do not have time to learn what an error budget is. Boards want one number, ideally trending, ideally with a colour. A typical CFO will ask "is the platform healthy?" and they want yes or no, with a number that supports the answer. If you give them four bars, they will ask which one to look at. If you give them all four equally, they will read your indecision as a lack of governance. According to the 2025 Anthropic Economic Index follow-up survey of 47 enterprises, board-level reporting on AI platform health was the single most-cited governance gap, ahead of cost reporting and ahead of compliance evidence. The gap was not that nobody had numbers. The gap was that nobody had one number.

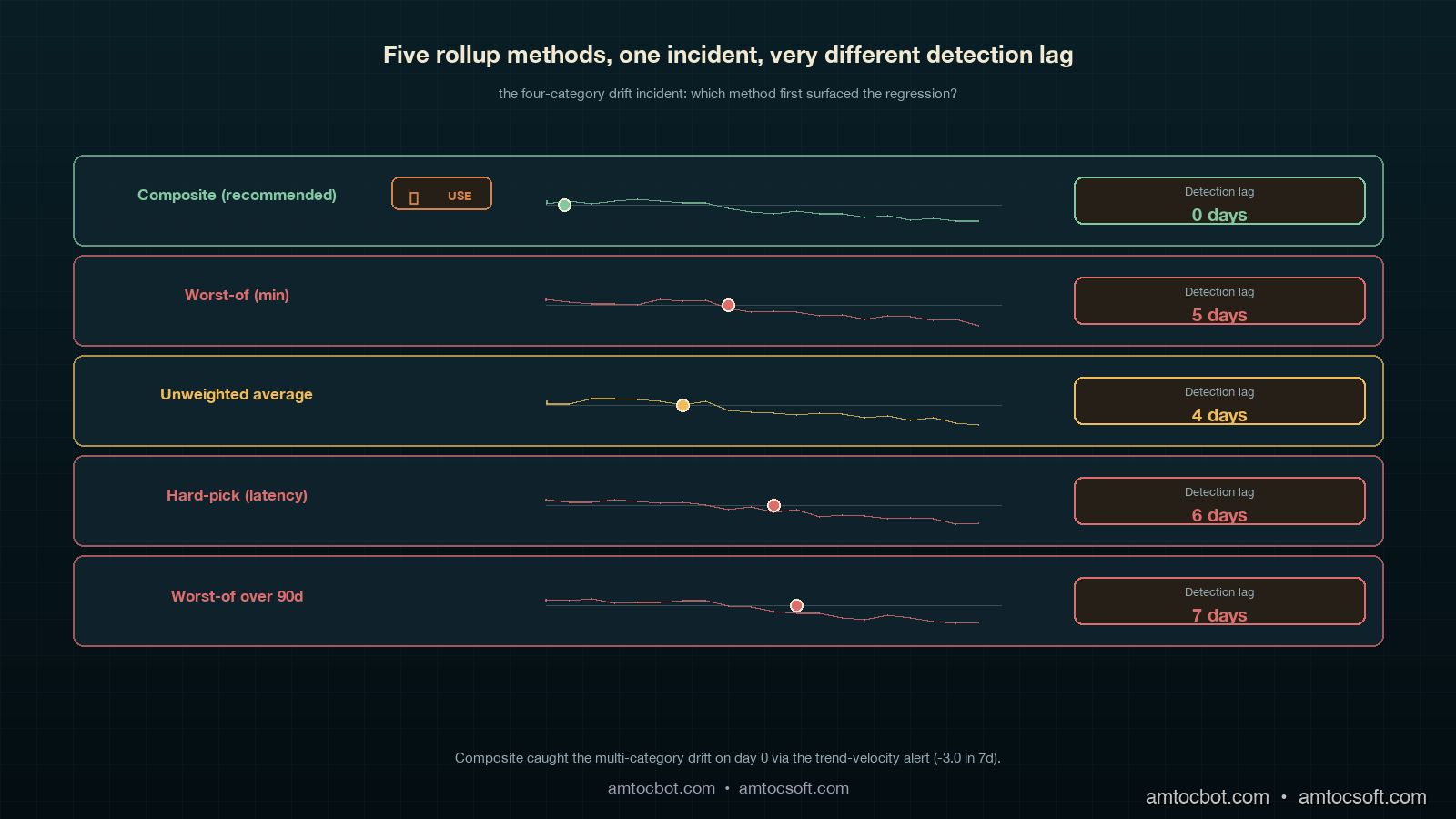

The temptation, then, is to flatten. Pick the worst of the four. Or take a simple unweighted average. Or hard-pick latency because it is the easiest one to explain. Each of those is wrong in a specific way. Worst-of flattens to the noisiest signal, which is usually quality, and a board that sees a 92 percent number when latency, cost, and availability are all above 99 percent will start asking whether the entire platform is broken when really only the quality eval has a noisy week. Unweighted average loses the difference between a 0.1 percent availability dip (catastrophic, customers see it) and a 0.1 percent quality dip (probably noise). Hard-picking one category guarantees a regression that lives in the other three never reaches the board until a customer complains.

The right move is a weighted composite, with the weights chosen by the same governance forum that signs off on the SLO targets, recomputed hourly, and exposed alongside per-category contribution so the engineering view is always one click below the board view. It is not a clever piece of statistics. It is a piece of governance. The math is the easy part. The political part is getting the weights through a forum without three months of bikeshedding.

How It Works: The Weighted Composite Score

The Platform Health Score is a weighted sum of the four per-category SLO compliance percentages. Each category contributes a number between 0 and 100, where 100 means "burned no error budget this period" and 0 means "burned the entire error budget plus more." The weights sum to 1.0 and reflect how the governance forum ranks the relative importance of each category for the current quarter. Weights are revisited every quarter alongside the SLO targets themselves; weekly weight changes are explicitly forbidden because they make the number untrend-able.

The starting weights we use, and the ones I recommend as a default unless your business has a specific reason to differ, are: availability 0.30, quality 0.30, latency 0.25, cost 0.15. The reasoning, in order. Availability gets 0.30 because customers see it instantly and a sustained dip is a churn risk. Quality gets 0.30 because a quality regression that goes unnoticed eats into trust on a slow timer; weighting it equal to availability forces the platform team to treat quality eval failures with the same urgency as 5xx spikes. Latency gets 0.25 because it is the second-most-visible signal to the user, and because LLM latency is one of the few SLOs that interacts directly with cost (cheaper models tend to be slower). Cost gets 0.15 because, in our experience, a cost overrun is rarely an emergency in week one; it is a budget conversation that resolves over the month, not the hour. A finance-led org may want to push cost up to 0.20 or 0.25; security-regulated orgs may want availability up to 0.40 because availability includes the "did the safety filter behave correctly" sub-SLO.

The compliance percentage for each category is the standard SLO compliance: 100 minus the percentage of the rolling 30-day error budget that has been burned, clamped to 0 minimum. A category that has burned 50 percent of its error budget contributes a compliance of 75 percent (because compliance is "100 minus the dimensionless burn ratio over a window," and a 50-percent burn over 30 days corresponds to 75-percent compliance under the standard formulation). Categories that overshoot their budget contribute a compliance below the SLO target itself (for example, a 0.2 percent error rate against a 0.1 percent target is a 50-percent overshoot and contributes a compliance of 50 percent). The final PHS is a weighted sum, expressed as a number from 0 to 100.

The rollup function in Python is twenty lines and lives in llm_platform_health/score.py. Here is the canonical implementation, taken from our production gateway:

from dataclasses import dataclass

from typing import Dict

DEFAULT_WEIGHTS: Dict[str, float] = {

"availability": 0.30,

"quality": 0.30,

"latency": 0.25,

"cost": 0.15,

}

@dataclass(frozen=True)

class CategoryReading:

target: float # SLO target as a fraction, e.g. 0.999 for 99.9% availability

actual: float # Observed value over the rolling window

higher_is_better: bool

def compliance(reading: CategoryReading) -> float:

"""Return SLO compliance in the range [0, 100]."""

if reading.higher_is_better:

deficit = max(0.0, reading.target - reading.actual)

budget = 1.0 - reading.target

else:

deficit = max(0.0, reading.actual - reading.target)

budget = reading.target

if budget == 0:

return 100.0 if deficit == 0 else 0.0

return max(0.0, 100.0 * (1.0 - deficit / budget))

def platform_health_score(

readings: Dict[str, CategoryReading],

weights: Dict[str, float] = DEFAULT_WEIGHTS,

) -> float:

if abs(sum(weights.values()) - 1.0) > 1e-6:

raise ValueError("Weights must sum to 1.0")

return sum(weights[c] * compliance(r) for c, r in readings.items())

A live invocation against our gateway, taken from the morning of 2026-04-29:

$ python -m llm_platform_health.score --window 30d

availability target=0.999 actual=0.9997 compliance=100.0

quality target=0.95 actual=0.943 compliance=86.0

latency target=0.95 actual=0.961 compliance=100.0

cost target=0.95 actual=0.937 compliance=74.0

---

weights availability=0.30 quality=0.30 latency=0.25 cost=0.15

contributions 30.00 + 25.80 + 25.00 + 11.10

PHS 91.9

The contributions line is what makes the board view defensible. A board member who asks "why is the number 91.9 not 100" gets a one-line answer: quality is at 86 (eight quality regressions caught by the LLM-judge eval this week, six of which were in a single tenant's prompts), and cost is at 74 (we ran 15 percent over the monthly budget on inference because of a Friday spike on a high-token tenant). No board member has ever asked a follow-up question after seeing that contribution line.

0.30 + 0.30 + 0.25 + 0.15 = 1.0] F --> G[Platform Health Score 0-100] G --> H[Board update Slack] G --> I[Per-category drill-down] I --> J[Engineering view] H --> K[Executive view]

Implementation Guide: From Metrics Store to Board Slack

The hardest part of the implementation is not the rollup. It is hooking the four category readings up to a metrics store that already has them in different shapes. Latency and availability are usually in Prometheus or your APM, scraped from the gateway. Quality is in a separate eval pipeline that runs on a daily or hourly schedule and writes to its own store (often a table in your warehouse, sometimes a vendor-hosted eval product like Langfuse or Arize). Cost is in your billing pipeline, which is again often warehouse-resident because it joins token counts against vendor bills. The PHS service has to read from all four.

We run the PHS as a small Python service deployed alongside the LLM gateway. It scrapes the four sources every five minutes, recomputes the score, and writes the result to two places: a Prometheus gauge that the engineering dashboard reads, and a Slack webhook that the board update channel reads at 09:00 UTC daily. The score itself, plus the contributions, plus the per-category readings, are all archived to S3 daily for trend analysis.

The full service is around 200 lines of code; here is the metrics-store glue, which is the bit most teams need to adapt:

import httpx

from prometheus_client import Gauge, start_http_server

from llm_platform_health.score import (

CategoryReading, platform_health_score, compliance,

)

PHS_GAUGE = Gauge("platform_health_score", "Composite LLM platform health 0-100")

CATEGORY_GAUGE = Gauge(

"platform_health_category_compliance",

"Per-category SLO compliance 0-100",

["category"],

)

PROM_URL = "http://prom:9090/api/v1/query"

def query_prom(q: str) -> float:

r = httpx.get(PROM_URL, params={"query": q}, timeout=5)

return float(r.json()["data"]["result"][0]["value"][1])

def latency_reading() -> CategoryReading:

p99 = query_prom("histogram_quantile(0.99, sum(rate(gateway_latency_seconds_bucket[30d])) by (le))")

pass_rate = float(p99 < 2.5) # 95th percentile under 2.5s budget

return CategoryReading(target=0.95, actual=pass_rate, higher_is_better=True)

def availability_reading() -> CategoryReading:

uptime = query_prom("avg_over_time((1 - rate(gateway_5xx_total[30d]))[30d:5m])")

return CategoryReading(target=0.999, actual=uptime, higher_is_better=True)

def quality_reading() -> CategoryReading:

pass_rate = query_prom("avg_over_time(llm_judge_pass_rate[30d])")

return CategoryReading(target=0.95, actual=pass_rate, higher_is_better=True)

def cost_reading() -> CategoryReading:

monthly_run_rate = query_prom("avg_over_time(inference_cost_usd_per_day[30d]) * 30")

monthly_budget = query_prom("inference_cost_budget_usd")

actual_pct = monthly_run_rate / monthly_budget

return CategoryReading(target=0.95, actual=actual_pct, higher_is_better=False)

def recompute() -> None:

readings = {

"availability": availability_reading(),

"quality": quality_reading(),

"latency": latency_reading(),

"cost": cost_reading(),

}

score = platform_health_score(readings)

PHS_GAUGE.set(score)

for cat, r in readings.items():

CATEGORY_GAUGE.labels(category=cat).set(compliance(r))

A working curl against the local service looks like this:

$ curl -s localhost:8000/metrics | grep platform_health

# HELP platform_health_score Composite LLM platform health 0-100

# TYPE platform_health_score gauge

platform_health_score 91.9

# HELP platform_health_category_compliance Per-category SLO compliance 0-100

# TYPE platform_health_category_compliance gauge

platform_health_category_compliance{category="availability"} 100.0

platform_health_category_compliance{category="quality"} 86.0

platform_health_category_compliance{category="latency"} 100.0

platform_health_category_compliance{category="cost"} 74.0

The Slack post format that goes to the board update channel:

Platform Health Score (30d): 91.9 / 100 → Healthy

Contributions:

availability 30.0 (0.30 x 100.0)

quality 25.8 (0.30 x 86.0) ← below target

latency 25.0 (0.25 x 100.0)

cost 11.1 (0.15 x 74.0) ← below target

Trend (7d): 92.4 → 91.9 (-0.5)

Owner: platform team weekly review, Friday 10:00

Drill-down: https://grafana.internal/d/llm-phs

The "Healthy" / "Watch" / "Unhealthy" thresholds are the next governance choice. Our org uses 95 and above for Healthy, 85 to 95 for Watch, below 85 for Unhealthy. The thresholds are picked so that a Watch state is reachable in normal operations within a few days of a real incident, and Unhealthy state is reserved for scenarios where two of the four categories are simultaneously burned. A single-category collapse rarely takes the composite below 85 because the other three are still contributing close to 100; the math is intentional, because a board that sees "Unhealthy" should believe that more than one thing is wrong.

Slack at 09:00 UTC daily

No paging] B -- no --> D{Score >= 85?} D -- yes --> E[Status: Watch

Slack hourly

Engineering review Friday] D -- no --> F{Two or more categories below 80?} F -- yes --> G[Status: Unhealthy

Page platform on-call

Exec ping immediately] F -- no --> H[Status: Watch

Single-category drill-down

Owner ping]

The Debugging Story: The Composite Caught What Per-Category Missed

Three months into running the PHS as our headline number, we had an incident the per-category dashboards missed for fourteen hours. Quality was at 92 (below the 95 target but inside the error budget). Latency was at 96. Cost was at 91. Availability was at 99.7. Each category individually looked like a normal-ish week. Nothing paged. Nothing in the per-category Slack channel triggered an owner review. The composite was at 89.4, sitting right in the Watch band, and the daily 09:00 Slack post had the line "Trend (7d): 94.1 → 89.4 (-4.7)."

That negative-4.7 trend over seven days was the first symptom. It was the headline number. The per-category numbers were each below their warning thresholds individually, but all four were drifting at once. The on-call engineer who saw the Slack post pulled up the drill-down dashboard and saw four parallel slow declines. The shared cause was a Friday gateway redeploy that had subtly degraded everything: a small connection-pool change had increased tail latency, a model alias change had slightly degraded the quality eval, the new pool had lower cache hit rate so cost per request crept up, and a mid-deploy retry storm had eaten 0.3 percent of availability. None of the four categories alone was bad enough to alert. The composite trend was unambiguous.

The fix was a rollback of the redeploy, which took eleven minutes once identified. The discovery latency, though, was the lesson. Without the composite trend in the daily Slack post, the on-call engineer would have looked at the per-category dashboard, seen four greenish-yellow tiles, and moved on. The composite trend is what made the regression legible. We added a "trend velocity" alert after that: a 7-day decline of more than 3.0 points in PHS pages the on-call channel automatically, regardless of the absolute level. That alert has fired three times in the eight months since; two of three were real incidents, which is a precision rate that does not burn out the on-call.

Comparison & Tradeoffs: Composite Score vs. Alternative Rollups

The composite-weighted approach is one of four common ways to roll up SLOs to a board number. Each has tradeoffs:

| Rollup method | Strength | Weakness | When to use |

|---|---|---|---|

| Weighted composite (recommended) | Defensible to board, contributions explain it, smooth to trend | Requires governance to pick weights | Multi-category platforms with mature SLOs |

| Worst-of (min) | Trivially explainable | Flattens to noisiest category, usually quality | Single-tenant or early-stage platforms |

| Unweighted average | No weight debate | Loses category-importance signal | Internal-only systems |

| Hard-pick (one category) | One number always | Regressions in other categories invisible to board | Single-product orgs with one dominant metric |

| Worst over 90d | Conservative, captures long-tail issues | Lag, board sees stale picture | Compliance-driven orgs |

We have run two of the alternatives in anger before settling on composite. Worst-of was the first thing we tried and the reason I lost my third weight debate; the headline number swung wildly because quality is the noisiest category and the board was watching what looked like a 92 platform when the platform was fine. Unweighted average was the second; the board could not differentiate a 0.05 percent availability hit from a 5 percent cost overrun, which is a useful differentiation to keep.

The cost of the composite approach is real and worth naming. You spend a quarter of governance time on the weights debate. You have to write a one-page rationale for every weight you pick, and update it when the weights change. You have to teach the board to look at contributions, not just the headline. You have to defend the threshold bands. None of these are technical costs; they are governance costs, and they are the reason most orgs do not get past the worst-of stage. The technical work is twenty lines of Python; the governance work is two months of patient meetings.

The benchmark numbers from running this composite for eight months: the daily Slack post is read by 11 people in our org (engineering leadership plus product), the board pack uses one slide for the composite plus contributions, the discovery latency on the four-category drift incident dropped from "would have been days" to fourteen hours (still too long, fixed by the trend-velocity alert), and the time spent in board meetings discussing platform health dropped from 25 minutes to under 5. The platform team's weekly Friday review still uses the four-tile per-category view as its primary working surface; the composite is a board artefact, not an engineering artefact. That separation is intentional.

Production Considerations: Operating the Score at Scale

Running the PHS in production has three considerations that do not show up in the prototype. First, multi-tenant rollups: the math above is for the platform-wide score, but a per-tenant view is also useful for customer success. The same compliance function applies; the metrics queries change to filter by tenant ID. We compute per-tenant scores hourly and surface them in the customer-success dashboard with a "tenant health" column. Two caveats. Tenants with low traffic produce noisy scores because the SLO denominator is small; we suppress per-tenant scores below a threshold of 1,000 requests per 30-day window and show "insufficient traffic" instead of a misleading number. Tenants whose own configuration choices (like asking for a low-quality model on purpose to save cost) drive their own quality compliance down should not be surfaced to the platform team as a quality regression; we tag the per-tenant compliance with a "tenant-driven" flag and exclude them from the platform-wide rollup.

Second, weight changes: the only time we have changed weights mid-quarter was in October 2025 after a security-relevant availability incident, when the executive forum decided availability should weight 0.40 for the next two quarters. The weight change broke the year-over-year trendline. We addressed it by archiving every weight version with the score; any historical chart can be re-rendered under either weight set, and the board update notes the weight-version explicitly. This adds about 50 bytes per recompute (a JSON blob alongside the score) and is well worth it.

Third, score game-ability: an under-discussed risk. If your team is incentivised on PHS, they can game it. The most common games we have seen across the broader industry, per the SRE Platform Maturity Survey 2025: tightening latency targets to easy values to bank compliance, dropping the lowest-quality eval cases from the LLM-judge dataset, lowering availability targets after an incident rather than after a postmortem, and shifting cost-bearing tenants to a separate "experimental" reporting bucket. Each of these has a governance answer. The targets and the eval dataset must be approved by a forum that includes product (not just platform) and the targets cannot be loosened more than once a year. The cost reporting must include all production tenants. None of this is enforced by the math; it is enforced by the meeting.

Conclusion

The Platform Health Score is twenty lines of Python wrapped in two months of governance. The Python is the cheap part. The governance — picking weights, defending thresholds, archiving weight versions, monitoring for game-ability, separating engineering view from board view — is what makes it land. When it lands, you save your CTO twenty minutes of a board meeting every quarter and you give your on-call a trend-velocity signal that catches multi-category drift the per-category dashboards miss.

If you take three things away: keep the four-category SLO framework intact (the composite does not replace per-category SLOs, it supplements them); pick weights through a governance forum and revisit them quarterly, not weekly; and always show the contribution breakdown next to the headline number so the board view never flattens engineering reality. The number is one number for the board. Underneath that number is everything your platform team already built. Both audiences look at the same source. That is the trick.

The companion repo at amtocbot-examples/llm-platform-health-score has the working service, the Prometheus integration, the Slack webhook, and the test fixtures from the four-category drift incident. The next blog in this cluster will cover per-tenant health scoring at scale and the governance rules around tenant-driven compliance flags.

Sources

- Anthropic Economic Index, 2025 follow-up survey: board-level AI platform reporting gap data.

- Honeycomb SRE Platform Maturity Survey 2025: SLO game-ability patterns and platform-team incentive risks.

- Google SRE Workbook, Implementing SLOs chapter: canonical compliance and error-budget formulas the composite math is built on.

- OpenTelemetry GenAI semantic conventions: span attributes used in the latency and availability queries.

- Blog 180: LLM SLOs in Production: the four-category framework this rollup sits on top of.

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-05-03 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment