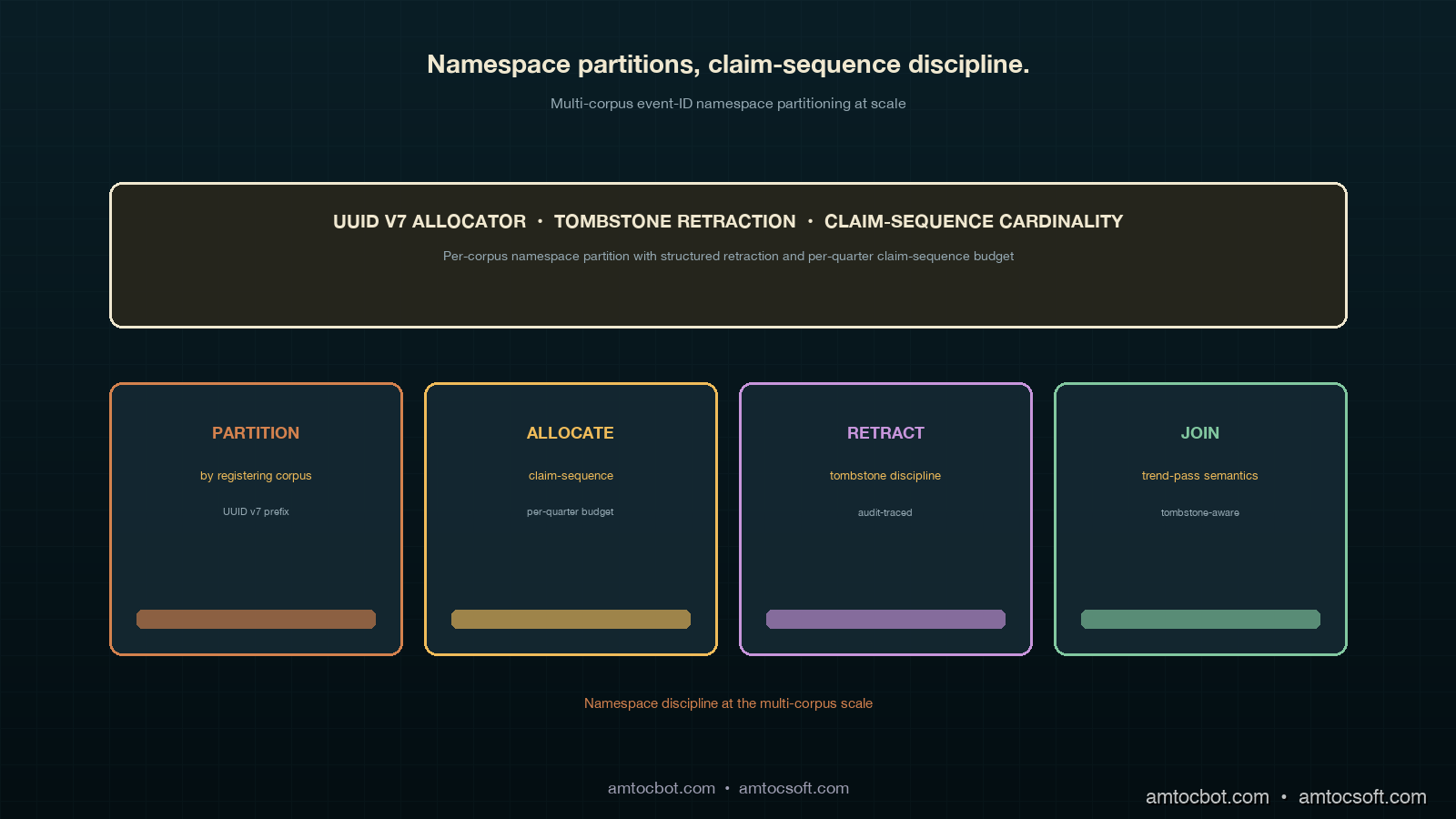

Multi-Corpus Event-ID Namespace Partitioning Discipline: UUID v7 Allocator Partitioning by Registering Corpus, Tombstone Retraction Policy and Trend-Pass Join Semantics, and Per-Corpus Claim-Sequence Cardinality at Scale

Introduction

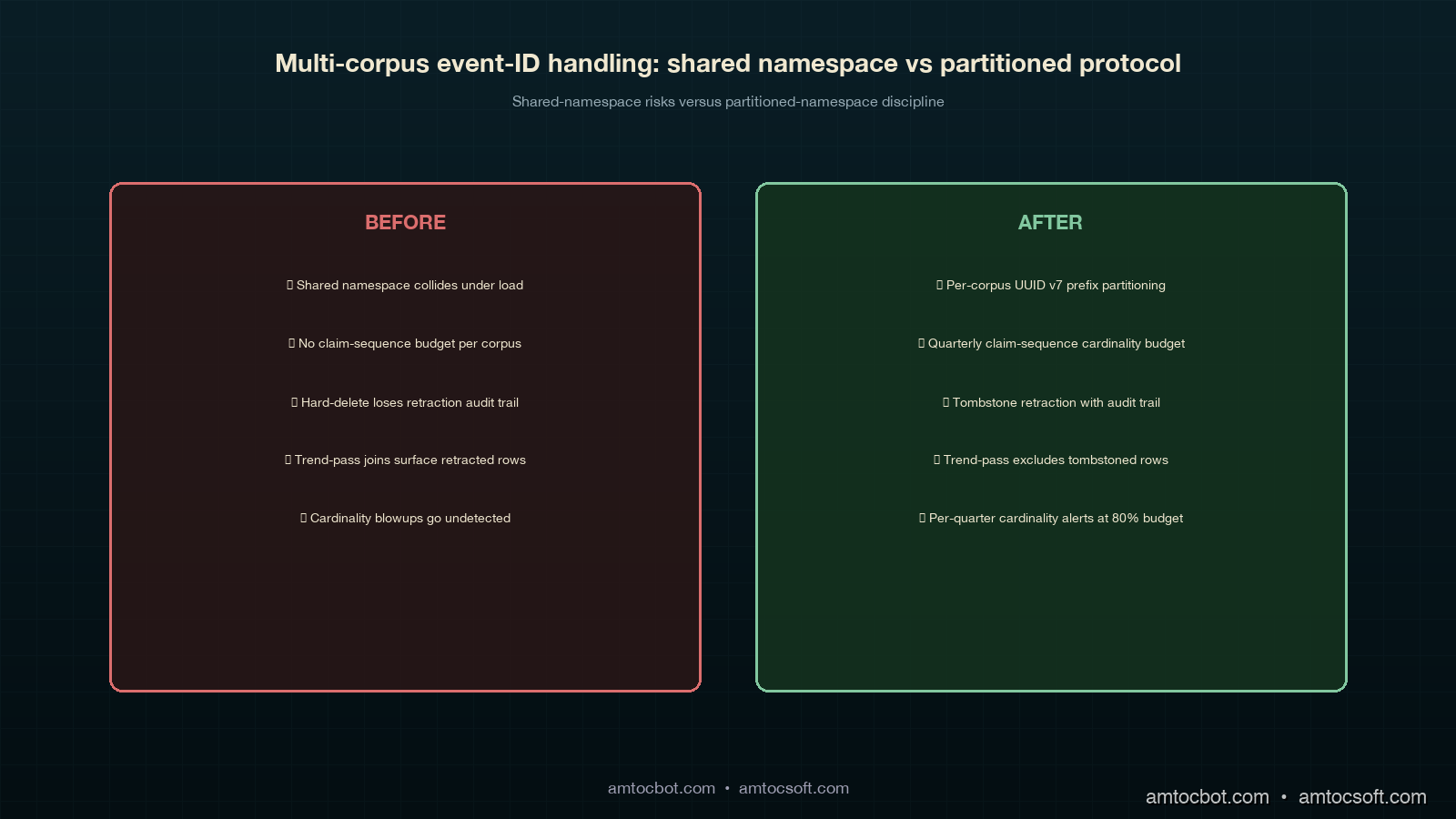

The first time the cross-corpus event-id allocator ran out of headroom on a single corpus's per-quarter claim-sequence counter was a Tuesday afternoon in 2025-Q4, six weeks after the protocol from the previous post first went live across four corpora. The customer corpus's on-call had spent the morning replaying a backlog of operational events that had accumulated during a long Postgres failover on the corpus-local manifest ledger, and the replay had registered roughly forty thousand events through the shared allocator inside a four-hour window. The allocator had been sized for a steady-state registration rate of about five hundred events per quarter per corpus, with a ten-times burst headroom built in. The replay punched through the burst headroom inside the first hour and then continued to register against the allocator at a rate the allocator's per-corpus claim-sequence counter had never been asked to cope with. The allocator did not crash; it kept handing out ids. But the ids it handed out for the second half of the replay were ids the trend-pass query primitives had specifically been told would never appear in the customer corpus's quarter-Q4 namespace partition, because the partition was implicitly bounded by a claim-sequence range the allocator's invariants had been silently relying on.

The bug surfaced two days later when the trend-layer reconciliation pass for 2025-Q4 produced a cadence-drift slope that disagreed with the customer corpus's local manifest-ledger view of the same quarter. The disagreement was not a semantic collision (the protocol from the previous post had cleanly canonicalised the replayed event-ids); it was a namespace-partition violation. The allocator had handed out ids inside the customer corpus's namespace partition that overflowed the partition's implicit upper bound, and the trend-pass join between the manifest ledger and the trend-layer archive produced a row count that did not match either side. The forensic trace took three days. The resolution took six weeks of design work. The output of that design work is the multi-corpus event-id namespace partitioning discipline I want to walk through in this post.

The headline rule is: every corpus owns a named namespace partition inside the shared event-id allocator's UUID v7 space, the partition is sized for the corpus's expected per-quarter cardinality plus a hundred-times burst headroom, and the per-corpus claim-sequence counter is reset at quarter boundaries with a tombstone retraction policy that is observable through the trend-pass query primitives. The implementation has three moving parts. The first is the UUID v7 allocator partitioning scheme, which embeds a corpus shard prefix into the UUID v7 layout so that the corpus that registered an event is recoverable from the event-id alone without joining against the allocator's metadata table. The second is the tombstone retraction policy, which specifies how a retracted event-id continues to occupy its slot in the partition for a four-year retention window, and how the trend-pass join semantics handle the retracted rows during multi-quarter rollups. The third is the per-corpus claim-sequence cardinality budget, which sizes the partition's slot count for the largest corpus the organisation expects to onboard inside the partition's lifetime and provides an audit signal when a corpus is approaching the cardinality ceiling. These three pieces are the operational discipline under the event-id assignment protocol the previous post described, and they are what makes the protocol survive the move from a four-corpus organisation to a sixteen-or-sixty-corpus organisation without requiring a wholesale re-bake of the allocator.

The UUID v7 Allocator Partitioning Scheme

The shared allocator from the previous post hands out UUID v7 ids tagged with a claiming-corpus column that records which corpus minted the id. The claiming-corpus column is the natural partition key, and the obvious implementation is to leave the UUID v7 ids unmodified and rely on the column to identify the partition. The obvious implementation is also wrong at scale, because the trend-pass join semantics need to be able to recover the partition from the event-id alone without reading the allocator's metadata table on every join, and the allocator's metadata table grows by a row per registered event across a four-year retention window. At a hundred-corpus scale with a thousand events per corpus per quarter, sixteen quarters of retention put the metadata table at 1.6 million rows; at a sixty-corpus scale it is roughly one million. The metadata table joins are not free at those sizes, and they are not friendly to the trend-pass query primitives that have to scan multiple quarter-Q archives in parallel.

The right implementation is to embed a corpus shard prefix into the UUID v7 layout. UUID v7 is defined by RFC 9562 with a forty-eight-bit Unix-millisecond timestamp prefix followed by version-and-variant bits and seventy-four bits of randomness (twelve bits between the version and variant fields, sixty-two bits after the variant). The randomness field is large enough that we can repurpose its first sixteen bits as a corpus shard prefix without materially weakening the collision resistance the random bits provide; that resistance is probabilistic, not a cryptographic guarantee. Sixteen bits gives 65,536 corpus partitions, which is several orders of magnitude beyond any organisation's plausible corpus headcount, and the remaining fifty-eight bits of randomness keep the birthday-paradox collision probability negligible at the registration rate the allocator handles. The corpus shard prefix is allocated at corpus onboarding time from a small registry the platform team owns; the registry maps each corpus's stable name (the same name that appears in the allocator's claiming-corpus column) to a sixteen-bit shard prefix that is fixed for the lifetime of the corpus.
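A minimal Python sketch of the injection step, assuming the sixteen shard bits occupy the first sixteen random bits of the RFC 9562 layout: the twelve-bit field after the version nibble, then the first four bits after the variant. The function name `mint_event_id` and the `now_ms` parameter are illustrative, not the allocator's actual interface.

```python
import os
import time
import uuid
from typing import Optional

SHARD_BITS = 16  # width of the corpus shard prefix


def mint_event_id(shard_prefix: int, now_ms: Optional[int] = None) -> uuid.UUID:
    """Build a UUID v7-shaped id whose first 16 random bits carry the shard prefix."""
    if not 0 <= shard_prefix < (1 << SHARD_BITS):
        raise ValueError("shard prefix must fit in 16 bits")
    if now_ms is None:
        now_ms = time.time_ns() // 1_000_000
    ts = now_ms & ((1 << 48) - 1)       # 48-bit Unix-millisecond timestamp
    shard_high = shard_prefix >> 4      # top 12 shard bits fill the rand_a field
    shard_low = shard_prefix & 0xF      # low 4 shard bits lead the rand_b field
    # Remaining 58 bits stay random (assumption: OS entropy is acceptable here).
    tail = int.from_bytes(os.urandom(8), "big") & ((1 << 58) - 1)
    value = (
        (ts << 80)             # bits 127..80: timestamp
        | (0x7 << 76)          # bits 79..76: version 7
        | (shard_high << 64)   # bits 75..64: rand_a, repurposed
        | (0b10 << 62)         # bits 63..62: RFC 9562 variant
        | (shard_low << 58)    # bits 61..58: first 4 bits of rand_b, repurposed
        | tail                 # bits 57..0: random
    )
    return uuid.UUID(int=value)
```

The id still parses as a version 7, RFC-variant UUID, so off-the-shelf tooling that inspects the version and variant fields keeps working; only the interpretation of the sixteen repurposed bits is private to the allocator.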

The trend-pass query primitives recover the partition from the event-id by extracting the sixteen shard bits from the randomness field (the twelve bits that follow the version nibble, then the first four bits that follow the variant bits), skipping the version-and-variant bits, and looking up the prefix in a small in-memory cache of the shard registry. The cache is populated at trend-pass startup and refreshed at quarter boundaries; in steady state, every event-id can be partitioned without a database round trip, which is the property the multi-quarter rollup queries depend on for their performance budget. One caveat on the Postgres index on event-id: because the forty-eight-bit timestamp leads the sort order, the b-tree clusters ids by registration time first and by shard prefix only within a single millisecond. A trend-pass query that scans a single corpus's partition for a quarter therefore walks the quarter's contiguous time range of the index and filters on the shard bits as it goes; it avoids the metadata-table join, but it does not get a contiguous per-corpus range from this index alone.
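A short Python sketch of the extraction and cache lookup, under the assumption that the prefix sits in the first sixteen random bits of the layout (twelve bits after the version nibble, four bits after the variant). `shard_prefix_of` and `ShardMap` are hypothetical names for illustration.

```python
import uuid
from typing import Dict, Iterable, Optional, Tuple


def shard_prefix_of(event_id: uuid.UUID) -> int:
    """Recover the 16-bit shard prefix, skipping the version and variant bits."""
    n = event_id.int
    high12 = (n >> 64) & 0xFFF   # bits 75..64, just after the version nibble
    low4 = (n >> 58) & 0xF       # bits 61..58, just after the variant bits
    return (high12 << 4) | low4


class ShardMap:
    """In-memory copy of the shard registry, loaded at startup or a quarter boundary."""

    def __init__(self, registry_rows: Iterable[Tuple[str, int]]):
        # registry_rows: (corpus_stable_name, shard_prefix) pairs
        self._by_prefix: Dict[int, str] = {p: name for name, p in registry_rows}

    def corpus_for(self, event_id: uuid.UUID) -> Optional[str]:
        """Return the owning corpus, or None for a prefix the cache does not know."""
        return self._by_prefix.get(shard_prefix_of(event_id))
```

Returning `None` for an unknown prefix, rather than raising, leaves the caller free to decide how to treat ids minted by a corpus that was onboarded after the cache was last refreshed.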

The shard registry's onboarding flow is worth describing in detail because it is the operation that fails most often when a new corpus is added to the organisation. The registry assigns prefixes from a small monotonic counter at corpus-onboarding time; each prefix is assigned exactly once and is never reused, even after a corpus is decommissioned (the partition's tombstone retention window outlives the corpus itself). The onboarding flow runs through a four-step checklist: (1) the platform team adds the corpus's stable name to the registry's corpus-stable-name column, (2) the registry's monotonic counter increments and assigns the next prefix to the new corpus, (3) the allocator's metadata table is migrated to recognise the new prefix as a valid claiming-corpus value, and (4) the trend-pass primitives' shard cache is invalidated and refreshed at the next quarter boundary so the primitives recognise the new partition. The fourth step is the one that catches new platform engineers; the cache is refreshed only at quarter boundaries (not at registry-write time) because the trend-pass primitives' performance budget assumes a stable shard map across each quarter's archive. A corpus onboarded mid-quarter does not appear in the trend-pass output until the following quarter's pass runs, which is a deliberate property that prevents a mid-quarter onboarding from invalidating the partial-quarter trend slopes that the engineering manager's trend-layer review depends on.
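The no-reuse rule and the quarter-boundary cache refresh can be sketched in a few lines of Python. This is an in-memory stand-in for the registry table, not the real onboarding tooling; the class and method names are assumptions, and `billing` is a made-up corpus name.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional

MAX_PREFIX = 0xFFFF  # 16-bit prefix space


@dataclass
class ShardRegistry:
    next_prefix: int = 0                                   # monotonic, never decremented
    prefixes: Dict[str, int] = field(default_factory=dict)
    decommissioned_at: Dict[str, datetime] = field(default_factory=dict)

    def onboard(self, corpus_stable_name: str) -> int:
        """Steps 1 and 2 of the checklist: record the name, assign the next prefix."""
        if corpus_stable_name in self.prefixes:
            raise ValueError(f"{corpus_stable_name} is already registered")
        if self.next_prefix > MAX_PREFIX:
            raise RuntimeError("16-bit shard prefix space exhausted")
        prefix = self.next_prefix
        self.next_prefix += 1
        self.prefixes[corpus_stable_name] = prefix
        return prefix

    def decommission(self, corpus_stable_name: str, when: Optional[datetime] = None) -> None:
        """Mark the corpus as wound down; its prefix stays assigned forever."""
        if corpus_stable_name not in self.prefixes:
            raise KeyError(corpus_stable_name)
        self.decommissioned_at[corpus_stable_name] = when or datetime.now(timezone.utc)

    def snapshot(self) -> Dict[str, int]:
        """Copy taken at a quarter boundary; the trend pass reads only this copy."""
        return dict(self.prefixes)


registry = ShardRegistry()
registry.onboard("customer")
registry.onboard("internal-tools")
quarter_cache = registry.snapshot()   # refreshed at the quarter boundary
registry.onboard("billing")           # mid-quarter onboarding
# "billing" is absent from quarter_cache until the next snapshot is taken.
```

Because the trend pass reads the snapshot and not the live registry, a mid-quarter `onboard` call cannot change the shard map under a running quarter.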

```sql
-- shard_registry.sql: the corpus shard prefix registry
CREATE TABLE corpus_shard_registry (
    corpus_stable_name TEXT PRIMARY KEY,
    -- 16-bit value, 0..65535. Postgres SMALLINT is signed (-32768..32767)
    -- and cannot hold the full range, so the column is a constrained INTEGER.
    shard_prefix       INTEGER NOT NULL UNIQUE
                       CHECK (shard_prefix BETWEEN 0 AND 65535),
    onboarded_at       TIMESTAMPTZ NOT NULL DEFAULT now(),
    decommissioned_at  TIMESTAMPTZ,
    notes              TEXT
);

-- event_id sorts by its leading 48-bit timestamp, so a quarter's rows
-- form a contiguous index range; the shard prefix bits inside each id
-- let the trend-pass query primitives attribute rows to a corpus during
-- that range scan without joining against the metadata table.
CREATE INDEX event_id_partition_idx
    ON manifest_ledger_events (event_id)
    WITH (fillfactor = 80);
```

The fill-factor on the index is set to eighty rather than the Postgres b-tree default of ninety. The UUID v7 ids are inserted in approximate timestamp order, so new entries land on the rightmost leaf pages of the index and older pages are rarely revisited by fresh registrations. Leaving twenty percent of each leaf page free gives room for the index entries that arrive after a quarter has closed: late registrations from replays, and the new row versions written by quarter-boundary tombstone-retraction updates when those updates cannot be done in place. Tombstone retraction is the second piece of the discipline.

```mermaid
flowchart LR
    subgraph Allocator
        A[corpus<br/>shard registry] --> B[UUID v7<br/>generator]
        B --> C[shard prefix<br/>injection]
        C --> D[event-id<br/>output]
    end
    D --> E[manifest ledger<br/>insert]
    D --> F[trend-pass<br/>primitive cache]
    E --> G[(Postgres<br/>partitioned index)]
    F --> H[in-memory<br/>shard map]
    G --> I[trend-pass<br/>partition scan]
    H --> I
    I --> J[multi-quarter<br/>rollup output]
```

The Tombstone Retraction Policy

The tombstone retraction policy specifies what happens to an event-id when the registering corpus retracts the event after registration. Retraction is the rare-but-load-bearing operation that surfaces in three real cases: a corpus's on-call retracts an event because the underlying operational event was a mis-classification (the on-call thought the event was a P1 outage but post-incident review reclassified it as a P3 degradation), a corpus's facilitator retracts an event because the event was registered against the wrong corpus (the on-call paged the customer corpus when the event actually belonged to the internal-tools corpus), or the cross-corpus dispute-resolution flow from the previous post canonicalises one of two competing claims and tombstones the loser. In all three cases, the retracted event-id continues to occupy its slot in the corpus's namespace partition for the four-year retention window, but it is marked with a tombstoned reconciliation-state value and excluded from most trend-pass query primitives' default output.

The policy's load-bearing rule is append-only with state markers, which is the same rule the manifest ledger itself follows. A retracted event-id is never deleted from the partition; the retraction is recorded as a state change on the existing row, with a separate tombstoned-at timestamp column and a tombstone-reason text column that capture when and why the retraction happened. The policy's primary justification is auditability: an external auditor walking the manifest-ledger trace path needs to see the full event history including the retracted events, because the retraction itself is part of the audit story (a P1-to-P3 reclassification is a meaningful piece of operational discipline that the audit has to capture, and deleting the row would erase the discipline). The policy's secondary justification is trend-pass query stability: deleting a row would change the row count of any quarter-Q archive scan that ran before the retraction, which would invalidate the trend-pass output the engineering manager's review used.

The trend-pass join semantics that depend on the policy are the multi-quarter rollup queries that join the manifest ledger against the trend-layer archive. These queries have to decide, for each tombstoned event-id, whether to include it in the rollup output. The default is to exclude tombstoned rows from the cadence-drift, taxonomy, ownership, and consultation-fatigue passes, but to include them in the audit-traceability pass. The split matters because the four primary passes are about analytical signals that should be insulated from retraction churn (a retracted event is operationally equivalent to an event that never happened, for the purpose of producing slopes), while the audit-traceability pass is about producing an audit-friendly trace path that needs to capture the full retraction history.
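As a rough illustration of the append-only rule and the per-pass split, here is a Python sketch. The row shape and the names `retract` and `rows_for_pass` are assumptions for the example; the pass names come from the text above.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import Iterable, List, Optional

PRIMARY_PASSES = {"cadence-drift", "taxonomy", "ownership", "consultation-fatigue"}
AUDIT_PASS = "audit-traceability"


@dataclass(frozen=True)
class LedgerRow:
    event_id: str
    tombstoned_at: Optional[datetime] = None
    tombstone_reason: Optional[str] = None


def retract(row: LedgerRow, reason: str, when: Optional[datetime] = None) -> LedgerRow:
    """Retraction is a state change on the existing row, never a delete."""
    return replace(row, tombstoned_at=when or datetime.now(timezone.utc),
                   tombstone_reason=reason)


def rows_for_pass(pass_name: str, rows: Iterable[LedgerRow]) -> List[LedgerRow]:
    """Primary passes skip tombstoned rows; the audit pass sees everything."""
    if pass_name == AUDIT_PASS:
        return list(rows)
    if pass_name in PRIMARY_PASSES:
        return [r for r in rows if r.tombstoned_at is None]
    raise ValueError(f"unknown pass: {pass_name}")
```

The row count seen by the audit-traceability pass never shrinks when an event is retracted, which is the stability property the policy is after.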

```mermaid
stateDiagram-v2
    [*] --> Registered: claim_event_id()
    Registered --> Tombstoned: retract_event_id()
    Registered --> Disputed: dispute() invoked
    Disputed --> Tombstoned: arbitrator chooses other claimant
    Disputed --> Registered: arbitrator confirms claim
    Tombstoned --> [*]: 4-year retention expires
    Tombstoned --> Reactivated: rare manual reactivation
    Reactivated --> Registered: re-emerges in trend-pass output
```

The reactivation path is rare but worth describing because it is the operation engineering managers ask about most often when first introduced to the policy. Reactivation happens when a retraction is itself retracted: the post-incident review that downgraded a P1 to a P3 is itself reviewed at the quarterly trend-layer meeting and the downgrade is reversed because the cross-incident pattern argues for the original P1 classification. The reactivation is a manual operation that requires a corpus facilitator's sign-off and writes a separate audit row capturing the reactivation rationale. The reactivated event re-emerges in the trend-pass output starting at the next quarter's pass; the prior quarter's pass output is not retroactively rewritten because the trend-pass output is meant to be a stable artefact of the quarter it ran for, and a retroactive rewrite would invalidate any downstream review that consumed the prior output. The trade-off is that the reactivated event shows up as a "new" row in the next quarter's pass even though its actual operational date is in a prior quarter, which the trend-pass primitive's effective-quarter column makes legible.
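The lifecycle, including the reactivation path, fits in a small transition table. The sketch below is one way to encode it in Python; the operation strings other than `retract` and `dispute` are labels I made up for the example, and the sign-off and audit-row steps are left out.

```python
from enum import Enum


class State(Enum):
    REGISTERED = "registered"
    DISPUTED = "disputed"
    TOMBSTONED = "tombstoned"
    REACTIVATED = "reactivated"
    EXPIRED = "expired"


# (current state, operation) -> next state
TRANSITIONS = {
    (State.REGISTERED, "retract"): State.TOMBSTONED,
    (State.REGISTERED, "dispute"): State.DISPUTED,
    (State.DISPUTED, "arbitrate_other_claimant"): State.TOMBSTONED,
    (State.DISPUTED, "arbitrate_confirm"): State.REGISTERED,
    (State.TOMBSTONED, "reactivate"): State.REACTIVATED,
    (State.TOMBSTONED, "retention_expired"): State.EXPIRED,
    (State.REACTIVATED, "next_quarter_pass"): State.REGISTERED,
}


def apply(state: State, operation: str) -> State:
    """Return the next state, rejecting any transition the table does not list."""
    try:
        return TRANSITIONS[(state, operation)]
    except KeyError:
        raise ValueError(f"{operation!r} is not allowed from {state.value}") from None
```

Keeping the table explicit makes it easy to see that reactivation is reachable only from the tombstoned state, and that a reactivated event rejoins the registered population only when the next quarter's pass runs.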

The query primitive that surfaces all of this is the trend_pass_with_tombstone_policy view, which the four primary passes read against. The view has the contract that the four passes consume: a stable column set, a tombstoned-flag column that the primary passes filter out by default, a reactivation-quarter column that flags reactivated rows, and a tombstone-reason column from which the audit-traceability pass summarises the most common retraction reasons.

```sql
-- trend_pass_with_tombstone_policy.sql: the view the four primary
-- passes read against. A view takes no parameters, so the exclusion
-- lives in the caller: the primary passes filter on tombstoned_flag,
-- and the audit-traceability pass omits that filter.
CREATE OR REPLACE VIEW trend_pass_with_tombstone_policy AS
SELECT
    e.event_id,
    e.claiming_corpus,
    e.registered_at,
    e.tombstoned_at,
    e.tombstone_reason,
    e.reconciliation_state,
    e.effective_quarter,
    (e.tombstoned_at IS NOT NULL) AS tombstoned_flag,
    -- set only when the reactivation landed in a later quarter
    -- than the original registration
    CASE
        WHEN e.reactivated_at IS NOT NULL
         AND date_trunc('quarter', e.reactivated_at)
             <> date_trunc('quarter', e.registered_at)
        THEN date_trunc('quarter', e.reactivated_at)
        ELSE NULL
    END AS reactivation_quarter
FROM manifest_ledger_events e
WHERE e.retention_expires_at > now();

-- the four primary passes apply the default exclusion;
-- the audit-traceability pass runs the same query without it.
SELECT * FROM trend_pass_with_tombstone_policy
WHERE tombstoned_flag = false  -- default exclusion
  AND effective_quarter = '2026-Q1';
```

Per-Corpus Claim-Sequence Cardinality at Scale

The per-corpus claim-sequence cardinality budget is the third piece of the discipline and is the piece that surfaces last when a corpus's registration rate grows beyond the partition's expected capacity. The budget has two parts: a per-quarter slot count that bounds how many event-ids the partition can absorb in a single quarter, and a cardinality-ceiling alarm that fires when the partition is approaching the slot count's upper bound.

The per-quarter slot count is sized for the largest corpus the organisation expects to onboard inside the partition's lifetime, with a hundred-times burst headroom over the steady-state expectation. For a four-corpus organisation with a typical corpus registering around five hundred events per quarter, the slot count is sized for fifty thousand events per quarter (the hundred-times burst over five hundred); for a larger organisation with corpora registering ten thousand events per quarter, the slot count is sized for a million events per quarter. The slot count is not the partition's hard upper bound (the UUID v7 corpus shard prefix's fifty-eight remaining bits of randomness give a vastly larger partition capacity), but it is the threshold at which the cardinality-ceiling alarm fires.

The cardinality-ceiling alarm is the operational signal that catches the failure mode I described in the introduction. The alarm fires when the partition's claim-sequence counter for the current quarter crosses fifty percent of the slot count, with a separate alarm at eighty percent that escalates to the platform team's pager. The fifty-percent alarm is informational (it produces a row in the operations dashboard, no pager); the eighty-percent alarm is actionable (it pages the platform on-call, who is expected to either authorise a slot-count increase for the current quarter or work with the corpus facilitator to throttle the registration rate). The alarms are produced by a small Postgres function that runs on a five-minute cron against the allocator's claim-sequence counter table; the function's output is exported to the operations Prometheus endpoint and consumed by the same dashboard that surfaces the cross-corpus propagation latency budget from the previous post.
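The sizing rule and the two thresholds reduce to a few lines of arithmetic. A Python sketch follows, assuming "crosses" means reaching or exceeding the threshold; the production check is a Postgres function, and the names here are illustrative.

```python
from enum import Enum


class Alarm(Enum):
    NONE = "none"
    INFORMATIONAL = "informational"   # dashboard row, no pager
    ACTIONABLE = "actionable"         # pages the platform on-call


def slot_count(steady_state_per_quarter: int, burst_multiplier: int = 100) -> int:
    """Per-quarter slot count: steady-state rate times the burst headroom."""
    return steady_state_per_quarter * burst_multiplier


def alarm_level(claimed: int, slots: int,
                info_percent: int = 50, page_percent: int = 80) -> Alarm:
    """Classify the current quarter's claim-sequence count against the slot count."""
    if slots <= 0:
        raise ValueError("slot count must be positive")
    # Integer comparison avoids floating-point edge cases at the thresholds.
    if claimed * 100 >= slots * page_percent:
        return Alarm.ACTIONABLE
    if claimed * 100 >= slots * info_percent:
        return Alarm.INFORMATIONAL
    return Alarm.NONE
```

With the four-corpus numbers from above, `slot_count(500)` is 50,000, so the informational alarm fires at 25,000 claims in a quarter and the pager fires at 40,000.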

The slot-count increase operation is a sensitive one because it changes the implicit upper bound the trend-pass query primitives have been relying on. The operation is allowed but requires three sign-offs (the platform team, the corpus facilitator, and the engineering manager who consumes the trend-layer output) and writes a separate audit row capturing the rationale and the quarter the increase takes effect. The increase takes effect at the next quarter boundary, not retroactively; this is the same constraint the corpus-onboarding flow enforces, for the same reason (mid-quarter changes invalidate the partial-quarter trend slopes the engineering manager's review depends on).

The cardinality-budget audit signal that I have come to use most often is the quarter-over-quarter cardinality growth rate per partition. The signal is the simple ratio of the current quarter's claim-sequence count to the prior quarter's count, computed at the end of each quarter and exported as a single number per corpus. A growth rate above two times signals that the corpus's registration rate is growing fast enough that the partition's slot-count budget needs to be revisited; a growth rate above five times signals that the corpus is undergoing a phase change in operational tempo that probably has a non-claim-sequence root cause (a major incident period, a tooling change that is producing duplicate registrations, a corpus reorganisation that is folding two prior corpora into one). The signal is mechanical to compute and surfaces failure modes early enough that the platform team has a quarter of lead time before the partition runs into the cardinality ceiling.
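For concreteness, a Python sketch of the growth-rate signal with the two cut-offs described above. The label strings and the handling of a corpus with no prior-quarter baseline are my assumptions.

```python
def growth_signal(current_quarter_count: int, prior_quarter_count: int) -> str:
    """Classify quarter-over-quarter claim-sequence growth for one partition."""
    if prior_quarter_count <= 0:
        return "no-baseline"          # newly onboarded corpus, nothing to compare
    ratio = current_quarter_count / prior_quarter_count
    if ratio > 5:
        return "phase-change"         # look for a non-claim-sequence root cause
    if ratio > 2:
        return "revisit-budget"       # slot-count budget needs another look
    return "steady"
```

A corpus that goes from 500 to 1,200 events lands in `revisit-budget`; one that goes from 500 to 3,000 lands in `phase-change`.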

```mermaid
flowchart TD
    A[claim_event_id<br/>called] --> B{partition<br/>claim-sequence<br/>counter}
    B --> C{50% threshold<br/>crossed?}
    C -->|No| D[register<br/>event-id]
    C -->|Yes| E[informational<br/>alarm fires]
    E --> D
    D --> F{80% threshold<br/>crossed?}
    F -->|No| G[continue<br/>registration]
    F -->|Yes| H[actionable<br/>alarm fires]
    H --> I[platform on-call<br/>paged]
    I --> J{slot-count<br/>increase<br/>authorised?}
    J -->|Yes| K[3-sign-off<br/>workflow]
    J -->|No| L[corpus<br/>throttle<br/>negotiation]
    K --> M[quarter-boundary<br/>increase takes effect]
    L --> N[corpus<br/>registration rate<br/>capped]
```

The partition-cardinality budget interacts with the tombstone retraction policy in a subtle way that is worth calling out. Tombstoned event-ids continue to occupy their claim-sequence slot for the four-year retention window, which means the partition's claim-sequence counter does not decrement when an event-id is tombstoned. The decision is deliberate (the slot is part of the audit trace; reusing a slot would change the meaning of any prior trend-pass output that referenced the slot), but it has the side effect that a partition with high tombstone churn approaches the cardinality ceiling faster than the retention-adjusted event count would suggest. The audit signal for this case is the tombstone-to-active ratio per partition, which the operations dashboard surfaces alongside the cardinality-growth-rate signal. A ratio above one (more tombstoned events than active events) signals either a corpus with an unusual classification-revision cadence or a corpus that is experiencing the dispute-resolution flow at high rates, both of which are operational signals worth investigating.
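A small Python sketch of that interaction: slots consumed count tombstoned and active events alike, and the ratio flags partitions dominated by retractions. Function names are illustrative, and the treatment of a partition with zero active events is an assumption.

```python
def slots_consumed(active: int, tombstoned: int) -> int:
    """Tombstoned ids keep their slot, so both populations count against the budget."""
    return active + tombstoned


def tombstone_to_active_ratio(tombstoned: int, active: int) -> float:
    """Ratio surfaced on the dashboard; infinite when nothing is active."""
    if active == 0:
        return float("inf") if tombstoned > 0 else 0.0
    return tombstoned / active


def worth_investigating(tombstoned: int, active: int) -> bool:
    """True when tombstoned events outnumber active ones."""
    return tombstone_to_active_ratio(tombstoned, active) > 1
```

A partition with 300 active and 400 tombstoned events has consumed 700 slots, more than twice what its active count alone would suggest, and it trips the ratio check.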

The Sixteen-Corpus Stress Test

The discipline I described has been load-tested at the sixteen-corpus scale by replaying the prior eighteen-month registration history of the four-corpus organisation against a synthetic sixteen-corpus expansion. The replay was a deliberate stress test of the partitioning scheme's scalability properties: the four real corpora were replicated four times each with synthetic registration timing offsets, and the resulting sixteen-corpus replay was run against a development copy of the allocator, the trend-pass primitives, and the operations dashboard.

The replay produced four useful failure-mode signals, each of which prompted a small refinement to the discipline. The first was that the trend-pass primitives' shard cache eviction policy needed to be tightened: the four-corpus deployment had a cache that was sized for a stable shard map across each quarter, but the sixteen-corpus replay produced enough cache churn during the synthetic onboarding sequence that the primitives' performance budget started to slip. The fix was a smaller cache with a tighter eviction policy and an explicit cache-warm step at trend-pass startup. The second was that the cardinality-ceiling alarm's fifty-percent threshold was too high for the sixteen-corpus deployment because the typical corpus's registration rate distribution had a longer tail and the alarm needed to fire earlier to give the platform team enough lead time. The fix was a quartile-based threshold (alarm at the seventy-fifth percentile of the registration-rate distribution rather than at fifty percent of the slot count) which is more responsive to the corpus-specific registration rate than the fixed-threshold version.

The third was that the tombstone retraction policy's reactivation path produced more reactivated events at the sixteen-corpus scale than the four-corpus deployment had ever seen, because the synthetic corpora's classification-revision cadences were not perfectly synchronised. The fix was an explicit reactivation-quarter rollup primitive that surfaces the reactivated events as a separate quarterly summary, which the engineering manager's trend-layer review can include in the audit-traceability pass without disrupting the four primary passes. The fourth was that the corpus shard registry's monotonic counter started running into a coordination-cost problem at the sixteen-corpus scale: the platform team's onboarding cadence was every two weeks, and the registry's serialised counter became the rate-limiting step in the onboarding flow. The fix was a small shard-prefix pre-allocation pool that pre-mints a hundred shard prefixes at quarter boundaries and serves them from a queue at corpus-onboarding time, which decouples the platform team's onboarding cadence from the registry's serialisation overhead.
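The pre-allocation pool from the fourth refinement might look like the following Python sketch. It is an in-memory illustration only; `PrefixPool`, its method names, and the choice to raise when the queue is empty are assumptions.

```python
from collections import deque
from typing import Deque

MAX_PREFIX = 0xFFFF  # 16-bit prefix space


class PrefixPool:
    """Pre-mints shard prefixes at quarter boundaries and serves them from a queue."""

    def __init__(self, batch_size: int = 100, start: int = 0):
        self._next = start            # monotonic counter, never reused or decremented
        self._batch_size = batch_size
        self._queue: Deque[int] = deque()

    def pre_mint(self) -> int:
        """Run at a quarter boundary; returns how many prefixes were added."""
        added = 0
        while added < self._batch_size and self._next <= MAX_PREFIX:
            self._queue.append(self._next)
            self._next += 1
            added += 1
        return added

    def take(self) -> int:
        """Called at corpus-onboarding time; does not touch the counter."""
        if not self._queue:
            raise LookupError("prefix pool is empty until the next pre-mint")
        return self._queue.popleft()
```

Onboarding only pops from the queue, so the serialised counter is touched once per quarter instead of once per corpus.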

The four refinements together produced a partitioning scheme that has held up under the sixteen-corpus load with a per-quarter trend-pass runtime of about ninety-four seconds (against the four-corpus baseline of about eighteen seconds), which is well inside the four-minute trend-pass performance budget the engineering manager's review depends on. That is roughly 5.2 times the runtime for four times the corpora, so the per-pass latency scales close to, though slightly worse than, linearly in the corpus count; near-linear scaling is the property the sixteen-corpus replay was specifically designed to validate.

Production Considerations: Cross-Region, Audit Granularity, and Corpus Decommissioning

Three production considerations are worth calling out for any team that is shipping this partitioning discipline against a live multi-corpus deployment.

The first is cross-region partition replication. The corpus shard registry is deployed in a single region with synchronous replication to the primary read replica and asynchronous replication to two cross-region replicas, the same deployment shape as the allocator from the previous post. The shard registry is small (one row per corpus ever onboarded, so tens of rows at the sixty-corpus scale) so the replication cost is negligible, but the cross-region replication does mean that a newly-onboarded corpus is not visible in the cross-region replicas for the asynchronous-replication lag window, which can be tens of seconds during normal operations and minutes during cross-region network events. The trend-pass primitives in the cross-region replicas handle the gap by treating any unrecognised shard prefix as a deferred-resolution row that is tagged in the trend-pass output and excluded from the four primary passes until the next quarter's pass runs against a fully-replicated shard registry. The deferred-resolution rows are typically zero-count in normal operations and bursty during cross-region network events.
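The deferred-resolution handling can be pictured as a simple split on the replica's known prefixes. A Python sketch, assuming each row already carries its extracted shard prefix; the function name and row shape are hypothetical.

```python
from typing import Iterable, List, Set, Tuple


def split_deferred(rows: Iterable[Tuple[str, int]],
                   known_prefixes: Set[int]) -> Tuple[List[str], List[str]]:
    """Separate rows the replica can attribute from rows it must defer.

    rows: (event_id, shard_prefix) pairs.
    Returns (resolved_event_ids, deferred_event_ids).
    """
    resolved: List[str] = []
    deferred: List[str] = []
    for event_id, prefix in rows:
        if prefix in known_prefixes:
            resolved.append(event_id)
        else:
            deferred.append(event_id)   # tagged, excluded from the primary passes
    return resolved, deferred
```

In normal operations the deferred list is empty; a non-empty list is itself a useful hint that the replica's registry copy is lagging.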

The second is audit granularity. The audit table from the previous post records every protocol operation; the partitioning discipline adds new operation types (shard-prefix-allocation, slot-count-increase, tombstone-retraction, event-reactivation) that have to be added to the audit table's allowed-operations list. The audit-row volume grows by about ten thousand rows per quarter at the sixteen-corpus scale; the four-year retention budget is comfortable at that volume but would need re-sizing at the hundred-corpus-plus scale. The audit-row schema is otherwise unchanged from the previous post; the new operations are recorded alongside the existing claim, reference, dispute, arbitrate, and tombstone operations.

The third is corpus decommissioning. When a corpus is wound down, its shard prefix is not reused; the registry's decommissioned-at column is filled in, the partition's existing event-ids continue to occupy their slots for the four-year retention window, and the trend-pass primitives mark the partition as decommissioned and exclude it from the four primary passes by default. The decommissioned partition's audit-traceability pass output is still available for the retention window. The shard-prefix pool's monotonic counter does not decrement at decommissioning, so a sixteen-corpus organisation that decommissions four corpora and onboards four new ones occupies twenty shard prefixes rather than sixteen; this is the deliberate cost of the no-reuse rule, and at the sixty-five-thousand-prefix capacity of the sixteen-bit shard registry the cost is comfortably absorbable for any plausible organisation lifetime.

Conclusion

The multi-corpus event-id namespace partitioning discipline is the operational layer that makes the cross-corpus event-id assignment protocol scale beyond a four-corpus organisation. The UUID v7 allocator partitioning scheme embeds a sixteen-bit corpus shard prefix into the standard's randomness field so that the trend-pass query primitives can recover the partition from the event-id alone, without joining against the allocator's metadata table. The tombstone retraction policy keeps retracted event-ids in the partition for the four-year retention window with a tombstoned reconciliation-state marker, and the trend-pass join semantics exclude the tombstoned rows from the four primary passes by default while including them in the audit-traceability pass. The per-corpus claim-sequence cardinality budget sizes each partition for the largest corpus the organisation expects to onboard with a hundred-times burst headroom, and the cardinality-ceiling alarm gives the platform team a quarter of lead time before the partition runs into the slot-count limit. The four refinements that came out of the sixteen-corpus stress test produced a scheme that holds up at sixteen-corpus scale with a per-quarter trend-pass runtime of about ninety-four seconds, well inside the four-minute trend-pass performance budget the engineering manager's review depends on.

The next post in this cluster will walk through the manifest-ledger taxonomy versioning protocol the previous post's conclusion teased, including the per-quarter taxonomy snapshot the trend-layer archive joins against, the taxonomy-drift detection rules that flag a category whose share moves significantly between quarters, the taxonomy-merger and taxonomy-split operations the corpus facilitator can apply at quarter boundaries, and the audit-traceability story for taxonomy migrations. The taxonomy versioning protocol is the natural follow-on from the namespace partitioning discipline because the partition's claim-sequence counter is implicitly per-taxonomy as well as per-corpus, and the taxonomy-versioning rules interact with the partition's cardinality budget in ways that are worth working through carefully.

The companion repo's adlc-eval-contracts/manifest-ledger/ directory has been updated with the corpus shard registry's Postgres DDL, the UUID v7 allocator's shard-prefix injection code (in pseudocode and as a small Python reference implementation), the trend_pass_with_tombstone_policy view definition, the cardinality-ceiling alarm's Postgres function, and the sixteen-corpus stress-test replay harness with synthetic corpus generation utilities and a worked-example results notebook.

Sources

- IETF. RFC 9562 — Universally Unique IDentifiers (UUIDs), v7 time-ordered. May 2024. https://www.rfc-editor.org/rfc/rfc9562

- Postgres Documentation. Table Partitioning. https://www.postgresql.org/docs/current/ddl-partitioning.html

- Postgres Documentation. Index Storage Parameters — fillfactor. https://www.postgresql.org/docs/current/sql-createindex.html

- Lamport, Leslie. Time, Clocks, and the Ordering of Events in a Distributed System. Communications of the ACM, July 1978. https://lamport.azurewebsites.net/pubs/time-clocks.pdf

- Google SRE Workbook. Postmortem Culture: Learning from Failure. https://sre.google/workbook/postmortem-culture/

- Martin Fowler. Event Sourcing. https://martinfowler.com/eaaDev/EventSourcing.html

- Confluent. Schema Evolution and Compatibility. https://docs.confluent.io/platform/current/schema-registry/avro.html

- Datadog. State of AI Engineering Report 2026. April 2026. https://www.datadoghq.com/state-of-ai-engineering/

- LangChain. State of Agent Engineering. April 2026. https://www.langchain.com/state-of-agent-engineering

- Anthropic. Engineering Operations at Scale. 2026. https://www.anthropic.com/engineering

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-05-09 · Written with AI assistance, reviewed by Toc Am.
