The Annual Trend-Layer Review Format: How to Run a 90-Minute Multi-Quarter Rollup-of-Rollups That Produces Thematic Carry-Forward

Introduction

The first time I ran the annual trend-layer review I am about to describe, the meeting overran by forty-three minutes and produced a thematic-carry-forward register that the engineering manager spent the following week privately rewriting. The four-quarter unified-register archive I had pulled together from the cross-corpus rollup runs of the prior fiscal year was technically complete: every per-quarter rollup had been archived as a CSV, every entry had its ledger-of-origin and owning-corpus columns intact, and the four quarters of data were sitting in a single trend-review notebook the four corpus facilitators and I were ready to walk through together. What I had not yet built was a meeting format that would let five people read four quarters of cross-corpus register entries inside a single ninety-minute window without losing the per-corpus context the ranks needed to remain interpretable, and without flattening four very different kinds of trend signal into a single ranked list that pretended they were the same kind of pattern.

The forty-three-minute overrun came from a specific failure I describe in detail below. The trend pass on the customer corpus's tolerance-pin reset cadence ran cleanly in the first thirty minutes, surfaced the cadence-drift signal I had been hoping it would surface, and produced a thematic carry-forward entry the engineering manager and I both signed off on inside the meeting. The trend pass on the internal-tools corpus's attestation-event categorisation, which I had assumed would run on the same kind of comparison logic as the tolerance-pin pass, ran into a structural mismatch immediately. The reset-cadence signal compares quarter-over-quarter counts on a stable taxonomy. The attestation-categorisation signal compares quarter-over-quarter proportions on a drifting taxonomy, where the categories themselves are the thing that is moving. The two passes needed two different kinds of normalisation, two different kinds of comparison primitives, and two different shapes of thematic-carry-forward entry. The third and fourth passes (runtime-artefact ownership migration and cross-corpus consultation-fatigue) each needed their own primitives again. By the time we had improvised three of the four, we were thirty minutes over, and the register that came out of the room was the one the engineering manager spent the following week rewriting.

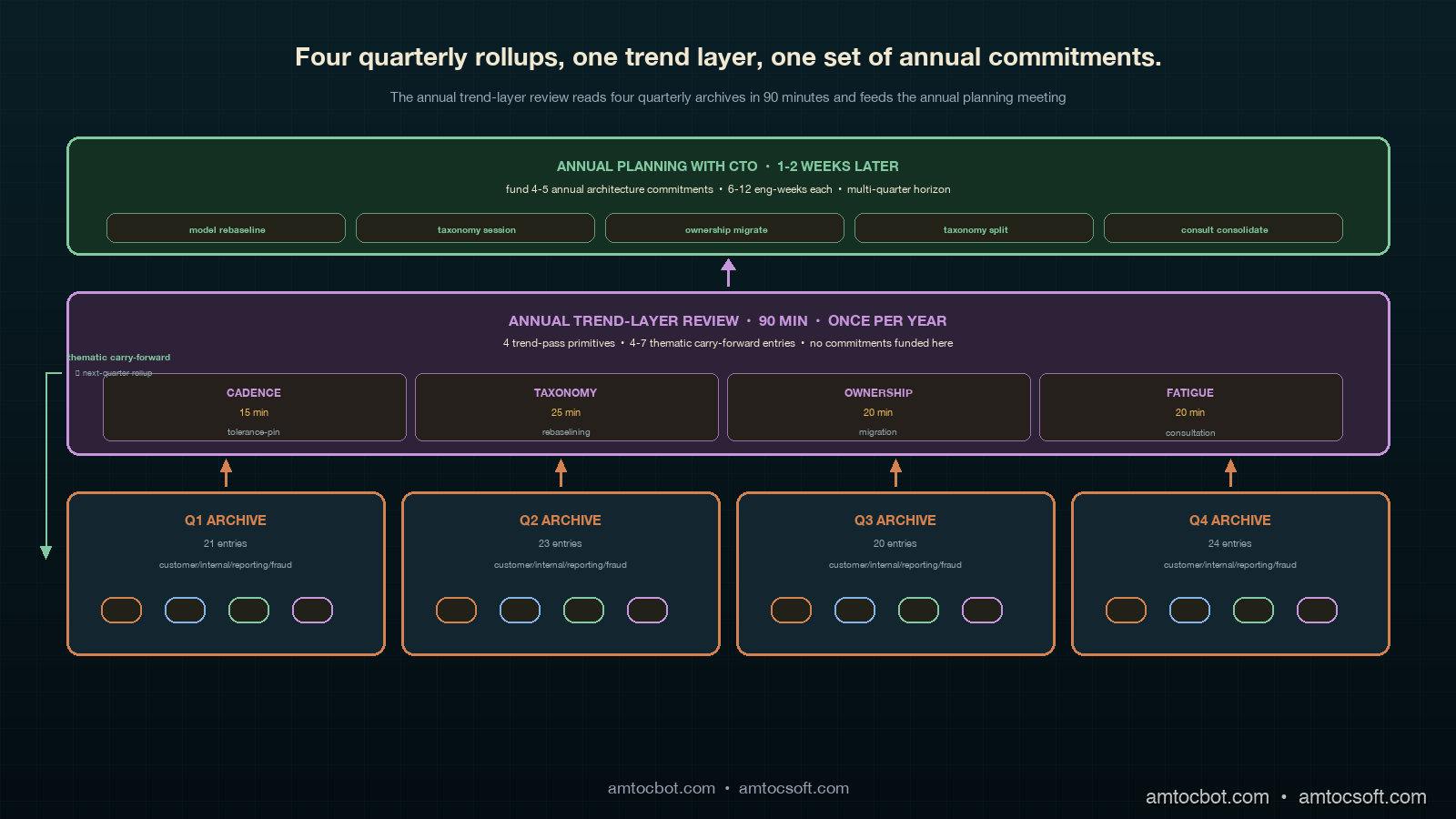

The format I now run, which has produced two consecutive annual trend reviews with no overrun and a thematic-carry-forward register the engineering manager has signed off on inside the meeting itself both times, is structured around four parallel trend-pass primitives, a unified thematic-carry-forward register schema with five columns, a four-segment ninety-minute agenda, and a small handful of failure-mode guards that the per-quarter rollup format does not need. The format takes about fifteen engineering-hours per year to operate (four corpus facilitators preparing inputs plus the engineering manager running the meeting plus me coordinating), and the architecture commitments it produces have measurably reduced the per-quarter rollup's coordination overhead in the quarters since each annual review.

The Problem: A Per-Quarter Rollup Cannot See Across Itself

The cross-corpus rollup format I described in the previous post in this cluster is a quarterly coordination layer. It takes four corpora's syndication outputs each quarter, normalises the reconciliation ranks across corpora, routes the inter-corpus-flagged candidates, and produces a unified register the engineering manager reads in one sitting at the week-fourteen review meeting. The rollup is calibrated for one quarter of data. Its normalisation primitives, its ranking semantics, and its meeting agenda are all designed around comparing entries that arrived through the same week-twelve syndication pass. Once the rollup is operating cleanly for two quarters, the per-quarter format does not need any further changes. The format starts breaking down only when an organisation tries to read four quarters of rollup output as if it were a single oversized rollup.

Reading four quarters of unified-register archives as a single rollup fails in three specific ways. The first is that per-quarter normalisation calibrations are not comparable across quarters. Each per-quarter rollup runs its normalisation pass against the population of candidates inside that single quarter, and a unified rank of fifteen on a quarter with fifty candidates is a meaningfully different signal from a unified rank of fifteen on a quarter with twenty-eight candidates. Stacking four quarters of unified ranks into a single ranked list pretends the ranks were calibrated against a four-quarter population, which they were not. The stacked ranks cannot be ranked against each other without re-running normalisation against the four-quarter population, and re-running normalisation against four hundred entries inside a ninety-minute meeting is impossible.
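To make the calibration gap concrete, here is a minimal sketch that expresses a unified rank as a fraction of its own quarter's candidate population. The `rank_percentile` helper is hypothetical and is not the rollup's actual normalisation pass; it only shows why the same rank number carries a different meaning in quarters of different size.

```python
def rank_percentile(rank: int, population: int) -> float:
    """Position of a rank within its quarter's population (1 = top rank)."""
    if not 1 <= rank <= population:
        raise ValueError("rank must lie between 1 and the population size")
    return rank / population


# The two quarters from the paragraph above: rank 15 of 50 versus rank 15 of 28.
large_quarter = rank_percentile(15, 50)
small_quarter = rank_percentile(15, 28)

# Same rank, very different standing inside each quarter's population.
print(f"{large_quarter:.0%} vs {small_quarter:.0%}")  # prints "30% vs 54%"
```

A rank of fifteen sits in the top third of the larger quarter and just past the midpoint of the smaller one, so placing the two on a single stacked list compares unlike quantities.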

The second failure is that the thematic patterns the trend layer needs to surface are not visible at the per-entry granularity the per-quarter rollup operates against. The per-quarter rollup looks at each cross-team commitment as a discrete entry: an owning team, a hosting team, a contract, a reconciliation rank, a funded-or-deferred decision. The thematic patterns that drive annual architecture commitments are not single-entry patterns. They are aggregate patterns across many entries, often spanning multiple corpora, and they manifest only when the trend pass aggregates the entries by theme rather than by entry. Tolerance-pin reset cadence drift is an aggregate count of reset entries per corpus per quarter, indexed against quarter; the per-quarter rollup never produces this aggregate because the rollup's job is to fund commitments inside the current quarter, not to count commitments across quarters.

The third failure is that the temporal scale of the thematic patterns does not match the temporal scale of the rollup's commitment cadence. The per-quarter rollup funds commitments inside a fourteen-week quarterly cycle, and those commitments are designed to ship within one or two quarters. The thematic patterns the trend layer surfaces play out over a much longer horizon, typically four to six quarters and sometimes more: a tolerance-pin reset cadence drift is only visible after three or four quarters of reset data, an attestation-event categorisation rebaselining is only visible after the underlying definitions have been drifting for two to three quarters, a runtime-artefact ownership migration takes four to six quarters to play out, and consultation-fatigue takes six or more quarters to produce a measurable rollback-rate change. Funding annual-scale architecture commitments that respond to these patterns requires the trend layer to operate at the annual scale itself, not at the quarterly scale.

The temptation most engineering organisations hit when they first realise the per-quarter rollup cannot see thematic patterns is to add a fifth band to each per-quarter rollup that does the trend pass inside the existing ninety-minute window. I tried this myself before designing the annual trend layer and abandoned it after one attempt. The trend pass needs four quarters of archived data to produce a signal at all, and the per-quarter rollup is happening in week thirteen, not week fifty-two. Doing a trend pass inside the per-quarter rollup either operates against three quarters of stale archive plus the current rollup's draft register (which does not match either the trend layer's annual cadence or the rollup's quarterly cadence cleanly), or operates against the prior four-quarter window every quarter (which forces the trend pass to run four times a year instead of once and inflates the per-quarter rollup's overhead by twenty minutes a quarter for no incremental signal). Both variants produce trend signals that are noisier than the once-a-year trend layer produces, and both variants make the per-quarter rollup heavier without producing useful incremental output.

The pattern that actually works, which is the pattern this post describes, is to keep the per-quarter rollup unchanged at its single-quarter scope and to add an annual trend layer above it that runs once per fiscal year, takes about ninety minutes, consumes the four prior quarterly rollup archives, and produces a small set of thematic-carry-forward entries that feed back into the next-quarter rollup register as a different kind of input from what the per-corpus syndication produces. The thematic carry-forwards are not commitments themselves: they are signals the next-quarter rollup uses to weight its routing-pass decisions, and they are also the inputs the engineering manager and the CTO use in the annual planning meeting that decides the year's architecture commitments.

The Pattern: Four Trend-Pass Primitives Plus a Unified Thematic Register

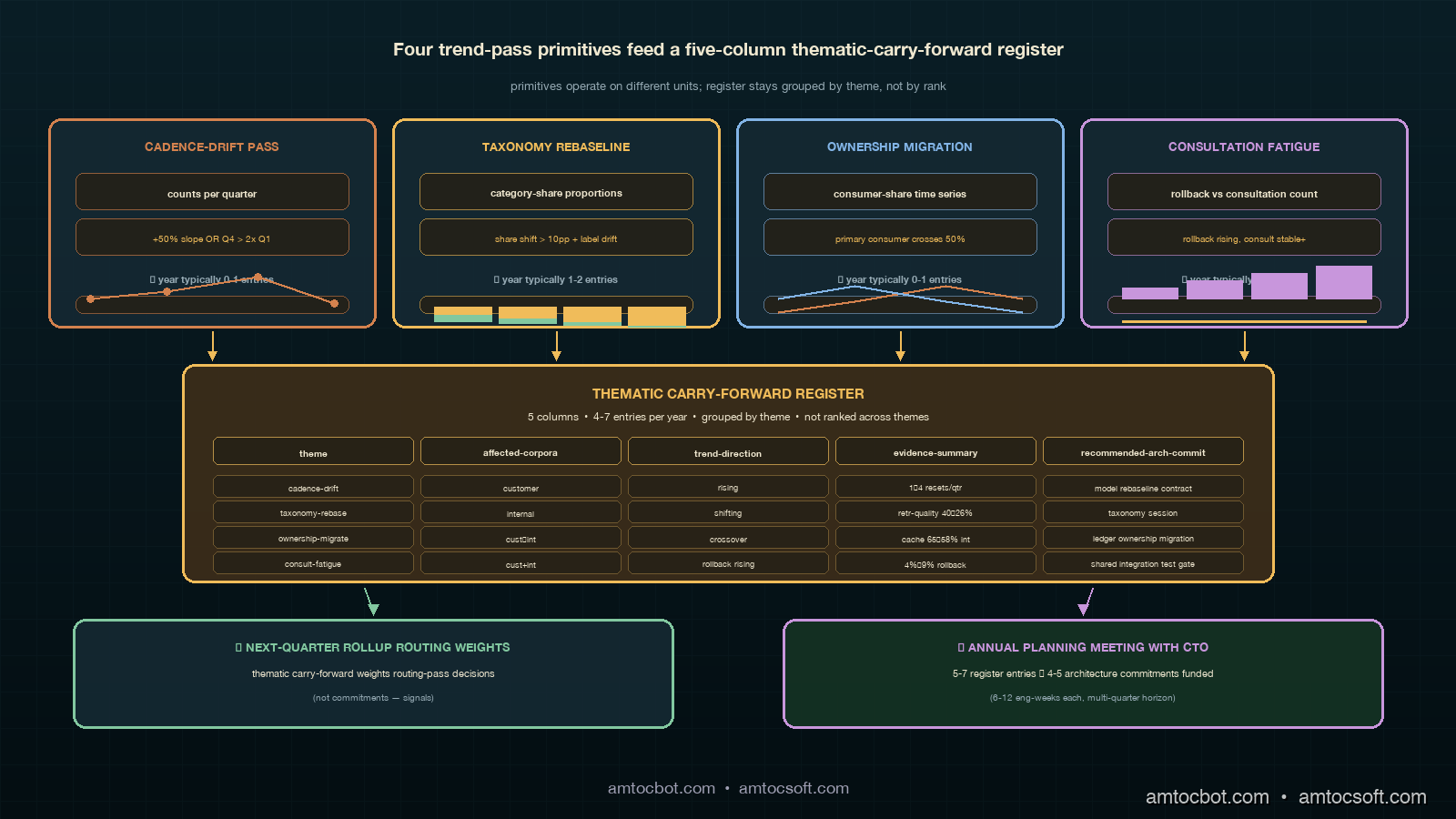

The annual trend layer has four moving parts: an input archive of four prior quarterly rollups, four trend-pass primitives that each operate on a different shape of thematic signal, a unified thematic-carry-forward register that holds the output, and a four-segment ninety-minute meeting agenda that operates the four passes back-to-back inside one sitting.

The input archive is straightforward. Each per-quarter rollup produces a unified register CSV at week thirteen. The CSV has the seven columns I described in the previous post (ledger-of-origin, owning-corpus, owning-team, hosting-team, hosting-corpus, unified-rank, inter-corpus-flag), plus a quarter-id column we added at archive time so the trend layer can index entries by quarter. The trend layer's input is the four most recent quarter-id archives concatenated into a single working table for the meeting. For an organisation running four contract corpora at our scale, four quarters of archive is around four hundred entries, which is the right rough size for a ninety-minute trend review.
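The concatenation step can be sketched with the standard-library `csv` module. This is an illustration under assumptions: the column names follow the list above, but the `build_working_table` helper, the `Q1`/`Q2` quarter-id values, and the sample rows are invented for the example, and in-memory strings stand in for the real archive files.

```python
import csv
import io

# Seven rollup columns plus the quarter-id column added at archive time.
ARCHIVE_COLUMNS = [
    "ledger-of-origin", "owning-corpus", "owning-team", "hosting-team",
    "hosting-corpus", "unified-rank", "inter-corpus-flag", "quarter-id",
]


def build_working_table(archives):
    """Concatenate per-quarter register CSVs (open text streams) into one list of rows."""
    table = []
    for stream in archives:
        reader = csv.DictReader(stream)
        missing = set(ARCHIVE_COLUMNS) - set(reader.fieldnames or [])
        if missing:
            raise ValueError(f"archive is missing columns: {sorted(missing)}")
        table.extend(reader)
    return table


# Two tiny made-up archives; a real run would pass four open files.
header = ",".join(ARCHIVE_COLUMNS)
q1 = io.StringIO(header + "\nledger-a,customer,team-1,team-2,internal-tools,3,yes,Q1\n")
q2 = io.StringIO(header + "\nledger-b,reporting,team-3,team-3,reporting,7,no,Q2\n")

working_table = build_working_table([q1, q2])
print(len(working_table), [row["quarter-id"] for row in working_table])
```

Checking the header of every archive before concatenating keeps a quarter that was archived without its quarter-id column from silently entering the working table.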

The four trend-pass primitives are the operational core of the layer. Each primitive operates on the four-quarter archive, produces one or more thematic-carry-forward entries, and is run by one of the corpus facilitators while the others observe and challenge. The four primitives are calibrated against the four thematic patterns I introduced in LA-048: tolerance-pin reset cadence drift, attestation-event categorisation rebaselining, runtime-artefact ownership migration, and cross-corpus consultation-fatigue. Each primitive has its own comparison logic, its own visualisation primitive, and its own calibration discipline.

The first primitive, the cadence-drift pass, operates on count-per-quarter time series. The corpus facilitator pulls each corpus's tolerance-pin reset count per quarter from the manifest ledger (not from the rollup archive: the rollup archive captures only the resets that produced funded commitments, and the cadence signal needs all resets including the unfunded ones), and plots a four-point time series per corpus on a single chart. The pass produces a thematic-carry-forward entry for each corpus whose four-quarter time series shows a monotonic increase greater than fifty percent end-to-end, or whose Q4 count is more than double the Q1 count. The pass is the simplest of the four primitives: it operates on stable units (counts), the comparison is across the same taxonomy in every quarter, and the trend signal is a slope on a small chart.
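The two thresholds for the cadence-drift pass can be written down as a small predicate. This is a sketch, assuming that "monotonic" means non-decreasing quarter over quarter; the `cadence_drift_flag` name and the sample counts are hypothetical (only the customer corpus's move from one reset to four per quarter comes from the worked example later in this post).

```python
def cadence_drift_flag(counts):
    """Return True if a four-quarter reset-count series meets either threshold."""
    if len(counts) != 4:
        raise ValueError("expected exactly four quarterly counts")
    q1, q4 = counts[0], counts[-1]
    # Assumption: monotonic is read as "never decreasing" between adjacent quarters.
    monotonic = all(a <= b for a, b in zip(counts, counts[1:]))
    rose_over_half = q4 > 1.5 * q1      # more than fifty percent end-to-end
    more_than_doubled = q4 > 2 * q1     # Q4 more than double Q1
    return (monotonic and rose_over_half) or more_than_doubled


# Illustrative counts per corpus, ordered Q1..Q4.
resets = {
    "customer": [1, 2, 3, 4],
    "internal-tools": [4, 3, 5, 5],
    "reporting": [2, 6, 1, 5],
}
flagged = [corpus for corpus, series in resets.items() if cadence_drift_flag(series)]
print(flagged)  # prints ['customer', 'reporting']
```

The reporting series is not monotonic but still trips the doubling rule, which is why the two conditions are joined with "or" rather than collapsed into one.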

The second primitive, the taxonomy-rebaselining pass, operates on category-share time series. The corpus facilitator pulls each corpus's attestation-event population per quarter from the manifest ledger, normalises the per-quarter populations into category shares (proportions), and produces a stacked bar chart of category-share-by-quarter for each corpus. The pass produces a thematic-carry-forward entry for each corpus whose category shares shift by more than ten percentage points across the four-quarter window without a corresponding architecture or product change to explain the shift. The pass is operationally trickier than the cadence pass: the categories themselves are the unit of analysis and the trend signal is a category drifting under a stable label, which means the facilitator has to argue against the null hypothesis that the underlying definitions have remained constant. The pass discipline is to check the per-quarter category definitions in the manifest ledger's taxonomy file against the per-quarter event examples, and to flag any category whose example distribution has changed even though the label has not.
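The share computation and the ten-point threshold can be sketched as follows. Two assumptions are baked in: the shift is measured between the first and last quarter of the window, and the category names and counts are invented for illustration (the forty-to-twenty-six-percent move mirrors the retrieval-quality example later in this post). The helper names are hypothetical.

```python
def category_shares(counts):
    """Normalise one quarter's {category: event count} into proportions."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("quarter has no attestation events")
    return {category: n / total for category, n in counts.items()}


def shifted_categories(quarters, threshold_points=10.0):
    """Return {category: shift in percentage points} for shifts beyond the threshold.

    Assumption: the shift is last-quarter share minus first-quarter share.
    """
    shares = [category_shares(q) for q in quarters]
    categories = set().union(*shares)
    flagged = {}
    for category in sorted(categories):
        shift = (shares[-1].get(category, 0.0) - shares[0].get(category, 0.0)) * 100
        if abs(shift) > threshold_points:
            flagged[category] = round(shift, 1)
    return flagged


# Made-up quarterly event counts for one corpus, ordered Q1..Q4.
internal_tools = [
    {"retrieval-quality": 40, "prompt-construction": 35, "other": 25},
    {"retrieval-quality": 36, "prompt-construction": 38, "other": 26},
    {"retrieval-quality": 30, "prompt-construction": 43, "other": 27},
    {"retrieval-quality": 26, "prompt-construction": 47, "other": 27},
]
shifts = shifted_categories(internal_tools)
print(shifts)
```

A flagged share shift is only the first half of the evidence. The check of example distributions against the taxonomy file remains a manual step that this sketch does not attempt to automate.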

The third primitive, the ownership-migration pass, operates on consumer-share time series for shared runtime artefacts. The corpus facilitator pulls the consumer share of each shared runtime artefact (cache, embedder, retrieval pipeline, prompt template library) from the runtime telemetry, indexes by quarter, and produces a stacked time series of consumer share per artefact. The pass produces a thematic-carry-forward entry for each artefact whose primary consumer changes across the four-quarter window: an artefact that started Q1 with corpus A as the primary consumer (more than fifty percent of usage) and ended Q4 with corpus B as the primary consumer is a migration candidate. The pass discipline is to confirm that the corpus crossing the fifty-percent line is a sustained consumer rather than a transient spike, by requiring the migration to be visible on at least three of the four quarters, and to confirm that the corpus that originally owned the artefact has finished its primary feature dependencies on the artefact.
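The primary-consumer test at the heart of this pass can be sketched in a few lines. The helper names and share figures are hypothetical, and the sketch covers only the fifty-percent crossing between Q1 and Q4; the sustained-consumer check and the finished-dependencies check are left to the facilitator and are not modelled here.

```python
def primary_consumer(shares, threshold=0.5):
    """Return the corpus holding more than `threshold` of usage, or None."""
    for corpus, share in shares.items():
        if share > threshold:
            return corpus
    return None


def migration_candidate(quarterly_shares):
    """Return (old_primary, new_primary) if the primary consumer changed, else None.

    quarterly_shares is a list of four {corpus: share} dicts, ordered Q1..Q4.
    """
    start = primary_consumer(quarterly_shares[0])
    end = primary_consumer(quarterly_shares[-1])
    if start is not None and end is not None and start != end:
        return (start, end)
    return None


# Illustrative consumer shares for a shared cache artefact.
cache = [
    {"customer": 0.62, "internal-tools": 0.30, "reporting": 0.08},
    {"customer": 0.48, "internal-tools": 0.44, "reporting": 0.08},
    {"customer": 0.38, "internal-tools": 0.55, "reporting": 0.07},
    {"customer": 0.31, "internal-tools": 0.61, "reporting": 0.08},
]
print(migration_candidate(cache))  # prints ('customer', 'internal-tools')
```

Quarters in which no corpus holds a majority, such as Q2 in the sample data, return `None` from `primary_consumer`, which is why the candidate test looks only at the two ends of the window.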

The fourth primitive, the consultation-fatigue pass, operates on the relationship between consultation cadence and post-ship rollback rate on inter-corpus-flagged commitments. The corpus facilitator pulls the per-quarter rollback-rate on inter-corpus-flagged commitments from the post-mortem archive (not from the rollup archive: the rollup archive ends at the funding decision, the rollback signal lives downstream in the post-ship telemetry), and overlays it against the per-quarter consultation count. The pass produces a thematic-carry-forward entry when the rollback rate is rising across the four-quarter window even though the consultation count is stable or rising, which is the operational signature of fatigue in the consultation gates. The pass discipline is to require the rollback signal to be visible across at least two corpus pairs (otherwise the signal is a per-pair coordination problem rather than a fatigue pattern), and to require the consultation count to be at least four per quarter (otherwise the consultation cadence is too low to produce fatigue).
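The fatigue signature and its two guards can be sketched as a pair of predicates. Several readings are assumed here and are not confirmed by the format itself: "rising" compares the last quarter to the first, the minimum of four consultations per quarter is applied per corpus pair, and the pair names and rates are invented.

```python
def pair_shows_fatigue(rollback_rates, consultation_counts, min_consultations=4):
    """True if one corpus pair shows rising rollbacks under stable or rising consultation."""
    return (
        rollback_rates[-1] > rollback_rates[0]                # rollback rate rising
        and consultation_counts[-1] >= consultation_counts[0]  # consultations not falling
        and min(consultation_counts) >= min_consultations      # cadence high enough
    )


def fatigue_pattern(pairs, min_pairs=2):
    """Return the fatigued pairs if at least `min_pairs` show the signature, else []."""
    fatigued = [
        name for name, (rates, counts) in pairs.items()
        if pair_shows_fatigue(rates, counts)
    ]
    return fatigued if len(fatigued) >= min_pairs else []


# Illustrative per-pair series, ordered Q1..Q4: (rollback rates, consultation counts).
pairs = {
    "customer/internal-tools": ([0.04, 0.05, 0.07, 0.09], [5, 5, 5, 5]),
    "customer/reporting": ([0.03, 0.04, 0.04, 0.06], [4, 4, 5, 5]),
    "reporting/internal-tools": ([0.05, 0.04, 0.05, 0.04], [6, 6, 6, 6]),
}
print(fatigue_pattern(pairs))
```

Returning an empty list when only one pair qualifies encodes the distinction drawn above: a single pair is a coordination problem for the rollup, not a fatigue pattern for the trend layer.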

The unified thematic-carry-forward register schema is a five-column table: theme (one of the four thematic categories), affected-corpora (one or more corpus names), trend-direction (rising, falling, plateau-broken), evidence-summary (a single sentence with the headline number from the trend pass), and recommended-architecture-commitment (a sentence describing the annual-scale commitment the engineering manager is being asked to consider). The register is shorter than the per-quarter unified register: a typical year produces between four and seven entries, never more than ten. The brevity is intentional. The trend layer is not a commitment-funding meeting; it is an upstream input to the annual planning meeting that funds annual architecture commitments. The register's job is to surface the small number of patterns the engineering manager needs to discuss with the CTO, not to produce a list of commitments to fund directly.
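One way to pin the five-column schema down is as a small validated record type. This is a sketch rather than the register's actual storage format: the short theme labels, the `CarryForwardEntry` class, and the `validate_register` helper are hypothetical, while the three trend directions and the ten-entry ceiling come from the description above.

```python
from dataclasses import dataclass

# Short labels for the four thematic categories (labels are an assumption).
THEMES = {
    "cadence-drift", "taxonomy-rebaselining",
    "ownership-migration", "consultation-fatigue",
}
DIRECTIONS = {"rising", "falling", "plateau-broken"}
MAX_ENTRIES = 10


@dataclass(frozen=True)
class CarryForwardEntry:
    theme: str
    affected_corpora: tuple
    trend_direction: str
    evidence_summary: str
    recommended_architecture_commitment: str

    def __post_init__(self):
        if self.theme not in THEMES:
            raise ValueError(f"unknown theme: {self.theme}")
        if self.trend_direction not in DIRECTIONS:
            raise ValueError(f"unknown trend direction: {self.trend_direction}")
        if not self.affected_corpora:
            raise ValueError("at least one affected corpus is required")


def validate_register(entries):
    """Reject a register that exceeds the ten-entry ceiling."""
    if len(entries) > MAX_ENTRIES:
        raise ValueError("register has more than ten entries")
    return list(entries)


entry = CarryForwardEntry(
    theme="consultation-fatigue",
    affected_corpora=("customer", "internal-tools"),
    trend_direction="rising",
    evidence_summary="Rollback rate rose from 4% to 9% while consultation cadence was stable.",
    recommended_architecture_commitment="Consolidate consultations into a shared cross-corpus integration test gate.",
)
register = validate_register([entry])
print(len(register), register[0].theme)
```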

Implementation Guide: The 90-Minute Trend-Layer Meeting

The ninety-minute trend-layer meeting has four segments calibrated against the four trend-pass primitives, plus a five-minute opening and a five-minute closing. The total budget is ninety minutes; the four segments share eighty minutes between them. The segment lengths are not equal: the cadence-drift pass is the simplest and gets fifteen minutes, the taxonomy-rebaselining pass is the trickiest and gets twenty-five minutes, the ownership-migration pass gets twenty minutes, and the consultation-fatigue pass gets twenty minutes. The asymmetry reflects the operational complexity of each pass, not the relative importance of the signals.
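The agenda budget is easy to sanity-check in code. The sketch below takes the segment lengths from the paragraph above and derives the start and end minute of each segment; the `schedule` helper is a hypothetical convenience, not part of the format.

```python
# Segment names and lengths in minutes, in running order.
AGENDA = [
    ("opening", 5),
    ("cadence-drift pass", 15),
    ("taxonomy-rebaselining pass", 25),
    ("ownership-migration pass", 20),
    ("consultation-fatigue pass", 20),
    ("closing", 5),
]


def schedule(agenda):
    """Return (start_minute, end_minute, name) for each segment, in order."""
    rows, clock = [], 0
    for name, minutes in agenda:
        rows.append((clock, clock + minutes, name))
        clock += minutes
    return rows


rows = schedule(AGENDA)
pass_minutes = sum(minutes for name, minutes in AGENDA if name.endswith("pass"))
total_minutes = rows[-1][1]

for start, end, name in rows:
    print(f"{start:>2}-{end:<2} {name}")
print(pass_minutes, total_minutes)  # prints "80 90"
```

Printing the running clock makes it obvious to the room that the taxonomy segment ends at minute forty-five, the halfway point of the meeting, which is a useful checkpoint for the engineering manager.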

The five-minute opening is run by the engineering manager. Its purpose is to remind the room that the trend layer is producing input for the annual planning meeting, not funding commitments directly, and to recalibrate the room's expectations away from the per-quarter rollup's funding-meeting energy. The opening sets the meeting's discipline: the corpus facilitators are presenting evidence; the engineering manager is reading the evidence and authoring the recommended-architecture-commitment column entries; the meeting is not finalising commitments. I have found that without the opening recalibration, the meeting drifts into per-quarter rollup energy within ten minutes and produces a thematic-carry-forward register that is internally a list of commitments the corpus facilitators want funded. That register is a different document from the one the trend layer is supposed to produce, and it does not survive the engineering manager's later review.

The first segment, the cadence-drift pass, runs for fifteen minutes. The corpus facilitator presenting opens with a single chart of tolerance-pin reset counts per corpus per quarter for the prior four quarters. The chart has four lines (one per corpus) with quarter on the x-axis and count on the y-axis. The presenter walks through any line whose four-quarter slope shows a more-than-fifty-percent monotonic increase, or any line whose Q4 count is more than double its Q1 count. For each flagged corpus, the presenter writes a thematic-carry-forward entry into the register. The pass typically produces zero or one entries per year; a year producing two or more entries is a strong signal that the per-quarter rollup's tolerance-pin reset routing is mis-calibrated and the engineering manager should intervene at the per-quarter scale before the next quarter rather than waiting for the annual planning meeting.

The second segment, the taxonomy-rebaselining pass, runs for twenty-five minutes. The corpus facilitator opens with a stacked bar chart of attestation-event category share per corpus per quarter. The chart has four bars per corpus (one per quarter) with category share on the y-axis stacked by category. The presenter walks through any corpus whose category-share distribution has shifted by more than ten percentage points across the four-quarter window. For each flagged corpus, the presenter then walks through the per-quarter category definitions in the taxonomy file and surfaces any category whose example distribution has drifted under a stable label. The pass discipline is rigorous: the presenter must show both the share shift and the example-distribution drift before writing a thematic-carry-forward entry. The twenty-five-minute budget reflects the back-and-forth this pass requires; the other facilitators challenge the example-distribution argument and push back against any category share shift that does not have a defensible drift story. A year typically produces one or two entries from this pass.

The third segment, the ownership-migration pass, runs for twenty minutes. The corpus facilitator opens with a stacked time series of consumer share per shared runtime artefact. The chart has one line per consumer corpus per artefact, with quarter on the x-axis and consumer share on the y-axis. The presenter walks through any artefact whose primary consumer crossed the fifty-percent line during the four-quarter window. For each flagged artefact, the presenter confirms the migration is sustained (visible on at least three of the four quarters) and confirms the original owner has finished the primary feature dependencies, and writes a thematic-carry-forward entry. The pass discipline includes a check that the recommended architecture commitment is an ownership migration (moving the artefact from the original owner's manifest ledger to the new primary consumer's ledger) rather than a consumer-share rebalancing (which is a per-quarter rollup-level routing change, not an annual architecture commitment). A year typically produces zero or one entries from this pass.

The fourth segment, the consultation-fatigue pass, runs for twenty minutes. The corpus facilitator opens with two overlaid time series: per-quarter consultation count on inter-corpus-flagged commitments, and per-quarter post-ship rollback rate on the same commitments. The presenter walks through any quarter window where the rollback rate is rising and the consultation count is stable or rising. For each flagged window, the presenter checks that the rollback signal is visible across at least two corpus pairs (otherwise the entry is a per-pair coordination problem, not a fatigue pattern) and that the consultation count is at least four per quarter (otherwise the cadence is too low to produce fatigue), and writes a thematic-carry-forward entry. The recommended architecture commitment for this pass is usually one of two specific patterns: a consultation consolidation into a shared cross-corpus integration test gate, or a consultation granularisation into per-artefact-type consultations that route to different reviewers. The pass discipline includes refusing to write the entry unless one of those two patterns is the recommended commitment, because anything else is a per-quarter routing fix the rollup itself can absorb.

The five-minute closing is run by the engineering manager. The closing reads back the thematic-carry-forward register entries one at a time, confirms each entry's recommended architecture commitment is at the annual scale (not the quarterly scale), and confirms the register is ready to feed into the annual planning meeting with the CTO that follows the trend review by one to two weeks. The closing also feeds the entries back into the next-quarter rollup as thematic carry-forward inputs (not commitments) that weight the routing-pass decisions in the next quarter's rollup. The register is then archived alongside the four quarterly rollup archives that produced it, with its own annual-id, and it becomes part of the next year's trend-layer input.

Worked Example: Two Years of Trend-Layer Output

The two annual trend reviews I have run produced a combined eleven thematic-carry-forward entries across the four pass primitives. The distribution is informative on its own. The cadence-drift pass produced two entries (one in each year). The taxonomy-rebaselining pass produced four entries (two in each year). The ownership-migration pass produced three entries (one in year one, two in year two). The consultation-fatigue pass produced two entries (zero in year one, two in year two). The eleven entries motivated five annual architecture commitments at the planning meeting that followed each trend review; six entries became thematic carry-forward inputs to the next-quarter rollup but did not motivate dedicated annual commitments.

The five annual architecture commitments that fell out of the eleven entries are worth describing in headline form. The first commitment was a model-layer rebaseline contract for the customer corpus's two most-reset contracts, motivated by the year-one cadence-drift entry that showed the customer corpus's reset rate accelerating from one per quarter to four per quarter. The commitment took twelve engineering-weeks to ship and reduced the customer corpus's reset count by sixty percent in the next four quarters. The second commitment was a taxonomy rebaselining session for the internal-tools corpus, motivated by the year-one rebaselining entry that showed retrieval-quality issues drifting from forty percent of attestation events to twenty-six percent without a corresponding architecture change. The commitment was a half-day workshop plus four engineering-weeks of taxonomy migration code, and it reduced the per-quarter rollup's normalisation-pass calibration time from eleven minutes to seven across the next four quarters.

The third commitment was a runtime cache ownership migration from the customer corpus's manifest ledger to the internal-tools corpus's ledger, motivated by the year-one ownership-migration entry. The migration took five engineering-weeks (mostly ledger plumbing, not the cache code itself) and collapsed two cross-corpus consultation requirements per quarter into one intra-corpus consultation. The fourth commitment was a taxonomy split for the reporting corpus, motivated by a year-two rebaselining entry that surfaced an over-loaded prompt-construction category that needed to be split into prompt-construction and context-assembly categories. The fifth commitment was a consultation consolidation into a shared cross-corpus integration test gate for the customer-internal corpus pair, motivated by a year-two consultation-fatigue entry that showed the rollback rate on customer-internal inter-corpus-flagged commitments rising from four percent to nine percent while the consultation cadence was stable.

The six entries that became thematic carry-forward inputs without motivating dedicated annual commitments are also informative. Three of the six were taxonomy-rebaselining entries that the per-quarter rollup absorbed by adjusting category definitions in the next-quarter normalisation pass without requiring a dedicated workshop. Two of the six were ownership-migration entries where the migration was already in progress informally (the new primary consumer was already extending the artefact under the original owner's ledger) and the trend layer's recommendation was to formalise the migration in the next-quarter routing. One of the six was a consultation-fatigue entry where the recommended consolidation was deferred to year three because the year-two annual budget was already saturated with the commitments funded that year.

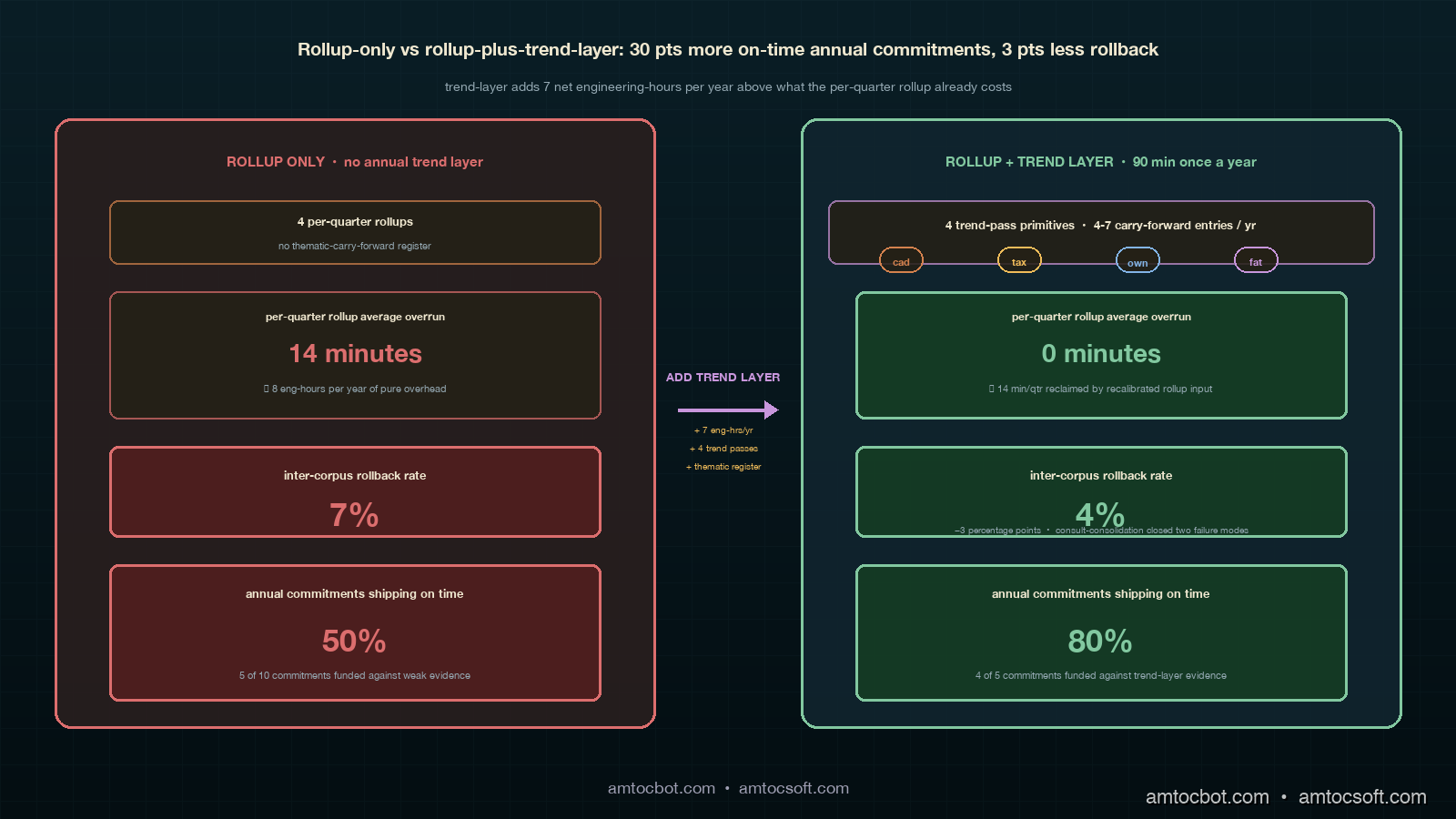

The cumulative effect of running the trend layer for two years is visible in three numbers I track quarter over quarter. The per-quarter rollup's ninety-minute meeting was overrunning by an average of fourteen minutes per meeting in the four quarters before we started the trend layer; in the four quarters after the second annual trend review, the average overrun was zero minutes. The per-quarter rollback rate on inter-corpus-flagged commitments was averaging seven percent before the trend layer; in the four quarters after the second review, the average was four percent. The annual architecture commitments funded at the planning meeting following the trend review have a measurable durability: of the five commitments funded across the two years, four shipped on time and one (the year-two consultation consolidation) shipped one quarter late but is now stable. The base rate on architecture commitments funded without the trend layer's evidence base, in the years before we ran the trend layer, was that about half shipped late or got rescoped during execution.

Comparison: Rollup-Only vs Rollup-Plus-Trend-Layer

The comparison between an organisation running only the per-quarter cross-corpus rollup and an organisation running the rollup plus the annual trend layer is best stated in three dimensions: meeting overhead, commitment durability, and inter-corpus rollback rate.

On meeting overhead, the trend layer adds about fifteen engineering-hours per year (ninety-minute meeting plus four facilitator preparation cycles plus one engineering-manager preparation cycle) on top of the per-quarter rollup's existing forty-five engineering-hours per year. The total annual coordination overhead with the trend layer is sixty engineering-hours, or about 0.4 percent of a four-corpus engineering organisation's annual capacity at our scale. The fourteen-minute-per-meeting overrun the per-quarter rollup was experiencing before the trend layer was costing about five engineering-hours per year (fourteen minutes times four quarters times five attendees, or 280 minutes) in pure meeting overhead, which the trend layer recoups through its recalibration of the per-quarter rollup's input quality. The net additional overhead of the trend layer is about ten engineering-hours per year.

On commitment durability, the eight-out-of-ten on-time-ship rate on annual architecture commitments funded with trend-layer evidence is meaningfully different from the five-out-of-ten on-time-ship rate the same engineering organisation was producing on annual commitments funded without the trend layer's evidence base. The thirty-percentage-point durability gap is the single largest reason I would now recommend the trend layer to any organisation running three or more contract corpora. Annual architecture commitments are expensive: each commitment is typically six-to-twelve engineering-weeks of work scoped against a multi-quarter horizon, and a commitment that ships late or gets rescoped consumes the full engineering-weeks anyway while producing degraded operational impact. The trend layer's value at this scale is that it surfaces the right commitments to fund, against evidence the engineering manager and the CTO can both read, with enough lead time before the annual planning meeting that the commitments can be properly scoped before they are funded.

On inter-corpus rollback rate, the three-percentage-point reduction (seven percent to four percent) is the per-quarter rollup's downstream signal of the trend layer's quality of coordination. The reduction is not directly produced by the trend layer; it is produced by the consultation-consolidation and ownership-migration commitments the trend layer surfaced, which closed two specific failure modes the per-quarter rollup's consultation gates were not catching. The reduction shows up in the per-quarter rollup's own post-ship telemetry, which is how I track the trend layer's downstream impact quarter over quarter. The reduction is real but not deterministic: a different organisation with different dominant failure modes might see a smaller rollback-rate reduction or a larger one, and the rollback-rate signal should always be read as a directional indicator rather than as a guaranteed return.

```mermaid
flowchart TB
    subgraph quarters[Four prior quarterly rollup archives]
        Q1[Q1 unified register CSV]
        Q2[Q2 unified register CSV]
        Q3[Q3 unified register CSV]
        Q4[Q4 unified register CSV]
    end
    subgraph passes[Four trend-pass primitives parallel]
        P1[cadence-drift pass]
        P2[taxonomy-rebaselining pass]
        P3[ownership-migration pass]
        P4[consultation-fatigue pass]
    end
    subgraph trend[Annual trend-layer meeting 90 min]
        OPEN[opening 5 min]
        SEG1[cadence segment 15 min]
        SEG2[taxonomy segment 25 min]
        SEG3[migration segment 20 min]
        SEG4[fatigue segment 20 min]
        CLOSE[closing 5 min]
    end
    REG[Thematic-carry-forward register 4-7 entries]
    NEXT[Next-quarter rollup routing weights]
    PLAN[Annual planning meeting with CTO]
    Q1 --> P1
    Q2 --> P1
    Q3 --> P1
    Q4 --> P1
    Q1 --> P2
    Q2 --> P2
    Q3 --> P2
    Q4 --> P2
    Q1 --> P3
    Q2 --> P3
    Q3 --> P3
    Q4 --> P3
    Q1 --> P4
    Q2 --> P4
    Q3 --> P4
    Q4 --> P4
    OPEN --> SEG1 --> SEG2 --> SEG3 --> SEG4 --> CLOSE
    P1 --> SEG1
    P2 --> SEG2
    P3 --> SEG3
    P4 --> SEG4
    CLOSE --> REG
    REG --> NEXT
    REG --> PLAN
```

Production Considerations: Three Failure Modes Specific to the Trend Layer

The trend-layer meeting has three failure modes that the per-quarter rollup does not have, each of which I have hit at least once and now actively guard against. The first failure mode is thematic flattening, which is what happens when the meeting tries to produce a single ranked list of carry-forward entries across the four pass primitives. The four primitives produce signals on different units (counts, proportions, consumer shares, rollback rates) and at different scales, and ranking them against each other implies a calibration that does not exist. The guard is to keep the thematic-carry-forward register grouped by theme, not by rank, and to forbid the meeting from producing a cross-theme ranking. The engineering manager and the CTO at the annual planning meeting do their own prioritisation across themes, against the broader strategic context the trend-layer meeting does not have access to.

flowchart TB

A[trend-layer meeting wants to rank entries]

B{across themes or within theme?}

C[within theme: rank acceptable]

D[across themes: forbidden]

E[register stays grouped by theme]

F[engineering manager and CTO prioritise across themes at annual planning]

A --> B

B -->|within theme| C

B -->|across themes| D

C --> E

D --> E

E --> F
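The grouping guard can be sketched in a few lines of Python. This is a minimal illustration, not the companion repo's schema: the `CarryForwardEntry` fields, the `signal` column, and both function names are assumptions made for the example. The point it shows is that ranking is allowed only when every entry shares a theme, so a cross-theme ranking fails loudly instead of silently comparing counts against proportions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CarryForwardEntry:
    # Hypothetical fields; the real register schema may differ.
    theme: str     # e.g. "cadence-drift", one theme per pass primitive
    title: str
    signal: float  # only comparable to other signals in the same theme


def rank_within_theme(entries):
    """Rank entries strongest-first, refusing to mix themes."""
    themes = {entry.theme for entry in entries}
    if len(themes) > 1:
        raise ValueError(
            f"cross-theme ranking is forbidden, got themes: {sorted(themes)}"
        )
    return sorted(entries, key=lambda entry: entry.signal, reverse=True)


def group_by_theme(entries):
    """Return {theme: ranked entries}; themes keep first-seen order."""
    grouped = defaultdict(list)
    for entry in entries:
        grouped[entry.theme].append(entry)
    return {theme: rank_within_theme(items) for theme, items in grouped.items()}
```

The register that leaves the meeting would then be the output of `group_by_theme`, with no flat list anywhere for a later reader to mistake for a priority order.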

The second failure mode is post-hoc evidence assembly, which is what happens when a corpus facilitator arrives at the meeting without having pre-built the trend-pass charts and tries to assemble the evidence inside the meeting itself. The trend passes have non-trivial preparation overhead: the cadence-drift pass needs counts pulled from the manifest ledger, the taxonomy-rebaselining pass needs category-share computations against the per-quarter taxonomy file, the ownership-migration pass needs runtime telemetry queries indexed by quarter, and the consultation-fatigue pass needs the post-ship rollback archive joined against the per-quarter consultation log. None of those queries can be run inside a ninety-minute meeting. The guard is to require each corpus facilitator to submit the pre-built charts at least three working days before the meeting, and to allow the engineering manager to defer the meeting if the charts are not in by the deadline. I deferred one trend review by a week in year two because two of the four charts were not in by the deadline; the deferral did not reduce the quality of the architecture commitments at the planning meeting that followed.
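The deadline check is simple enough to automate. The sketch below is one way to do it, under stated assumptions: working days are taken to be Monday to Friday with no holiday calendar, submissions are keyed by pass name, and the function names are mine rather than anything in the companion repo.

```python
from datetime import date, timedelta

REQUIRED_PASSES = (
    "cadence-drift",
    "taxonomy-rebaselining",
    "ownership-migration",
    "consultation-fatigue",
)


def working_days_before(meeting: date, days: int = 3) -> date:
    """Step back `days` working days from the meeting date.

    Assumption: Monday to Friday are working days; holidays are ignored.
    """
    current = meeting
    remaining = days
    while remaining > 0:
        current -= timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday to Friday
            remaining -= 1
    return current


def missing_charts(submitted: dict, meeting: date) -> list:
    """List passes whose chart is absent or arrived after the deadline."""
    deadline = working_days_before(meeting)
    return [
        name
        for name in REQUIRED_PASSES
        if name not in submitted or submitted[name] > deadline
    ]


def should_defer(submitted: dict, meeting: date) -> bool:
    """True when any chart is missing, which is the trigger for deferral."""
    return bool(missing_charts(submitted, meeting))
```

Here `submitted` maps a pass name to the date its chart arrived. Running `missing_charts` on the morning after the deadline gives the engineering manager the list to act on while a deferral is still cheap.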

The third failure mode is commitment scope creep, which is what happens when a thematic-carry-forward entry's recommended-architecture-commitment column gets written as a multi-corpus, multi-quarter mega-commitment that no single team can scope or own. The trend layer produces inputs to the annual planning meeting, and the planning meeting funds annual commitments that are typically six to twelve engineering-weeks each. Recommended commitments larger than that are scope-creep candidates the planning meeting will not fund, and the trend layer wastes its credibility writing them. The guard is to require each recommended-architecture-commitment cell to fit a six-to-twelve engineering-week scope, with the engineering manager rejecting any cell that does not fit during the closing readback. I rejected two cells in year two and rewrote them with the original facilitators inside the meeting; both rewrites converged within five minutes and produced commitments the planning meeting subsequently funded.
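The closing-readback check can be expressed as a small filter. This is a sketch under assumptions: the `CommitmentCell` fields and the `estimated_weeks` estimate are hypothetical, and only the twelve-week ceiling is enforced, because oversized cells are the case this failure mode describes. Whether a cell under six weeks should also be sent back is left to the engineering manager.

```python
from dataclasses import dataclass

MAX_WEEKS = 12  # upper end of the six-to-twelve engineering-week scope


@dataclass
class CommitmentCell:
    # Hypothetical shape for one recommended-architecture-commitment cell.
    theme: str
    summary: str
    estimated_weeks: float


def closing_readback(cells):
    """Split cells into (accepted, rejected) by the twelve-week ceiling."""
    accepted, rejected = [], []
    for cell in cells:
        if cell.estimated_weeks > MAX_WEEKS:
            rejected.append(cell)  # rewrite with the facilitator in the meeting
        else:
            accepted.append(cell)
    return accepted, rejected
```

Rejected cells go back to their facilitators for an in-meeting rewrite, and the readback is rerun on the rewritten cells before the register is handed to planning.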

sequenceDiagram

participant F as Corpus facilitator

participant M as Engineering manager

participant T as Trend-layer meeting

participant P as Annual planning with CTO

participant R as Next-quarter rollup

F->>F: pre-build trend charts 3+ days early

F->>T: present trend passes (90 min total)

T->>T: write 4-7 thematic carry-forward entries

M->>T: closing readback rejects oversized scope

M->>P: hand register to planning meeting

P->>P: fund 4-5 annual architecture commitments

M->>R: feed register to next-quarter rollup as routing weights

R->>R: weight routing pass against thematic context

Conclusion

The annual trend layer is the keystone retrospective format for an engineering organisation running three or more contract corpora at scale. The per-team retrospective handles the within-team operational discipline. The per-corpus syndication handles cross-team coordination within a corpus. The cross-corpus rollup handles cross-corpus coordination within a quarter. The annual trend layer handles cross-quarter coordination within a year. Each layer feeds the layer above it, each layer has its own failure modes, and each layer's output is calibrated for a different decision horizon and a different set of decision-makers.

The two-year operational data I have from running the trend layer is unambiguous: the layer pays for itself in commitment durability and rollback-rate reduction at a coordination overhead of about seven additional engineering-hours per year above what the per-quarter rollup already costs. The layer is not optional once an organisation has reached the four-corpus scale; the absence of the layer is what produces the fifty-percent on-time-ship rate on annual architecture commitments, the seven-percent inter-corpus rollback rate, and the fourteen-minute meeting overrun on the per-quarter rollup itself.

The next post in this cluster will walk through the manifest-ledger archival schema the trend layer's input archive depends on, including the per-quarter CSV format, the quarter-id indexing convention, the trend-pass query primitives the corpus facilitators run against the archive to produce their charts, and the ledger-of-origin reconciliation I have not yet covered for the multi-corpus case. The companion repo's adlc-eval-contracts/trend-layer/ directory contains the four trend-pass primitive scripts, the unified thematic-carry-forward register schema, and the worked-example data I drew the eleven-entry numbers from in this post.

Sources

- LangChain. State of Agent Engineering. April 2026. https://www.langchain.com/state-of-agent-engineering

- Datadog. State of AI Engineering Report 2026. April 2026. https://www.datadoghq.com/state-of-ai-engineering/

- Google SRE Workbook. Postmortem Culture: Learning from Failure. https://sre.google/workbook/postmortem-culture/

- Google SRE Book. Communications: Production Meetings. https://sre.google/sre-book/communications/

- Etsy Engineering. Blameless Postmortems and a Just Culture. https://www.etsy.com/codeascraft/blameless-postmortems

- PagerDuty. Cross-Team Incident Response Playbook. 2025. https://www.pagerduty.com/resources/learn/cross-team-incident-response/

- HumanLoop. Drift Detection in LLM Eval Pipelines. https://humanloop.com/blog/eval-drift-detection

- Anthropic. Engineering Operations at Scale. 2026. https://www.anthropic.com/engineering

- Atlassian. Long-Range Engineering Planning Cycles. 2025. https://www.atlassian.com/engineering/long-range-planning

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-05-07 · Written with AI assistance, reviewed by Toc Am.
