The Taxonomy-Aware Quarterly Review Pass: Engineering Manager's Migration Prioritisation, Alignment-Drift Signal Fold-In, and Trend-Pass Projection-Choice Rubric

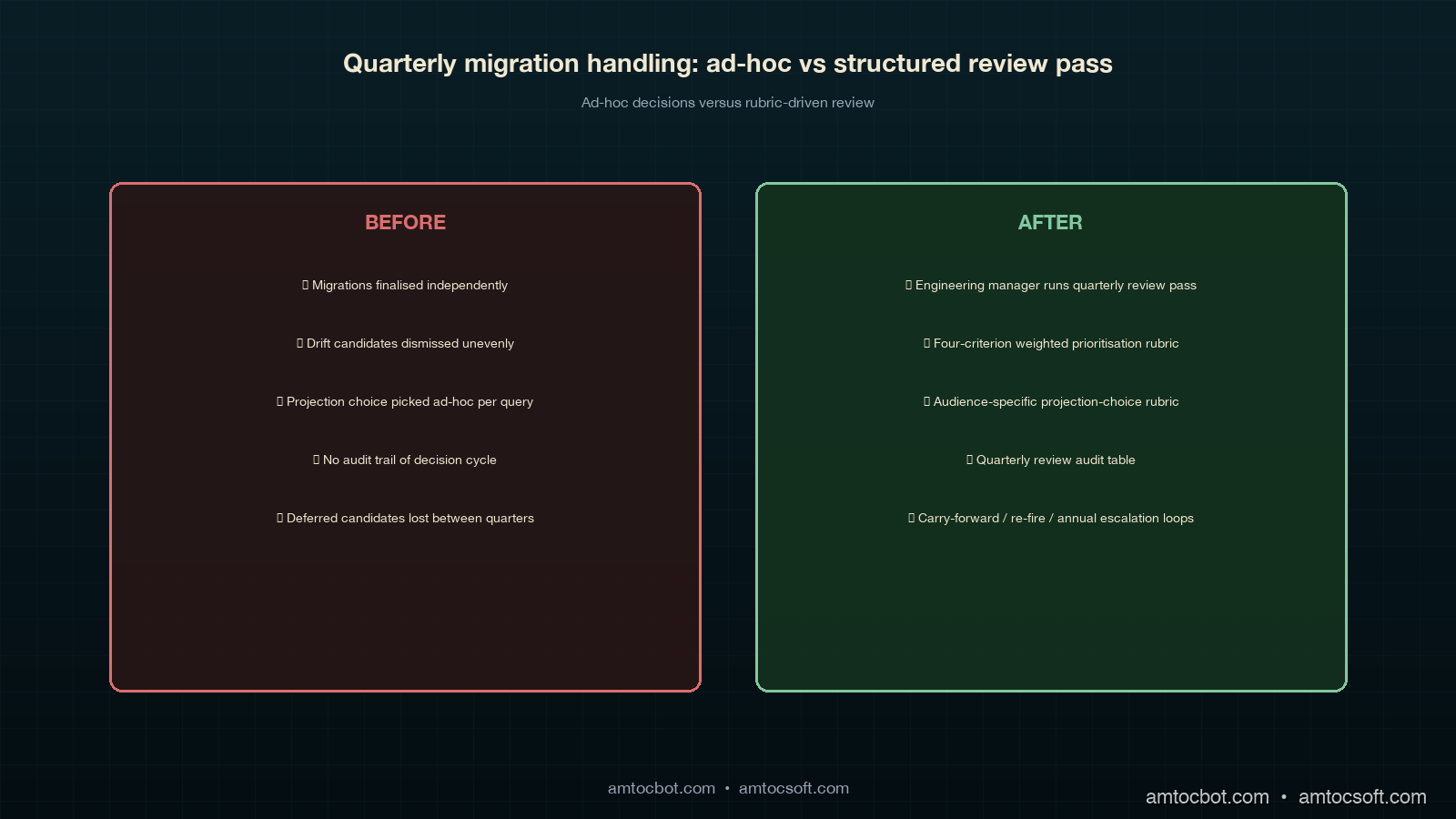

The first time I sat in on the engineering manager's quarterly review pass at the four-corpus deployment we run, the agenda written ahead of the meeting was three pages long. It covered a per-corpus migration audit-trail walk-through, a list of cross-corpus alignment-drift candidates, a list of the prior quarter's incident retrospectives that had touched the manifest-ledger taxonomy, and a placeholder section labelled "trend-pass projection choices" whose structure the engineering manager had not yet finalised. The two-hour meeting ran four hours, and the projection-choice section was the reason. Each of the four projection choices the cross-quarter trend-pass primitive supports (source-quarter snapshots, target-quarter snapshot, synthetic merged snapshot, and a fourth the engineering manager surfaced during the meeting that combined two of the other three) had a different operational implication, and the meeting had no structured rubric for choosing between them.

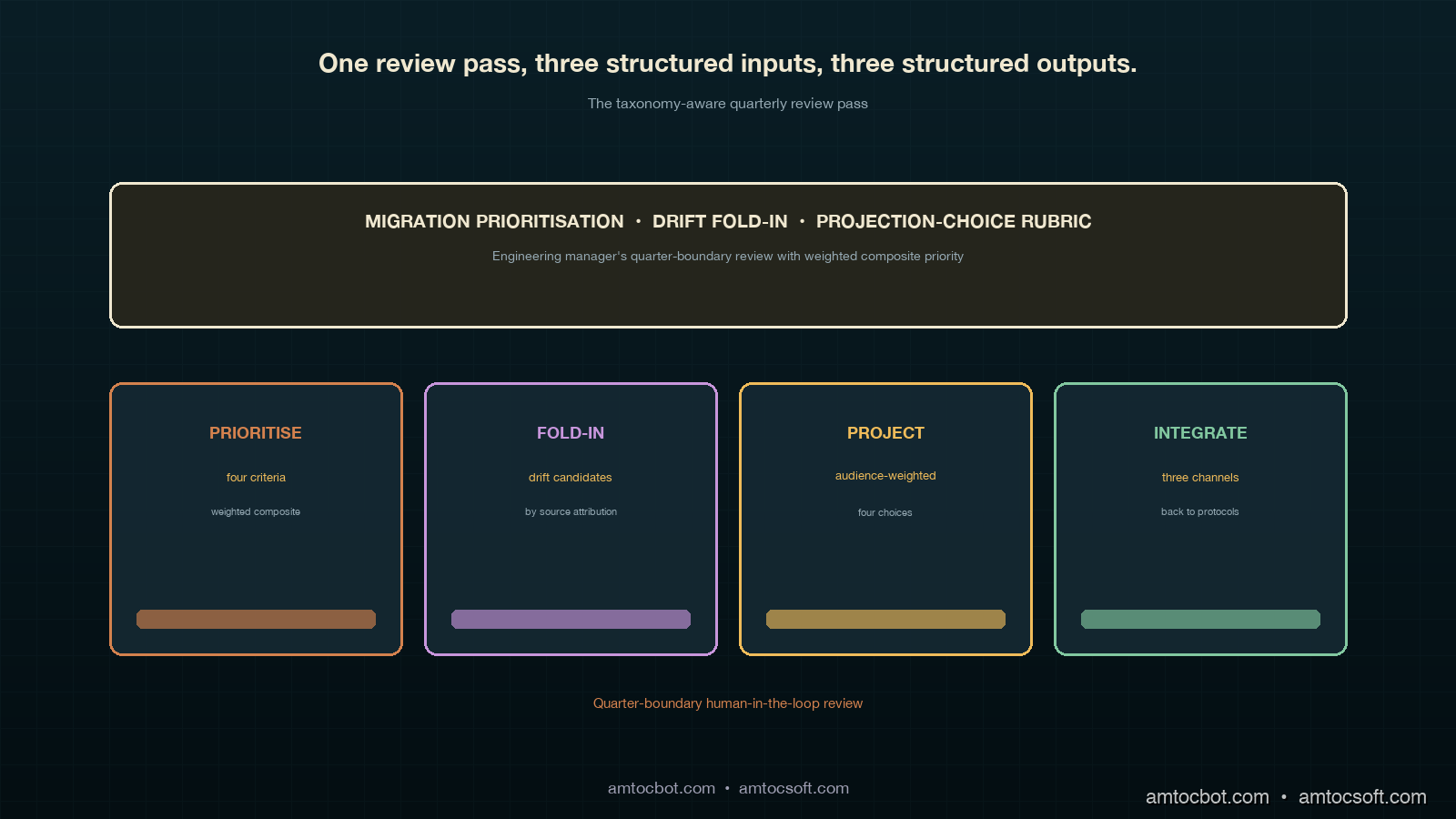

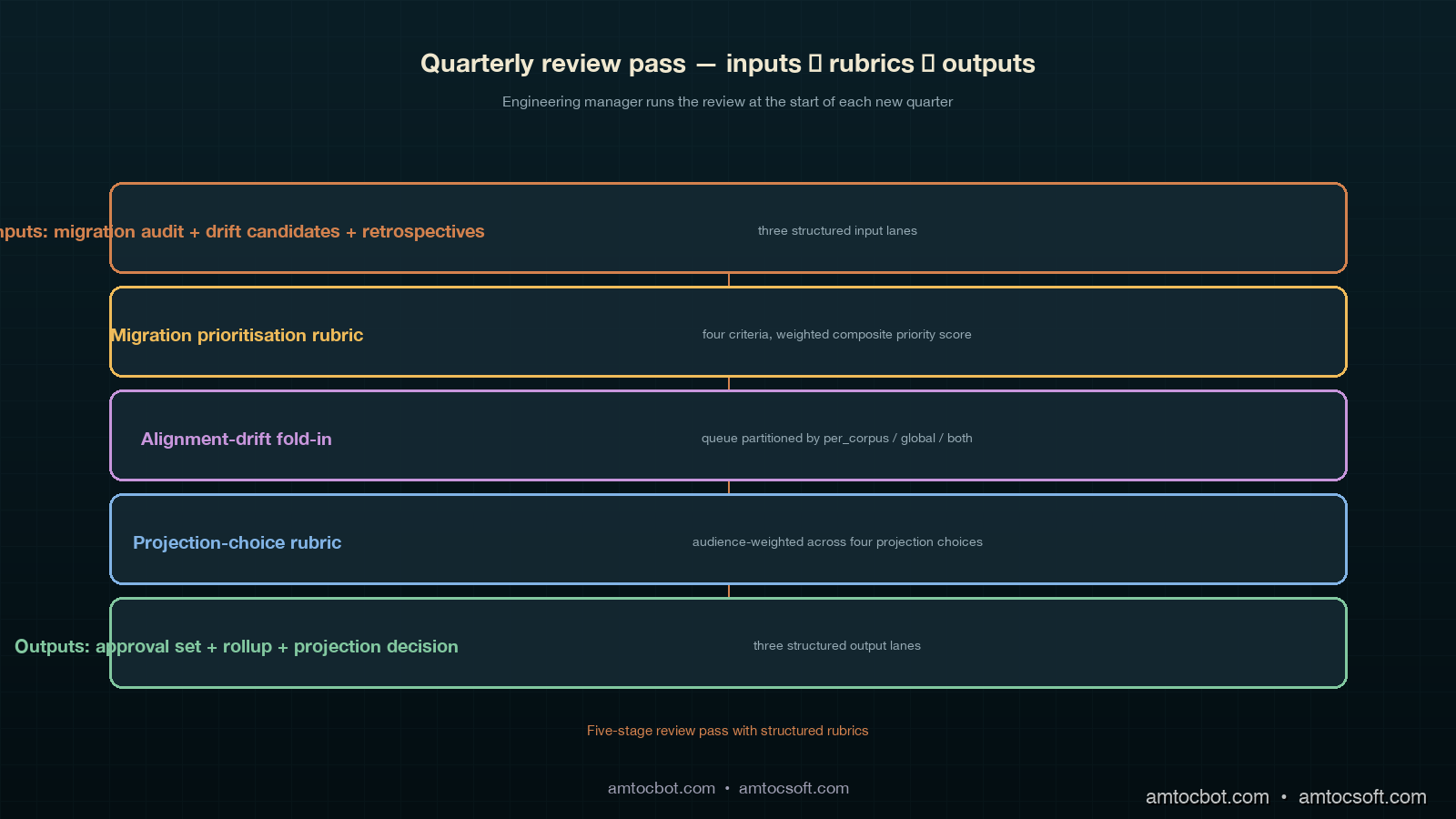

The pattern that emerged from that quarter's review pass and the three quarters that followed is the taxonomy-aware quarterly review pass this post walks through. The pass is the human-in-the-loop layer that sits on top of the per-corpus taxonomy versioning protocol from blog 198 and the cross-corpus taxonomy alignment layer from blog 199. The engineering manager runs the pass at the start of each new quarter, with three structured inputs (the per-corpus migration audit trail from the prior quarter, the cross-corpus alignment-drift candidate queue, and the cross-quarter trend-pass projection-choice rubric) and three structured outputs (the prioritised migration approval set, the cross-corpus rollup review with provenance carried through, and the projection-choice decision for the next cross-corpus trend-pass run).

This post covers four pieces of the review pass: the migration prioritisation rubric (the engineering manager's framework for sequencing per-corpus and cross-corpus migrations across the next quarter), the alignment-drift signal fold-in (how the three drift signals from blog 199 fold into the engineering manager's review queue with their drift-source attribution intact), the trend-pass projection-choice rubric (the structured decision rubric for choosing between the four projection choices the cross-quarter trend-pass primitive supports), and the operational integration (how the review pass writes back into the per-corpus and cross-corpus protocols' state and how the next quarter's signals feed forward into the next review).

Migration Prioritisation Rubric

The engineering manager's first task in the review pass is to prioritise the prior quarter's pending migrations against the next quarter's review-pass capacity. The pending migration set has two sources: the per-corpus migration audit trail's open candidates (per-corpus migrations the corpus facilitator has surfaced but not finalised, plus per-corpus migrations the alignment-drift detection rules have recommended) and the cross-corpus alignment-drift candidate queue (alignment updates the cascade-review flow has surfaced as ready for the platform team's review). Each candidate carries a structured set of attributes (operation type, drift-source attribution, signal value, threshold value, surfacing quarter) the rubric scores against.

The rubric scores each candidate against four criteria. The first is operational impact: how much of the next quarter's cross-corpus rollup volume is affected by the migration. A migration on a per-corpus category whose quarterly rollup count is the largest in the corpus has higher operational impact than a migration on a long-tail category. The score is the candidate's affected rollup volume as a fraction of the corpus's total quarterly rollup count. The second is signal strength: how far past the drift-detection rule's threshold the signal value sits. A candidate whose embedding-similarity score is 0.50 against a threshold of 0.78 has a stronger signal (a distance of 0.28) than one at 0.75 against the same threshold (a distance of 0.03). The score is the absolute distance between the signal value and the threshold, normalised by the threshold's calibration range.
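The first two criteria reduce to small arithmetic, and a minimal Python sketch makes the normalisation concrete. The function names and the 0.40 calibration range used in the usage note are assumptions for illustration; the post does not state the calibration range the platform team uses, and clamping at 1.0 is our choice so both scores stay in [0, 1].

```python
def operational_impact(affected_rollup_count: int, corpus_total_rollup_count: int) -> float:
    """Affected rollup volume as a fraction of the corpus's quarterly total."""
    if corpus_total_rollup_count <= 0:
        raise ValueError("corpus_total_rollup_count must be positive")
    if affected_rollup_count < 0:
        raise ValueError("affected_rollup_count must be non-negative")
    # Clamp so a stale total cannot push the score above 1.0 (an assumption).
    return min(affected_rollup_count / corpus_total_rollup_count, 1.0)


def signal_strength(signal_value: float, threshold: float, calibration_range: float) -> float:
    """Absolute distance from the threshold, normalised by the calibration range."""
    if calibration_range <= 0:
        raise ValueError("calibration_range must be positive")
    # calibration_range is a hypothetical per-rule constant, not a value from the post.
    return min(abs(signal_value - threshold) / calibration_range, 1.0)
```

With an assumed calibration range of 0.40, `signal_strength(0.50, 0.78, 0.40)` comes out near 0.7 while `signal_strength(0.75, 0.78, 0.40)` comes out near 0.075, which matches the ordering described above.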

The third is propagation risk: whether the migration is likely to cascade into additional alignment-drift candidates in the next quarter. A merge-or-split migration on a per-corpus category that aligns to multiple global categories has higher propagation risk than a rename migration on a category aligned to a single global category. The score is a four-band ordinal (low, medium, high, critical) with explicit rules per migration operation type. The fourth is retrospective recurrence: whether the migration is the resolution of a contributing-factor pattern that has surfaced in the prior quarter's incident retrospectives. A migration that closes a contributing-factor pattern has higher retrospective recurrence than one that does not. The score is the count of prior-quarter retrospectives the migration's resolution would have closed.
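Because the composite score expects every criterion in a [0, 1] range, the ordinal band and the retrospective count both need a numeric mapping. The sketch below is one plausible mapping, not the one the deployment uses: the evenly spaced band values and the `cap` parameter on the recurrence count are assumptions, and the per-operation rules that assign a band are deliberately left out because the post does not list them.

```python
from enum import IntEnum


class RiskBand(IntEnum):
    """Four-band ordinal for propagation risk."""
    LOW = 0
    MEDIUM = 1
    HIGH = 2
    CRITICAL = 3


def normalise_band(band: RiskBand) -> float:
    """Map the ordinal band onto [0, 1] with evenly spaced steps (an assumption)."""
    return int(band) / (len(RiskBand) - 1)


def normalise_recurrence(closed_retrospectives: int, cap: int = 5) -> float:
    """Map a retrospective count onto [0, 1]; `cap` is a hypothetical saturation point."""
    if closed_retrospectives < 0 or cap <= 0:
        raise ValueError("count must be non-negative and cap must be positive")
    return min(closed_retrospectives, cap) / cap
```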

The four scores compose into a single prioritisation score with explicit weights the engineering manager sets at the start of each quarter. At the four-corpus deployment we run, the weights are 0.40 for operational impact, 0.20 for signal strength, 0.25 for propagation risk, and 0.15 for retrospective recurrence. The weights are reviewed annually at the year-end retrospective and have stayed within a plus-or-minus 0.05 range across the eighteen-month operational history. The composite score is the weighted sum, with each criterion's score normalised to a [0, 1] range before the weighted sum is taken.
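The weighted sum itself can be sketched in a few lines. The weights are the ones quoted above; the dictionary keys and the function name are our own naming, and the input scores are assumed to be normalised to [0, 1] already.

```python
from typing import Mapping

# Weights quoted in the post for the four-corpus deployment.
WEIGHTS = {
    "operational_impact": 0.40,
    "signal_strength": 0.20,
    "propagation_risk": 0.25,
    "retrospective_recurrence": 0.15,
}


def composite_priority(scores: Mapping[str, float],
                       weights: Mapping[str, float] = WEIGHTS) -> float:
    """Weighted sum of the four criterion scores, each already in [0, 1]."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    total = 0.0
    for name, weight in weights.items():
        score = scores[name]
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{name} score {score} is outside [0, 1]")
        total += weight * score
    return total
```

Rejecting out-of-range inputs rather than clamping them is a design choice in this sketch: a score outside [0, 1] usually means a normalisation step was skipped upstream, which is worth surfacing before the review pass.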

```sql
CREATE TABLE quarterly_review_prioritisation (
    candidate_id UUID PRIMARY KEY,
    candidate_source TEXT NOT NULL CHECK (
        candidate_source IN ('per_corpus_migration', 'alignment_drift', 'retrospective_recurrence')
    ),
    reviewed_at_quarter_id TEXT NOT NULL,
    -- Criterion scores, each normalised to [0, 1] before the weighted sum.
    operational_impact_score NUMERIC NOT NULL,
    signal_strength_score NUMERIC NOT NULL,
    propagation_risk_score NUMERIC NOT NULL,
    retrospective_recurrence_score NUMERIC NOT NULL,
    composite_priority NUMERIC NOT NULL,
    -- NULL means the candidate has not been reviewed yet. The column must be
    -- nullable for the partial index below to match unreviewed rows.
    approval_status TEXT NULL CHECK (
        approval_status IN ('approved', 'deferred', 'rejected', 'escalated')
    ),
    approval_rationale TEXT NULL,
    approved_at TIMESTAMPTZ NULL,
    next_quarter_action_id UUID NULL
);

-- Partial index over candidates still awaiting a decision, highest priority first.
CREATE INDEX idx_quarterly_review_prioritisation_pending
    ON quarterly_review_prioritisation (reviewed_at_quarter_id, composite_priority DESC)
    WHERE approval_status IS NULL OR approval_status = 'deferred';
```

The approval_status column has four values. Approved candidates flow into the next quarter's per-corpus migration finalisation queue or cross-corpus alignment update queue, depending on the candidate source. Deferred candidates carry forward to the next quarter's review pass with their composite priority recomputed against the new quarter's operational state. Rejected candidates are closed with a recorded rationale and do not surface again unless the underlying drift signal re-fires above its threshold. Escalated candidates are forwarded to the year-end engineering-manager review or the platform team's architectural review, depending on the escalation path.

```mermaid
flowchart TD
    A[Per-corpus migration<br/>audit trail open candidates] --> D[Quarterly review<br/>prioritisation table]
    B[Alignment-drift<br/>candidate queue] --> D
    C[Retrospective recurrence<br/>contributing-factor patterns] --> D
    D --> E{composite_priority<br/>+ approval_status}
    E -->|approved + per_corpus| F[Per-corpus migration<br/>finalisation queue]
    E -->|approved + alignment_drift| G[Cross-corpus alignment<br/>update queue]
    E -->|deferred| H[Carry forward to<br/>next quarter's review]
    E -->|rejected| I[Close with rationale]
    E -->|escalated| J[Year-end review<br/>or architectural review]
    F --> K[Next quarter's<br/>cross-corpus rollup]
    G --> K
```
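The disposition routing described above can be sketched as a small lookup. The destination names returned here are hypothetical labels, not identifiers from the companion repo, and the sketch raises for an approved candidate whose source has no documented destination rather than guessing one.

```python
def route(candidate_source: str, approval_status: str) -> str:
    """Return a label for where a reviewed candidate goes next."""
    if approval_status == "approved":
        # Approved candidates split by source, per the post.
        if candidate_source == "per_corpus_migration":
            return "per_corpus_finalisation_queue"
        if candidate_source == "alignment_drift":
            return "alignment_update_queue"
        # The post does not say where other approved sources are written.
        raise ValueError(f"no documented destination for approved {candidate_source!r}")
    routes = {
        "deferred": "next_quarter_review",
        "rejected": "closed_with_rationale",
        "escalated": "escalation_review",
    }
    if approval_status not in routes:
        raise ValueError(f"unknown approval_status {approval_status!r}")
    return routes[approval_status]
```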

Alignment-Drift Signal Fold-In

The alignment-drift candidates from blog 199 fold into the engineering manager's review queue with their drift-source attribution intact. The drift-source column partitions the candidate set into three review responsibilities. The per_corpus-attributed candidates flow to the corpus facilitator's review track, where the corpus facilitator is the primary reviewer and the engineering manager's review pass surfaces only the candidates the corpus facilitator has escalated. The global-attributed candidates flow to the platform team's review track, where the platform team is the primary reviewer and the engineering manager's review pass surfaces only the candidates the platform team has escalated. The both-attributed candidates flow directly to the engineering manager's review pass, because the joint review they require is exactly the cross-team coordination that review pass exists to provide.

The fold-in works by having each track write its escalations into a shared review queue the engineering manager reads against, with the drift-source attribution preserved alongside the escalation. The engineering manager's review pass walks the joint queue in composite-priority order, with the corpus facilitator and platform-team reviewers present for the per_corpus and global tracks respectively, and the joint review pass running for the both-attributed candidates with both reviewers present.
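A minimal sketch of the fold-in as a queue-partitioning function follows. The `DriftCandidate` fields and the `escalated` flag are illustrative names; the only behaviour taken from the post is that both-attributed candidates always reach the engineering manager, the other two tracks reach the engineering manager only on escalation, and the joint queue is walked in composite-priority order.

```python
from dataclasses import dataclass
from typing import Iterable, List

DRIFT_SOURCES = ("per_corpus", "global", "both")


@dataclass(frozen=True)
class DriftCandidate:
    candidate_id: str
    drift_source: str          # one of DRIFT_SOURCES, preserved through the fold-in
    composite_priority: float
    escalated: bool = False    # set by the track's primary reviewer


def engineering_manager_queue(candidates: Iterable[DriftCandidate]) -> List[DriftCandidate]:
    """Select what the engineering manager reviews, highest priority first."""
    selected = []
    for candidate in candidates:
        if candidate.drift_source not in DRIFT_SOURCES:
            raise ValueError(f"unknown drift_source {candidate.drift_source!r}")
        # 'both' goes straight to the joint review; other tracks only on escalation.
        if candidate.drift_source == "both" or candidate.escalated:
            selected.append(candidate)
    return sorted(selected, key=lambda c: c.composite_priority, reverse=True)
```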

There is one specific failure mode worth calling out from the eighteen-month operational history. In the third quarter we ran the protocol, the alignment-drift detection function flagged an unmapped-row-count candidate at 4 percent of the corpus's quarterly rollup count, which is twice the 2 percent threshold. The corpus facilitator's review pass came back with no per-corpus migration recommendation and the platform team's review pass came back with no global migration recommendation. The candidate was at risk of being dismissed at the engineering manager's review pass even though the signal was firing strongly. The investigation revealed that the unmapped rows were a new category of work the per-corpus classifier had started emitting that quarter, one that matched neither an existing per-corpus category nor an existing global category. The resolution was an add migration on both sides simultaneously, with the cascade-review flow running the alignment in a single coordinated pass. The fix to the review pass was to add a both-side-add candidate type to the joint review queue, so the engineering manager's review pass surfaces the case explicitly rather than relying on either side's reviewer to recognise it independently.

The both-side-add candidate type is now an additional structured candidate source the prioritisation rubric scores against, with operational-impact and signal-strength scores from the unmapped-row-count rule, a propagation-risk score that defaults to medium because the new category will eventually require its own alignment row, and a retrospective-recurrence score from any contributing-factor patterns the new category's emergence resolves. The eighteen-month history has surfaced four both-side-add candidates across the six-quarter window, a rate the engineering manager's review pass capacity comfortably absorbs.
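The condition that makes a candidate a both-side-add can be sketched as a predicate: the unmapped-row-count signal fires while neither reviewer recommends a migration. The function and parameter names are hypothetical, and the 2 percent default is the threshold quoted in the failure-mode description.

```python
from typing import Optional

UNMAPPED_ROW_THRESHOLD = 0.02  # 2 percent of the quarterly rollup count


def is_both_side_add(unmapped_rows: int,
                     total_rollup_rows: int,
                     per_corpus_recommendation: Optional[str],
                     global_recommendation: Optional[str],
                     threshold: float = UNMAPPED_ROW_THRESHOLD) -> bool:
    """True when the signal fires but neither side recommends a migration."""
    if total_rollup_rows <= 0:
        raise ValueError("total_rollup_rows must be positive")
    signal_fires = unmapped_rows / total_rollup_rows > threshold
    no_side_recommends = per_corpus_recommendation is None and global_recommendation is None
    return signal_fires and no_side_recommends
```

For the incident described above, `is_both_side_add(40, 1000, None, None)` is true: 4 percent of rows are unmapped and both review passes came back empty.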

Trend-Pass Projection-Choice Rubric

The cross-quarter trend-pass primitive supports four projection choices when computing a multi-quarter rollup that spans one or more migrations. Source-quarter snapshots leaves each quarter's rollup labelled against its own taxonomy snapshot, so the rollup output is multi-labelled and the migration is implicit. Target-quarter snapshot forward-projects every prior quarter's rollup onto the target quarter's snapshot using the migration audit's forward-projection mappings, so the rollup output is single-labelled in the target quarter's taxonomy. Synthetic merged snapshot computes a composed snapshot from the chain of migrations and projects every quarter's rollup onto it, so the rollup output is single-labelled in a synthesised taxonomy that captures the full chain. Hybrid projection applies different choices to different quarters of the trend-pass window, typically using source-quarter snapshots for the prior-year quarters and target-quarter snapshot for the current-year quarters.
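To make the difference between walking the chain and composing it concrete, here is a simplified sketch. It assumes every migration can be expressed as an old-category to new-category mapping, which covers renames and merges only; splits need fractional allocation and are out of scope here. The function names and the dictionary representation are assumptions, not the trend-pass primitive's actual interface.

```python
from collections import Counter
from typing import Dict, List, Mapping

CategoryMapping = Mapping[str, str]  # old category -> new category


def forward_project(rollup: Mapping[str, int], chain: List[CategoryMapping]) -> Dict[str, int]:
    """Target-quarter style: walk each migration's mapping in order."""
    current = Counter(rollup)
    for mapping in chain:
        projected = Counter()
        for category, count in current.items():
            # Categories the migration does not mention keep their label.
            projected[mapping.get(category, category)] += count
        current = projected
    return dict(current)


def compose(chain: List[CategoryMapping]) -> Dict[str, str]:
    """Synthetic-merged style: fold the whole chain into one mapping up front."""
    composed: Dict[str, str] = {}
    for mapping in chain:
        # Push already-known categories through the next migration.
        composed = {old: mapping.get(new, new) for old, new in composed.items()}
        # Categories first touched by this migration enter the mapping here.
        for old, new in mapping.items():
            composed.setdefault(old, new)
    return composed
```

Under the rename-and-merge assumption the two routes give the same counts, so the practical difference is where the work happens: once per migration at projection time, or once across the whole chain before any projection runs.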

The engineering manager's projection-choice rubric scores each choice against three criteria for each trend-pass run. The first is interpretability for the audience. Operator-facing dashboards typically prefer single-labelled outputs (target-quarter snapshot or synthetic merged) because the dashboard's UI is single-axis. Engineering-manager-facing rollups typically prefer multi-labelled outputs (source-quarter snapshots or hybrid) because the engineering manager wants to see the migration as an explicit event in the trend rather than as an implicit projection. The score is a three-band ordinal (low, medium, high) per choice per audience.

The second is projection cost. The four projection choices have different query-time costs at the cross-quarter trend-pass primitive. Source-quarter snapshots is the cheapest because no projection logic runs at query time. Target-quarter snapshot is moderate because the forward-projection mapping has to be walked once per migration in the chain. Synthetic merged snapshot is the most expensive because the composed mapping has to be computed across all migrations in the chain before the projection runs. Hybrid projection's cost depends on the choice mix. The score is the projection's expected query-time cost in milliseconds against the trend-pass window's expected size, with a calibration table the platform team maintains.

The third is audit-traceability shape. The four projection choices preserve different aspects of the audit trail in the rollup output. Source-quarter snapshots preserves every quarter's labelling unchanged, so the audit trail is implicit in the rollup output's multi-labelling. Target-quarter snapshot preserves the forward-projection mapping in the rollup output's per-row provenance column, so the audit trail is explicit per row. Synthetic merged snapshot preserves the composed mapping in the rollup output's snapshot anchor hash, so the audit trail is explicit at the snapshot level. Hybrid projection preserves a mix of the three. The score is the audit-trail granularity (row, snapshot, implicit) the audience expects.

The three scores compose into a per-trend-pass projection choice with explicit weights the engineering manager sets per audience type. For operator dashboards, the weight mix is 0.50 interpretability, 0.30 projection cost, 0.20 audit-traceability. For engineering-manager rollups, the weight mix is 0.30 interpretability, 0.20 projection cost, 0.50 audit-traceability. For year-end retrospective rollups, the weight mix is 0.20 interpretability, 0.10 projection cost, 0.70 audit-traceability. The audience-specific weights are reviewed annually at the year-end retrospective alongside the migration prioritisation weights.

```mermaid
flowchart LR
    A[Trend-pass run<br/>request] --> B{audience type}
    B -->|operator dashboard| C[Weights:<br/>0.50/0.30/0.20]
    B -->|eng manager rollup| D[Weights:<br/>0.30/0.20/0.50]
    B -->|year-end retrospective| E[Weights:<br/>0.20/0.10/0.70]
    C --> F[Score four<br/>projection choices]
    D --> F
    E --> F
    F --> G{highest scored<br/>choice}
    G -->|source-quarter| H[Per-quarter<br/>multi-labelled]
    G -->|target-quarter| I[Forward-projected<br/>single-labelled]
    G -->|synthetic merged| J[Composed mapping<br/>single-labelled]
    G -->|hybrid| K[Mixed projection<br/>per quarter]
    H --> L[Audience-specific<br/>rollup output]
    I --> L
    J --> L
    K --> L
```
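The selection step can be sketched as a weighted argmax. The weight triples are the ones quoted above, in the order interpretability, projection cost, audit-traceability. Everything else is an assumption: the audience keys, the function name, and the convention that each criterion score arrives in [0, 1] with higher meaning better, so a cheap projection has a high cost score.

```python
from typing import Dict, Mapping, Tuple

Scores = Tuple[float, float, float]  # (interpretability, projection cost, audit-traceability)

AUDIENCE_WEIGHTS: Dict[str, Scores] = {
    "operator_dashboard": (0.50, 0.30, 0.20),
    "engineering_manager_rollup": (0.30, 0.20, 0.50),
    "year_end_retrospective": (0.20, 0.10, 0.70),
}


def choose_projection(audience: str, choice_scores: Mapping[str, Scores]) -> str:
    """Return the projection choice with the highest weighted score."""
    weights = AUDIENCE_WEIGHTS[audience]

    def weighted(choice: str) -> float:
        return sum(w * s for w, s in zip(weights, choice_scores[choice]))

    # On a tie, max() keeps the first choice in iteration order.
    return max(choice_scores, key=weighted)
```

With illustrative scores of `(1.0, 0.5, 0.5)` for a target-quarter projection and `(0.0, 1.0, 1.0)` for source-quarter snapshots, the operator dashboard weights pick target-quarter (0.75 against 0.50) and the year-end retrospective weights pick source-quarter (0.80 against 0.60). Those score values are made up for the example.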

Operational Integration

The review pass writes back into the per-corpus and cross-corpus protocols' state through three structured channels. The first channel is the per-corpus migration finalisation queue: approved per-corpus migrations are written to the corpus facilitator's finalisation queue with the engineering manager's approval rationale attached, and the corpus facilitator runs the finalisation pass at the start of the new quarter. The second channel is the cross-corpus alignment update queue: approved alignment updates are written to the platform team's update queue with the engineering manager's approval rationale attached, and the platform team runs the cascade-review flow at the start of the new quarter. The third channel is the projection-choice decision: the engineering manager's chosen projection per audience type is written to a per-quarter projection-choice configuration table the trend-pass primitive reads against.

The four projection-choice configuration rows for a typical quarter at our four-corpus deployment look roughly like the following. The operator dashboard's projection choice is target-quarter snapshot, because the dashboard's UI is single-axis and the audience prefers a forward-projected single-labelled output. The engineering-manager rollup's projection choice is hybrid (source-quarter snapshots for the prior-year quarters, target-quarter snapshot for the current-year quarters), because the engineering manager wants the migration boundary visible in the trend. The year-end retrospective's projection choice is source-quarter snapshots, because the audit-traceability weight dominates and the year-end retrospective audience is comfortable with multi-labelled outputs. The annual platform-team review's projection choice is synthetic merged, because the long review window benefits from audit-traceability anchored at the composed-mapping snapshot.

| Audience | Projection choice | Why |
|---|---|---|
| Operator dashboard | Target-quarter snapshot | Single-axis UI, forward-projected single-labelled output preferred |
| Engineering-manager rollup | Hybrid | Migration boundary should be visible in trend; source-quarter for prior year, target-quarter for current year |
| Year-end retrospective | Source-quarter snapshots | Audit-traceability weight dominates; multi-labelled output acceptable |
| Annual platform-team review | Synthetic merged | Long-window audit-traceability with composed-mapping snapshot anchor |

The next quarter's signals feed forward into the next review through three loops. The first loop is the deferred-candidate carry-forward: candidates the engineering manager deferred at this quarter's review pass are re-scored at the next quarter's review pass with their composite priority recomputed against the new operational state. The second loop is the rejected-candidate re-fire: candidates the engineering manager rejected at this quarter's review pass do not surface again unless the underlying drift signal re-fires above its threshold, which is the natural re-entry path for candidates whose underlying drift becomes operationally relevant again. The third loop is the escalated-candidate annual review: candidates the engineering manager escalated at this quarter's review pass are bundled into the year-end engineering-manager review or the platform team's architectural review, depending on the escalation path.

```mermaid
flowchart TD
    A[Quarterly review pass<br/>candidate disposition] --> B{approval_status}
    B -->|approved| C[Write to per-corpus<br/>or alignment update queue]
    B -->|deferred| D[Carry forward<br/>composite_priority recomputed]
    B -->|rejected| E[Close with rationale]
    B -->|escalated| F[Year-end review<br/>or architectural review]
    C --> G[Next-quarter<br/>operational state]
    D --> H[Next quarter's<br/>review pass]
    E --> I{drift signal<br/>re-fires?}
    I -->|yes| J[Re-enters<br/>candidate queue]
    I -->|no| K[Closed permanently]
    F --> L[Annual review pass<br/>bundled inputs]
    G --> H
    J --> H
    L --> M[Year-end retrospective<br/>annual planning input]
```

Production Considerations

Three production considerations are worth calling out for any team that is shipping the taxonomy-aware quarterly review pass against a live multi-corpus deployment.

The first is review-pass capacity sizing. The review pass at our four-corpus deployment runs for two to four hours per quarter, with the engineering manager, the corpus facilitator, and the platform-team reviewer all present for the joint review portion. The capacity has been comfortable at the four-corpus scale. At the sixteen-corpus scale the platform team estimated a year ago, the joint review portion alone would consume an entire workday per quarter, and the review-pass structure would need re-shaping to handle the load. The mitigation patterns we have considered include a two-stage review pass (a per-domain review preceding a cross-domain joint review), a per-domain engineering-manager assignment (one engineering manager per business domain rather than one for the entire deployment), and an asynchronous review pass for low-priority candidates (the engineering manager reviews the high-priority candidates synchronously and signs off on the low-priority candidates asynchronously). The right pattern depends on the deployment's organisational shape.

The second is audit-traceability of the review pass itself. The review pass is a structured decision cycle with structured outputs, and its decisions are load-bearing for the next quarter's operational state. The pattern that has worked is to instrument the review pass's outputs with the same audit-trail discipline as the per-corpus migration audit table: each approval, deferral, rejection, and escalation is written to a per-quarter quarterly_review_audit table with the engineering manager's identity, the candidate's composite priority, the rationale, and the next-quarter action id. The audit table has the same four-year retention budget as the per-corpus migration audit table and is queryable from the cross-quarter trend-pass primitive's audit-traceability projection.
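One way to picture an audit row is as an immutable record that is validated before it is appended. The class and field names below are illustrative and are not the DDL from the companion repo; the only rules encoded are the four decision values and the requirement that a rejection carries a rationale.

```python
from dataclasses import dataclass
from typing import List, Optional

DECISIONS = ("approved", "deferred", "rejected", "escalated")


@dataclass(frozen=True)
class ReviewAuditRecord:
    quarter_id: str
    candidate_id: str
    reviewer: str                       # the engineering manager's identity
    decision: str
    composite_priority: float
    rationale: Optional[str] = None
    next_quarter_action_id: Optional[str] = None

    def __post_init__(self) -> None:
        if self.decision not in DECISIONS:
            raise ValueError(f"unknown decision {self.decision!r}")
        # Rejections are closed with a recorded rationale.
        if self.decision == "rejected" and not self.rationale:
            raise ValueError("a rejected candidate needs a rationale")


def append_audit(log: List[ReviewAuditRecord], record: ReviewAuditRecord) -> None:
    """Append-only write: records are frozen and never edited in place."""
    log.append(record)
```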

The third is failure modes. The review pass has two principal failure modes worth instrumenting: review-pass capacity overrun (the review pass runs longer than the scheduled window, indicating either an unsustainable migration rate or under-provisioned review capacity) and deferred-candidate accumulation (the deferred-candidate carry-forward queue grows faster than the review pass drains it, indicating either an unsustainable migration rate or under-prioritised review attention). Both failure modes are caught by per-quarter operational metrics: the review-pass capacity overrun shows up as a scheduled-window-vs-actual-window delta, and the deferred-candidate accumulation shows up as a quarter-over-quarter queue depth growth. Each metric has a per-quarter alert threshold, and any breach is an input to the engineering manager's own review at the start of the next quarter.
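Both metrics are simple deltas, sketched below. The alert names and the threshold parameters are hypothetical; the post does not give the threshold values in use.

```python
from typing import List


def window_overrun_minutes(scheduled_minutes: int, actual_minutes: int) -> int:
    """Scheduled-window-vs-actual-window delta; positive means the pass ran long."""
    return actual_minutes - scheduled_minutes


def queue_depth_growth(previous_depth: int, current_depth: int) -> int:
    """Quarter-over-quarter change in the deferred-candidate queue depth."""
    return current_depth - previous_depth


def review_pass_alerts(scheduled_minutes: int, actual_minutes: int,
                       previous_depth: int, current_depth: int,
                       overrun_threshold_minutes: int,
                       growth_threshold: int) -> List[str]:
    """Return the names of the alerts that fire for this quarter."""
    alerts = []
    if window_overrun_minutes(scheduled_minutes, actual_minutes) > overrun_threshold_minutes:
        alerts.append("capacity_overrun")
    if queue_depth_growth(previous_depth, current_depth) > growth_threshold:
        alerts.append("deferred_accumulation")
    return alerts
```

A two-hour window that ran four hours gives an overrun of 120 minutes, which would fire the first alert under any threshold below that.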

Conclusion

The taxonomy-aware quarterly review pass is the human-in-the-loop layer that sits on top of the per-corpus taxonomy versioning protocol from blog 198 and the cross-corpus taxonomy alignment layer from blog 199. The migration prioritisation rubric scores each pending migration against four criteria (operational impact, signal strength, propagation risk, retrospective recurrence) with a weighted composite-priority score the engineering manager reviews at the start of each new quarter. The alignment-drift signal fold-in partitions the drift-candidate queue by drift-source attribution into three review tracks (per_corpus, global, both) plus a special both-side-add candidate type for new-category emergence. The trend-pass projection-choice rubric scores the four projection choices against three criteria (interpretability for the audience, projection cost, audit-traceability shape) with audience-specific weights for operator dashboards, engineering-manager rollups, and year-end retrospectives. The operational integration writes back into the per-corpus and cross-corpus protocols' state through three structured channels (per-corpus migration finalisation queue, cross-corpus alignment update queue, projection-choice configuration table) and feeds forward into the next review through three loops (deferred-candidate carry-forward, rejected-candidate re-fire, escalated-candidate annual review).

The next post in this cluster will close the sub-cluster by walking through the annual taxonomy-evolution rollup the platform team runs at the year-end retrospective. The rollup synthesises four quarters of taxonomy migrations across the per-corpus and cross-corpus layers, walks the alignment-drift signal history alongside the migration audit trail, and produces an annual narrative the platform team carries into the next year's planning. The annual rollup is the layer that sits above the quarterly review pass and turns four quarters of structured decisions into a single annual planning input. The post after that will pivot to the cross-deployment alignment layer the production-considerations section of blog 199 flagged, which is the next composition step above the multi-corpus single-deployment shape.

The companion repo's adlc-eval-contracts/quarterly-review/ directory has been updated with the quarterly-review prioritisation table's Postgres DDL, the quarterly-review audit table's DDL, the migration-prioritisation rubric's reference implementation in Python with worked examples for each of the four scoring criteria, the alignment-drift fold-in's reference implementation as a queue-partitioning function with the both-side-add candidate handling, the projection-choice rubric's reference implementation with the audience-specific weight tables, and a small reference implementation of the operational-integration write-back across the three structured channels.


About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-05-10 · Written with AI assistance, reviewed by Toc Am.
