Your phone has more compute power than the servers that trained GPT-2. So why are we still sending every AI request to the cloud?

Edge AI is changing that. In 2026, language models run directly on phones, tablets, laptops, and even embedded devices -- no internet required. Here's how it works and why it matters.

Why Edge AI Matters

Privacy: Your data never leaves your device. Medical questions, financial queries, personal messages -- processed locally, seen by no one.

Latency: No network round-trip. Responses start in milliseconds, not seconds. Critical for real-time applications like voice assistants and AR.

Cost: No API fees. No cloud compute bills. Once the model is on the device, inference is free.

Availability: Works offline. In airplanes, remote areas, or when your cloud provider has an outage.

What's Possible Today

Phones (2026)

Modern smartphones are surprisingly capable AI devices:

- iPhone 16 Pro: 16 GB unified memory, Apple Neural Engine (38 TOPS). Runs a 3B parameter model at ~15 tokens/second

- Samsung Galaxy S26: 12 GB RAM, Snapdragon 8 Gen 4 NPU. Runs Gemma 2B at ~20 tokens/second

- Google Pixel 10: 12 GB RAM, Tensor G5 with dedicated AI core. Runs Gemini Nano natively

These devices comfortably run 1-3B parameter models. With aggressive quantization (Q2-Q4), you can squeeze in a 7B model, though response times slow down.

Laptops (The Sweet Spot)

Apple Silicon Macs have become the default local AI development platform:

- MacBook Air M3 (24 GB): Runs 7B Q4 at 30+ tokens/sec, 13B Q4 at 15 tokens/sec

- MacBook Pro M4 Max (128 GB): Runs 70B Q4 at 20+ tokens/sec

- Framework Laptop (32 GB, Intel/AMD): Runs 7B Q4 at 15-20 tokens/sec via llama.cpp

The Apple MLX framework deserves special mention. It's designed specifically for Apple Silicon's unified memory architecture, delivering 20-40% better performance than generic implementations.

IoT and Embedded

The frontier of edge AI:

- Raspberry Pi 5 (8 GB): Runs TinyLlama 1.1B at ~3 tokens/sec. Slow, but it works

- NVIDIA Jetson Orin Nano: 8 GB GPU memory, runs 3B models at 10+ tokens/sec. Perfect for robotics

- Coral Edge TPU: Specialized for inference, runs small quantized models for classification and simple generation

The Edge AI Stack

Apple Ecosystem: MLX

MLX is Apple's machine learning framework optimized for Apple Silicon. Key advantages:

- Leverages unified memory (no CPU-to-GPU data copying)

- Lazy evaluation for memory efficiency

- NumPy-like API for Python developers

- Growing model ecosystem on Hugging Face

import mlx.core as mx

from mlx_lm import load, generate

model, tokenizer = load("mlx-community/Llama-3.2-3B-Instruct-4bit")

response = generate(model, tokenizer, prompt="Explain edge AI", max_tokens=200)

Cross-Platform: llama.cpp

llama.cpp runs everywhere -- literally. It's written in pure C/C++ with optional acceleration for:

- Apple Metal (Mac/iOS)

- CUDA (NVIDIA GPUs)

- Vulkan (AMD GPUs, Android)

- OpenCL (broader GPU support)

- CPU with SIMD optimizations (AVX2, NEON)

This makes it the go-to choice for cross-platform edge deployment.

Android: MediaPipe LLM

Google's MediaPipe now includes an LLM inference API for Android. It handles model loading, quantization, and hardware acceleration through a simple API:

val llmInference = LlmInference.createFromOptions(context, options)

val response = llmInference.generateResponse("What is edge AI?")

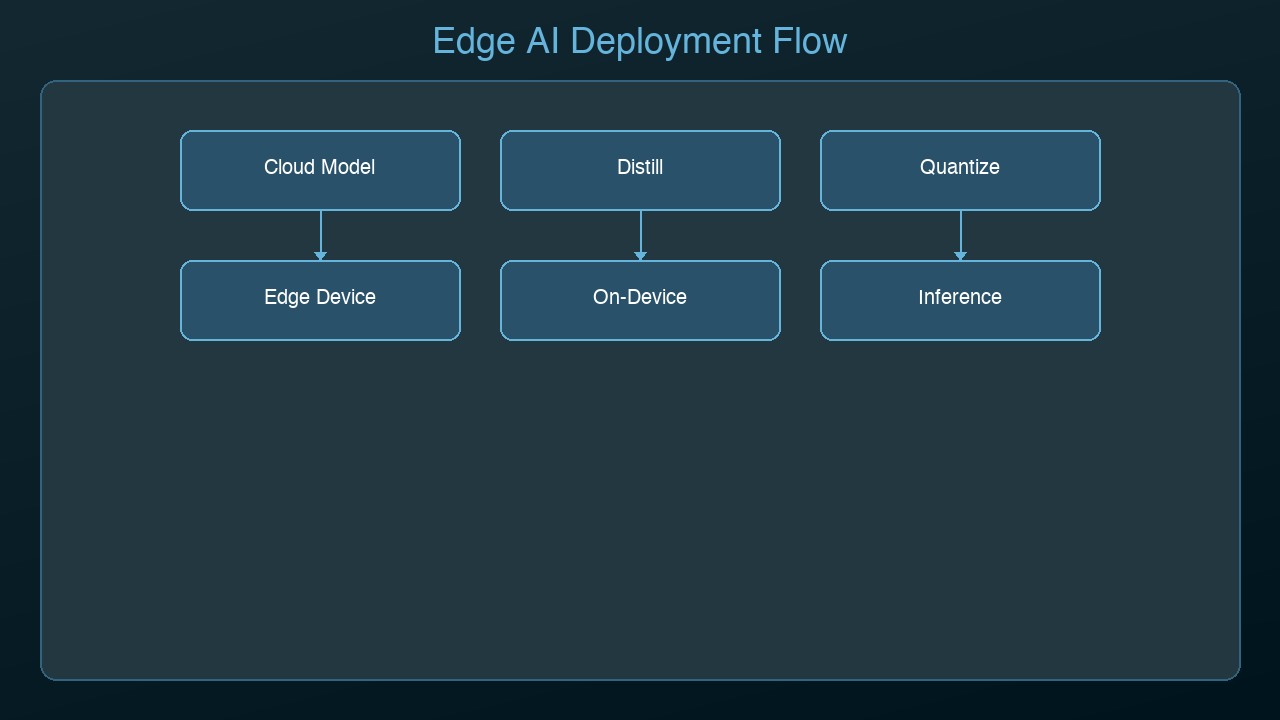

Optimization Techniques for Edge

Running models on constrained devices requires aggressive optimization:

1. Aggressive Quantization

Edge devices benefit most from Q2-Q4 quantization. The quality trade-off is worth it when the alternative is "doesn't fit in memory at all."

2. Knowledge Distillation

Train a small model (1-3B) to mimic a large model (70B). The small model captures 80-90% of the large model's capability at 1/20th the size. This is how Apple Intelligence and Google's on-device models are built.

3. Pruning

Remove unnecessary neurons and connections. Structured pruning can reduce model size by 30-50% with minimal quality loss. Unstructured pruning goes further but requires hardware support.

4. Model Architecture Optimization

Newer architectures designed for edge:

- Gemma 2B: Google's compact model designed for on-device use

- Phi-3 Mini: Microsoft's 3.8B model that punches above its weight

- TinyLlama: 1.1B model trained on 3 trillion tokens -- tiny but capable

5. KV-Cache Compression

On memory-constrained devices, the KV-cache (which grows with context length) is often the bottleneck. Techniques like sliding window attention and grouped-query attention reduce cache size by 4-8x.

Use Cases in Production

Smart Home Assistants: Process voice commands locally. No cloud dependency, instant responses, complete privacy.

Healthcare: Medical devices that analyze patient data on-device. HIPAA compliance is simpler when data never leaves the device.

Automotive: In-car AI for navigation, voice control, and driver assistance. Works in tunnels and dead zones.

Industrial IoT: Predictive maintenance on factory floors. Analyze sensor data locally, alert only when needed.

Education: Offline tutoring apps for students without reliable internet. Especially impactful in developing regions.

The Trade-offs

Edge AI isn't always the right choice:

| Factor | Edge | Cloud |

|--------|------|-------|

| Model Size | 1-7B | Unlimited |

| Response Quality | Good | Best |

| Latency | <100ms | 200ms-2s |

| Privacy | Complete | Depends on provider |

| Cost per Query | Free | $0.001-0.01 |

| Offline Support | Yes | No |

| Updates | Manual | Automatic |

The emerging pattern is hybrid: use edge AI for simple, latency-sensitive, or privacy-critical tasks, and fall back to cloud for complex reasoning that requires larger models.

Getting Started

The fastest path to edge AI:

1. Mac users: Install Ollama, run ollama run llama3.2:3b

2. Mobile developers: Try MediaPipe LLM (Android) or Core ML (iOS)

3. IoT: Start with a Jetson Orin Nano and llama.cpp

4. Web: Use WebLLM to run models directly in the browser via WebGPU

Edge AI isn't a future technology. It's a today technology that's getting better every month. The models are getting smaller, the hardware is getting faster, and the tools are getting simpler.

*Next: Putting it all together -- a complete guide to choosing your AI deployment strategy from development to production.*

Sources & References:

1. Apple — "Core ML Documentation" — https://developer.apple.com/documentation/coreml

2. Google — "MediaPipe Solutions" — https://ai.google.dev/edge/mediapipe/solutions/guide

3. ONNX Runtime — "Mobile and Edge Deployment" — https://onnxruntime.ai/

Enjoyed this post? Follow AmtocSoft for AI tutorials from beginner to professional.

☕ Buy Me a Coffee | 🔔 YouTube | 💼 LinkedIn | 🐦 X/Twitter

No comments:

Post a Comment