Running an LLM on your laptop is one thing. Serving it to 10,000 concurrent users with sub-second latency is an entirely different challenge. This is where inference servers come in.

Three frameworks dominate production LLM serving in 2026: vLLM, Text Generation Inference (TGI), and Triton Inference Server. Each has a distinct philosophy and sweet spot.

Why You Need an Inference Server

Running ollama run llama3.2 handles one user at a time. Production serving requires:

- Concurrent requests: Handle hundreds of users simultaneously

- Continuous batching: Group requests dynamically for GPU efficiency

- KV-cache management: Efficiently reuse computation across tokens

- Streaming: Return tokens as they're generated, not after completion

- Monitoring: Track latency, throughput, error rates, and GPU utilization

- Fault tolerance: Gracefully handle OOM errors, timeouts, and model failures

An inference server handles all of this so you can focus on your application logic.

vLLM: The Developer Favorite

Best for: Most teams starting with LLM serving

vLLM (Virtual LLM) introduced PagedAttention -- a memory management technique inspired by operating system virtual memory. It dynamically allocates GPU memory for the KV-cache in non-contiguous blocks, eliminating the massive memory waste that plagued earlier serving solutions.

Key features:

- PagedAttention for near-optimal memory utilization

- Continuous batching with preemption support

- Speculative decoding built-in

- OpenAI-compatible API (drop-in replacement)

- Quantization support (GPTQ, AWQ, FP8)

- Multi-GPU tensor parallelism

- Prefix caching for shared system prompts

Getting started:

from vllm import LLM, SamplingParams

llm = LLM(model="meta-llama/Llama-3.2-7B-Instruct")

outputs = llm.generate(["Explain quantization"],

SamplingParams(temperature=0.7, max_tokens=256))

As a server:

vllm serve meta-llama/Llama-3.2-7B-Instruct --port 8000

Then call it like OpenAI:

curl http://localhost:8000/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{"model": "meta-llama/Llama-3.2-7B-Instruct",

"messages": [{"role": "user", "content": "Hello"}]}'

Throughput: On an A100 80GB, vLLM can serve Llama 3.2 7B at ~2,500 tokens/second with 32 concurrent users.

TGI: The Production-Hardened Option

Best for: Enterprise deployments, Hugging Face ecosystem users

Text Generation Inference is Hugging Face's production serving solution. Written in Rust for the networking layer and Python for the model layer, it prioritizes reliability and observability.

Key features:

- Flash Attention 2 integration

- Continuous batching with dynamic sizing

- Watermark detection (identifying AI-generated text)

- Prometheus metrics built-in

- Docker-first deployment

- Safetensors support (secure model loading)

- Grammar-constrained generation (force JSON output, etc.)

Getting started:

docker run --gpus all \ -p 8080:80 \ ghcr.io/huggingface/text-generation-inference \ --model-id meta-llama/Llama-3.2-7B-Instruct

Throughput: Comparable to vLLM on standard benchmarks, with slightly better tail latency in some configurations.

Triton: The Enterprise Swiss Army Knife

Best for: Multi-model serving, NVIDIA ecosystem, complex ML pipelines

NVIDIA's Triton Inference Server isn't LLM-specific -- it serves any ML model (computer vision, NLP, recommendation systems). For LLMs, it integrates with TensorRT-LLM, NVIDIA's highly optimized inference backend.

Key features:

- Serves multiple models simultaneously

- Supports multiple frameworks (PyTorch, TensorFlow, ONNX, TensorRT)

- Model ensemble pipelines (chain preprocessing + model + postprocessing)

- Dynamic batching across model types

- Multi-GPU, multi-node scaling

- A/B testing and canary deployments

- Detailed performance analytics

When Triton shines: You're running a Llama model for chat, a CLIP model for image understanding, and a recommendation model for content ranking -- all on the same GPU cluster. Triton manages all three with unified monitoring and resource allocation.

Getting started:

docker run --gpus all \ -p 8000:8000 -p 8001:8001 -p 8002:8002 \ nvcr.io/nvidia/tritonserver:latest \ tritonserver --model-repository=/models

SGLang: The Rising Challenger

Best for: Maximum throughput, prefix-heavy workloads (RAG, multi-turn chat)

SGLang has emerged as the performance leader in 2026. Its RadixAttention mechanism gives it a 29% throughput edge over vLLM on H100 benchmarks (16,215 tok/s vs 12,553 tok/s). On prefix-heavy workloads like RAG and multi-turn chat, gains reach up to 6.4x.

That 29% throughput gap translates to roughly $15,000/month in GPU savings at 1 million requests/day.

Key features:

- RadixAttention for automatic prefix caching

- Constrained decoding with jump-ahead

- Multi-modal model support

- OpenAI-compatible API

SGLang is particularly strong when many requests share common prefixes (system prompts, few-shot examples, RAG context) -- which describes most production LLM workloads.

Head-to-Head Comparison

| Feature | vLLM | SGLang | TGI | Triton + TRT-LLM |

|---------|------|--------|-----|-------------------|

| Setup Complexity | Low | Low | Low | High |

| LLM Throughput | Excellent | Best | Good | Excellent (with TRT-LLM) |

| Multi-model Serving | No | No | No | Yes |

| OpenAI API Compatible | Yes | Yes | Partial | Via adapter |

| Prefix Caching | Good | Best (RadixAttention) | Basic | Good |

| Quantization Support | GPTQ, AWQ, FP8 | GPTQ, AWQ, FP8 | GPTQ, AWQ | FP8, INT8, INT4 |

| Speculative Decoding | Yes | Yes | Yes | Yes |

| Community Size | Largest | Growing fast | Declining | Enterprise |

| Best Hardware | Any GPU | Any GPU | Any GPU | NVIDIA optimized |

Decision Framework

Choose vLLM if:

- You want the fastest path to production

- Your team values simplicity and Python-native tooling

- You need an OpenAI-compatible API

- You're serving 1-3 LLM models

Choose SGLang if:

- Maximum throughput is your priority

- Your workload is prefix-heavy (RAG, multi-turn chat, shared system prompts)

- You want the best performance per GPU dollar

- You're comfortable with a newer but rapidly maturing project

Choose TGI if:

- You're already running it in production (note: Hugging Face now recommends vLLM or SGLang for new deployments)

- You need grammar-constrained generation

- Docker-first deployment fits your infrastructure

Choose Triton if:

- You serve multiple model types (not just LLMs)

- You're on NVIDIA hardware and want maximum performance

- You need model ensembles or complex inference pipelines

- Your organization already uses NVIDIA's ML stack

Cost Optimization Tips

Regardless of which server you choose:

1. Right-size your GPU: A 7B Q4 model doesn't need an A100. An L4 or T4 is sufficient

2. Enable continuous batching: This alone can 3-5x your throughput

3. Use prefix caching: If all requests share a system prompt, cache it

4. Quantize aggressively: Q4 gives you 4x more users per GPU

5. Monitor GPU utilization: If it's below 70%, you're wasting money

6. Consider serverless: For bursty traffic, pay-per-token beats always-on GPUs

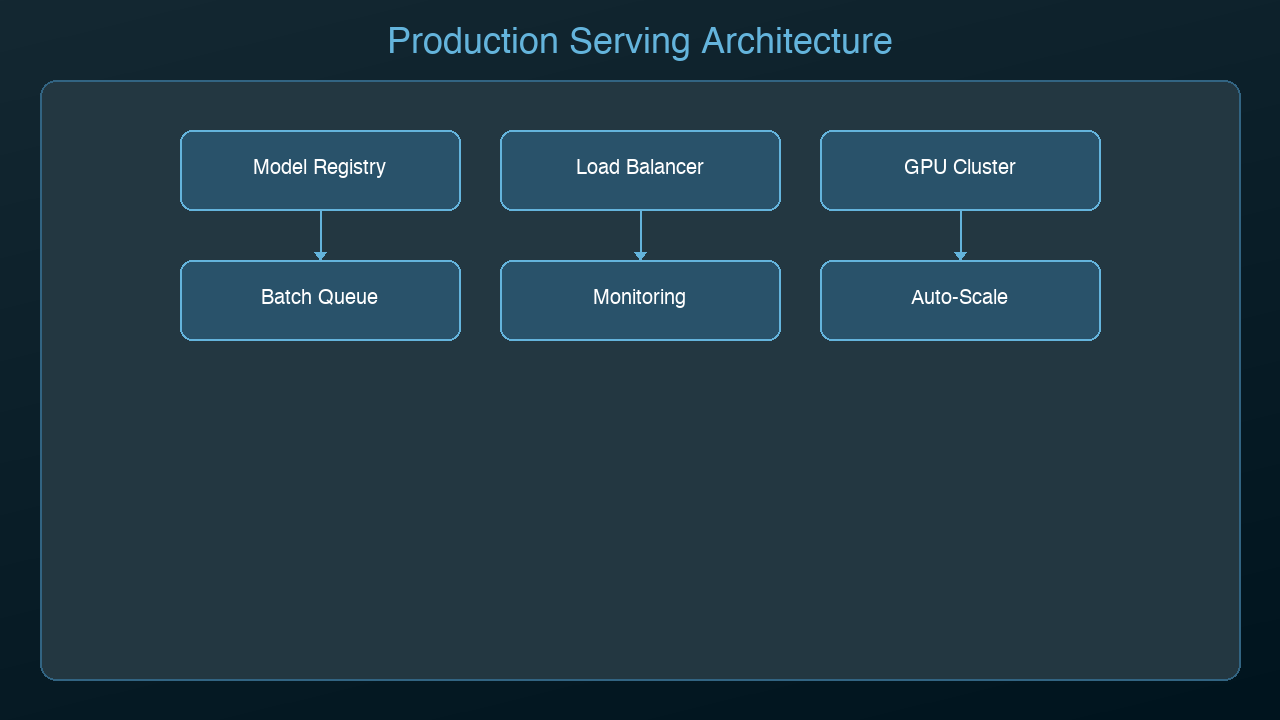

The Emerging Stack

The production AI serving landscape is converging on a standard pattern:

Load Balancer (nginx/Envoy)

-> Inference Server (vLLM/TGI/Triton)

-> Model (quantized, cached)

-> Monitoring (Prometheus/Grafana)

Most teams in 2026 start with vLLM for its simplicity, graduate to TGI for enterprise features, and only move to Triton when they need multi-model orchestration.

Pick the simplest option that meets your requirements. You can always migrate later -- the OpenAI-compatible API layer makes switching relatively painless.

*Next: Edge AI -- running models on phones, IoT devices, and anywhere with no internet connection.*

Sources & References:

1. vLLM — "Official Documentation" — https://docs.vllm.ai/

2. Hugging Face — "Text Generation Inference" — https://huggingface.co/docs/text-generation-inference

3. NVIDIA — "Triton Inference Server" — https://developer.nvidia.com/triton-inference-server

Enjoyed this post? Follow AmtocSoft for AI tutorials from beginner to professional.

☕ Buy Me a Coffee | 🔔 YouTube | 💼 LinkedIn | 🐦 X/Twitter

No comments:

Post a Comment