Level: Advanced | Topic: Fine-Tuning | Read Time: 8 min

Traditional fine-tuning updates every parameter in a model. For a 7-billion parameter model, that means storing and updating 7 billion floating-point numbers. This requires multiple high-end GPUs and significant memory.

LoRA changed that. Low-Rank Adaptation is a technique that makes fine-tuning accessible to anyone with a single consumer GPU. QLoRA takes it further by adding quantization to the mix.

This article explains both techniques and shows why they have become the default approach for fine-tuning open-source models.

The Problem with Full Fine-Tuning

A 7B parameter model in float16 requires approximately 14 GB of GPU memory just to store the weights (7 billion parameters x 2 bytes). During training, you also need memory for gradients and optimizer states, which at least triples the requirement to 42+ GB, and pushes it higher still when an optimizer such as Adam keeps its states in float32. That exceeds the capacity of most consumer GPUs.
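
The arithmetic behind these figures can be checked with a few lines of Python. This is a rough sketch rather than a profiler: the function name is made up for illustration, and it assumes that gradients and optimizer state each cost as much as the float16 weights, which is the simplification that produces the "triples" figure. Activation memory is ignored.

```python
def full_finetune_memory_gb(num_params, bytes_per_param=2):
    """Rough lower bound on full fine-tuning memory, in GB (1 GB = 1e9 bytes).

    Assumption (illustrative only): gradients and optimizer state each take
    as much memory as the weights. Activations are not counted.
    """
    weights = num_params * bytes_per_param / 1e9
    gradients = weights
    optimizer_state = weights
    return {
        "weights": weights,
        "gradients": gradients,
        "optimizer_state": optimizer_state,
        "total": weights + gradients + optimizer_state,
    }


estimate = full_finetune_memory_gb(7e9)
print(estimate)
```

For a 7B model this gives 14 GB of weights and a 42 GB total, matching the numbers in the text.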

For a 70B parameter model, full fine-tuning requires a cluster of A100 GPUs. For most developers and organizations, this is prohibitively expensive.

LoRA: The Key Insight

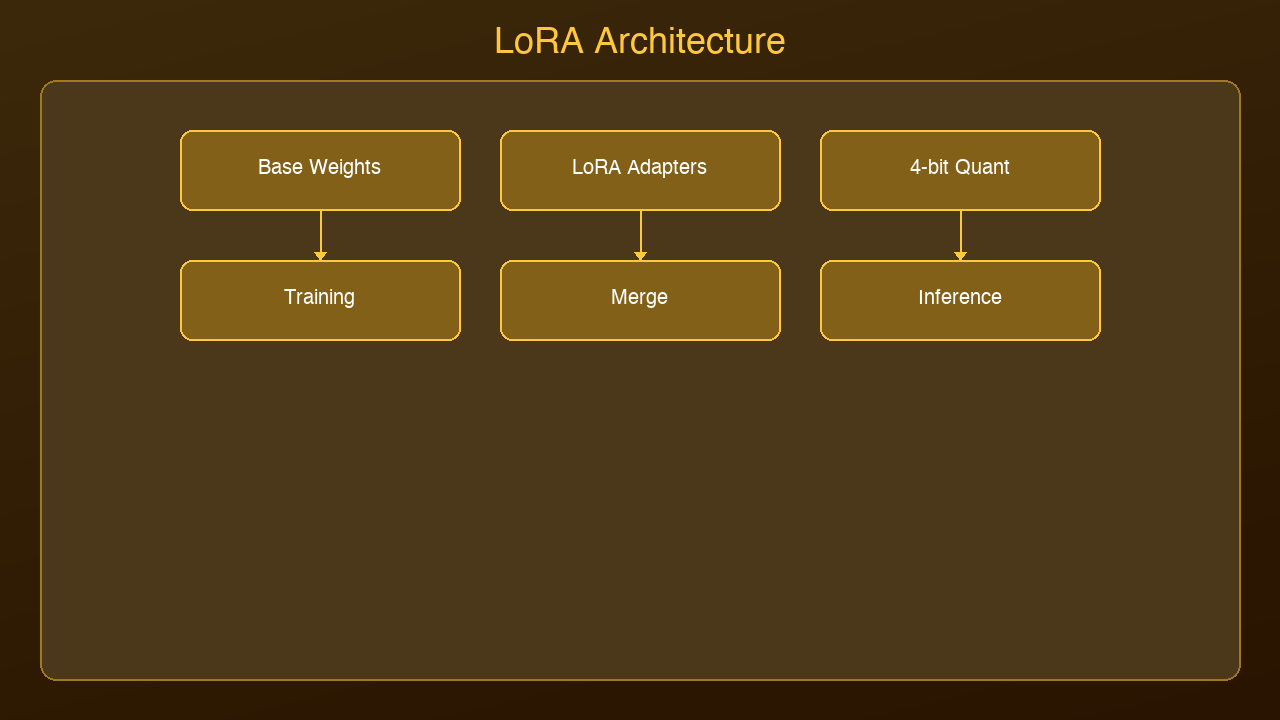

LoRA is based on a simple but powerful observation: when you fine-tune a large language model, the weight updates tend to be low-rank. In other words, the changes can be approximated by much smaller matrices.

Instead of updating the full weight matrix W (which might be 4096 x 4096), LoRA freezes the original weights and trains two small matrices A and B:

- A: 4096 x r (where r is typically 8, 16, or 32)

- B: r x 4096

The effective update is the product A x B, which has the same dimensions as W but is parameterized by far fewer numbers. For rank r=16, you are training 131,072 parameters instead of 16,777,216. That is a 128x reduction.
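
To make the shapes and the parameter count concrete, here is a small pure-Python sketch. The helper names (`matmul`, `lora_param_count`) and the toy dimensions are chosen for illustration. The matrix naming follows this article (A is d x r, B is r x d), and one factor starts at zero so that the update is initially zero, as in the LoRA paper.

```python
import random


def matmul(x, y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def lora_param_count(d_in, d_out, r):
    """Trainable parameters in A (d_in x r) plus B (r x d_out)."""
    return d_in * r + r * d_out


# Parameter count for the 4096 x 4096 example with rank 16.
full = 4096 * 4096
lora = lora_param_count(4096, 4096, 16)
print(full, lora, full // lora)  # 16777216 131072 128

# Toy demonstration (d=6, r=2) that A x B has the same shape as W.
d, r = 6, 2
rng = random.Random(0)
W = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(d)]      # frozen weights
A = [[rng.gauss(0, 0.01) for _ in range(r)] for _ in range(d)]   # trainable
B = [[0.0] * d for _ in range(r)]                                # trainable, zero at start

delta = matmul(A, B)  # d x d update built from 2 * d * r numbers
W_eff = [[w + dw for w, dw in zip(w_row, d_row)] for w_row, d_row in zip(W, delta)]
```

Because B starts at zero, `W_eff` equals `W` before any training step, so the adapted model begins with exactly the behavior of the base model.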

What This Means in Practice

| Metric | Full Fine-Tuning | LoRA (r=16) |
|--------|-----------------|-------------|
| Trainable parameters | 7B (100%) | ~55M (0.8%) |
| GPU memory required (7B) | 42+ GB | 16+ GB (14 GB of frozen float16 weights plus adapter training overhead) |
| Training time (7B) | Hours on A100 cluster | 30-60 min on single GPU |
| Storage per fine-tuned model | 14 GB | 50-200 MB |
| Quality | Baseline | 95-99% of full fine-tuning |

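The quality trade-off is usually small. The original LoRA paper (Hu et al., 2021) reports quality on par with or better than full fine-tuning on the models it evaluated (RoBERTa, DeBERTa, GPT-2, and GPT-3), although the gap depends on the task, the rank, and which layers are adapted.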
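
The parameter and storage rows of the table follow from simple arithmetic. The sketch below takes the table's ~55M trainable parameters as given and assumes the adapter is saved in float16 (2 bytes per parameter); the function name is hypothetical.

```python
def adapter_size_mb(trainable_params, bytes_per_param=2):
    """Size of the saved adapter weights in MB (1 MB = 1e6 bytes)."""
    return trainable_params * bytes_per_param / 1e6


trainable = 55e6   # figure from the table above
base = 7e9         # 7B base model

fraction_percent = trainable / base * 100
size_mb = adapter_size_mb(trainable)
print(f"{fraction_percent:.1f}% of parameters, about {size_mb:.0f} MB on disk")
```

The result, roughly 110 MB, sits inside the 50-200 MB range quoted for adapter storage.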

QLoRA: Adding Quantization

QLoRA combines LoRA with 4-bit quantization of the base model. Instead of loading the frozen weights in float16 (2 bytes per parameter), QLoRA loads them in 4-bit precision (0.5 bytes per parameter).

This cuts the memory needed for the base weights by 4x. A 7B model that requires 14 GB in float16 needs only 3.5 GB in 4-bit quantization. Combined with LoRA's small adapter matrices, you can fine-tune a 7B model on a GPU with about 6 GB of VRAM, provided the batch size and sequence length are kept small.
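
The same calculation extends to other model sizes. The sketch below uses a hypothetical helper, `weight_memory_gb`, and counts only the stored weights; it ignores the small overhead of the quantization constants as well as adapters and activations.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory for the stored weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


for name, params in [("7B", 7e9), ("13B", 13e9), ("70B", 70e9)]:
    fp16 = weight_memory_gb(params, 16)
    four_bit = weight_memory_gb(params, 4)
    print(f"{name}: float16 {fp16:.1f} GB, 4-bit {four_bit:.1f} GB")
```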

The key innovation is a 4-bit data type called NormalFloat4 (NF4), which the QLoRA authors describe as information-theoretically optimal for normally distributed weights. Combined with double quantization (quantizing the quantization constants themselves), it achieves quality nearly identical to 16-bit LoRA.
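
The idea behind a normal-distribution-aware 4-bit type can be illustrated in plain Python. This is a simplified stand-in, not the real NF4 table: it places 16 levels at evenly spaced quantiles of a standard normal and rescales them to [-1, 1], whereas the actual NF4 codebook is built asymmetrically so that it contains an exact zero. Double quantization is not shown, and the block of 8 weights is only for readability (the function names and sample values are made up).

```python
from statistics import NormalDist


def normal_quantile_levels(bits=4):
    """Codebook with 2**bits levels at evenly spaced standard-normal
    quantiles, rescaled to [-1, 1]. A simplified stand-in for NF4."""
    n = 2 ** bits
    normal = NormalDist()
    # Midpoint probabilities avoid the infinite quantiles at 0 and 1.
    raw = [normal.inv_cdf((i + 0.5) / n) for i in range(n)]
    largest = max(abs(v) for v in raw)
    return [v / largest for v in raw]


def quantize_block(weights, levels):
    """Absmax-scale one block, then store the index of the nearest level."""
    scale = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero
    codes = [
        min(range(len(levels)), key=lambda i: abs(levels[i] - w / scale))
        for w in weights
    ]
    return codes, scale


def dequantize_block(codes, scale, levels):
    """Map 4-bit codes back to approximate weights."""
    return [levels[c] * scale for c in codes]


levels = normal_quantile_levels()
block = [0.02, -0.15, 0.07, 0.31, -0.04, 0.11, -0.27, 0.0]
codes, scale = quantize_block(block, levels)
restored = dequantize_block(codes, scale, levels)
print(codes)
print([round(v, 3) for v in restored])
```

Each weight is stored as a 4-bit index plus one shared scale per block. Levels are packed more densely near zero, where most normally distributed weights fall, which is why this kind of codebook loses less information than evenly spaced levels.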

When to Use LoRA vs QLoRA

Use LoRA when: Your GPU can hold the base model in 16-bit precision (about 2 bytes per parameter, so 16+ GB of VRAM for a 7B model) and you want the highest quality fine-tuning with minimal trade-offs.

Use QLoRA when: You have a consumer GPU (6-8 GB VRAM) and need to fine-tune within memory constraints, or you are fine-tuning larger models (13B, 70B) on limited hardware.

Use full fine-tuning when: You have enterprise GPU resources and need absolute maximum quality, or you are training a model from scratch.

Tools for LoRA Fine-Tuning

The ecosystem has matured rapidly:

- Unsloth: Optimized LoRA training; the project reports 2-5x speedups over standard implementations

- Hugging Face PEFT: The most widely used implementation, integrates with Hugging Face Transformers models

- Axolotl: Simplified config-driven fine-tuning with LoRA/QLoRA support

- LLaMA-Factory: GUI-based fine-tuning with dozens of model templates
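
As an illustration of how these pieces fit together, here is a hedged sketch of a QLoRA setup with Hugging Face PEFT, Transformers, and bitsandbytes. The function name `load_qlora_model` is hypothetical, the model identifier is left to the caller, and the bfloat16 compute dtype is an assumption about the GPU. Running it requires the libraries and a CUDA GPU, so the imports sit inside the function.

```python
# Settings named in the QLoRA section: 4-bit loading, NF4, double quantization.
QLORA_SETTINGS = {
    "load_in_4bit": True,
    "bnb_4bit_quant_type": "nf4",
    "bnb_4bit_use_double_quant": True,
}


def load_qlora_model(model_id):
    """Load `model_id` in 4-bit and attach rank-16 LoRA adapters.

    Requires torch, transformers, peft, bitsandbytes, and a CUDA GPU.
    Imports are local so the settings above can be read without them.
    """
    import torch
    from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig

    quant_config = BitsAndBytesConfig(
        bnb_4bit_compute_dtype=torch.bfloat16,  # assumption: GPU supports bf16
        **QLORA_SETTINGS,
    )
    model = AutoModelForCausalLM.from_pretrained(
        model_id, quantization_config=quant_config, device_map="auto"
    )
    model = prepare_model_for_kbit_training(model)

    lora_config = LoraConfig(r=16, target_modules="all-linear", task_type="CAUSAL_LM")
    model = get_peft_model(model, lora_config)
    model.print_trainable_parameters()  # reports trainable vs. total parameters
    return model
```

Dropping the `quantization_config` argument and the `prepare_model_for_kbit_training` call turns this into a plain 16-bit LoRA setup.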

Practical Tips

1. Start with rank r=16. Increase to 32 or 64 only if quality is insufficient.

2. Apply LoRA to all linear layers, not just attention. The QLoRA paper (Dettmers et al., 2023) found that adapters on all linear layers were needed to match full fine-tuning quality.

3. Use a learning rate of 1e-4 to 3e-4. LoRA generally works best with a higher learning rate than full fine-tuning, so values tuned for full fine-tuning are often too low.

4. Train for 1-3 epochs. More epochs risk overfitting on small datasets.

5. Use at least 1,000 high-quality training examples. Quality matters more than quantity.
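
The tips above can be collected into a starting configuration. This is a sketch under stated assumptions, not a tuned recipe: the `RECIPE` dictionary and helper names are hypothetical, and `lora_alpha`, dropout, batch size, and gradient accumulation are placeholder values that do not come from this article. `LoraConfig` and `TrainingArguments` are the PEFT and Transformers classes, imported inside the function so the values can be inspected without those libraries installed.

```python
# Values from the tips above; see the comments for anything that is assumed.
RECIPE = {
    "rank": 16,                       # tip 1: start at r=16
    "target_modules": "all-linear",   # tip 2: PEFT shorthand for every linear layer
    "learning_rate": 2e-4,            # tip 3: within 1e-4 to 3e-4
    "num_train_epochs": 2,            # tip 4: 1 to 3 epochs
    "min_examples": 1000,             # tip 5: dataset size floor
}


def build_configs(output_dir="lora-out"):
    """Return (LoraConfig, TrainingArguments) built from RECIPE."""
    from peft import LoraConfig
    from transformers import TrainingArguments

    lora_config = LoraConfig(
        r=RECIPE["rank"],
        lora_alpha=2 * RECIPE["rank"],   # assumption: a common starting point
        lora_dropout=0.05,               # assumption
        target_modules=RECIPE["target_modules"],
        task_type="CAUSAL_LM",
    )
    training_args = TrainingArguments(
        output_dir=output_dir,
        learning_rate=RECIPE["learning_rate"],
        num_train_epochs=RECIPE["num_train_epochs"],
        per_device_train_batch_size=4,   # assumption
        gradient_accumulation_steps=4,   # assumption
    )
    return lora_config, training_args


def has_enough_examples(num_examples):
    """Check a dataset size against the floor suggested in tip 5."""
    return num_examples >= RECIPE["min_examples"]
```

If quality falls short, the first knob to turn is `RECIPE["rank"]` (32 or 64), leaving the other values in place so the effect of the change is easy to isolate.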

Sources & References:

1. Hu et al. — "LoRA: Low-Rank Adaptation of Large Language Models" (2021) — https://arxiv.org/abs/2106.09685

2. Dettmers et al. — "QLoRA: Efficient Finetuning of Quantized Language Models" (2023) — https://arxiv.org/abs/2305.14314

3. Hugging Face — "PEFT: Parameter-Efficient Fine-Tuning" — https://huggingface.co/docs/peft

*Published by AmtocSoft | amtocsoft.blogspot.com*

