What if you could make your LLM generate text 2-3x faster without changing the model, without losing any quality, and without buying better hardware?

That's the promise of speculative decoding -- and it actually delivers.

The Speed Problem

LLMs generate text one token at a time. Each token requires a full forward pass through the entire model. For a 70B model, that means:

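- Roughly 70 billion multiply-add operations per token, plus reading all ~140 GB of weights (at 16-bit precision) from memory

- At 30 tokens per second, a 500-word response (roughly 650-700 tokens) takes over 20 seconds

- The GPU's compute units sit idle for much of this time, waiting for weights to arrive from memory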

The bottleneck isn't compute -- it's memory bandwidth. The GPU can do math faster than it can load model weights from memory. This is called being "memory-bound."
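A back-of-the-envelope sketch of why decoding is memory-bound. The hardware figures are hypothetical round numbers (3 TB/s of memory bandwidth and 1 PFLOP/s of 16-bit compute, treated as the aggregate of however many GPUs hold the model), and the estimate ignores KV-cache reads and other overheads. At batch size 1, every weight is read once per token and used in one multiply-add (2 FLOPs).

```python
from typing import Tuple

def decode_step_times(n_params: float, bytes_per_param: float,
                      mem_bandwidth: float, peak_flops: float) -> Tuple[float, float]:
    """Rough lower bounds (seconds) for one batch-size-1 decode step.

    Assumes each weight is read once and used in one multiply-add (2 FLOPs);
    ignores KV-cache traffic, activations and kernel overheads.
    """
    t_memory = n_params * bytes_per_param / mem_bandwidth
    t_compute = 2 * n_params / peak_flops
    return t_memory, t_compute

# Hypothetical numbers: 70B params at 2 bytes each, 3 TB/s, 1 PFLOP/s.
t_mem, t_cmp = decode_step_times(70e9, 2, 3e12, 1e15)
print(f"memory: {t_mem * 1e3:.1f} ms, compute: {t_cmp * 1e3:.2f} ms, ratio: {t_mem / t_cmp:.0f}x")
# memory: 46.7 ms, compute: 0.14 ms, ratio: 333x
```

Under these assumptions, loading the weights takes hundreds of times longer than the arithmetic. The same arithmetic hints at why checking several tokens at once is cheap: the weights are read once per pass no matter how many positions use them.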

The Key Insight

Here's the trick: most tokens are predictable.

When the model generates "The capital of France is", the next token is almost certainly "Paris". You don't need a 70B model to predict that. A tiny 1B model could get it right.

Speculative decoding exploits this by using a small, fast "draft" model to predict several tokens ahead, then checking all of those predictions with the large model in a single forward pass.

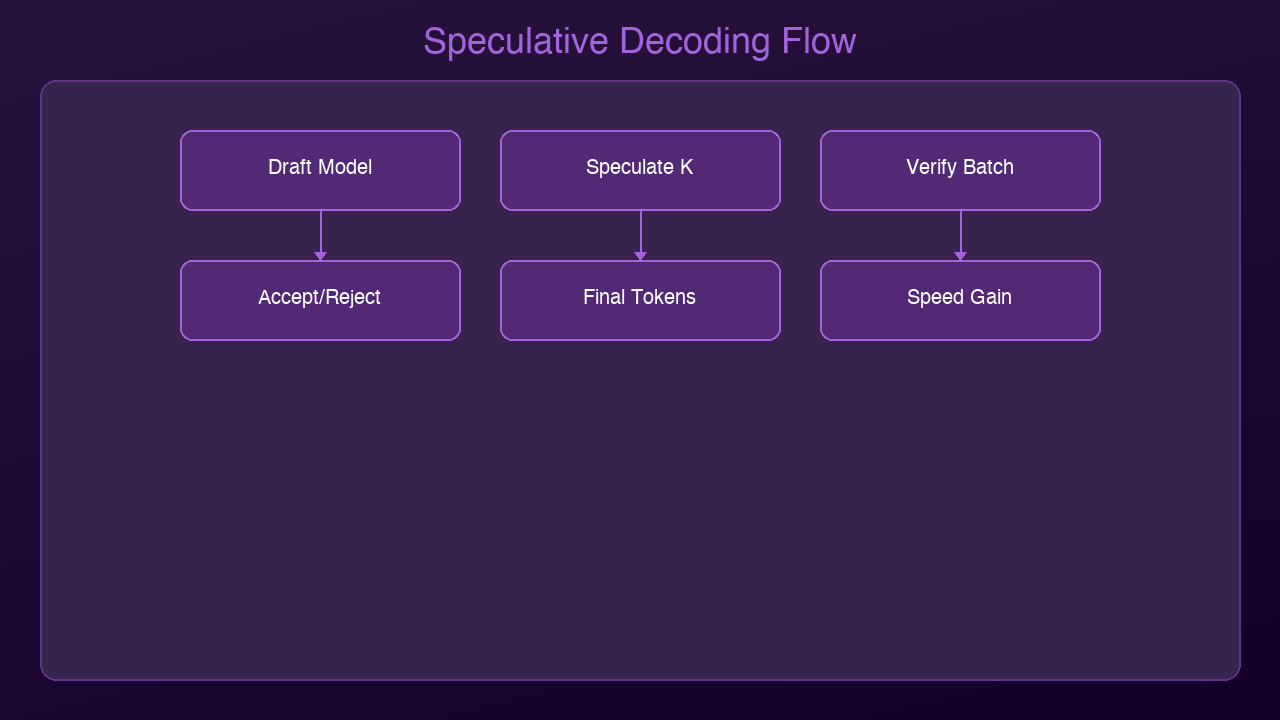

How It Works

Step 1: Draft Phase

A small model (the "draft model") generates K tokens quickly. Let's say K=5:

- Given the prefix "The capital", it proposes "of" -> "France" -> "is" -> "Paris" -> "."

The K draft steps still run one after another, but each one is fast because the draft model is tiny.
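A minimal sketch of the draft phase, assuming a "model" is just a Python function that maps a token list to its greedy next token. That stand-in, the `BIGRAMS` table and `toy_draft` are hypothetical, chosen only to make the loop runnable.

```python
from typing import Callable, List

# Assumption: a "model" is any function from a token list to its greedy next token.
NextToken = Callable[[List[str]], str]

def draft_tokens(draft_model: NextToken, context: List[str], k: int) -> List[str]:
    """Propose k tokens by running the small draft model autoregressively."""
    proposal: List[str] = []
    for _ in range(k):
        # Each step conditions on the previous draft tokens, so this loop is
        # sequential; it is fast only because each draft call is cheap.
        proposal.append(draft_model(context + proposal))
    return proposal

# Hypothetical toy draft model: a bigram lookup table.
BIGRAMS = {"The": "capital", "capital": "of", "of": "France",
           "France": "is", "is": "Paris", "Paris": "."}

def toy_draft(context: List[str]) -> str:
    return BIGRAMS.get(context[-1], "<unk>")

print(draft_tokens(toy_draft, ["The", "capital"], k=5))
# ['of', 'France', 'is', 'Paris', '.']
```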

Step 2: Verification Phase

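The large model processes all K draft tokens in a single forward pass (parallel verification). Because a causal transformer outputs a next-token prediction at every position, that one pass yields K+1 predictions: one to check each draft token against what the large model would have generated, plus one for the position after the last draft token.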
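A sketch of what the verification pass returns, again treating a model as a function from a token list to its greedy next token. `verify_all_positions`, the `TARGET` table and `toy_target` are hypothetical names for illustration; a real transformer computes all K+1 predictions in one forward pass thanks to causal masking, whereas the loop here only reproduces the outputs.

```python
from typing import Callable, List

NextToken = Callable[[List[str]], str]

def verify_all_positions(target_model: NextToken, context: List[str],
                         draft: List[str]) -> List[str]:
    """Target model's greedy prediction after each prefix of context + draft.

    Returns len(draft) + 1 tokens: one to check each draft token, plus the
    token the target would emit after the whole draft.
    """
    seq = context + draft
    # A real transformer gets all of these from ONE forward pass over `seq`,
    # since causal attention yields a next-token distribution at every
    # position. This loop only reproduces the outputs, not the cost.
    return [target_model(seq[:i]) for i in range(len(context), len(seq) + 1)]

# Hypothetical toy target model: a bigram table that disagrees with the
# example draft about what follows "Paris".
TARGET = {"The": "capital", "capital": "of", "of": "France", "France": "is",
          "is": "Paris", "Paris": ",", ".": "<eos>"}

def toy_target(context: List[str]) -> str:
    return TARGET.get(context[-1], "<unk>")

print(verify_all_positions(toy_target, ["The", "capital"],
                           ["of", "France", "is", "Paris", "."]))
# ['of', 'France', 'is', 'Paris', ',', '<eos>']
```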

Step 3: Accept or Reject

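- If the large model agrees with a draft token: accept it (free speedup!)

- If it disagrees: reject that token and all subsequent ones, and use the large model's own prediction at that position instead

- If all K draft tokens are accepted, the large model's prediction for the next position comes along as a free bonus token

This describes greedy decoding. With sampling, "agrees" becomes a randomized accept test that still preserves the large model's output distribution.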
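The greedy accept/reject rule can be written as a pure function over two lists: the K draft tokens and the large model's K+1 predictions. This is a sketch; the name `accept_greedy` is hypothetical.

```python
from typing import List, Tuple

def accept_greedy(draft: List[str], target_preds: List[str]) -> Tuple[List[str], int]:
    """Greedy speculative-decoding acceptance rule.

    draft:        the K tokens proposed by the draft model
    target_preds: the target model's prediction at each of the K+1 positions

    Returns (tokens to append, number of draft tokens accepted).
    """
    if len(target_preds) != len(draft) + 1:
        raise ValueError("need exactly one more target prediction than draft tokens")
    accepted = 0
    for proposed, expected in zip(draft, target_preds):
        if proposed != expected:
            break  # first mismatch: discard this token and everything after it
        accepted += 1
    # Always append one token from the target: its correction at the first
    # mismatch, or a bonus token if every draft token was accepted.
    return draft[:accepted] + [target_preds[accepted]], accepted

print(accept_greedy(["of", "France", "is", "Paris", "."],
                    ["of", "France", "is", "Paris", ",", "<eos>"]))
# (['of', 'France', 'is', 'Paris', ','], 4)
```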

Step 4: Repeat

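Append the accepted tokens plus the large model's own token, then start a new draft from the end of the sequence. Every iteration yields at least one new token per large-model pass, and at most K+1.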
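Putting the four steps together: a minimal, self-contained sketch of greedy speculative decoding. The toy models (`TARGET_TABLE`, `DRAFT_TABLE`, `toy_target`, `toy_draft`) are hypothetical bigram lookups, and the draft is deliberately wrong about what follows "is". The check at the end is the property the technique promises: the output equals plain greedy decoding with the target model.

```python
from typing import Callable, Dict, List

NextToken = Callable[[List[str]], str]

def greedy_generate(target: NextToken, prompt: List[str], max_new: int) -> List[str]:
    """Baseline: one target-model call per generated token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(target(out))
    return out

def speculative_generate(target: NextToken, draft: NextToken, prompt: List[str],
                         max_new: int, k: int = 5) -> List[str]:
    """Greedy speculative decoding; returns the same tokens as greedy_generate."""
    out = list(prompt)
    while len(out) - len(prompt) < max_new:
        # Step 1: the draft model proposes k tokens, one after another.
        proposal: List[str] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # Step 2: target predictions at all k+1 positions (a single forward
        # pass in a real transformer; emulated with a loop here).
        seq = out + proposal
        preds = [target(seq[:i]) for i in range(len(out), len(seq) + 1)]
        # Step 3: keep the longest prefix the target agrees with, then add the
        # target's own token (a correction, or a bonus if all k were accepted).
        n = 0
        while n < k and proposal[n] == preds[n]:
            n += 1
        out += proposal[:n] + [preds[n]]
        # Step 4: repeat; the next draft starts from the end of `out`.
    # The last iteration may overshoot max_new, so trim.
    return out[: len(prompt) + max_new]

# Hypothetical toy models: bigram lookups; the draft predicts "Lyon" after "is".
TARGET_TABLE: Dict[str, str] = {"The": "capital", "capital": "of", "of": "France",
                                "France": "is", "is": "Paris", "Paris": ".", ".": "The"}
DRAFT_TABLE: Dict[str, str] = {**TARGET_TABLE, "is": "Lyon"}

def toy_target(ctx: List[str]) -> str:
    return TARGET_TABLE.get(ctx[-1], ".")

def toy_draft(ctx: List[str]) -> str:
    return DRAFT_TABLE.get(ctx[-1], ".")

fast = speculative_generate(toy_target, toy_draft, ["The"], max_new=10)
slow = greedy_generate(toy_target, ["The"], max_new=10)
print(fast == slow)  # True
```

The draft model only decides how many tokens each large-model pass can confirm; every token that reaches `out` is one the target itself predicted.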

Why This Works

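The magic is in the verification step. Generating with the large model is inherently sequential (autoregressive): each token depends on the one before it. Checking a proposed sequence is not: all K draft tokens can be scored in a single pass. And because decoding is memory-bound, a pass over K+1 positions costs little more than a pass over one, since the weights are read from memory once either way.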

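If each draft token is accepted with probability 0.8 (treating acceptances as independent), with K=5:

- Accepted draft tokens: 0.8 + 0.8^2 + ... + 0.8^5, about 2.7 on average, plus the one token the large model always contributes, for ~3.7 tokens per iteration

- Cost: 5 draft-model passes + 1 large-model pass; if a draft pass costs ~10% of a large pass, that's ~1.5 large-pass equivalents

- Gain: ~3.7 tokens for the cost of ~1.5 tokens

- Net speedup: ~2.5x in this simple model; overheads it ignores pull real-world numbers lower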

The higher the acceptance rate, the bigger the speedup. For predictable text (code, structured data, common patterns), acceptance rates can exceed 90%, yielding 3x+ speedups.
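The arithmetic above follows the simple model in Leviathan et al. (2023): with per-token acceptance probability α (assumed independent across positions) and K draft tokens, an iteration yields (1 - α^(K+1)) / (1 - α) tokens on average, and the speedup is that figure divided by (K·c + 1), where c is the cost of one draft pass relative to one large-model pass. A sketch, assuming c = 0.1:

```python
def expected_tokens_per_step(alpha: float, k: int) -> float:
    """Expected tokens per draft-then-verify iteration.

    Counts accepted draft tokens plus the one token the target always
    contributes, assuming each draft token is accepted independently
    with probability alpha.
    """
    if alpha == 1.0:
        return float(k + 1)  # every draft token accepted, plus the bonus token
    return (1 - alpha ** (k + 1)) / (1 - alpha)

def expected_speedup(alpha: float, k: int, c: float) -> float:
    """Speedup over plain decoding; c = draft-pass cost relative to a target pass."""
    return expected_tokens_per_step(alpha, k) / (k * c + 1)

for alpha in (0.6, 0.7, 0.8, 0.9):
    print(f"alpha={alpha}: {expected_tokens_per_step(alpha, 5):.2f} tokens/step, "
          f"{expected_speedup(alpha, 5, 0.1):.2f}x")
# alpha=0.6: 2.38 tokens/step, 1.59x
# alpha=0.7: 2.94 tokens/step, 1.96x
# alpha=0.8: 3.69 tokens/step, 2.46x
# alpha=0.9: 4.69 tokens/step, 3.12x
```

The model is optimistic: real acceptances are correlated with content, and batching, scheduling and KV-cache bookkeeping all add cost it leaves out.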

Real-World Performance

| Scenario | Draft Model | Target Model | Acceptance Rate | Speedup |
|----------|-------------|--------------|-----------------|---------|
| Code completion | 1B | 70B | 85-90% | 2.5-3x |
| General chat | 1B | 70B | 70-80% | 1.8-2.2x |
| Creative writing | 1B | 70B | 60-70% | 1.5-1.8x |
| Technical docs | 1B | 70B | 80-85% | 2.2-2.8x |

Creative writing has the lowest acceptance rate because it's inherently less predictable. Code has the highest because programming languages have rigid syntax.

The Zero Quality Loss Guarantee

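This is the critical point: speculative decoding is not an approximation of the large model. With greedy decoding, the output is token-for-token identical to running the large model alone, because every kept token is one the large model itself picked. With sampling, the acceptance rule (accept a draft token x with probability min(1, p(x)/q(x)), where p and q are the large and draft models' probabilities, and otherwise resample from the leftover mass max(0, p - q), renormalized) guarantees that each output token follows exactly the large model's distribution.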
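A minimal sketch of the sampling-mode acceptance rule from the speculative sampling papers (Leviathan et al.; Chen et al.), over a hypothetical three-token vocabulary. `p` stands for the large model's distribution at one position and `q` for the draft model's; the function name and the numbers are made up for illustration. Sampling many times shows the outputs follow `p`, not `q`.

```python
import random
from collections import Counter
from typing import Dict

def speculative_sample_one(p: Dict[str, float], q: Dict[str, float],
                           rng: random.Random) -> str:
    """One position of speculative sampling; the result is distributed as p.

    p: target model's distribution, q: draft model's distribution (same keys).
    """
    vocab = list(p)
    # The draft model proposes x ~ q.
    x = rng.choices(vocab, weights=[q[t] for t in vocab])[0]
    # Accept x with probability min(1, p(x) / q(x)).
    if rng.random() < min(1.0, p[x] / q[x]):
        return x
    # On rejection, resample from max(0, p - q); choices() renormalizes weights.
    residual = [max(0.0, p[t] - q[t]) for t in vocab]
    return rng.choices(vocab, weights=residual)[0]

# Hypothetical distributions: the draft over-weights "Lyon".
p = {"Paris": 0.7, "Lyon": 0.2, "Nice": 0.1}
q = {"Paris": 0.5, "Lyon": 0.4, "Nice": 0.1}
rng = random.Random(0)
N = 100_000
counts = Counter(speculative_sample_one(p, q, rng) for _ in range(N))
print({t: round(counts[t] / N, 3) for t in p})  # close to p, not q
```

The residual step is what makes the guarantee hold: accepted draft samples contribute min(p, q) probability to each token, and resampling from max(0, p - q) tops each token up to exactly p.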

You're not trading quality for speed. You're exploiting the fact that verification is cheaper than generation.

Implementation in Practice

With vLLM (Production)

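```python
from vllm import LLM, SamplingParams

# Llama 3.2 has no 70B text model, so the target is Llama 3.1 70B; the
# Llama 3.2 1B draft shares its tokenizer, which draft-model speculation needs.
llm = LLM(
    model="meta-llama/Llama-3.1-70B",
    tensor_parallel_size=4,  # assumption: 4 GPUs; set to your GPU count
    speculative_config={
        "model": "meta-llama/Llama-3.2-1B",
        "num_speculative_tokens": 5,  # K: draft tokens proposed per step
    },
)
# Older vLLM releases took speculative_model= and num_speculative_tokens= as
# top-level LLM() arguments; supported methods vary by version, so check its docs.

outputs = llm.generate(["The capital of France is"], SamplingParams(max_tokens=32))
print(outputs[0].outputs[0].text)
```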

With llama.cpp (Local)

```bash
# Draft-model speculative decoding is served by llama-server; -md sets the draft model.
./llama-server -m llama-70b-q4.gguf \
  -md llama-1b-q8.gguf \
  --draft-max 8 \
  --draft-min 2
```

Self-Speculative Decoding

Some newer approaches skip the separate draft model entirely. They use early exit from the large model's own layers as the draft: for example, the first 8 layers of an 80-layer model make a quick prediction, and the full 80 layers verify it. Same principle, no extra model needed.
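A toy sketch of the early-exit idea for a single position: the draft runs only the first `exit_layer` layers and then the same output head the full model uses. Everything here (`predict`, `early_exit_draft_and_verify`, the 80 "add one" layers and the threshold head) is hypothetical; in practice the model typically has to be trained so that early layers' outputs are usable by the shared head.

```python
from typing import Callable, List, Tuple

Hidden = List[float]
Layer = Callable[[Hidden], Hidden]
Head = Callable[[Hidden], str]

def predict(hidden: Hidden, layers: List[Layer], head: Head, depth: int) -> str:
    """Run the first `depth` layers, then the shared output head."""
    for layer in layers[:depth]:
        hidden = layer(hidden)
    return head(hidden)

def early_exit_draft_and_verify(hidden: Hidden, layers: List[Layer], head: Head,
                                exit_layer: int) -> Tuple[str, str]:
    # Draft: exit after `exit_layer` layers. No extra weights, just less compute.
    draft = predict(hidden, layers, head, exit_layer)
    # Verify: the full stack. (A real implementation could reuse the first
    # `exit_layer` layers' activations instead of recomputing them.)
    full = predict(hidden, layers, head, len(layers))
    return draft, full

# Hypothetical toy network: 80 identical layers that each add 1.0,
# and a head that picks a token from the first hidden value.
def add_one(h: Hidden) -> Hidden:
    return [x + 1.0 for x in h]

def toy_head(h: Hidden) -> str:
    return "Paris" if h[0] > 5 else "Lyon"

toy_layers: List[Layer] = [add_one] * 80
print(early_exit_draft_and_verify([0.0], toy_layers, toy_head, exit_layer=8))
# ('Paris', 'Paris')
```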

2026 Update: EAGLE-3 and Beyond

The speculative decoding landscape has evolved rapidly:

EAGLE-3 achieves 3.0-6.5x speedup over vanilla autoregressive generation -- a 20-40% improvement over EAGLE-2. It fuses information from multiple model layers (not just the top layer) and uses training-time testing to simulate inference conditions during draft model training.

Speculative Speculative Decoding (SSD), published at ICLR 2026, achieves up to 5x over autoregressive and 2x over standard speculative decoding by applying the speculative principle recursively.

Self-speculative decoding is now built into vLLM and SGLang, using early exit from the model's own layers as the draft mechanism -- no separate draft model needed at all.

The trajectory is clear: speculative decoding is moving from "nice optimization" to "table stakes for any serious deployment."

When to Use Speculative Decoding

Great fit:

- Single-user interactive applications (chatbots, coding assistants)

- Latency-sensitive deployments

- GPU memory is available for both models

- Predictable output domains (code, structured data)

Not ideal:

- High-throughput batch processing (batching already saturates the GPU)

- Very short outputs (overhead isn't amortized)

- When GPU memory is too tight for two models

- Extremely creative/diverse outputs (low acceptance rate)

The Bigger Picture

Speculative decoding is part of a broader trend: making inference smarter rather than just making models bigger. Other techniques in this family:

- KV-cache optimization: Reuse computation across tokens

- Continuous batching: Add new requests to the running batch (and retire finished ones) at every decoding step, instead of waiting for a whole batch to finish

- Flash Attention: Faster attention computation through memory-efficient algorithms

- Quantization: Reduce model size (covered in our previous post)

Combined, these techniques can make a single GPU serve 10x more users than naive inference. The models aren't getting smaller -- we're just getting dramatically better at running them.

*Next: Production AI deployment -- how to serve models to thousands of users with vLLM, TGI, and Triton.*

Sources & References:

1. Leviathan et al. — "Fast Inference from Transformers via Speculative Decoding" (2023) — https://arxiv.org/abs/2211.17192

2. Chen et al. — "Accelerating Large Language Model Decoding with Speculative Sampling" (2023) — https://arxiv.org/abs/2302.01318

3. Li et al. — "EAGLE: Speculative Sampling Requires Rethinking Feature Uncertainty" (2024) — https://arxiv.org/abs/2401.15077

Enjoyed this post? Follow AmtocSoft for AI tutorials from beginner to professional.
