Standard RAG has a fundamental flaw: it retrieves every single time, whether it needs to or not. Ask "what is 2+2?" and your RAG pipeline dutifully searches a vector database, finds irrelevant chunks about arithmetic, and feeds them to the LLM alongside the question. The answer was never in your documents. The LLM knew it all along.

This is wasteful. It adds latency, burns API tokens on embeddings, and sometimes the retrieved context actually *degrades* the answer by introducing noise. Self-RAG fixes this by giving the model a choice: retrieve only when it would actually help.

The Problem with Always-Retrieve

Traditional RAG follows a rigid pipeline:

Query → Embed → Search → Retrieve top-k → Augment prompt → Generate

This works well when the answer genuinely lives in your documents. But consider these failure modes:

1. Unnecessary retrieval: General knowledge questions that don't need external context

2. Noisy retrieval: The top-k results are tangentially related but mislead the model

3. Latency overhead: Every query pays the embedding + search cost, even trivial ones

4. Token waste: Retrieved chunks consume context window space that could be used for reasoning

In production systems handling thousands of queries per minute, these inefficiencies compound fast. A system that retrieves on 100% of queries when only 60% actually benefit from retrieval is burning 40% of its retrieval budget for nothing — or worse, degrading quality.
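To put a number on that waste, here is a back-of-the-envelope sketch. The function name and the traffic figure (1,000 queries per minute) are illustrative assumptions, not measurements from a real system.

```python
def wasted_retrievals(queries_per_minute: int, benefit_fraction: float) -> int:
    """Retrieval calls per minute that add no value under always-retrieve.

    benefit_fraction is the share of queries that actually need retrieval.
    """
    if not 0.0 <= benefit_fraction <= 1.0:
        raise ValueError("benefit_fraction must be between 0 and 1")
    return round(queries_per_minute * (1.0 - benefit_fraction))


# Hypothetical workload: 1,000 queries/minute, 60% of which benefit from retrieval.
print(wasted_retrievals(1000, 0.6))  # 400
```

At that volume, 400 embedding and vector-search calls every minute do nothing useful.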

How Self-RAG Works

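Self-RAG, introduced by Asai et al. in 2023, trains the language model to make explicit decisions about its own generation process. Instead of following a fixed pipeline, the model emits special reflection tokens that control the flow. The paper defines four token types; the three below govern retrieval and grounding, and a fourth (IsUse) rates the overall usefulness of the response.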

The Three Reflection Tokens

1. Retrieve Token — Should I search for information?

- [Retrieve: Yes]: The model needs external knowledge

- [Retrieve: No]: The model can answer from its parameters

2. Relevance Token — Is this retrieved passage actually useful?

- [Relevant]: The passage supports answering the query

- [Irrelevant]: The passage doesn't help, discard it

3. Support Token — Does my answer faithfully reflect the source?

- [Fully Supported]: Answer is grounded in retrieved evidence

- [Partially Supported]: Some claims lack evidence

- [No Support]: Answer contradicts or goes beyond the evidence

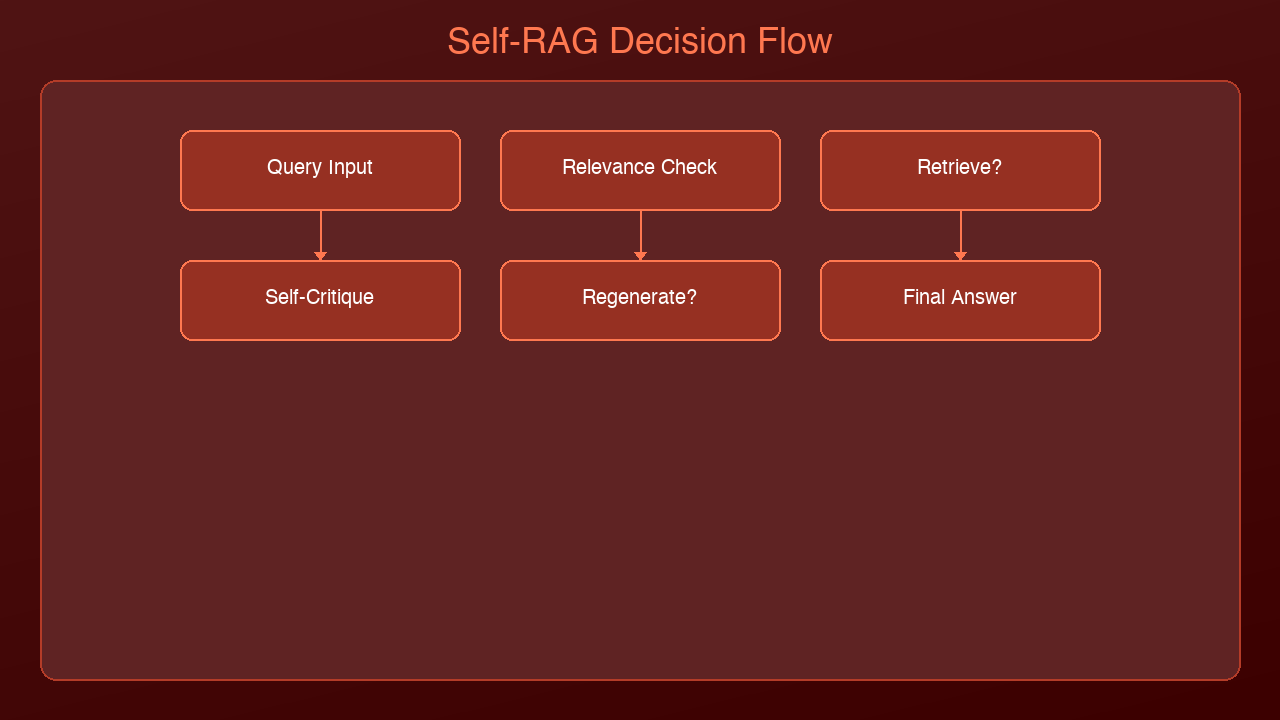

The Self-RAG Flow

Query arrives
→ Model generates Retrieve token
→ If [Retrieve: No]:
    Generate answer directly from model knowledge
→ If [Retrieve: Yes]:
    Search knowledge base
    For each retrieved passage:
        → Generate Relevance token
        → If [Relevant]:
            Generate answer candidate using passage
            Generate Support token
            Score: weighted combination of relevance, support, and quality
    → Return highest-scoring answer

This is fundamentally different from standard RAG. The model isn't just consuming retrieved text — it's *critiquing* it at every step and choosing the best path.
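The scoring and selection step at the end of that flow can be sketched in a few lines. This is a simplified illustration, not the paper's implementation: the paper derives scores from the probabilities of the critique tokens and combines them with a weighted sum, while this sketch uses a fixed lookup table. The `Candidate` class, the `SUPPORT_SCORES` values, and the default weights are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumed numeric value for each support label (a fixed lookup, unlike the
# paper, which works from token probabilities).
SUPPORT_SCORES = {
    "Fully Supported": 1.0,
    "Partially Supported": 0.5,
    "No Support": 0.0,
}


@dataclass
class Candidate:
    answer: str
    relevant: bool   # Relevance token: [Relevant] or [Irrelevant]
    support: str     # Support token label, a key of SUPPORT_SCORES
    quality: float   # overall usefulness, normalized to 0..1


def score(c: Candidate, w_rel: float = 1.0, w_sup: float = 1.0,
          w_qual: float = 0.5) -> float:
    """Weighted sum of the three critique signals (weights are assumptions)."""
    rel = 1.0 if c.relevant else 0.0
    return w_rel * rel + w_sup * SUPPORT_SCORES[c.support] + w_qual * c.quality


def best_answer(candidates: List[Candidate]) -> Optional[Candidate]:
    """Drop irrelevant passages, then return the highest-scoring candidate."""
    kept = [c for c in candidates if c.relevant]
    return max(kept, key=score, default=None)


candidates = [
    Candidate("A", relevant=True, support="Partially Supported", quality=0.9),
    Candidate("B", relevant=True, support="Fully Supported", quality=0.8),
    Candidate("C", relevant=False, support="Fully Supported", quality=1.0),
]
print(best_answer(candidates).answer)  # B
```

Candidate C is discarded because its passage was judged irrelevant, and B beats A because full support outweighs A's slightly higher quality rating.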

Implementing Adaptive Retrieval

You don't need a specially trained Self-RAG model to get most of the benefits. You can implement the core pattern — adaptive retrieval with self-assessment — using any strong LLM:

import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
MODEL = "claude-sonnet-4-20250514"


def should_retrieve(query: str) -> bool:
    """Ask the LLM whether retrieval would help."""
    response = client.messages.create(
        model=MODEL,
        max_tokens=50,
        messages=[{
            "role": "user",
            "content": f"""Determine if answering this question requires
searching external documents, or if you can answer from general
knowledge alone.

Question: {query}

Respond with only RETRIEVE or DIRECT."""
        }]
    )
    return "RETRIEVE" in response.content[0].text.upper()


def assess_relevance(query: str, passage: str) -> float:
    """Score how relevant a retrieved passage is to the query."""
    response = client.messages.create(
        model=MODEL,
        max_tokens=50,
        messages=[{
            "role": "user",
            "content": f"""Rate the relevance of this passage to the question.

Question: {query}

Passage: {passage}

Score from 0.0 (irrelevant) to 1.0 (directly answers the question).
Respond with only the number."""
        }]
    )
    try:
        score = float(response.content[0].text.strip())
    except ValueError:
        # Unparseable reply: fall back to a neutral score
        return 0.5
    # Clamp in case the model returns a value outside 0.0-1.0
    return max(0.0, min(1.0, score))


def self_rag_query(query: str, retriever, threshold: float = 0.6):
    """Self-RAG pipeline: retrieve only when needed, assess relevance."""
    # Step 1: Should we retrieve?
    if not should_retrieve(query):
        # Direct generation -- no retrieval needed
        response = client.messages.create(
            model=MODEL,
            max_tokens=1024,
            messages=[{"role": "user", "content": query}]
        )
        return {
            "answer": response.content[0].text,
            "retrieval_used": False,
            "sources": []
        }

    # Step 2: Retrieve and assess (one LLM call per passage)
    passages = retriever.search(query, top_k=5)
    scored_passages = []
    for passage in passages:
        relevance = assess_relevance(query, passage["text"])
        if relevance >= threshold:
            scored_passages.append({**passage, "relevance": relevance})

    # Step 3: Generate with filtered context (or fall back to direct)
    if not scored_passages:
        # Nothing relevant found -- generate without context
        response = client.messages.create(
            model=MODEL,
            max_tokens=1024,
            messages=[{"role": "user", "content": query}]
        )
        return {
            "answer": response.content[0].text,
            "retrieval_used": True,
            "retrieval_helpful": False,
            "sources": []
        }

    # Sort by relevance, keep the top three passages
    scored_passages.sort(key=lambda x: x["relevance"], reverse=True)
    top_passages = scored_passages[:3]
    context = "\n\n".join(p["text"] for p in top_passages)

    response = client.messages.create(
        model=MODEL,
        max_tokens=1024,
        messages=[{
            "role": "user",
            "content": f"""Answer this question using the provided context.
If the context doesn't fully answer the question, supplement
with your own knowledge but clearly indicate which parts come
from the context vs your knowledge.

Context:
{context}

Question: {query}"""
        }]
    )
    return {
        "answer": response.content[0].text,
        "retrieval_used": True,
        "retrieval_helpful": True,
        "sources": [p["text"][:100] for p in top_passages],
        "relevance_scores": [p["relevance"] for p in top_passages]
    }
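The pipeline above takes a `retriever` argument but never defines one. All it needs is an object with a `search(query, top_k)` method that returns a list of dicts with a `"text"` key. To try the flow without a vector database, a toy stand-in is enough. The `KeywordRetriever` class below is hypothetical and ranks by simple word overlap, not embeddings; the sample documents are made up for the example.

```python
import re


class KeywordRetriever:
    """Toy in-memory retriever: ranks documents by shared-word count.

    A hypothetical stand-in for a real vector store. It only mimics the
    search(query, top_k) -> list of {"text": ...} shape the pipeline expects.
    """

    def __init__(self, documents: list[str]):
        self.documents = documents

    @staticmethod
    def _tokens(text: str) -> set[str]:
        # Lowercased alphanumeric words, duplicates removed
        return set(re.findall(r"[a-z0-9]+", text.lower()))

    def search(self, query: str, top_k: int = 5) -> list[dict]:
        query_tokens = self._tokens(query)
        scored = []
        for doc in self.documents:
            overlap = len(query_tokens & self._tokens(doc))
            if overlap > 0:
                scored.append({"text": doc, "overlap": overlap})
        # Highest overlap first; ties keep their original order
        scored.sort(key=lambda d: d["overlap"], reverse=True)
        return scored[:top_k]


retriever = KeywordRetriever([
    "Refunds are processed within 14 days of the return request.",
    "Our office is closed on public holidays.",
    "Return requests must include the original order number.",
])
hits = retriever.search("How long do refunds take?", top_k=2)
print([h["text"] for h in hits])
```

With an API key configured, `self_rag_query("How long do refunds take?", retriever)` would then run the full flow against this toy corpus. Swap in your real vector store by wrapping it in the same `search` interface.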

Cost and Latency Benefits

The impact of adaptive retrieval depends on your query mix. The figures below are illustrative, assuming roughly 60% of queries actually need retrieval:

| Metric | Standard RAG | Self-RAG | Improvement |
|--------|-------------|----------|-------------|
| Avg latency per query | 800ms | 500ms | 37.5% lower |
| Embedding API calls | 100% of queries | ~60% of queries | 40% reduction |
| Vector DB queries | 100% of queries | ~60% of queries | 40% reduction |
| Answer quality (noisy queries) | Degraded by irrelevant context | Preserved | Significant |
| Monthly embedding costs | $100 | $60 | $40 savings |

The latency improvement comes from two places: skipping retrieval entirely for direct-answer queries, and reducing the amount of context the LLM must process when retrieval is used but only 2 of 5 passages are relevant.
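Whether you see a net latency win also depends on how expensive the retrieval decision itself is, since the prompt-based version adds an LLM call before anything else happens. A rough expected-latency model makes the trade-off explicit. Every timing below is an assumed placeholder, and the function names are made up for this sketch; plug in your own measurements.

```python
def expected_latency_ms(generate_ms: float, retrieve_ms: float,
                        retrieve_fraction: float, decide_ms: float = 0.0) -> float:
    """Mean per-query latency when only a fraction of queries retrieve.

    decide_ms is the cost of the "should I retrieve?" step, paid on every query.
    """
    return decide_ms + generate_ms + retrieve_fraction * retrieve_ms


def break_even_decide_ms(retrieve_ms: float, retrieve_fraction: float) -> float:
    """Largest decision cost at which adaptive retrieval is still faster on average."""
    return (1.0 - retrieve_fraction) * retrieve_ms


# Assumed timings: 400 ms generation, 400 ms for embedding + search + extra context.
standard = expected_latency_ms(400, 400, retrieve_fraction=1.0)
adaptive = expected_latency_ms(400, 400, retrieve_fraction=0.6, decide_ms=100)
print(standard, adaptive)                 # about 800 and 740
print(break_even_decide_ms(400, 0.6))     # about 160
```

Under these assumptions the decision step has to stay under roughly 160 ms on average to pay for itself. The per-passage relevance calls add further latency on the queries that do retrieve unless you run them concurrently, so measure both paths before committing.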

When to Use Self-RAG vs Standard RAG

Use Standard RAG when:

- Nearly all queries require document retrieval (e.g., customer support over internal docs)

- Your document corpus is narrow and highly relevant

- Simplicity matters more than optimization

- Query volume is low enough that latency/cost isn't a concern

Use Self-RAG when:

- Your system handles diverse query types (some need docs, some don't)

- Cost optimization matters at scale (thousands of queries/day)

- Answer quality is degraded by noisy retrieval

- You need the system to explain its confidence level

- You want to reduce hallucination by assessing source support

Consider Hybrid approaches when:

- You can classify queries into categories with known retrieval needs

- Some document collections are more reliable than others

- You want the benefits without the extra LLM calls for assessment
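One way to build such a hybrid is a cheap rule-based gate that settles the obvious cases and only defers to the LLM when it can't decide. The sketch below is an assumption-heavy illustration: the `cheap_gate` name, the arithmetic pattern, and the keyword list are all hypothetical and would need tuning against your own traffic.

```python
import re
from typing import Optional

# Pure arithmetic such as "what is 2+2?" (must contain at least one digit).
ARITHMETIC = re.compile(r"^(?=.*\d)\s*(what is\s+)?[\d\s+\-*/().]+\??\s*$")

# Hypothetical markers that a question is about your own documents.
INTERNAL_HINTS = re.compile(r"\b(our|internal|policy|handbook)\b")


def cheap_gate(query: str) -> Optional[bool]:
    """Return True (retrieve), False (answer directly), or None (undecided)."""
    q = query.lower()
    if ARITHMETIC.match(q):
        return False
    if INTERNAL_HINTS.search(q):
        return True
    return None  # no rule fired; let the LLM decide


for q in ["What is 2+2?", "What is our refund policy?", "Who wrote Hamlet?"]:
    print(q, "->", cheap_gate(q))
```

In the pipeline, you would call `cheap_gate(query)` first and fall back to `should_retrieve(query)` only when it returns `None`, which saves an LLM call on every query the rules can classify.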

Advanced: Confidence-Based Routing

For production systems, you can add a confidence router that combines Self-RAG's adaptive retrieval with query classification:

def confidence_router(query: str) -> str:
    """Route queries based on estimated confidence."""
    response = client.messages.create(
        model="claude-sonnet-4-20250514",
        max_tokens=100,
        messages=[{
            "role": "user",
            "content": f"""Classify this query into one category:

FACTUAL_INTERNAL - Answer is likely in our knowledge base
FACTUAL_GENERAL - Answer is general knowledge (no search needed)
ANALYTICAL - Requires reasoning over multiple sources
AMBIGUOUS - Unclear what information is needed

Query: {query}

Respond with only the category name."""
        }]
    )
    return response.content[0].text.strip()

This lets you skip the retrieval decision for queries you can classify cheaply, and reserve the full Self-RAG assessment for ambiguous cases.
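The router returns a raw string, so something still has to turn that label into an action and cope with replies that don't match any category. A minimal dispatch sketch follows. The strategy names and the mapping (for example, sending ANALYTICAL queries straight to retrieval) are assumptions for illustration, not part of Self-RAG itself.

```python
VALID_CATEGORIES = {"FACTUAL_INTERNAL", "FACTUAL_GENERAL", "ANALYTICAL", "AMBIGUOUS"}

# Assumed mapping from category to handling strategy.
STRATEGY = {
    "FACTUAL_INTERNAL": "retrieve",   # skip the decision step, always search
    "FACTUAL_GENERAL": "direct",      # answer from model knowledge
    "ANALYTICAL": "retrieve",         # needs sources; consider a larger top_k
    "AMBIGUOUS": "self_rag",          # run the full adaptive pipeline
}


def normalize_category(raw: str) -> str:
    """Clean up the model's reply; anything unrecognized becomes AMBIGUOUS."""
    label = raw.strip().upper().rstrip(".")
    return label if label in VALID_CATEGORIES else "AMBIGUOUS"


def choose_strategy(raw_label: str) -> str:
    """Map a raw router reply to one of: retrieve, direct, self_rag."""
    return STRATEGY[normalize_category(raw_label)]


print(choose_strategy(" factual_general\n"))        # direct
print(choose_strategy("I think it's ANALYTICAL"))   # self_rag
```

Treating unrecognized replies as AMBIGUOUS means a malformed classification costs you an extra assessment pass instead of a wrong routing decision.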

What's Next

Self-RAG is one step on the path from rigid pipelines to fully autonomous AI systems. The next evolution is Agentic RAG, where the model doesn't just decide *whether* to search — it decides *what* to search, *where* to search, and *how many times* to iterate before it's satisfied with the answer. We'll explore that pattern in a future post.

The key insight from Self-RAG is that retrieval should be a tool the model chooses to use, not a mandatory preprocessing step. Once you internalize that shift, it changes how you think about every RAG system you build.

Sources & References:

1. Asai et al. — "Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection" (2023) — https://arxiv.org/abs/2310.11511

2. LangChain — "Self-RAG Implementation" — https://python.langchain.com/docs/concepts/rag/

3. Pinecone — "Self-RAG Explained" — https://www.pinecone.io/learn/self-rag/

*Part of the RAG Deep Dive series on [AmtocSoft](https://amtocsoft.blogspot.com). Follow us on [LinkedIn](https://www.linkedin.com/in/toc-am-b301373b4/) and [X](https://x.com/AmToc96282) for daily AI engineering insights.*
