Level: Intermediate

Topic: Voice AI, TTS

Text-to-speech has undergone a revolution. Five years ago, TTS voices sounded obviously synthetic -- flat intonation, weird pauses, robotic cadence. In 2026, the best TTS models produce speech that's genuinely difficult to distinguish from a real human recording. Open-source models have crossed the quality threshold that was once exclusive to well-funded commercial APIs, and the cost of generating high-quality speech has collapsed by orders of magnitude.

But choosing the right TTS solution for your project isn't straightforward. You're balancing voice quality, latency, cost, language support, emotion control, and whether you can run it on your own infrastructure. The landscape has expanded dramatically -- ElevenLabs is valued at $11 billion, OpenAI has shipped LLM-powered TTS, Kokoro hit #1 on the TTS Arena leaderboard with just 82 million parameters, and Fish Speech S2 claims to have surpassed every closed-source model on benchmark accuracy.

In this post, we'll compare the major players -- commercial and open-source -- give you real benchmark numbers, and provide a decision framework for choosing the right one for your use case.

The Contenders

We're comparing eight TTS solutions across the commercial and open-source spectrum:

| Solution | Type | Parameters | Best For |
|----------|------|-----------|----------|
| ElevenLabs | Commercial API | Proprietary | Highest quality, voice cloning, emotion control |
| OpenAI TTS | Commercial API | Proprietary | Developer-friendly, GPT ecosystem integration |
| Kokoro | Open-source | 82M | Best quality-per-parameter, self-hosted |
| Fish Speech S2 | Open-source | 4.4B | Benchmark-leading accuracy, emotion tags |
| Cartesia Sonic | Commercial API | Proprietary | Ultra-low latency (40ms) |
| Hume Octave | Commercial API | Proprietary | Emotion-first TTS, LLM-powered |
| Piper | Open-source | ~20-80M | Edge devices, offline, fastest inference |
| Coqui XTTS | Open-source (community) | 467M | Multilingual, zero-shot voice cloning |

How TTS Works: The Modern Pipeline

Before we compare solutions, let's understand what's happening under the hood. Modern TTS systems have evolved through three distinct generations:

Generation 1 -- Concatenative (pre-2018): Stitch together pre-recorded speech fragments. Think early GPS voices.

Generation 2 -- Neural (2018-2024): Encoder-decoder architectures (Tacotron, VITS) that generate mel spectrograms from text, then convert to audio with a vocoder. This is how Piper and early Coqui work.

Generation 3 -- LLM-Powered (2024-present): Language models trained on text and speech tokens jointly. They understand context, emotion, and conversational flow. This is how ElevenLabs v3, Hume Octave, OpenAI gpt-4o-mini-tts, and Fish Speech S2 work.

The shift to LLM-powered TTS is the defining trend of 2026. These models don't just convert text to speech -- they understand what the text means, and they generate speech that reflects that understanding with appropriate emphasis, pacing, and emotion.

ElevenLabs

ElevenLabs remains the commercial quality benchmark for TTS in 2026. Valued at $11 billion after their Series D in February 2026, they've built a comprehensive voice platform with over 1 million users and $330M+ in annual recurring revenue.

Models

ElevenLabs now offers three distinct model tiers:

- Eleven v3 (2025): Their most expressive model. Supports emotional control via audio tags, multi-voice dynamic dialogues, and 70+ languages. Still in alpha with higher latency -- not suitable for real-time conversational AI yet.

- Flash v2.5: Ultra-low-latency model with sub-75ms inference. This is the model to use for voice agents and real-time applications.

- Multilingual v2: The workhorse for voiceovers, audiobooks, and content creation. Most life-like for long-form content.

Strengths

- Voice quality: Consistently top-tier -- natural prosody, emotional range, consistent character across 380+ voices

- Voice cloning: Clone any voice from 30 seconds of audio with high fidelity

- Emotion control: Eleven v3 supports tags like [excited], [whispered], [sad] for fine-grained emotional direction

- Streaming: Flash v2.5 delivers sub-75ms time-to-first-byte for real-time applications

- Ecosystem: Voice library, dubbing studio, conversational AI platform

Weaknesses

- Cost: The most expensive option at scale, especially for voice agents ($0.10/minute for conversational AI)

- Vendor lock-in: No self-hosting option, API-only

- v3 latency: The highest-quality model (v3) has too much latency for real-time conversations

- Rate limits: Can hit throughput limits on lower-tier plans

Pricing

| Plan | Price/Month | Characters/Month | Per-Minute (Conversational AI) |
|------|------------|-----------------|-------------------------------|
| Free | $0 | 10,000 | N/A |
| Starter | $5 | 30,000 | $0.10 |
| Creator | $22 | 100,000 | $0.10 |
| Pro | $99 | 500,000 | $0.10 |
| Scale | $330 | 2,000,000 | $0.10 |
| Business | $1,320 | 11,000,000 | Custom |

Code Example

from elevenlabs import ElevenLabs

client = ElevenLabs(api_key="your-api-key")

# Generate speech with streaming (Flash v2.5 for low latency).
# Depending on your installed SDK version, this method may be named
# text_to_speech.stream() instead of convert_as_stream().
audio_stream = client.text_to_speech.convert_as_stream(
    voice_id="JBFqnCBsd6RMkjVDRZzb",  # "George" voice
    text="Welcome to AmtocSoft. Today we're exploring the state of text-to-speech in 2026.",
    model_id="eleven_flash_v2_5",
    output_format="mp3_44100_128",
)

# Stream to file
with open("output.mp3", "wb") as f:
    for chunk in audio_stream:
        f.write(chunk)

# Using Eleven v3 with emotion control
audio_stream_v3 = client.text_to_speech.convert_as_stream(
    voice_id="JBFqnCBsd6RMkjVDRZzb",
    text="[excited] This is incredible! The open-source models are catching up fast. [thoughtful] But there are still trade-offs to consider.",
    model_id="eleven_v3",
    output_format="mp3_44100_128",
)

# The stream is lazy, so it must be consumed to produce audio
with open("output_v3.mp3", "wb") as f:
    for chunk in audio_stream_v3:
        f.write(chunk)

Latency

- Flash v2.5: sub-75ms time-to-first-byte

- Multilingual v2: 150-300ms time-to-first-byte

- Eleven v3: 300-600ms time-to-first-byte (alpha, improving)
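Time-to-first-byte figures like these depend on your region, network, and plan, so it is worth measuring them yourself. The sketch below times any iterator of audio chunks. `measure_ttfb` and `fake_stream` are hypothetical helpers written for this post, not part of any SDK, and the fake stream only stands in for a real streaming response.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_ttfb(chunks: Iterable[bytes]) -> Tuple[float, float, int]:
    """Consume an audio chunk stream and time it.

    Returns (seconds until the first non-empty chunk,
             seconds until the stream ended,
             total bytes received).
    """
    start = time.perf_counter()
    first_chunk_at = None
    total_bytes = 0
    for chunk in chunks:
        if chunk and first_chunk_at is None:
            first_chunk_at = time.perf_counter()
        total_bytes += len(chunk)
    end = time.perf_counter()
    if first_chunk_at is None:
        raise ValueError("stream produced no audio")
    return first_chunk_at - start, end - start, total_bytes


def fake_stream(delay_s: float = 0.05, n_chunks: int = 3) -> Iterator[bytes]:
    """Stand-in for a real SDK stream, used only to demo the helper."""
    for _ in range(n_chunks):
        time.sleep(delay_s)
        yield b"\x00" * 1024


ttfb, total, size = measure_ttfb(fake_stream())
print(f"TTFB {ttfb * 1000:.0f} ms, total {total * 1000:.0f} ms, {size} bytes")
```

The timer starts when iteration begins, so this is only accurate for streams that send the request lazily. If an SDK call sends its request as soon as it is invoked, wrap that call in a small generator so the measurement covers it.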

OpenAI TTS

OpenAI's TTS offering is the pragmatic choice for developers already in the OpenAI ecosystem. In 2026, they've expanded beyond the original tts-1/tts-1-hd models with gpt-4o-mini-tts -- a new LLM-powered TTS that uses the language model backbone for more contextual, natural speech generation.

Models

| Model | Pricing | Use Case |
|-------|---------|----------|
| tts-1 | $15/1M characters | Cost-effective, good quality |
| tts-1-hd | $30/1M characters | Highest fidelity traditional TTS |
| gpt-4o-mini-tts | $0.60/1M input text tokens + $12/1M audio output tokens (~$0.015/min) | LLM-powered, contextual speech |

Strengths

- Developer experience: Clean API, excellent documentation, easy integration

- gpt-4o-mini-tts: Uses the LLM backbone for contextual understanding -- it doesn't just read text, it understands meaning and generates speech with appropriate emphasis

- Consistency: Very stable output quality across inputs

- Ecosystem: Pairs naturally with GPT-4o, Whisper, and the Realtime API for complete voice pipelines

- 13 built-in voices: Alloy, Ash, Ballad, Coral, Echo, Fable, Nova, Onyx, Sage, Shimmer, and more

Weaknesses

- No voice cloning: Cannot clone arbitrary voices (custom voice requires an application process)

- Limited emotion control: Less expressive than ElevenLabs v3 or Hume Octave

- gpt-4o-mini-tts limits: Max 2,000 input tokens per request

- Fewer voices: 13 voices vs ElevenLabs' 380+
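Per-request input limits like the one above mean long-form text has to be split before synthesis. Here is a minimal sketch that breaks text at sentence boundaries. `split_for_tts` is a hypothetical helper, and it counts characters rather than tokens, so the default `max_chars` is only a conservative placeholder to tune against the real limit.

```python
import re
from typing import List


def split_for_tts(text: str, max_chars: int = 1000) -> List[str]:
    """Split text into chunks of at most max_chars, breaking at sentence ends."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: List[str] = []
    current = ""
    for sentence in sentences:
        if not sentence:
            continue
        # Hard-split any single sentence that is longer than the limit.
        while len(sentence) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = sentence
    if current:
        chunks.append(current)
    return chunks


for piece in split_for_tts("One. Two! Three? Four.", max_chars=10):
    print(repr(piece))
```

Each chunk can then be sent as its own request and the resulting audio concatenated. Splitting at sentence ends keeps prosody more natural than cutting at a fixed offset.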

Code Example

from openai import OpenAI

client = OpenAI(api_key="your-api-key")

# Standard TTS
response = client.audio.speech.create(
    model="tts-1",
    voice="nova",
    input="Welcome to AmtocSoft. Today we're exploring voice AI.",
    response_format="mp3",
    speed=1.0,
)
# write_to_file() saves the fully downloaded response;
# stream_to_file() on this response type is deprecated
response.write_to_file("output.mp3")

# LLM-powered TTS with gpt-4o-mini-tts
# This model understands context and generates more natural speech
response = client.audio.speech.create(
    model="gpt-4o-mini-tts",
    voice="coral",
    input="I can't believe it! The results are in and they're absolutely stunning.",
    response_format="mp3",
)
response.write_to_file("contextual_output.mp3")

# Streaming for real-time applications
with client.audio.speech.with_streaming_response.create(
    model="tts-1",
    voice="nova",
    input="This is a streaming example for real-time playback.",
    response_format="pcm",
) as response:
    for chunk in response.iter_bytes(chunk_size=4096):
        play_audio(chunk)  # Your audio player function (not defined here)
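The streaming example above requests raw PCM, which has no header and will not open in most audio players. The sketch below wraps such chunks in a WAV container using only the standard library. It assumes 24 kHz, 16-bit, mono samples, so check the format your API actually returns. `pcm_chunks_to_wav` is a hypothetical helper, and the demo feeds it synthetic silence rather than a real response.

```python
import os
import tempfile
import wave
from typing import Iterable


def pcm_chunks_to_wav(chunks: Iterable[bytes], path: str,
                      sample_rate: int = 24000) -> int:
    """Write raw 16-bit mono PCM chunks to a WAV file; return bytes written."""
    total = 0
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)   # mono
        wav_file.setsampwidth(2)   # 16-bit samples
        wav_file.setframerate(sample_rate)
        for chunk in chunks:
            wav_file.writeframes(chunk)
            total += len(chunk)
    return total


# Demo with one second of synthetic silence instead of a real API response.
demo_path = os.path.join(tempfile.gettempdir(), "tts_demo.wav")
written = pcm_chunks_to_wav([b"\x00\x00" * 2400] * 10, demo_path)
print(f"wrote {written} bytes of PCM to {demo_path}")
```

In the streaming loop you would pass `response.iter_bytes(chunk_size=4096)` as `chunks` instead of the synthetic list.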

Latency

- tts-1: 200-400ms time-to-first-byte

- tts-1-hd: 400-700ms time-to-first-byte

- gpt-4o-mini-tts: 250-500ms time-to-first-byte

Kokoro (Open-Source Champion)

Kokoro is the open-source TTS model that changed the game. With just 82 million parameters -- a fraction of competing models -- it reached #1 on the TTS Arena leaderboard in January 2026, beating XTTS (467M params) and MetaVoice (1.2B params). It proves that a well-designed small model can compete with models 10-50x its size.

Strengths

- Quality: MOS score of 4.2 -- highest among open-source models, competitive with commercial offerings

- Tiny model: Just 82M parameters means it runs on almost anything

- Self-hosted: Run on your own GPU with Apache 2.0 license -- fully free for commercial use

- Fast inference: RTF 0.03 on GPU (about 33x real-time), so a 10-second clip synthesizes in 0.3 seconds. The performance table below reports up to 96x real-time on an RTX 4090

- No API costs: Self-hosted cost under $1 per 1M characters, or under $0.06/hour of audio output

- Community: 2.2M+ downloads on Hugging Face, 5,600+ community supporters

- 8 languages, 54 voices: English, Japanese, Chinese, Korean, French, and more

Weaknesses

- No emotion control: Doesn't support emotional tags like ElevenLabs v3 or Fish Speech

- No voice cloning: Requires fine-tuning for custom voices, no zero-shot cloning

- GPU recommended: CPU inference is usable but 5-10x slower

- Less expressive: Good prosody but less dynamic range than LLM-powered models

Code Example

import kokoro
import numpy as np
import soundfile as sf

# Initialize the model (downloads automatically on first run)
pipeline = kokoro.KPipeline(lang_code="a")  # American English

# Generate speech
generator = pipeline(
    "Welcome to AmtocSoft. Today we're exploring the incredible advances "
    "in text-to-speech technology. The open-source ecosystem has reached "
    "a quality level that was unthinkable just two years ago.",
    voice="af_heart",  # Built-in voice
    speed=1.0,
)

# Collect and save audio segments (24 kHz sample rate)
for i, (gs, ps, audio) in enumerate(generator):
    sf.write(f"output_{i}.wav", audio, 24000)

# For continuous output, concatenate segments
all_audio = []
for gs, ps, audio in pipeline("Your text here.", voice="af_heart"):
    all_audio.append(audio)

combined = np.concatenate(all_audio)
sf.write("full_output.wav", combined, 24000)

Performance

| Hardware | Real-Time Factor | Notes |
|----------|-----------------|-------|
| RTX 4090 | 96x real-time | 10s audio in 0.1s |
| RTX 3080 | ~50x real-time | 10s audio in 0.2s |
| M2 MacBook Pro | ~20x real-time | CPU inference, still very fast |
| CPU-only (16-core) | ~5-10x real-time | Usable for batch, not ideal for streaming |

Training cost was approximately $1,000 in compute on hundreds of hours of data -- a remarkable efficiency achievement.
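Real-time factor (RTF) and "Nx real-time" describe the same thing from opposite directions: RTF is synthesis time divided by audio duration, so the speedup is its inverse. The short sketch below converts between the two and reproduces the timings in the performance table. The function names are illustrative only.

```python
def speedup_from_rtf(rtf: float) -> float:
    """RTF = synthesis time / audio duration, so speedup is its inverse."""
    return 1.0 / rtf


def synthesis_seconds(audio_seconds: float, speedup: float) -> float:
    """Wall-clock time needed to synthesize a clip at a given speedup."""
    return audio_seconds / speedup


for name, speedup in [("RTX 4090", 96), ("RTX 3080", 50), ("M2 MacBook Pro", 20)]:
    print(f"{name}: 10 s of audio in {synthesis_seconds(10, speedup):.2f} s")

print(f"RTF 0.03 -> {speedup_from_rtf(0.03):.1f}x real-time")
```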

Fish Speech S2 (The New Challenger)

Fish Speech S2, released in March 2026, is the most ambitious open-source TTS model yet. Using a novel Dual-Autoregressive architecture with 4.4 billion parameters, it claims to surpass every closed-source model on benchmark accuracy -- including ElevenLabs.

Architecture

Fish S2 uses two autoregressive models working in tandem:

- Slow AR (4B parameters): Handles high-level speech planning -- prosody, emotion, speaker identity

- Fast AR (400M parameters): Generates fine-grained audio tokens at high speed

Benchmark Results

| Metric | Fish S2 | Fish S2 Pro | ElevenLabs | Best Prior Open-Source |
|--------|---------|-------------|------------|----------------------|
| WER (Chinese) | 0.54% | - | - | ~2% |
| WER (English) | 0.99% | - | - | ~3% |
| Quality Score | 4.51/5.0 | - | ~4.3/5.0 | ~4.2/5.0 |
| Audio Turing Test | 0.515 | - | ~0.42 | ~0.39 |
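Word error rate (WER) for TTS is typically measured by transcribing the synthesized audio with a speech recognizer and comparing the transcript against the input text. The sketch below shows the comparison step as a word-level edit distance. Which recognizer and text normalization were used for the figures in the table is not stated here, so treat this as an illustration of the metric rather than a reproduction of the benchmark.

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref: List[str] = reference.lower().split()
    hyp: List[str] = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate("the cat sat on the mat", "the cat sat on a mat")
print(f"WER: {wer:.1%}")
```

One substituted word out of six gives a WER of about 16.7%. A WER near 1% means roughly one word in a hundred is transcribed differently from the input.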

Strengths

- Accuracy: Lowest word error rate among all models, including closed-source

- Emotion control: Natural language tags -- [whisper], [angry], [laughing nervously] -- with 93.3% tag activation rate

- Multi-speaker: Generate multiple speakers in a single pass

- Low latency: Under 150ms TTFA, RTF 0.195 on NVIDIA H200
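Bracketed emotion tags are plain text, so it helps to parse them before sending a script to an engine. You can validate the tags, or strip them for engines that do not support them and might otherwise read them aloud. A minimal sketch follows. The helper names are hypothetical, and the pattern simply treats any `[...]` span as a tag.

```python
import re
from typing import List, Optional, Tuple

TAG_PATTERN = re.compile(r"\[([^\[\]]+)\]")


def split_emotion_tags(text: str) -> List[Tuple[Optional[str], str]]:
    """Return (tag, text) segments; tag is None for text before the first tag."""
    segments: List[Tuple[Optional[str], str]] = []
    tag: Optional[str] = None
    position = 0
    for match in TAG_PATTERN.finditer(text):
        chunk = text[position:match.start()].strip()
        if chunk:
            segments.append((tag, chunk))
        tag = match.group(1).strip()
        position = match.end()
    tail = text[position:].strip()
    if tail:
        segments.append((tag, tail))
    return segments


def strip_emotion_tags(text: str) -> str:
    """Remove all bracketed tags and collapse the leftover whitespace."""
    return re.sub(r"\s+", " ", TAG_PATTERN.sub(" ", text)).strip()


script = "[excited] This is amazing! [thoughtful] But let me think about the implications..."
for tag, segment in split_emotion_tags(script):
    print(tag, "->", segment)
print(strip_emotion_tags(script))
```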

Weaknesses

- License: Code is Apache 2.0, but model weights require a separate commercial license from Fish Audio

- GPU requirements: 4.4B parameters needs a serious GPU (A100/H100 class)

- New: Less community tooling and integration support than Kokoro or Piper

- Stability: As a new release, some edge cases and artifacts still being resolved

Code Example

# The class and method names below are shown as an illustrative interface;
# confirm the actual entry points against the Fish Audio documentation.
from fish_speech import FishSpeechS2

# Initialize model
model = FishSpeechS2.from_pretrained("fishaudio/fish-speech-s2")
model.to("cuda")

# Generate with emotion control
audio = model.generate(
    text="[excited] This is amazing! [thoughtful] But let me think about the implications...",
    speaker="default",
    language="en",
)
audio.save("output.wav")

# Zero-shot voice cloning
audio = model.generate(
    text="This uses a cloned voice from just a few seconds of reference audio.",
    reference_audio="reference.wav",
    language="en",
)
audio.save("cloned_output.wav")

Cartesia Sonic 3 (The Latency King)

Cartesia has positioned itself as the latency leader in commercial TTS. Their Sonic 3 model achieves 40ms inference time -- the fastest in the industry.

Key Specs

- Inference latency: 40ms (90ms time-to-first-audio including network)

- Languages: 40+ including 9 Indian languages

- Quality: Competitive with ElevenLabs on naturalness benchmarks

- Pricing: Usage-based at 1 credit per character (1.5 for Pro Voice Cloning)

Best for: Voice agents where every millisecond matters. If your pipeline latency budget is tight, Cartesia's 40ms TTS means more budget for STT and LLM.
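To make the budget argument concrete, here is a small sketch that subtracts each engine's time-to-first-audio from an end-to-end target. The TTS figures are the upper bounds quoted in this post. The 800 ms target is a hypothetical number chosen for illustration, not a recommendation.

```python
from typing import Dict

# TTS time-to-first-audio figures quoted in this post (upper bounds, in ms).
TTS_TTFA_MS: Dict[str, float] = {
    "Cartesia Sonic 3": 90,        # 40 ms inference, 90 ms including network
    "ElevenLabs Flash v2.5": 75,
    "OpenAI tts-1": 400,
    "ElevenLabs v3": 600,
}


def remaining_budget_ms(target_ms: float, tts_ms: float) -> float:
    """Latency left for STT and the LLM after TTS takes its share."""
    return target_ms - tts_ms


TARGET_MS = 800.0  # hypothetical end-to-end response target

for engine, tts_ms in TTS_TTFA_MS.items():
    left = remaining_budget_ms(TARGET_MS, tts_ms)
    print(f"{engine}: {left:.0f} ms left for STT + LLM")
```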

Hume Octave 2 (Emotion-First TTS)

Hume AI takes a fundamentally different approach: their Octave model is the first TTS powered by an LLM trained jointly on text, speech, and emotion tokens.

Key Specs

- Architecture: LLM trained on text + speech + emotion tokens

- Generation time: Under 200ms (40% faster than v1)

- Languages: 11 (20+ coming)

- Quality: In blind testing, preferred over ElevenLabs 71.6% of the time

- TTS Arena: ELO ~1,565 (top 5)

- Pricing: ~50% of ElevenLabs, Starter plan at $3/month

Best for: Applications where emotional expression matters -- therapy bots, storytelling, character voices, customer service where empathy is important.

Piper (Edge & Offline Champion)

Piper is the speed champion. Built for edge and embedded deployment using VITS architecture exported to ONNX, it generates speech at extraordinary speeds -- even on a Raspberry Pi.

Strengths

- Speed: 100-200x real-time on modern CPUs

- Tiny footprint: Models as small as 20MB

- No GPU needed: Designed for CPU inference (interestingly, CPU can be 5x faster than GPU in some configurations)

- Embedded-friendly: Runs on Raspberry Pi 4, mobile devices, edge hardware

- Offline: Completely self-contained, no network needed

- Integration: Home automation support (openHAB), accessibility tools

Weaknesses

- Lower quality: Noticeably more synthetic than Kokoro or commercial options

- Limited expressiveness: Flat emotional range

- VITS architecture: Older generation neural TTS, not LLM-powered

Code Example

import wave

from piper import PiperVoice

# Load model (20-80MB ONNX file)
voice = PiperVoice.load("en_US-lessac-medium.onnx")

# Generate speech; the context manager closes the file even if synthesis fails
with wave.open("output.wav", "wb") as wav_file:
    wav_file.setnchannels(1)  # mono
    wav_file.setsampwidth(2)  # 16-bit samples
    wav_file.setframerate(voice.config.sample_rate)
    voice.synthesize("Welcome to AmtocSoft. Today we're exploring voice AI.", wav_file)

# Command-line usage
# echo "Hello from Piper!" | piper --model en_US-lessac-medium.onnx --output_file output.wav

Performance

- Modern CPU: 100-200x real-time

- Raspberry Pi 4: 5-10x real-time

- Memory usage: 50-200MB depending on model size

- Latest version: piper-tts-plus v1.8.2 (March 2026)

Coqui XTTS (Community-Maintained)

Coqui the company shut down in early 2024 after raising $3.3M but failing to find sustainable monetization. The open-source community, led by the Idiap Research Institute, has forked and continues maintaining the XTTS model.

Current Status

- Maintained by: Idiap Research Institute (github.com/idiap/coqui-ai-TTS)

- Install: pip install coqui-tts

- Model weights: Still available on Hugging Face

- Update cadence: Community releases quarterly

- XTTS-v2 parameters: 467M

Strengths

- Voice cloning: Clone any voice from a 6-second reference clip

- Multilingual: Supports 17+ languages natively

- Cross-lingual cloning: Clone a voice in one language, generate speech in another

Weaknesses

- No company behind it: Community-maintained means slower feature development

- Speed: Slower than Kokoro and Piper (5-15x real-time on good GPU)

- Quality: Good but surpassed by Kokoro, Fish S2, and commercial options
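For completeness, here is a minimal sketch of zero-shot cloning with XTTS-v2 through the `TTS.api` interface that the coqui-tts package provides. `clone_voice` is a hypothetical wrapper and the file names are placeholders. The heavy imports sit inside the function because the model is large, downloads on first use, and may ask you to accept its license.

```python
def clone_voice(text: str, reference_wav: str, out_path: str,
                language: str = "en") -> str:
    """Synthesize `text` in the voice of `reference_wav` using XTTS-v2."""
    # Imported lazily: both packages are large and slow to load.
    import torch
    from TTS.api import TTS

    device = "cuda" if torch.cuda.is_available() else "cpu"
    tts = TTS("tts_models/multilingual/multi-dataset/xtts_v2").to(device)
    tts.tts_to_file(
        text=text,
        speaker_wav=reference_wav,  # short reference clip of the target voice
        language=language,          # output language; may differ from the clip's
        file_path=out_path,
    )
    return out_path


# Example call (requires coqui-tts, torch, and a reference clip on disk):
# clone_voice("Hello from a cloned voice.", "reference.wav", "cloned.wav")
```

Passing a different `language` than the one spoken in the reference clip is how cross-lingual cloning is requested.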

Head-to-Head Comparison

Voice Quality Benchmarks

| Model | MOS Score | TTS Arena ELO | WER (English) |
|-------|-----------|---------------|---------------|
| Fish Speech S2 Pro | 4.51 | ~1,560 | 0.99% |
| ElevenLabs v3 | ~4.3 | 1,108 | ~2.5% |
| Hume Octave 2 | ~4.3 | 1,565 | ~2.8% |
| Kokoro | 4.2 | #1 (Jan 2026) | ~3.2% |
| OpenAI tts-1-hd | ~4.0 | - | ~3.5% |
| Cartesia Sonic 3 | ~4.0 | - | ~3.0% |
| Coqui XTTS v2 | ~3.7 | - | ~5.0% |
| Piper (medium) | ~3.2 | - | ~6.5% |

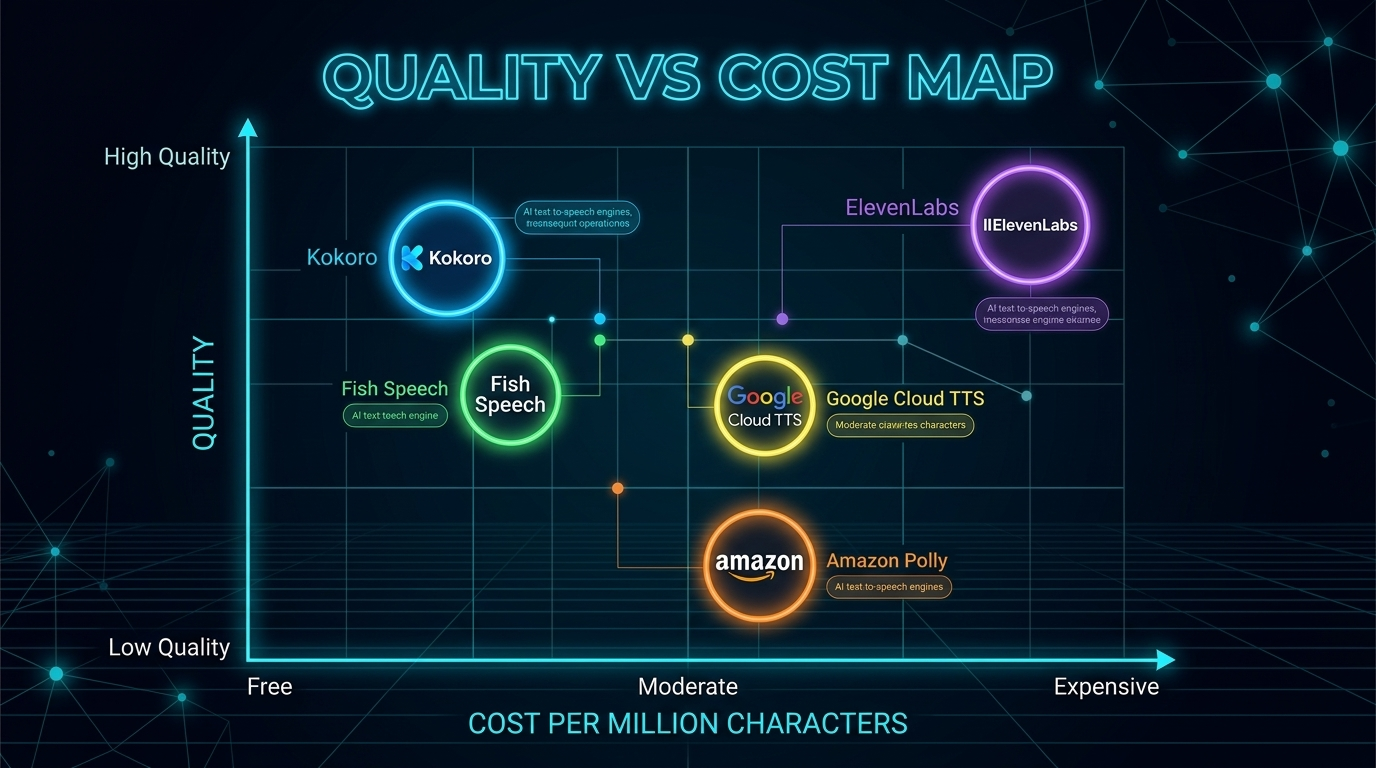

Cost Comparison (per 1 Million Characters)

| Model | Cost | Self-Hosted? |
|-------|------|-------------|
| Piper | $0* | Yes (CPU only) |
| Kokoro | <$1* | Yes (GPU recommended) |
| Qwen3-TTS | $0* | Yes (Apache 2.0) |
| Inworld TTS | $10 | No |
| OpenAI tts-1 | $15 | No |
| OpenAI tts-1-hd | $30 | No |
| Deepgram TTS | $30 | No |
| ElevenLabs (Scale) | ~$33 | No |
| ElevenLabs (Pro) | ~$40 | No |

*Self-hosted models have infrastructure costs. Running a GPU server costs roughly $0.50-2.00/hour depending on the GPU. At scale, this works out to under $1 per million characters.
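The self-hosted estimate can be checked with simple arithmetic. The sketch below turns a GPU hourly price and a real-time speedup into a cost per million characters. It assumes a speaking rate of about 900 characters per minute and a fully utilized GPU. Both are illustrative assumptions, and real deployments with idle time will cost more.

```python
def cost_per_million_chars(gpu_usd_per_hour: float, speedup: float,
                           chars_per_minute: float = 900.0) -> float:
    """GPU cost to synthesize one million characters of text.

    speedup is how many seconds of audio are produced per second of compute.
    """
    audio_hours = 1_000_000 / chars_per_minute / 60
    gpu_hours = audio_hours / speedup
    return gpu_hours * gpu_usd_per_hour


# GPU prices from the footnote above, speedups from the Kokoro performance table.
for price, speedup in [(2.00, 96), (0.50, 50)]:
    cost = cost_per_million_chars(price, speedup)
    print(f"${price:.2f}/h GPU at {speedup}x real-time: ${cost:.2f} per 1M chars")
```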

Latency (Time-to-First-Audio)

| Model | TTFA | Notes |
|-------|------|-------|
| Piper (CPU) | 5-15ms | Fastest overall |
| Cartesia Sonic 3 | 40ms | Fastest commercial |
| ElevenLabs Flash v2.5 | <75ms | Best quality at low latency |
| Qwen3-TTS | 97ms | Open-source, very fast |
| Fish Speech S2 | ~100ms | On NVIDIA H200 |
| Kokoro (GPU) | 100-150ms | On RTX 4090 |
| OpenAI tts-1 | 200-400ms | API latency included |
| ElevenLabs v3 | 300-600ms | Alpha, improving |

The TTS Decision Flow

Use this decision tree to pick the right TTS solution for your project:
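One way to express such a decision flow in code is sketched below, using the recommendations from this post's summary table. The `Requirements` fields and the order of the checks are assumptions made for illustration, not a transcription of the diagram.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    offline: bool = False          # must run without network access
    self_hosted: bool = False      # must run on your own infrastructure
    voice_cloning: bool = False
    emotion_control: bool = False
    realtime: bool = False         # latency-critical voice agent


def recommend_tts(req: Requirements) -> str:
    """Return a recommendation; checks are ordered from hardest constraint down."""
    if req.offline:
        return "Piper"
    if req.self_hosted:
        if req.voice_cloning:
            return "Coqui XTTS or Fish Speech S2"
        return "Kokoro"
    if req.voice_cloning:
        return "ElevenLabs"
    if req.emotion_control:
        return "ElevenLabs v3 or Hume Octave"
    if req.realtime:
        return "Cartesia Sonic 3"
    return "OpenAI TTS"


print(recommend_tts(Requirements(self_hosted=True)))
print(recommend_tts(Requirements(realtime=True)))
```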

The Hybrid Approach

Many production systems combine multiple TTS engines. Here's the pattern we recommend:

Tier 1: Customer-Facing, Real-Time

Use ElevenLabs Flash v2.5 or Cartesia Sonic 3 for voice agents and real-time interactions where quality and latency both matter. Cost: $0.07-0.10/minute.

Tier 2: Content Generation

Use Kokoro or Fish Speech S2 for batch content -- podcast narration, video voiceovers, audiobook generation. Self-hosted cost: under $0.01/minute.

Tier 3: Edge & Fallback

Use Piper for on-device TTS when the network is unavailable, or for privacy-sensitive applications that can't send audio to external APIs.

class HybridTTS:
    """Route TTS requests to the optimal engine based on context."""

    def __init__(self):
        # ElevenLabsClient and KokoroPipeline are placeholder wrappers you
        # would write around each SDK; they are not library classes.
        self.elevenlabs = ElevenLabsClient()  # Tier 1: real-time
        self.kokoro = KokoroPipeline()  # Tier 2: batch
        self.piper = PiperVoice.load("model.onnx")  # Tier 3: fallback

    def synthesize(self, text: str, context: str = "realtime") -> bytes:
        if context == "realtime":
            return self.elevenlabs.generate(text, model="eleven_flash_v2_5")
        elif context == "batch":
            return self.kokoro.generate(text, voice="af_heart")
        elif context == "offline":
            return self.piper.synthesize(text)
        else:
            # Default to best value
            return self.kokoro.generate(text, voice="af_heart")

This gives you quality where it matters, cost savings where it doesn't, and resilience through redundancy.
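The redundancy part can be sketched as a simple fallback chain: try each engine in order and move to the next tier when one fails. Everything below is illustrative. The two engines are stand-ins that simulate a network failure and a local success, and real ones would wrap the SDK calls shown earlier.

```python
from typing import Callable, List, Sequence, Tuple

Engine = Tuple[str, Callable[[str], bytes]]


def synthesize_with_fallback(text: str,
                             engines: Sequence[Engine]) -> Tuple[str, bytes]:
    """Try each engine in order; return (engine name, audio) from the first success."""
    errors: List[str] = []
    for name, synthesize in engines:
        try:
            return name, synthesize(text)
        except Exception as exc:  # any engine failure triggers the next tier
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all TTS engines failed: " + "; ".join(errors))


# Stand-in engines for demonstration only.
def flaky_cloud_engine(text: str) -> bytes:
    raise ConnectionError("network unavailable")


def local_engine(text: str) -> bytes:
    return b"RIFF" + text.encode("utf-8")


used, audio = synthesize_with_fallback(
    "Hello", [("cloud", flaky_cloud_engine), ("local", local_engine)]
)
print(f"served by: {used}")
```

In production you would likely narrow the caught exceptions and add a timeout per engine, so a slow tier cannot eat the whole latency budget before the fallback runs.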

Key Trends Shaping TTS in 2026

1. Open-Source Parity

The gap between commercial and open-source TTS has functionally closed. Kokoro (82M params) beats models 50x its size. Fish Speech S2 claims lower WER than any commercial model. Qwen3-TTS from Alibaba (Apache 2.0, fully free) outperforms ElevenLabs on word error rate benchmarks. The days of needing a commercial API for acceptable quality are over.

2. Emotion Control Is the New Frontier

Five TTS systems now support emotion tags or emotional direction: ElevenLabs v3, Fish Speech S2, Hume Octave, Chatterbox (Resemble AI), and Sesame CSM. The next generation of voice agents won't just sound human -- they'll sound empathetic, excited, or concerned as the conversation demands.

3. LLM-Powered TTS

Hume Octave, OpenAI gpt-4o-mini-tts, and Fish S2 all use language model backbones. This means TTS that understands context, not just phonemes. When the text says "I can't believe it!", these models know to add surprise to the voice without explicit tags.

4. The Latency War

Cartesia (40ms), ElevenLabs Flash (75ms), Qwen3-TTS (97ms), and Fish S2 (100ms) are all competing to be the fastest. For voice agents, every millisecond of TTS latency is a millisecond subtracted from the user's patience.

5. Cost Collapse

Self-hosted open-source TTS is now under $1 per million characters. Commercial APIs range from $10-40 per million characters. Two years ago, high-quality TTS started at $30+ per million characters with no self-hosted option. The cost of adding voice to any application has dropped by 30-100x.

Conclusion

The best TTS engine is the one that fits your specific constraints -- quality requirements, latency budget, cost sensitivity, and infrastructure capabilities. Here's the quick summary:

| If You Need... | Use This |
|----------------|----------|
| Best overall quality | Fish Speech S2 or ElevenLabs v3 |
| Lowest latency | Cartesia Sonic 3 (40ms) or Piper (5-15ms) |
| Best value (self-hosted) | Kokoro (82M params, <$1/1M chars) |
| Emotion control | ElevenLabs v3 or Hume Octave |
| Voice cloning (commercial) | ElevenLabs |
| Voice cloning (open-source) | Coqui XTTS or Fish Speech S2 |
| Edge/offline | Piper |
| Simplest integration | OpenAI TTS |

The TTS landscape in 2026 is remarkable. Quality that was exclusive to well-funded labs is now available to any developer with a GPU. Commercial providers are competing on latency, emotion, and ecosystem rather than basic quality. And the pace of improvement shows no sign of slowing.

Don't be afraid to mix and match. The hybrid approach -- commercial for real-time, open-source for batch, Piper for offline -- gives you the best of all worlds.


*This is part 2 of the AmtocSoft Voice AI series. Next up: a deep dive into speech-to-text engines -- Whisper, Deepgram, and the new challengers.*
