Deepfake Phishing: How AI-Generated Attacks Are Targeting Your Organization in 2026

Introduction

In early 2024, a finance employee at a multinational firm in Hong Kong joined what appeared to be a routine video conference. The call included his CFO and several other senior colleagues — familiar faces, familiar voices. By the end of the meeting, he had authorized transfers totaling $25 million USD. Every person on that call except him was a deepfake. The attackers had trained AI models on publicly available footage of the real employees, generated convincing video and audio in real time, and walked away with one of the largest social engineering payouts in history.

This is not a future-state scenario. It happened, and the techniques used are now cheaper, faster, and more accessible than they were when that attack took place.

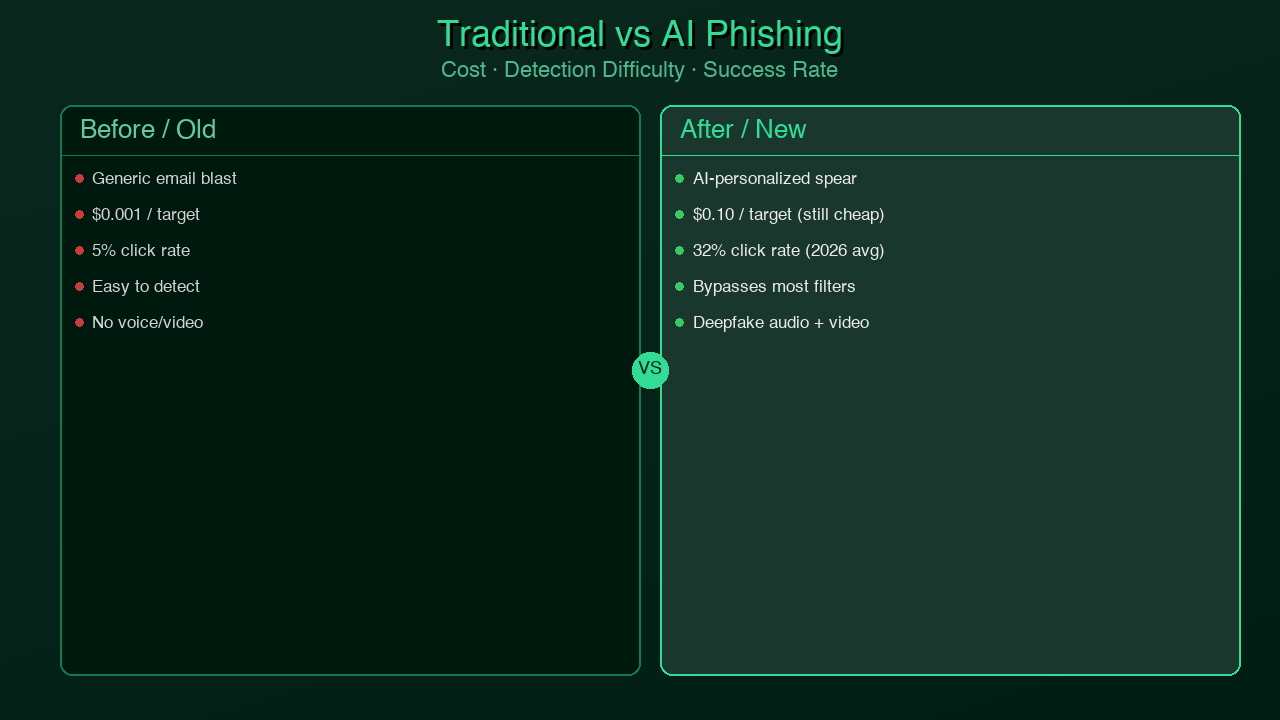

Traditional phishing relied on volume. Attackers sent millions of generic emails and hoped a small percentage of recipients would click a malicious link or hand over credentials. Defense was also volume-based: spam filters, blacklists, user awareness training built around the classic "Nigerian prince" email. The model held for decades because attackers couldn't afford to personalize at scale.

AI broke that equation. Voice cloning services can replicate a person's voice from less than 30 seconds of audio. Open-source face-swap models run on consumer GPUs. Large language models can draft perfectly grammatical, contextually accurate spear-phishing emails by scraping a target's LinkedIn profile, blog posts, and public communications in minutes. The cost of a targeted, personalized attack has collapsed from thousands of dollars and weeks of human labor to a few dollars and a few hours of compute.

For security-aware developers, tech leads, and DevSecOps engineers, this shift demands a different mental model. The question is no longer "does this email look like phishing?" It's "do I have a verified, out-of-band reason to trust this request?" This post breaks down how these attacks work, what the real-world incidents look like, and — most importantly — what technical and process controls you can implement today to harden your organization against them.

The New Attack Surface

To understand why deepfake phishing is different, you need to understand what changed in the cost structure of attacks.

The Economics of Old Phishing

Classic phishing was a numbers game. An attacker might pay a few hundred dollars for a phishing kit, blast millions of emails using a botnet, and convert a fraction of a percent into credential theft or malware installs. Spear phishing — targeted attacks against specific individuals — required real human research time and skilled social engineers. A sophisticated Business Email Compromise (BEC) attack targeting a CFO might take a team of attackers weeks of reconnaissance and impersonation effort. The return had to justify the investment, which limited targeting to high-value organizations.

What AI Changed

The AI toolchain for a modern deepfake attack is disturbingly accessible:

Voice cloning: Services like ElevenLabs allow voice cloning from as little as a 30-second sample. A YouTube earnings call, a LinkedIn video post, a podcast appearance, or a TED talk provides more than enough source material for most executives. The resulting clone can speak any script with convincing prosody and emotional variation. Cost: free tier available, professional tiers starting at $5/month.

Video deepfakes: Open-source projects like DeepFaceLab (offline face-swap training) and its real-time counterpart DeepFaceLive, along with commercial tools, allow face-swapping during live video calls. High-fidelity real-time deepfakes still require meaningful GPU resources, but the barrier is a consumer gaming PC, not a nation-state budget. Latency and quality continue to improve with each model generation.

LLM-written spear phishing: GPT-4-class models can ingest a target's public digital footprint — LinkedIn connections, GitHub commits, conference talks, published papers — and generate highly personalized phishing emails that reference real projects, real colleagues, and real internal vocabulary. These emails pass traditional spam filters and fool users trained to spot generic phishing cues.

OSINT automation: Tools like Maltego, SpiderFoot, and purpose-built scraping scripts can aggregate a target's digital footprint in minutes, feeding it directly into attack pipeline prompts.

Cost Comparison

| Attack Type | Old Cost (2018) | New Cost (2026) |
|---|---|---|
| Generic phishing email | $0.001/email | $0.001/email |
| Spear phishing email | $500–2,000 (human time) | $2–10 (LLM + OSINT) |
| Voice clone of executive | $50,000+ (actor/studio) | $5–20 (ElevenLabs + sample) |
| Video deepfake (pre-recorded) | $100,000+ (studio) | $50–200 (GPU rental) |
| Real-time video deepfake | Nation-state level | $500–2,000 (consumer GPU) |

The cost curve has a direct implication: attacks that previously targeted only Fortune 500 companies with multi-million dollar fraud potential are now economically viable against mid-market companies, municipal governments, hospitals, and law firms.
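To see why the falling cost curve widens the target pool, consider the break-even success rate: the fraction of attempts that must succeed for the expected payout to cover the cost. The sketch below plugs in the upper ends of the spear-phishing cost ranges from the table. The $50,000 payout is a hypothetical figure for a mid-market fraud, not measured data.

```python
def break_even_success_rate(cost_per_attempt: float, payout_if_successful: float) -> float:
    """Minimum success probability at which expected payout equals attack cost."""
    if payout_if_successful <= 0:
        raise ValueError("payout must be positive")
    return cost_per_attempt / payout_if_successful


HYPOTHETICAL_PAYOUT = 50_000.0  # assumed payout per successful fraud (illustrative only)

# Upper ends of the spear-phishing cost ranges in the table above
spear_phishing_cost = {
    "2018 (human research)": 2_000.0,
    "2026 (LLM + OSINT)": 10.0,
}

for era, cost in spear_phishing_cost.items():
    rate = break_even_success_rate(cost, HYPOTHETICAL_PAYOUT)
    print(f"{era}: profitable if more than {rate:.2%} of attempts succeed")
```

At the 2018 cost, roughly 1 in 25 attempts has to succeed. At the 2026 cost, about 1 in 5,000 is enough, and smaller organizations now clear that bar easily.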

How Deepfake Attacks Work

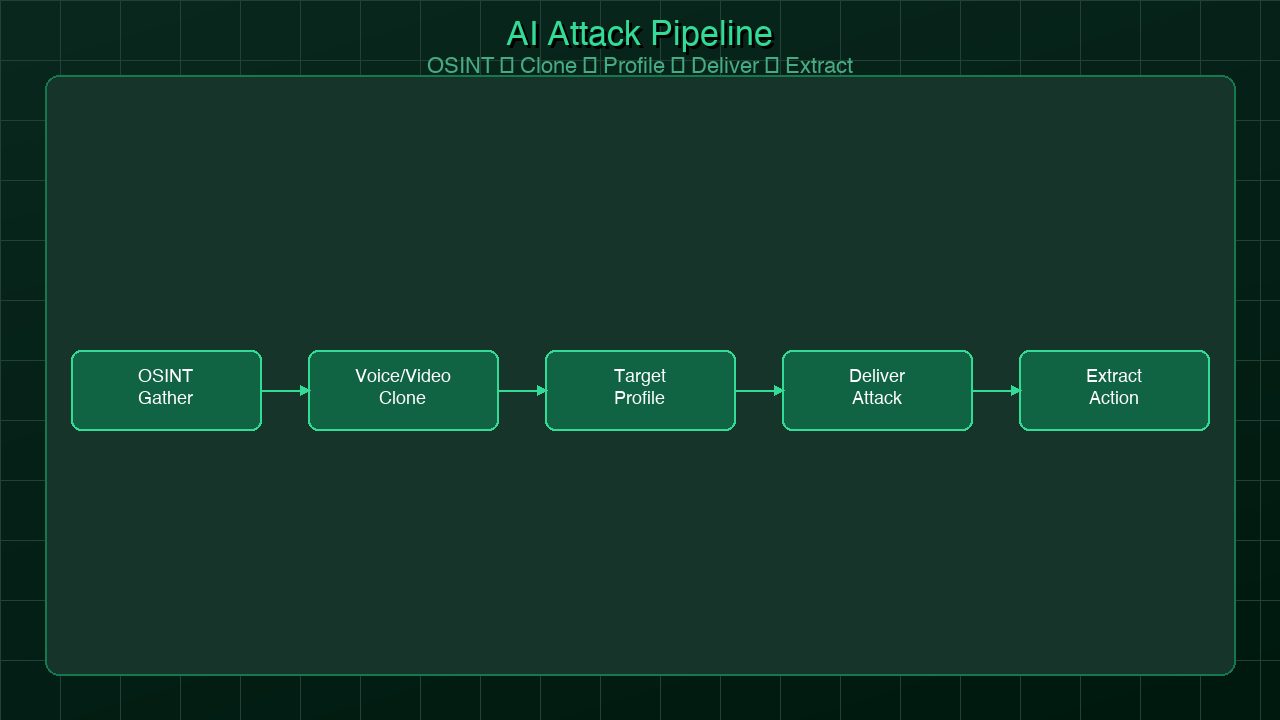

Understanding the anatomy of an attack is the first step to building effective defenses. Modern deepfake phishing follows a consistent kill chain, whether the goal is wire fraud, credential theft, or malware installation.

Step 1: OSINT Gathering

Before any AI model is trained, attackers build a comprehensive intelligence profile. Publicly available sources are shockingly rich:

- LinkedIn: Job titles, reporting structures, internal project names, company vocabulary, and employee connections reveal organizational hierarchy. An attacker can identify the CFO, their direct reports, and which assistant handles calendar requests.

- YouTube and conference recordings: Earnings calls, investor presentations, and conference talks provide hours of high-quality audio and video of executives — exactly the training data needed for voice clones and deepfake models.

- GitHub: Public repositories reveal internal tooling names, infrastructure patterns, and sometimes email addresses and internal domain structures.

- Press releases and news: Merger announcements, funding rounds, and personnel changes create time-sensitive pretexts ("I need this wire transfer completed before the acquisition closes tomorrow").

- Job postings: Reveal internal systems in use (Salesforce, Workday, specific cloud providers), which helps attackers craft believable system-level pretexts.

The OSINT phase for a mid-sized target can be completed in 2–4 hours with automated tooling. For high-value targets like public company executives, the data is already pre-aggregated across dozens of data broker databases.

Step 2: Voice and Video Model Training

With source material in hand, attackers train or fine-tune models:

- Voice cloning: 30 seconds to 3 minutes of clean audio is sufficient for services like ElevenLabs. The resulting model captures prosody, accent, speech patterns, and emotional register. Longer samples produce more convincing results with more flexibility for novel scripts.

- Video deepfake: Tools like DeepFaceLab require more source data — typically 300–500 photos or a few minutes of video — to produce stable face-swaps. Real-time face-swap for live calls requires a GPU with enough VRAM to run inference at video frame rates, typically an RTX 3080 or better.

- LLM context priming: The attacker creates a system prompt for an LLM that includes the target's writing style, known projects, colleagues' names, and organizational vocabulary. The LLM then generates emails, chat messages, or call scripts that sound internally authentic.
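Defenders can run the same arithmetic on their own exposure. This is a minimal sketch, assuming you have hand-estimated how many seconds of clean speech each executive has in public recordings. The 30-second threshold is the low end quoted above. The inventory data and field names are hypothetical.

```python
from dataclasses import dataclass

# Low end of the voice-cloning sample length quoted above; a rough assumption
CLONE_THRESHOLD_SECONDS = 30

@dataclass
class PublicRecording:
    person: str
    source: str          # free-form label, e.g. "earnings call", "podcast"
    speech_seconds: int  # estimated seconds of clean, single-speaker audio

def voice_exposure(recordings: list[PublicRecording]) -> dict[str, int]:
    """Total publicly available speech per person, in seconds."""
    totals: dict[str, int] = {}
    for rec in recordings:
        totals[rec.person] = totals.get(rec.person, 0) + rec.speech_seconds
    return totals

def over_threshold(totals: dict[str, int], threshold: int = CLONE_THRESHOLD_SECONDS) -> list[str]:
    """People whose public speech already meets or exceeds the cloning threshold."""
    return sorted(person for person, secs in totals.items() if secs >= threshold)


inventory = [  # hypothetical example data
    PublicRecording("CFO", "earnings call", 600),
    PublicRecording("CFO", "conference talk", 1_200),
    PublicRecording("Controller", "company podcast", 20),
    PublicRecording("Treasury Manager", "LinkedIn video", 0),
]
totals = voice_exposure(inventory)
print(totals)
print("Clonable from public audio:", over_threshold(totals))
```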

Step 3: Target Profiling

Not everyone in the organization is the final target. Attackers map the authorization chain:

- Who can authorize wire transfers? (CFO, Controller, Treasury Manager)

- Who has admin access to identity systems? (IT helpdesk, Active Directory admins)

- Who onboards new vendors? (AP team, Procurement)

- Who can reset MFA for executives? (IT helpdesk — often the weakest link)

The deepfake impersonates a trusted authority figure (CEO, CFO, board member, IT director). The target is whoever can execute the requested action and is most likely to defer to that authority figure under time pressure.
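This authorization-chain mapping is worth doing defensively before an attacker does it. The sketch below inverts a role-to-capability map. For each sensitive action, it shows which roles an impersonator would need to fool and which actions one person can complete alone. The roles, action names, and dual-control set are hypothetical placeholders for data you would pull from your own IAM and finance systems.

```python
# Hypothetical role -> capability map; replace with data from your own systems
ROLE_CAPABILITIES = {
    "CFO": {"authorize_wire"},
    "Controller": {"authorize_wire", "onboard_vendor"},
    "AP Clerk": {"onboard_vendor", "change_vendor_bank_details"},
    "IT Helpdesk": {"reset_mfa", "reset_password"},
    "AD Admin": {"reset_password", "grant_admin"},
}

# Hypothetical: actions that already require a second approver
REQUIRES_SECOND_APPROVER = {"authorize_wire"}

def targets_by_action(role_caps: dict[str, set[str]]) -> dict[str, list[str]]:
    """Invert the map: for each action, the roles that can execute it."""
    result: dict[str, list[str]] = {}
    for role, caps in role_caps.items():
        for action in caps:
            result.setdefault(action, []).append(role)
    return {action: sorted(roles) for action, roles in sorted(result.items())}

def single_person_actions(role_caps: dict[str, set[str]], dual_control: set[str]) -> list[str]:
    """Actions one role can complete alone: the highest-value impersonation targets."""
    return sorted(a for a in targets_by_action(role_caps) if a not in dual_control)


for action, roles in targets_by_action(ROLE_CAPABILITIES).items():
    print(f"{action:28} executable by: {', '.join(roles)}")
print("No second approver:", single_person_actions(ROLE_CAPABILITIES, REQUIRES_SECOND_APPROVER))
```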

Step 4: Delivery Vector

The attack reaches the target through whatever channel establishes the most trust with the least friction:

- Fake video conference call: The most sophisticated vector. Attacker creates a meeting invite from a spoofed or compromised email account, joins with deepfake video and cloned voice. The Hong Kong $25M attack used this method.

- Voice message / voicemail: Simpler to execute, no real-time GPU required. A pre-generated voice message from "the CEO" asking for an urgent action.

- Teams or Slack DM: Attackers compromise or spoof an account and send a direct message with a link or credential request. LLM-generated text matches the executive's writing style.

- Email: Still the dominant vector for volume attacks. LLM-generated spear phishing with convincing personalization.

Step 5: Action Extraction

The final step is converting trust into a concrete action:

- Wire transfer: "We need to move funds for the acquisition before end of day. I'll explain in the all-hands next week — keep this quiet for now."

- Credential handover: "IT is doing emergency maintenance on your account — what's your current password / can you approve this MFA push?"

- Malware install: "I'm sending you a contract to review — click the link to download."

- Vendor account creation: "We're onboarding a new vendor urgently — can you process this invoice/banking details?"

The time pressure and authority combination are intentional. Attackers want the target to act before they have time to verify.
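The authority-plus-urgency pattern is consistent enough to serve as a routing signal. The sketch below flags messages that pair a sensitive action with urgency or secrecy cues, so they can be sent to out-of-band verification. The cue lists are illustrative assumptions. Keyword matching will produce false positives, so treat a hit as a trigger for verification, not a verdict on authenticity.

```python
import re

# Hypothetical cue lists; tune them against your own reported-phishing corpus
CUES = {
    "urgency": [r"\burgent(ly)?\b", r"\bbefore end of (day|business)\b",
                r"\bright away\b", r"\bimmediately\b", r"\btoday\b"],
    "secrecy": [r"\bkeep this (quiet|confidential|between us)\b", r"\bdon'?t (tell|mention)\b"],
    "sensitive_action": [r"\bwire\b", r"\btransfer\b", r"\bpassword\b", r"\bMFA\b",
                         r"\bbanking details\b", r"\binvoice\b"],
}

def pressure_cues(message: str) -> dict[str, list[str]]:
    """Return each cue category found in the message with the matching phrases."""
    found: dict[str, list[str]] = {}
    for category, patterns in CUES.items():
        hits = [m.group(0) for p in patterns for m in re.finditer(p, message, re.IGNORECASE)]
        if hits:
            found[category] = hits
    return found

def needs_out_of_band_check(message: str) -> bool:
    """Flag a sensitive action combined with urgency or secrecy pressure."""
    cues = pressure_cues(message)
    return "sensitive_action" in cues and ("urgency" in cues or "secrecy" in cues)


msg = ("We need to move funds for the acquisition before end of day. "
       "Please wire the amount and keep this quiet for now.")
print(pressure_cues(msg))
print(needs_out_of_band_check(msg))  # True
```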

```mermaid
graph TD
    A[OSINT Gathering\nLinkedIn, YouTube, GitHub, Press] --> B[Model Training\nVoice Clone + Video Deepfake + LLM Priming]
    B --> C[Target Profiling\nIdentify Authorization Holders]
    C --> D{Delivery Vector}
    D --> E[Live Video Call\nReal-time Deepfake]
    D --> F[Voice Message\nPre-generated Clone]
    D --> G[Chat / Email\nLLM-generated Text]
    E --> H[Action Extraction]
    F --> H
    G --> H
    H --> I[Wire Transfer\nor Credential Theft\nor Malware Install]
    style I fill:#c0392b,color:#fff
    style A fill:#2c3e50,color:#fff
    style B fill:#2c3e50,color:#fff
```

Real-World Incidents

The threat is not theoretical. The first two cases below are publicly reported incidents with confirmed financial losses. The third is a documented trend across many organizations.

The $25 Million Hong Kong Deepfake Call (2024)

In February 2024, Hong Kong police confirmed that a finance worker at an unidentified multinational corporation was defrauded of HKD 200 million (approximately $25.6 million USD) after attending a multi-person video conference where every other participant was a deepfake. The attacker had used publicly available video of the real employees to create convincing real-time deepfakes. The finance worker initially suspected a phishing email but was reassured when the video call appeared to show familiar faces and voices.

This case is significant because it showed that deepfake video calls in a business context were no longer theoretical. They had been deployed operationally against a real organization, and they produced an eight-figure loss.

Energy Company CEO Voice Clone ($243,000)

In 2019, years before the current generation of voice AI tools was available, attackers used AI-generated audio to impersonate the chief executive of the German parent company of a UK-based energy firm. The fake executive called the UK firm's CEO and instructed him to wire €220,000 (approximately $243,000) to a Hungarian supplier. The UK CEO complied, believing he was speaking to his actual boss. When the attackers called back requesting another transfer, he grew suspicious and did not make a second payment. This incident occurred with voice AI tools that were primitive by 2026 standards.

LLM-Powered Spear Phishing Campaigns (2024–2025)

Security researchers at multiple firms documented a significant uptick in LLM-generated spear phishing campaigns targeting enterprise employees. Unlike previous generations of phishing emails — which were detectable by grammar errors, generic salutations, or implausible scenarios — these emails referenced real internal projects by name, used correct organizational terminology, and adopted the writing style of known internal communicators. Defenders reported that standard phishing awareness training was largely ineffective because the emails no longer had the traditional visual tells.

According to IBM's 2024 Cost of a Data Breach Report, the average cost of a data breach reached $4.88 million, and social engineering remained one of the leading initial attack vectors. Gartner predicted that by 2026, 30% of enterprises will no longer consider identity verification and authentication solutions reliable in isolation because of AI-generated deepfakes.

What These Incidents Have in Common

All of these attacks shared a common structure: they exploited the human tendency to trust familiar identities, combined with time pressure that discouraged verification. The technology was the enabler, but the attack surface was process and psychology — not software vulnerabilities.

```mermaid
graph TD
    A[Unexpected Request Arrives] --> B{Is the sender identity verified\nthrough a channel you initiated?}
    B -- Yes --> C[Proceed with normal authorization]
    B -- No --> D{Does it involve money, credentials,\nor system access?}
    D -- No --> E[Proceed with standard caution]
    D -- Yes --> F[STOP — Verify out-of-band]
    F --> G[Call known number from directory\nNOT from the message]
    G --> H{Identity confirmed\nvia separate channel?}
    H -- Yes --> I[Proceed — document verification]
    H -- No --> J[Escalate to security team immediately]
    style J fill:#c0392b,color:#fff
    style F fill:#e67e22,color:#fff
    style I fill:#27ae60,color:#fff
```
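The decision flowchart above is simple enough to encode directly. One place to use it is behind an internal request-intake form or chat bot that prompts employees at the right moment. This is a minimal sketch, and the function and enum names are hypothetical.

```python
from enum import Enum
from typing import Optional

class Decision(Enum):
    PROCEED_NORMAL = "Proceed with normal authorization"
    PROCEED_WITH_CAUTION = "Proceed with standard caution"
    VERIFY_OUT_OF_BAND = "STOP: call a known directory number, not one from the message"
    PROCEED_DOCUMENTED = "Proceed and document the verification"
    ESCALATE = "Escalate to the security team immediately"

def triage(sender_verified_via_channel_you_initiated: bool,
           involves_money_credentials_or_access: bool,
           confirmed_out_of_band: Optional[bool] = None) -> Decision:
    """Walk the flowchart. confirmed_out_of_band stays None until the callback is made."""
    if sender_verified_via_channel_you_initiated:
        return Decision.PROCEED_NORMAL
    if not involves_money_credentials_or_access:
        return Decision.PROCEED_WITH_CAUTION
    if confirmed_out_of_band is None:
        return Decision.VERIFY_OUT_OF_BAND
    return Decision.PROCEED_DOCUMENTED if confirmed_out_of_band else Decision.ESCALATE


print(triage(False, True).name)         # VERIFY_OUT_OF_BAND
print(triage(False, True, False).name)  # ESCALATE
```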

Detection and Defense

The good news: while attackers are using AI to scale attacks, defenders have both technical and procedural tools available. The key insight is that no single control is sufficient — defense requires layering.

Technical Controls

#### Out-of-Band Verification

The single most effective control against deepfake fraud is a mandatory out-of-band verification protocol for any request involving financial transactions or credential changes. The rule is simple: if a request for a wire transfer, credential handover, or system access change arrives via any digital channel — email, chat, video call, voice message — it must be verified by calling a phone number from your organization's internal directory or a previously stored known-good contact. Not the number provided in the message. Not by replying to the email.

This control is low-tech and highly effective because it bypasses the deepfake entirely. The attacker cannot intercept a call to a number they don't control.
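The key implementation detail is where the callback number comes from. This sketch assumes a hypothetical internal directory keyed by the identity the request claims to come from. It always returns the directory number. A different number in the message is treated as a warning sign, never as an alternative to call.

```python
import re
from typing import Optional

# Hypothetical directory maintained by HR/IT; never editable via the request channel
DIRECTORY = {
    "cfo@example.com": "+1-555-0100",
    "controller@example.com": "+1-555-0101",
}

def digits(number: str) -> str:
    """Strip formatting so '+1 (555) 0100' and '+1-555-0100' compare equal."""
    return re.sub(r"\D", "", number)

def callback_plan(claimed_sender: str, number_in_message: Optional[str] = None) -> dict:
    """Decide whom to call back; the number in the message is only ever a warning signal."""
    known = DIRECTORY.get(claimed_sender.strip().lower())
    if known is None:
        return {"action": "escalate", "reason": "claimed sender not in directory"}
    plan = {"action": "call", "number": known}
    if number_in_message and digits(number_in_message) != digits(known):
        plan["warning"] = "number in message differs from directory; do not use it"
    return plan


print(callback_plan("CFO@example.com", "+1 (555) 0199"))
print(callback_plan("ceo@examp1e.com"))
```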

#### AI Deepfake Detection Tools

Several technical tools exist to detect AI-generated content at the signal level:

- Intel FakeCatcher: Uses photoplethysmography (blood flow patterns visible in facial skin pixels) to distinguish real human faces from synthetic video. Real faces show subtle pulse-driven color changes; deepfakes typically do not replicate these at the pixel level. FakeCatcher has been demonstrated with real-time detection capabilities.

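- Microsoft's deepfake detection research: In 2020 Microsoft announced Video Authenticator, which gives a confidence score for whether a photo or video frame has been manipulated. It works by detecting the blending boundaries and subtle fading or grayscale artifacts that face-swapping leaves behind. Microsoft distributed it to a limited set of partner organizations rather than releasing it publicly.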

- Voice liveness detection: Dedicated services and APIs analyze audio for artifacts of synthesis — phase inconsistencies, spectral smoothing, unnatural pitch transitions. These are increasingly integrated into fraud detection platforms used by financial institutions.
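The idea behind photoplethysmography is that a real face's average skin color fluctuates faintly at the heart rate, so a frequency scan of that signal should show a peak in the human pulse band. The sketch below is a toy illustration of that signal principle, not Intel's FakeCatcher algorithm. It assumes you already have one mean green-channel value per video frame from a face region; extracting that value (for example with OpenCV) is out of scope. A synthetic signal stands in for real video.

```python
import math

def dominant_pulse_bpm(green_means: list[float], fps: float,
                       low_hz: float = 0.7, high_hz: float = 4.0,
                       step_hz: float = 0.05) -> float:
    """Return the strongest frequency in the pulse band, in beats per minute.

    green_means: one mean green-channel value per frame for a face region.
    The 0.7-4.0 Hz band (42-240 bpm) covers plausible human heart rates.
    """
    if len(green_means) < 2 or fps <= 0:
        raise ValueError("need at least two frames and a positive frame rate")
    mean = sum(green_means) / len(green_means)
    centered = [v - mean for v in green_means]  # drop the average-brightness (DC) term
    best_hz, best_power = low_hz, -1.0
    steps = int(round((high_hz - low_hz) / step_hz))
    for i in range(steps + 1):
        hz = low_hz + i * step_hz
        omega = 2 * math.pi * hz / fps  # radians per frame
        # Single-frequency DFT: correlate the signal with cosine and sine at this frequency
        re_part = sum(x * math.cos(omega * t) for t, x in enumerate(centered))
        im_part = sum(x * math.sin(omega * t) for t, x in enumerate(centered))
        power = re_part ** 2 + im_part ** 2
        if power > best_power:
            best_hz, best_power = hz, power
    return best_hz * 60


# Synthetic stand-in for real video: 10 s at 30 fps with a faint 1.2 Hz (72 bpm) pulse
fps = 30.0
signal = [120.0 + 0.3 * math.sin(2 * math.pi * 1.2 * t / fps) for t in range(300)]
print(round(dominant_pulse_bpm(signal, fps)))  # 72
```

A missing or implausible peak is only a weak hint. Video compression, lighting, and motion also wash out this signal, which is why production detectors combine many features.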

#### Email Analysis for LLM-Generated Text

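LLM-generated phishing emails can sometimes be flagged with statistical text analysis. One approach is perplexity scoring: in many contexts, LLMs produce text with lower perplexity (more predictable token sequences) than human writers do. This is not foolproof, but it can serve as a risk signal in email security tooling. The heuristic below does not compute perplexity directly, because that requires a language model. Instead, it uses cheaper stylometric proxies: sentence-length variation, vocabulary richness, and filler-phrase density.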

```python
import re
import statistics

def estimate_llm_likelihood(text: str) -> dict:
    """
    Heuristic estimate of LLM-generated text likelihood.
    Uses sentence-level variance and vocabulary richness as signals.
    Higher uniformity score = more likely LLM-generated.

    NOTE: This is a simplified heuristic for illustration.
    Production use should call a dedicated LLM-detection API
    (e.g., Originality.ai, GPTZero API, or fine-tuned classifier).
    """
    # Split into sentences
    sentences = re.split(r'[.!?]+', text.strip())
    sentences = [s.strip() for s in sentences if len(s.strip()) > 10]
    if len(sentences) < 3:
        return {"score": 0.0, "confidence": "insufficient_data"}

    # Feature 1: Sentence length variance
    # LLMs tend toward uniform sentence lengths; humans vary more
    lengths = [len(s.split()) for s in sentences]
    length_stdev = statistics.stdev(lengths) if len(lengths) > 1 else 0
    length_mean = statistics.mean(lengths)
    coefficient_of_variation = length_stdev / length_mean if length_mean > 0 else 0

    # Feature 2: Vocabulary richness (Type-Token Ratio)
    # LLMs sometimes produce lower TTR on formal text
    words = text.lower().split()
    ttr = len(set(words)) / len(words) if words else 0

    # Feature 3: Filler phrase density
    # LLMs overuse certain transitional phrases
    llm_fillers = [
        "it is important to note", "in conclusion", "furthermore",
        "it is worth mentioning", "as mentioned above", "in summary",
        "to summarize", "needless to say", "it goes without saying"
    ]
    text_lower = text.lower()
    filler_count = sum(1 for phrase in llm_fillers if phrase in text_lower)
    filler_density = filler_count / len(sentences)

    # Composite heuristic score (0 = likely human, 1 = likely LLM)
    # Low CV + high filler density → LLM signal
    uniformity_signal = max(0, 1 - coefficient_of_variation)
    llm_score = (uniformity_signal * 0.4) + (filler_density * 0.4) + (max(0, 0.6 - ttr) * 0.2)
    llm_score = min(1.0, llm_score)
    confidence = "high" if len(sentences) >= 8 else "medium"

    return {
        "score": round(llm_score, 3),
        "confidence": confidence,
        "signals": {
            "sentence_length_cv": round(coefficient_of_variation, 3),
            "vocabulary_richness_ttr": round(ttr, 3),
            "filler_phrase_density": round(filler_density, 3)
        },
        "interpretation": "Possible LLM-generated text" if llm_score > 0.6 else "Likely human-written"
    }

# Example usage
sample_email = """
Dear John, I hope this message finds you well.
I wanted to reach out regarding an urgent matter that requires your immediate attention.
It is important to note that we need to process a wire transfer before end of business today.
In conclusion, please confirm your availability to discuss this matter at your earliest convenience.
Furthermore, the details of the transfer will be provided upon confirmation.
"""

result = estimate_llm_likelihood(sample_email)
print(f"LLM likelihood score: {result['score']}")
print(f"Interpretation: {result['interpretation']}")
print(f"Signals: {result['signals']}")
```
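To show what perplexity itself measures, here is a toy word-bigram language model with add-one (Laplace) smoothing. Perplexity is the exponential of the average negative log-probability per token, so text the model finds predictable scores low. Real detectors score text with a large neural language model rather than a bigram model. The reference corpus and test sentences here are made-up examples.

```python
import math
from collections import Counter

def train_bigram(corpus: str) -> tuple[Counter, Counter, set]:
    """Count word bigrams in a reference corpus (lowercased, whitespace-tokenized)."""
    tokens = ["<s>"] + corpus.lower().split()
    context_counts = Counter(tokens[:-1])            # times each token precedes another
    bigram_counts = Counter(zip(tokens, tokens[1:]))
    vocab = set(tokens)
    return context_counts, bigram_counts, vocab

def perplexity(text: str, model: tuple[Counter, Counter, set]) -> float:
    """exp(-mean log P(word | previous word)) under an add-one smoothed bigram model."""
    context_counts, bigram_counts, vocab = model
    vocab_size = len(vocab) + 1  # +1 reserves probability mass for unseen words
    tokens = ["<s>"] + text.lower().split()
    if len(tokens) < 2:
        raise ValueError("text must contain at least one word")
    log_prob = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        p = (bigram_counts[(prev, word)] + 1) / (context_counts[prev] + vocab_size)
        log_prob += math.log(p)
    return math.exp(-log_prob / (len(tokens) - 1))


reference = ("please process the wire transfer today . "
             "please process the invoice today . please confirm the transfer .")
model = train_bigram(reference)
print(perplexity("please process the wire transfer today", model))      # lower: familiar
print(perplexity("quarterly roadmap review moved to thursday", model))  # higher: unfamiliar
```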

#### Domain and Sender Threat Intelligence

For email-based attacks, automated checks against threat intelligence feeds can catch newly registered lookalike domains before they reach inboxes.

```bash
#!/bin/bash
# check-domain-threat-intel.sh
# Check a domain against VirusTotal, WHOIS, and DNS for phishing signals
# Requires: VIRUSTOTAL_API_KEY environment variable, plus curl, python3, whois, dig

DOMAIN="${1:?Usage: $0 <domain>}"
VT_API_KEY="${VIRUSTOTAL_API_KEY:?Set VIRUSTOTAL_API_KEY env variable}"

echo "=== Threat Intel Check: $DOMAIN ==="

# VirusTotal domain report
echo ""
echo "[VirusTotal] Checking domain reputation..."
VT_RESPONSE=$(curl -s --request GET \
  --url "https://www.virustotal.com/api/v3/domains/${DOMAIN}" \
  --header "x-apikey: ${VT_API_KEY}")

# Parse engine detection counts and the WHOIS-derived creation date
VT_SUMMARY=$(echo "$VT_RESPONSE" | python3 -c "
import sys, json, datetime
data = json.load(sys.stdin)
attrs = data.get('data', {}).get('attributes', {})
stats = attrs.get('last_analysis_stats', {})
total = sum(stats.values()) if stats else 0
print(f'Malicious detections: {stats.get(\"malicious\", 0)}/{total}')
creation_date = attrs.get('creation_date', 'Unknown')
if isinstance(creation_date, int):
    creation_date = datetime.datetime.fromtimestamp(creation_date, tz=datetime.timezone.utc).strftime('%Y-%m-%d')
print(f'Domain created: {creation_date}')
" 2>/dev/null || echo "Parse error: check raw response")
echo "$VT_SUMMARY"

# WHOIS age check (newly registered domains = higher risk)
echo ""
echo "[WHOIS] Checking domain age..."
whois "$DOMAIN" 2>/dev/null | grep -iE "creation|registered|created" | head -3

# DNS resolution: A records, plus MX records for the free-provider check below
echo ""
echo "[DNS] A records:"
dig +short "$DOMAIN" A | head -3
echo "[DNS] MX records:"
dig +short "$DOMAIN" MX | head -5

echo ""
echo "=== Manual review recommended if: domain is < 30 days old, has > 0 malicious detections,"
echo "    or its MX records point to free email providers ==="
```
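The script resolves the domain's DNS records, but it never tests whether the domain imitates yours. Here is a minimal lookalike check, assuming a hypothetical list of domains your organization owns. It normalizes a few common ASCII character swaps and flags candidates within a small edit distance. It does not handle Unicode homoglyphs or punycode (IDN) domains, and a production check should cover those as well.

```python
from typing import Optional

# Hypothetical: domains your organization actually owns
PROTECTED_DOMAINS = ["example.com", "example-corp.com"]

# A few ASCII look-alike substitutions (far from exhaustive)
DIGIT_SWAPS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})

def normalize(domain: str) -> str:
    """Collapse common visual tricks so 'examp1e.com' and 'exarnple.com' read as 'example.com'."""
    return domain.lower().translate(DIGIT_SWAPS).replace("rn", "m")

def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,                # delete ca
                            curr[j - 1] + 1,            # insert cb
                            prev[j - 1] + (ca != cb)))  # substitute
        prev = curr
    return prev[-1]

def lookalike_of(candidate: str, max_distance: int = 2) -> Optional[str]:
    """Return the protected domain that `candidate` imitates, or None."""
    if candidate.lower() in PROTECTED_DOMAINS:
        return None  # it is one of ours
    cand = normalize(candidate)
    for legit in PROTECTED_DOMAINS:
        if levenshtein(cand, normalize(legit)) <= max_distance:
            return legit
    return None


for d in ["examp1e.com", "exarnple.com", "example.com", "unrelated.org"]:
    print(d, "->", lookalike_of(d))
```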

#### Zero-Trust for Financial and Credential Requests

Zero-trust architecture principles apply directly to this threat: never trust a request based on identity alone. Every request for sensitive action should be treated as potentially compromised regardless of how convincing the requester appears. This means:

- No wire transfers authorized via email, chat, or voice message alone — period.

- MFA resets require a secondary approval from a supervisor through a separate channel.

- Vendor onboarding and any change to a vendor's banking details require a documented callback to the vendor's known phone number.

- Privileged access requests are logged, reviewed, and require multi-party authorization above defined thresholds.
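These rules can be encoded so that a workflow tool refuses to complete a sensitive request until independent evidence is attached. In this sketch, the request types and evidence labels are hypothetical. Privileged access is simplified to always require multi-party approval, not only above a threshold. The channel is recorded but deliberately never counted as evidence, because a convincing video call is exactly what a deepfake provides.

```python
from dataclasses import dataclass, field

# Hypothetical encoding of the rules above: request type -> evidence that must be attached
REQUIRED_EVIDENCE = {
    "wire_transfer": {"out_of_band_callback"},
    "mfa_reset": {"supervisor_approval_via_separate_channel"},
    "vendor_bank_change": {"callback_to_vendor_known_number"},
    "privileged_access": {"request_logged", "multi_party_approval"},  # simplified: always
}

@dataclass
class SensitiveRequest:
    kind: str
    channel: str  # "email", "chat", "video", "voicemail": recorded, never trusted
    evidence: set[str] = field(default_factory=set)

def missing_controls(req: SensitiveRequest) -> set[str]:
    """Controls still outstanding; an empty set means the request may proceed."""
    required = REQUIRED_EVIDENCE.get(req.kind)
    if required is None:
        return {"unrecognized_request_type_escalate"}
    # req.channel is deliberately not consulted: no channel, including a live
    # video call, counts as proof of identity on its own.
    return required - req.evidence


req = SensitiveRequest(kind="wire_transfer", channel="video")
print(missing_controls(req))  # {'out_of_band_callback'}
req.evidence.add("out_of_band_callback")
print(missing_controls(req))  # set()
```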

Process Controls

The Call-Back Rule: For any wire transfer above a defined threshold (suggested: $10,000 or whatever is meaningful for your organization), a mandatory callback to a known phone number in the internal directory is required before authorization. This should be policy, not suggestion — written into financial controls with the same weight as dual-signature requirements.

Multi-Person Authorization Thresholds: Define dollar thresholds above which no single person can authorize a transfer. This is standard financial control practice, but many organizations have informal exceptions. Those exceptions are the attack surface.
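The callback and multi-person rules translate into a small check. It has no override parameter, because informal exceptions are the attack surface. The $10,000 callback threshold is the suggestion above. The $50,000 dual-approval threshold is a hypothetical placeholder.

```python
from dataclasses import dataclass

CALLBACK_THRESHOLD = 10_000       # suggested above; use what is meaningful for you
DUAL_APPROVAL_THRESHOLD = 50_000  # hypothetical multi-person threshold

@dataclass(frozen=True)
class WireRequest:
    amount: float
    approvers: frozenset     # distinct people inside the organization who signed off
    callback_verified: bool  # callback placed to a directory number, not one from the request

def check_wire(req: WireRequest) -> list[str]:
    """Return policy violations; an empty list means the transfer may be released.

    There is intentionally no override flag.
    """
    violations = []
    if not req.approvers:
        violations.append("at least one approver is required")
    if req.amount > CALLBACK_THRESHOLD and not req.callback_verified:
        violations.append("callback to a known directory number is required")
    if req.amount > DUAL_APPROVAL_THRESHOLD and len(req.approvers) < 2:
        violations.append("two independent approvers are required at this amount")
    return violations


print(check_wire(WireRequest(5_000, frozenset({"alice"}), False)))   # []
print(check_wire(WireRequest(25_000, frozenset({"alice"}), False)))  # callback missing
print(check_wire(WireRequest(250_000, frozenset({"alice"}), True)))  # second approver missing
```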

AI-Generated Phishing Simulations: Security awareness training built on old-style phishing examples is insufficient. Run simulations using LLM-generated spear phishing emails that reference real projects, real colleagues, and real company vocabulary. Users need to develop instincts calibrated to the current threat, not the 2015 threat.

Incident Response Playbook for Deepfake Attempts: Define a clear escalation path when an employee suspects a deepfake call or message. The playbook should include these steps: end the call or conversation immediately, report to the security team, do not transfer funds or provide credentials pending verification, and preserve call recordings and message artifacts.

```mermaid
graph LR
    A[Request Received] --> B[Email / Message]
    A --> C[Voice Call]
    A --> D[Video Call]
    B --> E[Technical Layer\nEmail Authentication\nSPF/DKIM/DMARC\nLLM Detection Scoring\nDomain Age Check]
    C --> F[Technical Layer\nVoice Liveness Detection\nCaller ID Verification\nAudio Artifact Analysis]
    D --> G[Technical Layer\nDeepfake Detection\nFakeCatcher / Frame Analysis\nLatency Anomaly Detection]
    E --> H[Process Layer\nOut-of-Band Verification\nCallback to Known Number\nDo NOT use contact info from message]
    F --> H
    G --> H
    H --> I[Authorization Layer\nDual Approval for Transfers\nZero-Trust Policy\nAudit Logging]
    I --> J{Action Type}
    J -- Financial Transfer --> K[Multi-Person Approval\n+ Documented Callback]
    J -- Credential Change --> L[IT Security Review\n+ Supervisor Sign-off]
    J -- System Access --> M[Privileged Access Workflow\n+ Time-limited Grants]
    style K fill:#27ae60,color:#fff
    style L fill:#27ae60,color:#fff
    style M fill:#27ae60,color:#fff
    style E fill:#2980b9,color:#fff
    style F fill:#2980b9,color:#fff
    style G fill:#2980b9,color:#fff
```
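On the email branch of the diagram, the receiving mail server records SPF, DKIM, and DMARC results in the Authentication-Results header defined in RFC 8601. This sketch reads those verdicts with Python's standard `email` package. It assumes you know the authserv-id your own boundary server stamps (the hypothetical `mx.example.com` here). It ignores headers stamped by anyone else, since a sender can forge an Authentication-Results header of their own.

```python
import re
from email import message_from_string

def auth_results(raw_message: str, trusted_authserv_id: str) -> dict[str, str]:
    """Collect spf/dkim/dmarc verdicts from Authentication-Results headers stamped by
    our own receiving server (identified by the authserv-id, the header's first token)."""
    msg = message_from_string(raw_message)
    verdicts: dict[str, str] = {}
    for header in msg.get_all("Authentication-Results", []):
        head = str(header).split(";", 1)[0].split()
        if not head or head[0].lower() != trusted_authserv_id.lower():
            continue  # stamped by someone else: could be forged by the sender
        for method, result in re.findall(r"\b(spf|dkim|dmarc)=(\w+)", str(header), re.IGNORECASE):
            verdicts.setdefault(method.lower(), result.lower())
    return verdicts

def passes_dmarc(verdicts: dict[str, str]) -> bool:
    return verdicts.get("dmarc") == "pass"


raw = (
    "Authentication-Results: mx.example.com;\n"
    " spf=pass smtp.mailfrom=vendor.test;\n"
    " dkim=pass header.d=vendor.test;\n"
    " dmarc=fail header.from=vendor.test\n"
    "From: CFO <cfo@vendor.test>\n"
    "Subject: Urgent wire\n"
    "\n"
    "Please process today.\n"
)
verdicts = auth_results(raw, "mx.example.com")
print(verdicts)                # {'spf': 'pass', 'dkim': 'pass', 'dmarc': 'fail'}
print(passes_dmarc(verdicts))  # False
```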

Building a Deepfake-Resistant Culture

Technical controls are necessary but not sufficient. The most sophisticated detection system in the world fails if the person on the video call can be pressured into bypassing it "just this once" under time pressure from an apparent authority figure.

Establish Out-of-Band Codewords

Some organizations, particularly those that have already encountered or seriously modeled this threat, establish shared codewords for executives making unusual requests. The codeword system works like this: any unusual financial or access request from an executive must include a pre-agreed rotating codeword that was established in person or through a verified channel. No codeword, no action. This is simple, low-tech, and difficult for an attacker to compromise without already having access to internal communications.
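One way to rotate codewords without handing out a new word every week is to exchange a secret once, in person, and derive each week's codeword from it with an HMAC. This is an assumed design, not a standard scheme. The wordlist is a tiny hypothetical placeholder; a real list should be large enough that guessing is impractical.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical, deliberately tiny wordlist; use a list of thousands of words in practice
WORDLIST = ["harbor", "granite", "lantern", "orchid", "meridian", "falcon",
            "cobalt", "willow", "summit", "ember", "quartz", "juniper"]

def codeword_for(shared_secret: bytes, day: date, words: int = 3) -> str:
    """Derive the codeword for the ISO week containing `day` from a secret exchanged in person."""
    year, week, _ = day.isocalendar()
    digest = hmac.new(shared_secret, f"{year}-W{week:02d}".encode(), hashlib.sha256).digest()
    # Map digest bytes to words (slight modulo bias; acceptable for a sketch)
    return "-".join(WORDLIST[b % len(WORDLIST)] for b in digest[:words])

def verify_codeword(shared_secret: bytes, spoken: str, day: date) -> bool:
    """Constant-time comparison of what the requester said against this week's codeword."""
    expected = codeword_for(shared_secret, day).encode()
    return hmac.compare_digest(expected, spoken.strip().lower().encode())


secret = b"exchanged-in-person-at-offsite"  # hypothetical; keep it in a password manager
today = date(2026, 3, 2)
word = codeword_for(secret, today)
print(verify_codeword(secret, word, today))            # True
print(verify_codeword(secret, "falcon-ember", today))  # False
```

Because the codeword changes weekly, a word overheard on one compromised call stops working quickly.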

Adopt an Assume-Breach Mindset

Security culture often defaults to assuming that systems and identities are legitimate unless proven otherwise. The deepfake threat inverts this: treat any unexpected request involving money, credentials, or privileged access as suspicious by default, regardless of who appears to be making it. This is not paranoia — it is calibrated skepticism in an environment where identity can be convincingly fabricated.

The practical implication: employees at all levels need psychological permission to say "I need to verify this before I act" even when facing apparent authority pressure. An executive who creates a culture where requests can be questioned without consequence is actively reducing organizational risk.

Red Team Exercises with AI-Generated Attacks

If your security team is not already running red team exercises using AI-generated deepfake content, begin planning them now. This means:

- Sending LLM-generated spear phishing emails that reference real internal projects and colleagues (with appropriate ethical guardrails and employee notification)

- Running simulated fake video calls with AI voice to test finance team response

- Testing whether IT helpdesk staff appropriately escalate requests for MFA resets or password changes that come from convincing but unverified voices

The goal of these exercises is not to catch employees failing — it is to build calibrated intuition for what these attacks feel like in practice, so that the psychological response ("this feels urgent and authoritative, I should comply") is replaced with a trained response ("this is exactly what a deepfake attack is supposed to feel like").
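Since the goal is calibration rather than catching failures, it helps to track the behaviors you want (out-of-band verification and reporting) per team across exercises. Here is a minimal sketch with hypothetical outcome labels:

```python
from collections import Counter

# Hypothetical outcome labels for one simulated lure sent to one person
OUTCOMES = ("complied", "no_action", "verified_out_of_band", "reported")

def exercise_summary(results: list[tuple[str, str]]) -> dict[str, dict[str, float]]:
    """Per-team share of each outcome, from (team, outcome) pairs."""
    by_team: dict[str, Counter] = {}
    for team, outcome in results:
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome}")
        by_team.setdefault(team, Counter())[outcome] += 1
    return {
        team: {o: counts[o] / sum(counts.values()) for o in OUTCOMES}
        for team, counts in by_team.items()
    }


results = [
    ("finance", "verified_out_of_band"), ("finance", "reported"),
    ("finance", "complied"), ("finance", "verified_out_of_band"),
    ("helpdesk", "complied"), ("helpdesk", "reported"),
]
for team, rates in exercise_summary(results).items():
    print(team, {k: f"{v:.0%}" for k, v in rates.items()})
```

Rising verification and reporting rates between exercises are a better sign of calibration than a count of who clicked.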

Structured Skepticism as Organizational Norm

Build formal skepticism into authorization workflows. A finance employee who says "I need to call back before I process this" should be executing policy, not being obstructionist. The callback requirement should be so routine that bypassing it feels as unusual as skipping a dual-signature on a large check.

The organizational norm shift required is: verification is not an insult to the requester's authority. It is the policy. The real CEO does not ask employees to skip verification. If someone is pressuring an employee to skip verification because "there's no time," that pressure itself is a red flag worth escalating.

Conclusion

The $25 million Hong Kong deepfake case was not an isolated anomaly. It was a preview. The AI capabilities that made that attack possible have become cheaper, faster, and more accessible with every quarter since. In 2026, the toolchain required to execute a convincing deepfake attack on a mid-market organization is within reach of moderately resourced criminal groups, not just nation-states.

The core insight for developers and security engineers is this: the attack surface has shifted from software vulnerabilities to process vulnerabilities. No amount of endpoint detection or email filtering addresses a threat that succeeds because a human trusted a familiar face and voice. The defenses that work are the ones that make identity verification mandatory before consequential action — regardless of how convincing the requester appears.

The layered approach outlined here — technical detection, out-of-band verification, zero-trust authorization policy, and culture-level skepticism training — is not a checklist to complete and file away. It is an ongoing posture that needs to evolve as attacker capabilities evolve. The AI arms race in security is real, and it is accelerating.

For broader context on the AI security landscape, see our companion posts: [Post 059: Platform Engineering and Security Automation](/blog/059-platform-engineering.md), [Post 060: Internal Developer Platforms](/blog/060-internal-developer-platforms.md), and [Post 061: AI-Powered Cybersecurity](/blog/061-ai-powered-cybersecurity.md). Each covers a different dimension of how AI is reshaping both the attack and defense surfaces for modern organizations.

The organizations that will weather this transition are not necessarily those with the largest security budgets. They are the ones that have built verification into muscle memory — where "let me call you back on a known number" is a reflex, not an afterthought.

*Published by AmtocSoft Tech Insights | amtocsoft.blogspot.com*
