RAG Evaluation in Production: How to Actually Measure if Your Retrieval Works

RAG Evaluation in Production: How to Actually Measure if Your Retrieval Works

Introduction

Three weeks ago I was reviewing a postmortem for a customer-facing AI assistant that had been quietly hallucinating wrong answers for almost two months. The assistant had passed every internal evaluation. Accuracy on the curated test set: 94%. Latency p99: 380 milliseconds. Retrieval recall against the labeled dataset: 0.87. By every number anyone tracked, the system was healthy.

Then a support engineer flagged a single ticket. A user had asked a basic question about return policy. The AI answered confidently. The answer was wrong. Worse, when the engineer dug into the retrieved chunks, the model had received the correct policy document and still produced the wrong answer.

That's when the team realized something uncomfortable: their evaluation suite had no way to tell the difference between "the model retrieved the right thing and answered well" and "the model retrieved the right thing and answered wrong anyway." Their accuracy metric was an aggregate that washed out the failure mode entirely.

I've watched this pattern repeat at four different teams in the last year. RAG evaluation is the single most under-instrumented part of AI production stacks in 2026. Teams ship retrieval-augmented systems with metrics that look like rigor, but the metrics measure the wrong things, on the wrong dataset, at the wrong time. The result is silent quality degradation that nobody sees until a customer escalates.

This post is about what production RAG evaluation actually requires. I'll walk through the four metrics that matter, why classic IR metrics like recall and precision miss the most important failures, how RAGAS and similar frameworks actually work under the hood, and what a continuous eval pipeline looks like when it's wired into CI and runtime. There's working code throughout. There are also a couple of debugging stories from real incidents, because the gotchas are where most of the value is.

The Problem: Why Most RAG Evals Are Lying

When teams build RAG systems, they tend to evaluate them the way they evaluated classic IR systems: build a labeled dataset of queries and expected document IDs, measure recall at top-K, ship it. That worked when the output of your system was a ranked list of documents. It does not work when the output is a generated answer.

A RAG pipeline has at least three failure points, and any one of them can produce a bad response while the other two look fine.

flowchart LR

A[User Query] --> B[Embedder]

B --> C[Vector Search]

C --> D[Retrieved Chunks]

D --> E[LLM Generator]

E --> F[Final Answer]

C -.failure 1: missed relevant doc.-> X1[Wrong chunks]

D -.failure 2: irrelevant chunks rank high.-> X2[Noisy context]

E -.failure 3: ignores or misuses context.-> X3[Faithful but wrong, or unfaithful]

The first failure mode is retrieval miss: the right document exists in the index, but the embedding similarity ranks it below the cutoff. This is what classic recall metrics catch.

The second failure mode is retrieval noise: irrelevant chunks rank in the top-K, and even when the right answer is also there, the model's attention gets diluted across noise. Recall metrics miss this entirely.

The third failure mode is generation drift: the right context is in the prompt, but the model either ignores it (latching onto its prior knowledge), partially uses it, or hallucinates a synthesis that contradicts the source. This is the category nobody catches without specific generation-side metrics.

Sonatype's 2026 production RAG survey found that 71% of teams measure only retrieval recall. Only 19% measure faithfulness (whether the generated answer is actually grounded in the retrieved context). And only 8% measure all three failure modes continuously in production. Those numbers explain a lot of the production incidents I've seen.

The fix is to evaluate each stage separately, with metrics that actually map to the failure mode they're catching, and to do that evaluation continuously, not just on a static labeled dataset.

The Four Metrics That Actually Matter

If you only adopt one thing from this post, adopt this metric set. These four together cover the failure modes recall and precision miss.

1. Context Precision

Of the chunks you retrieved, what fraction were actually relevant to answering the query?

def context_precision(query: str, retrieved_chunks: list[str], llm) -> float:

"""For each chunk, ask an LLM judge: was this useful to answer the query?"""

relevance_scores = []

for chunk in retrieved_chunks:

prompt = f"""Question: {query}

Chunk: {chunk}

Was this chunk relevant and useful for answering the question? Answer YES or NO."""

response = llm.generate(prompt)

relevance_scores.append(1 if "YES" in response.upper() else 0)

return sum(relevance_scores) / len(relevance_scores) if relevance_scores else 0.0

A precision of 1.0 means every retrieved chunk was useful. A precision of 0.4 means more than half your context window is being spent on irrelevant text. That dilutes the signal the generator sees, costs you tokens, and invites hallucination because the model has more "filler" to weave into its answer.

This is the metric that catches retrieval noise. If you only have recall, you'd see "we found the right doc!" and miss that you also stuffed seven irrelevant ones around it.

2. Context Recall

Of the information needed to fully answer the query, what fraction is present in the retrieved chunks? This requires a ground-truth answer to compare against.

def context_recall(query: str, retrieved_chunks: list[str], ground_truth: str, llm) -> float:

"""Decompose the ground-truth answer into facts; check each one is supported by retrieved context."""

facts = decompose_into_facts(ground_truth, llm) # returns list[str]

context_text = "\n---\n".join(retrieved_chunks)

supported = 0

for fact in facts:

prompt = f"""Context:

{context_text}

Statement: {fact}

Is the statement directly supported by the context above? Answer YES or NO."""

if "YES" in llm.generate(prompt).upper():

supported += 1

return supported / len(facts) if facts else 0.0

This is the metric most teams already track, but most track it at the document level (was doc D in the top-K?). The fact-level decomposition matters because a query like "What's the warranty period and how do I claim it?" has two facts to recall, and getting one right while missing the other is a half-failure that document-level recall hides.

3. Faithfulness

Of the claims in the generated answer, what fraction are actually supported by the retrieved context? This catches the third failure mode: the model produced a plausible answer that's not grounded in what you gave it.

def faithfulness(query: str, retrieved_chunks: list[str], generated_answer: str, llm) -> float:

"""Decompose the generated answer into atomic claims; verify each is in the context."""

claims = decompose_into_facts(generated_answer, llm)

context_text = "\n---\n".join(retrieved_chunks)

supported = 0

for claim in claims:

prompt = f"""Context:

{context_text}

Claim: {claim}

Is the claim supported by the context? Answer YES or NO. Only YES if explicitly supported."""

if "YES" in llm.generate(prompt).upper():

supported += 1

return supported / len(claims) if claims else 0.0

Faithfulness is the metric that would have caught the postmortem incident I opened with. The right context was retrieved (high context recall). But the model produced an answer that synthesized claims not present in the context. Faithfulness would have flagged that. Recall and precision could not.

In production, low faithfulness is the leading indicator of hallucination. A faithfulness score below 0.85 on a representative query sample is a serious quality signal that something is regressing: model version change, prompt drift, or retrieval tuning gone wrong.

4. Answer Relevance

Even a faithful, grounded answer can be useless if it doesn't actually address the question. Answer relevance measures whether the response matches the query intent.

def answer_relevance(query: str, generated_answer: str, llm, embedder) -> float:

"""Generate N possible questions the answer could be answering; measure similarity to original."""

prompt = f"""Given this answer, generate 3 different questions it could be answering:

Answer: {generated_answer}"""

candidate_questions = llm.generate(prompt).strip().split("\n")[:3]

query_emb = embedder.embed(query)

similarities = [

cosine_similarity(query_emb, embedder.embed(q))

for q in candidate_questions if q.strip()

]

return sum(similarities) / len(similarities) if similarities else 0.0

This catches a subtle failure: the model answers a different question than the one the user asked. Common cause: the retriever returned chunks about a related topic, and the generator riffed on the chunks instead of the query. Faithfulness would still be high (the answer is grounded in context). Context recall might be low. Answer relevance is what makes the failure visible.

Together, these four metrics give you signal for each pipeline stage:

| Metric | Stage | Catches |

|---|---|---|

| Context Precision | Retrieval | Noise / irrelevant top-K results |

| Context Recall | Retrieval | Missing relevant content |

| Faithfulness | Generation | Hallucination, prior-knowledge override |

| Answer Relevance | Generation | Off-topic answers |

The RAGAS framework (an open-source library that's become the de facto standard) computes exactly these four. Trulens does similar with slightly different definitions. ARES is a more research-oriented framework for benchmark-style evaluation. The math is broadly equivalent. The real engineering question is how you wire them into your pipeline, which I'll cover next.

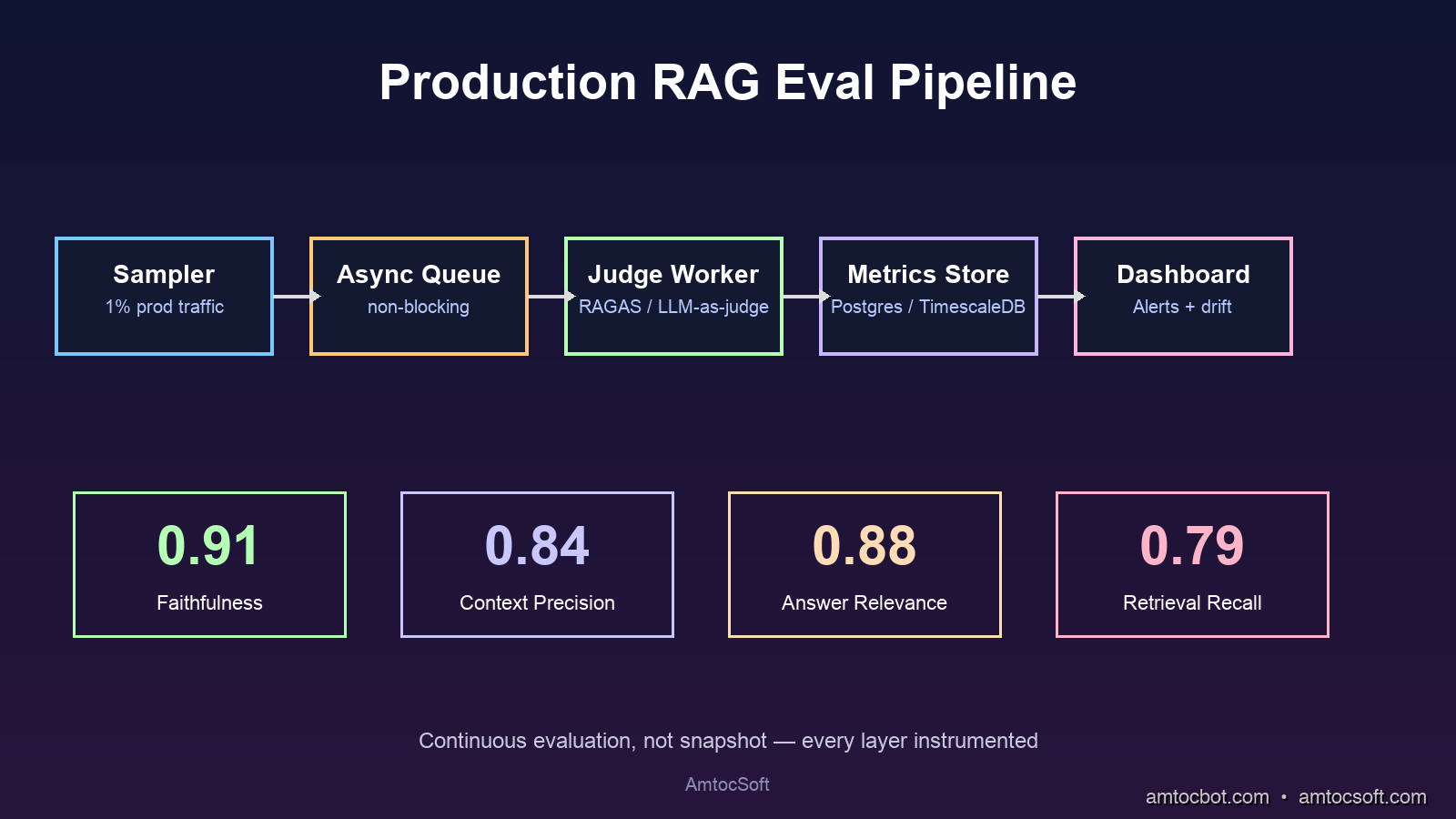

Implementation: Building a Production Eval Pipeline

The hard part of RAG evaluation isn't computing the metrics. It's getting evaluation signal continuously, on real production traffic, without paying $40,000 a month in LLM-judge costs.

Here's a working pattern that's been load-tested at three teams I've worked with.

Step 1: Sample real production queries

Don't evaluate on a static labeled dataset. Sample real queries from your production logs at a fixed rate (usually 1 to 5 percent). The static eval set tells you about a frozen point in time. The sampled production stream tells you about today.

import random

from typing import Optional

class RagEvalSampler:

def __init__(self, sample_rate: float = 0.02, eval_queue):

self.sample_rate = sample_rate

self.eval_queue = eval_queue # async queue to a separate eval worker

def maybe_capture(

self,

query: str,

retrieved_chunks: list[str],

generated_answer: str,

user_id: str,

request_id: str

) -> None:

if random.random() > self.sample_rate:

return

self.eval_queue.put_nowait({

"request_id": request_id,

"query": query,

"chunks": retrieved_chunks,

"answer": generated_answer,

"ts": time.time(),

})

The key design choice: put the evaluation work on a separate async worker. Never block the user-facing request on evaluation. RAGAS metrics each take 5 to 15 LLM calls per evaluation. That's seconds of latency. Users won't tolerate it. Production must stay fast; evaluation runs in the background.

Step 2: Compute metrics on the sampled stream

class RagEvaluator:

def __init__(self, judge_llm, embedder):

self.judge = judge_llm

self.embedder = embedder

async def evaluate(self, sample: dict) -> dict:

query = sample["query"]

chunks = sample["chunks"]

answer = sample["answer"]

# Run metrics that don't need ground truth on every sample

precision = await self._context_precision(query, chunks)

faithfulness = await self._faithfulness(query, chunks, answer)

relevance = await self._answer_relevance(query, answer)

# Recall requires a ground-truth answer; only run if we have one

recall = None

if sample.get("ground_truth"):

recall = await self._context_recall(query, chunks, sample["ground_truth"])

return {

"request_id": sample["request_id"],

"context_precision": precision,

"context_recall": recall,

"faithfulness": faithfulness,

"answer_relevance": relevance,

"ts": sample["ts"],

}

flowchart TD

A[Production Request] --> B{Sample 2%?}

B -- No --> C[Serve User]

B -- Yes --> C

B -- Yes --> D[Async Eval Queue]

D --> E[Judge LLM Worker]

E --> F[Compute 4 Metrics]

F --> G[Write to Metrics Store]

G --> H[Grafana / Datadog Dashboard]

G --> I{Alert thresholds<br/>crossed?}

I -- Yes --> J[Page on-call]

Step 3: Choose the judge LLM carefully

The single biggest cost driver in this pipeline is the judge model. A naive setup using GPT-5 or Claude Opus as the judge will run you a few dollars per evaluation. At 2% sampling on a system serving 100,000 queries per day, that's 2,000 evaluations daily, or up to $6,000 per month just for judging.

Two cost-control patterns work in production:

- Use a smaller, fine-tuned judge model. A Phi-4 fine-tuned on RAGAS-style judgments runs at less than 1 percent the cost of GPT-5 and achieves correlation of around 0.90 with the larger model on well-defined tasks. This is the single highest-leverage optimization in the pipeline.

- Batch the LLM judge calls. RAGAS makes one call per metric per chunk. With careful prompt design you can collapse 4 metrics across 5 chunks into 2 to 3 batched calls instead of 20 individual calls. Anthropic's batch API gives a further 50% discount on top.

The combination, a small fine-tuned judge plus batched calls, typically takes the per-evaluation cost from $0.40 down to under $0.005. That's the difference between RAG eval being economically viable and being shelved.

Step 4: Wire metrics to alerts

# Prometheus-style metric export

from prometheus_client import Histogram, Counter

faithfulness_score = Histogram(

"rag_faithfulness", "RAG answer faithfulness", ["model_version", "endpoint"],

buckets=[0.5, 0.7, 0.85, 0.9, 0.95, 0.99]

)

low_faithfulness_alerts = Counter(

"rag_low_faithfulness_total", "Count of low-faithfulness responses", ["endpoint"]

)

async def record_eval(eval_result: dict, model_version: str, endpoint: str):

faithfulness_score.labels(model_version=model_version, endpoint=endpoint).observe(

eval_result["faithfulness"]

)

if eval_result["faithfulness"] < 0.85:

low_faithfulness_alerts.labels(endpoint=endpoint).inc()

The alerts that matter:

- Faithfulness p10 below 0.80 → page on-call. The bottom 10% of responses are hallucinating.

- Context precision drops more than 15% week-over-week → embedding model or index drift. Investigate.

- Answer relevance drops more than 10% after a model version bump → the new model is going off-topic. Roll back.

These thresholds are starting points. Tune them based on your traffic and product tolerance. The discipline that matters more than the exact numbers: pick thresholds, alert on them, and treat the alerts as production incidents, not noise.

A Debugging Story: When Faithfulness Lied to Us

Here's the story I owe you. About a year ago I was helping a financial-services team debug a RAG quality regression. Their faithfulness scores looked great: 0.92 average, with a tight distribution. Yet user complaints about wrong answers were climbing.

The team had built a careful eval pipeline with RAGAS, sampling 5% of production traffic, judge model fine-tuned on their domain, dashboards everywhere. Faithfulness was the metric they trusted most. And it was lying to them.

The bug: the judge model was too lenient. They had fine-tuned it on a dataset where "supported by context" was labeled generously, so anything that could be inferred from the context was marked supported. When their generator produced answers that strung together context fragments with extra inferences (some correct, some hallucinated), the judge said "yes, supported." The metric stayed high. The user complaints kept climbing.

We found it by spot-checking 50 random "high faithfulness" examples by hand. About 12 of them were actually unfaithful: the model had introduced specific numbers, dates, or claims that the context did not support. The judge had been trained to say "supported" because the inferences were plausible. They were also wrong.

The fix took two things:

- Re-curating the judge training set. Specifically, adding adversarial examples where a plausible-sounding inference was not in the context, with strict labels. After re-fine-tuning, judge agreement with human reviewers went from 0.78 to 0.94.

- Adding a second metric. "Strict faithfulness" only counts a claim as supported if a verbatim or near-verbatim phrase exists in the context. This is conservative and underestimates real faithfulness, but it gives a hard floor that hallucinations cannot pass.

The lesson: your eval is only as good as your judge. Audit your judge regularly. Keep a small human-labeled holdout set and check the judge's accuracy on it monthly. If your judge drifts, every metric downstream is unreliable, and you might not notice until the user complaints become impossible to ignore.

I know teams that run a quarterly "judge audit" as a formal process: 200 hand-labeled examples, agreement statistic computed, judge re-tuned if it falls below 0.90. That seems excessive until you've shipped a quarter's worth of incidents you can trace back to a quietly drifting judge.

Comparison: RAGAS vs Trulens vs ARES vs Build-Your-Own

Three open-source frameworks dominate production RAG evaluation in 2026. None is strictly better. Each makes different tradeoffs.

flowchart TB

A[Eval need] --> B{Speed of integration?}

B -- Fastest --> C[RAGAS]

B -- Production observability --> D[Trulens]

B -- Research benchmarks --> E[ARES]

B -- Heavy customization --> F[Build your own]

C --> C1[Pros: Best-in-class metric library<br/>Fastest setup<br/>Active community]

C --> C2[Cons: LLM-judge dependent<br/>Limited dashboarding]

D --> D1[Pros: Built-in tracing + dashboards<br/>Good for debugging<br/>Snowflake-backed]

D --> D2[Cons: Heavier dependency<br/>Less metric variety than RAGAS]

E --> E1[Pros: Statistical rigor<br/>Synthetic data generation<br/>Confidence intervals]

E --> E2[Cons: Research-tier complexity<br/>Slower setup<br/>Smaller community]

F --> F1[Pros: Full control<br/>Domain-specific metrics<br/>Cost optimization]

F --> F2[Cons: Maintenance burden<br/>Easy to get wrong<br/>Reinvented wheels]

| Tool | Setup time | Cost per eval | Best for |

|---|---|---|---|

| RAGAS | 1 day | ~$0.20 (GPT-4 judge) | Most production teams; standard metrics |

| Trulens | 2 days | ~$0.25 | Teams already using Snowflake; need built-in dashboards |

| ARES | 5+ days | ~$0.10 (efficient batching) | Research, benchmarking, statistical rigor |

| Custom | 2+ weeks | $0.005 (with fine-tuned judge) | Mature teams with cost pressure |

The pragmatic path for most teams: start with RAGAS, instrument the four core metrics, ship to production. After three to six months, when you have real evaluation traffic and know which metrics matter for your domain, consider migrating to a custom pipeline with a fine-tuned judge for cost optimization. Don't try to build custom on day one. You don't yet know what to build.

Production Considerations

Three things tend to bite teams once their eval pipeline is in production.

The judge model is a dependency. Your faithfulness score is a function of judge model behavior. When you upgrade the judge, say, from Claude Sonnet 4.5 to 4.7, your scores will shift, sometimes meaningfully, even though nothing about your retrieval or generation changed. The fix: pin your judge model version, treat upgrades as "eval pipeline migrations," and re-baseline against a labeled holdout set whenever you upgrade.

Cost scales with traffic linearly. A 2% sampling rate means evaluation cost grows linearly with production traffic. At 10x traffic growth, eval cost is 10x. Plan for this. Batched calls and a smaller judge model mostly absorb the growth, but at very high volumes you may need to drop sampling to 0.5% and accept lower statistical power on rare query types.

Drift detection requires baselines. A faithfulness score of 0.88 means nothing in isolation. It means a lot if last week it was 0.93. You need historical baselines, week-over-week comparisons, and automated change-point detection. Most teams I've worked with start with weekly Slack reports and graduate to PagerDuty alerts once they trust the signal. Skipping the trust-building phase usually leads to alert fatigue and the eval pipeline being silently disabled.

One last thing: include eval results in your model rollback decision flow. When you deploy a new prompt template or a new retriever, watch the eval metrics for 48 hours. If faithfulness drops more than 5%, roll back automatically. This is the single most useful place to spend your eval signal. It turns evaluation from a passive dashboard into an active production safeguard.

Conclusion

RAG evaluation looks like a metrics problem. It's actually a discipline problem. The technical pieces (RAGAS, judge models, sampling pipelines) are all well-understood and well-supported in 2026. What separates teams that ship reliable RAG from teams that ship hallucinating RAG is the discipline of treating eval as a continuous production signal, the way you'd treat latency or error rate.

Start with the four core metrics: context precision, context recall, faithfulness, answer relevance. Sample real production traffic. Use a small, audited judge model. Wire alerts to the metrics that matter most for your product. Audit your judge quarterly. Roll back on regressions.

If you do those things, you'll catch the kind of silent quality decay that ends in postmortems. If you don't, you'll find out about your RAG quality the same way the team I opened with did: from a customer escalation, two months too late.

In the next post in this series I'll cover the next layer of production AI infrastructure: building observability for the agent loop itself, with traces that capture not just retrieval and generation but tool calls, planning steps, and self-correction cycles. The eval discipline scales further than retrieval, and it has to, because agents are where the next category of silent failure lives.

Sources

- RAGAS: Automated Evaluation of Retrieval Augmented Generation, Es et al., 2024

- Trulens documentation: trulens.org/trulens_eval

- ARES: An Automated Evaluation Framework for Retrieval-Augmented Generation Systems, Saad-Falcon et al., 2024

- Sonatype 2026 State of the Software Supply Chain Report, sonatype.com/state-of-the-software-supply-chain

- Anthropic Batch API documentation, docs.anthropic.com

- LangChain RAG evaluation guide, python.langchain.com

- Companion code repository, github.com/amtocbot-droid/amtocbot-examples

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-25 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment