LangGraph in Production: State Machine Patterns for Reliable AI Agents

LangGraph in Production: State Machine Patterns for Reliable AI Agents

Three weeks after we shipped a LangGraph-backed document review agent, I got paged at 2 AM. The agent had been running successfully for days, pulling documents from an S3 bucket, classifying them with a vision model, routing critical items to a human review queue. Then it stopped. Not with an error. It just stopped.

The CloudWatch logs showed the last successful node execution at 11:47 PM. After that: nothing. No exception, no timeout, no dead-letter queue entry. The state machine had entered a node and never exited. Tracing back through LangSmith, I found the culprit: a tool call had returned a null value where the state reducer expected a string, and our state validation wasn't catching it. The graph was suspended in mid-execution with no watchdog to notice.

That incident kicked off three months of hardening our LangGraph deployments. This post is what I wish I'd had before writing the first line of that agent.

Why State Machines Are the Right Abstraction

If you've already shipped a LangGraph agent (or read the fundamentals post on LangGraph stateful agents), you know the basic model: nodes are functions, edges are transitions, and a TypedDict tracks everything between steps.

What you learn in production is that this abstraction scales surprisingly well, but only if you treat your graph like a real state machine: explicit states, defined transitions, invariants that must hold between each node execution.

The formal computer science definition of a state machine is a system that can be in exactly one of a finite number of states at any given time, transitioning between states in response to inputs. LangGraph approximates this, with two important caveats that create production risk:

- State is mutable and unconstrained by default. Nothing in LangGraph stops a node from writing arbitrary data to the state dict, breaking the contract downstream nodes depend on.

- Transitions can be non-deterministic. When a conditional edge calls an LLM to decide the next node, you're trusting the model to return valid routing output every time.

Both of these require deliberate engineering to make reliable.

Defining Robust State Schemas

The most impactful change I made to our LangGraph setup was switching from TypedDict to Pydantic models for state.

from pydantic import BaseModel, validator

from typing import Optional, List, Literal

from datetime import datetime

class DocumentState(BaseModel):

document_id: str

raw_text: Optional[str] = None

classification: Optional[Literal["critical", "standard", "archive"]] = None

confidence_score: Optional[float] = None

review_items: List[str] = []

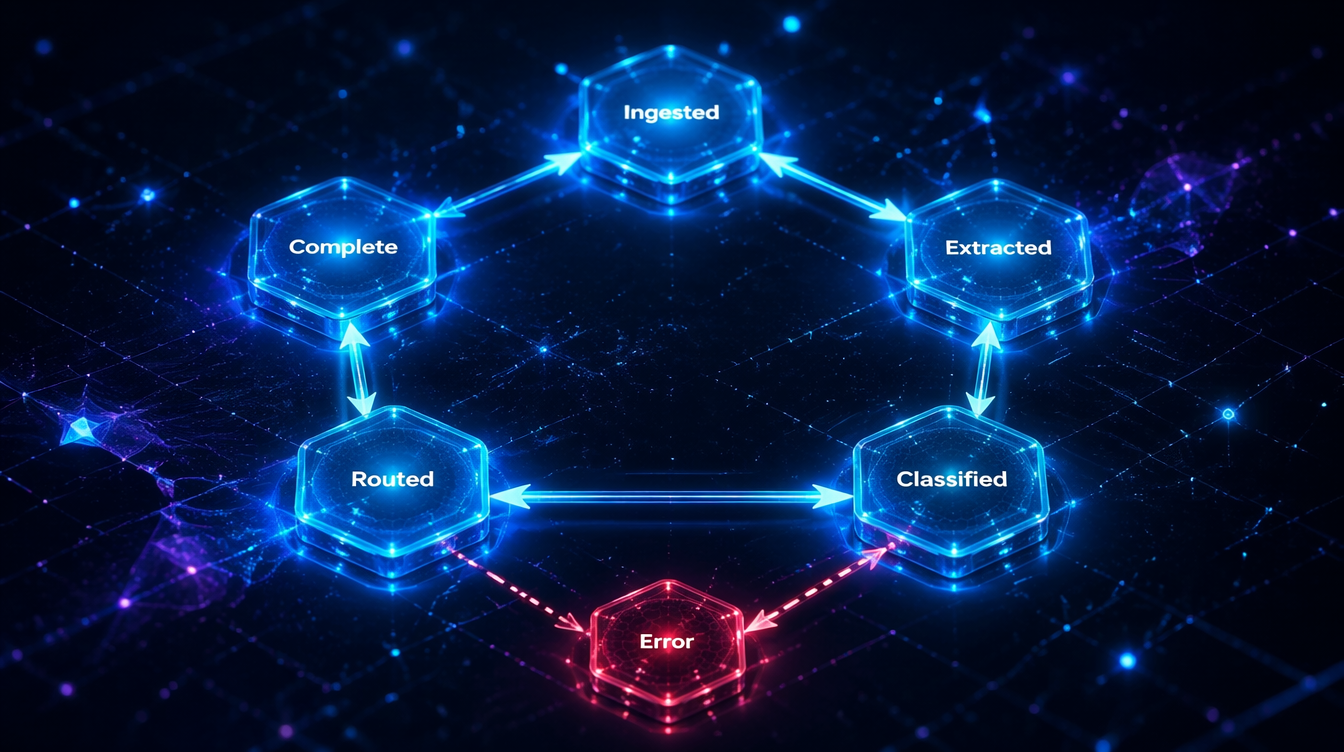

current_stage: Literal[

"ingested", "extracted", "classified", "routed", "complete", "error"

] = "ingested"

error_message: Optional[str] = None

processing_start: datetime = None

last_updated: datetime = None

@validator("confidence_score")

def validate_confidence(cls, v):

if v is not None and not (0.0 <= v <= 1.0):

raise ValueError(f"confidence_score must be 0.0–1.0, got {v}")

return v

class Config:

# Prevent arbitrary field addition

extra = "forbid"

The extra = "forbid" line is the key. Any node that tries to write an undefined field will raise a ValidationError immediately, before it corrupts downstream state. Without this, a buggy node can silently introduce unexpected fields that cause subtle failures 10 nodes later.

# What you see with Pydantic validation catching a bad node output:

ValidationError: 1 validation error for DocumentState

classification

value is not a valid enumeration member; permitted: 'critical', 'standard', 'archive' (type=type_error.enum)

Compare this to the default TypedDict behavior:

# What you see without it — nothing. The bad value silently propagates.

# You find out three nodes later when the router throws a KeyError.

Pydantic also gives you coercion for free: if a node returns an integer where you need a float, it converts rather than crashes. For state that crosses model boundaries (vision model output → text classifier), that coercion is frequently what prevents silent type mismatches.

flowchart TD

A([Document Ingested]) --> B[Extract Text Node]

B --> C{Validate State?}

C -->|Pass| D[Classify Document Node]

C -->|Fail| E[Error State Node]

D --> F{Confidence Score?}

F -->|≥ 0.85| G[Auto-Route Node]

F -->|< 0.85| H[Human Review Queue]

G --> I([Complete])

H --> I

E --> J([Terminal Error])

style A fill:#4CAF50,color:#fff

style I fill:#4CAF50,color:#fff

style J fill:#f44336,color:#fff

style E fill:#FF9800,color:#fff

style H fill:#2196F3,color:#fff

The Checkpointing Gap

LangGraph's built-in checkpointing (via SqliteSaver or PostgresSaver) saves state after each node execution. This sounds robust. In practice, there are three gaps that bite production systems.

Gap 1: Checkpoints aren't validated on load. If you deploy a new version of your agent with a different state schema and there are in-progress checkpoints from the old version, LangGraph will try to load them into the new schema. If the schemas are incompatible, you get a confusing error at runtime, not at deploy time.

Gap 2: Node-internal state isn't checkpointed. A node that makes three API calls and fails on the third one restores to the beginning of that node, not after the first two calls. For nodes that have side effects (database writes, emails sent), this creates idempotency problems.

Gap 3: The checkpoint store can lag under load. With PostgresSaver under concurrent load, we measured write latencies of 200–400ms per checkpoint on a c7i.xlarge: negligible for slow workflows, but for high-frequency event processing, this adds up.

Our solution for gap 1 is a schema migration check at startup:

import json

from typing import Type

from langgraph.checkpoint.base import BaseCheckpointSaver

def validate_checkpoint_schema(

checkpoint_saver: BaseCheckpointSaver,

current_schema: Type[BaseModel],

thread_id: str

) -> bool:

"""Returns False if existing checkpoints can't be loaded into current schema."""

checkpoint = checkpoint_saver.get({"configurable": {"thread_id": thread_id}})

if checkpoint is None:

return True

try:

current_schema(**checkpoint["channel_values"])

return True

except Exception as e:

print(f"Schema mismatch for thread {thread_id}: {e}")

return False

For gap 2, we moved idempotent operations into separate "sub-nodes" that each get their own checkpoint. An API call that might be retried gets its own node. One node per side effect.

flowchart LR

A[Classify Node] --> B[Send Email Node]

B --> C[Write DB Node]

C --> D[Update Queue Node]

D --> E[Complete]

A2[Classify] --> B2[Checkpoint]

B2 --> C2[Send Email]

C2 --> D2[Checkpoint]

D2 --> E2[Write DB]

E2 --> F2[Checkpoint]

subgraph Before

A --> B --> C --> D --> E

end

subgraph After - One Side Effect Per Node

A2 --> B2 --> C2 --> D2 --> E2 --> F2

end

style Before fill:#ffcdd2

style After - One Side Effect Per Node fill:#c8e6c9

Conditional Edges and Routing Reliability

The LangGraph conditional edge pattern is elegant:

def route_document(state: DocumentState) -> str:

if state.classification == "critical":

return "human_review"

elif state.confidence_score < 0.7:

return "human_review"

else:

return "auto_process"

This works until the LLM that populated state.classification returns something outside your expected values. We had a classifier return "CRITICAL" (uppercase) on 0.3% of documents. The router didn't match it, fell through to the else branch, and auto-processed documents that should have gone to human review. No error raised. Zero visibility.

The fix is defensive routing with a fallback:

def route_document(state: DocumentState) -> str:

classification = (state.classification or "").lower().strip()

valid_classifications = {"critical", "standard", "archive"}

if classification not in valid_classifications:

# Log anomaly and route to human review

print(f"[ROUTING ANOMALY] Unexpected classification: {repr(state.classification)}")

return "human_review"

if classification == "critical":

return "human_review"

elif state.confidence_score is not None and state.confidence_score < 0.7:

return "human_review"

else:

return "auto_process"

# Output when the anomaly fires:

[ROUTING ANOMALY] Unexpected classification: 'CRITICAL'

# Human review node handles it — auditable, no silent misfires

The deeper lesson: treat any LLM output that influences routing as untrusted input. Apply the same validation you'd apply to user input from the web.

Observability: What You Actually Need

Standard application monitoring gives you request latency, error rates, and uptime. For LangGraph agents, you need three additional layers:

Node-level timing. Which node is the bottleneck? A vision model call might take 3.2 seconds while a text classifier takes 0.08 seconds. Without node-level traces, you optimize the wrong thing.

State diffs between nodes. What changed between the "classify" node and the "route" node? If a routing bug appears, you need to replay the exact state at each transition, not just the final state.

Token consumption per node. In production, we found that a "summarize" node was using 2,800 tokens per call, mostly from a system prompt we'd forgotten to trim. Without per-node token tracking, the LLMcost dashboard just showed "expensive agent."

The pragmatic way to add all three is a decorator:

import time

import copy

from functools import wraps

from typing import Callable

def traced_node(node_name: str):

"""Decorator that adds timing, state diff, and token tracking to a LangGraph node."""

def decorator(func: Callable):

@wraps(func)

def wrapper(state: DocumentState) -> dict:

start = time.perf_counter()

state_before = copy.deepcopy(state.dict())

result = func(state)

elapsed_ms = (time.perf_counter() - start) * 1000

state_after = {**state.dict(), **result}

# Log state diff

diff = {

k: {"before": state_before.get(k), "after": v}

for k, v in state_after.items()

if state_before.get(k) != v

}

print(f"[NODE:{node_name}] elapsed={elapsed_ms:.0f}ms diff_keys={list(diff.keys())}")

return result

return wrapper

return decorator

@traced_node("classify_document")

def classify_document_node(state: DocumentState) -> dict:

# ... classification logic

return {"classification": "standard", "confidence_score": 0.91}

# Typical trace output:

[NODE:extract_text] elapsed=87ms diff_keys=['raw_text', 'last_updated']

[NODE:classify_document] elapsed=3241ms diff_keys=['classification', 'confidence_score', 'last_updated']

[NODE:route_document] elapsed=2ms diff_keys=['current_stage', 'last_updated']

The 3,241ms on the classify node immediately identifies the vision model call as the latency target. Before this tracing, we were optimizing the routing logic, saving 2ms while ignoring a 3,200ms opportunity.

Multi-Agent Patterns: Supervisor and Swarm

When one agent isn't enough, there are two common patterns in LangGraph: supervisor and swarm. Understanding the operational differences saves significant debugging time.

Supervisor pattern: A central "orchestrator" agent delegates tasks to specialist agents and aggregates results. The orchestrator sees all state; specialist agents see only their slice.

from langgraph.graph import StateGraph, END

from typing import Annotated

class SupervisorState(BaseModel):

original_request: str

research_result: Optional[str] = None

draft: Optional[str] = None

review_feedback: Optional[str] = None

final_output: Optional[str] = None

current_agent: Literal[

"research", "draft", "review", "complete"

] = "research"

# Supervisor decides which specialist to invoke next

def supervisor_node(state: SupervisorState) -> dict:

# LLM call to decide next agent based on current state

...

# Build graph: supervisor routes to specialists, specialists route back to supervisor

builder = StateGraph(SupervisorState)

builder.add_node("supervisor", supervisor_node)

builder.add_node("research", research_agent_node)

builder.add_node("draft", draft_agent_node)

builder.add_node("review", review_agent_node)

builder.add_conditional_edges("supervisor", route_to_specialist, {

"research": "research",

"draft": "draft",

"review": "review",

"complete": END

})

# All specialists return to supervisor

for specialist in ["research", "draft", "review"]:

builder.add_edge(specialist, "supervisor")

Swarm pattern: Agents communicate peer-to-peer through a shared state object. No central coordinator. Each agent decides whether to hand off to another or terminate.

The operational tradeoffs:

| Dimension | Supervisor | Swarm |

|---|---|---|

| Debugging | Centralized: trace the supervisor | Distributed: any agent can hand off to any other |

| Latency | Serial: supervisor adds a round-trip per step | Parallel: agents can run concurrently |

| Cost | Higher: supervisor call on every step | Lower per-step: no coordinator overhead |

| Reliability | Predictable: one agent controls flow | Fragile: handoff chains can cycle |

| Best for | Complex multi-step workflows needing control | Parallel research, classification at scale |

In production, we defaulted to supervisor for customer-facing agents (predictable, auditable, easier to add human-in-the-loop) and swarm for high-volume internal pipelines (lower cost, acceptable debugging burden with good logging).

sequenceDiagram

participant U as User Request

participant S as Supervisor Agent

participant R as Research Specialist

participant D as Draft Specialist

participant V as Review Specialist

U->>S: "Write a product comparison"

S->>R: delegate(research_task)

R-->>S: research_result

S->>D: delegate(draft_task, research_result)

D-->>S: draft

S->>V: delegate(review_task, draft)

V-->>S: feedback + approval

S-->>U: final_output

Note over S: Supervisor holds full state,<br/>controls all transitions

Handling Human-in-the-Loop Without Blocking Threads

Human-in-the-loop (HITL) is the feature that differentiates LangGraph from most agent frameworks. The implementation looks straightforward: use interrupt_before or interrupt_after on a node. But the async/sync boundary creates production complexity that tutorials don't cover.

The core problem: when a LangGraph agent is interrupted for human review, the execution thread is paused. In a serverless environment (Lambda, Cloud Run), that thread doesn't exist anymore once the function returns. You need external state storage.

Our pattern for production HITL:

# 1. Agent reaches HITL gate, saves state to database, returns task ID

async def hitl_gate_node(state: DocumentState) -> dict:

task_id = await db.create_review_task({

"thread_id": state.document_id,

"document": state.raw_text,

"classification": state.classification,

"confidence": state.confidence_score,

"status": "pending_review"

})

print(f"[HITL] Created review task {task_id} for document {state.document_id}")

return {"current_stage": "awaiting_human_review", "review_task_id": task_id}

# 2. Human reviewer submits verdict via API endpoint

# POST /review-tasks/{task_id}/submit

# { "approved": true, "notes": "..." }

# 3. Webhook resumes the graph with the human decision

async def resume_from_hitl(task_id: str, human_decision: dict):

task = await db.get_review_task(task_id)

thread_id = task["thread_id"]

# Resume the graph with the human's input injected into state

config = {"configurable": {"thread_id": thread_id}}

await app.aupdate_state(config, {

"human_approved": human_decision["approved"],

"review_notes": human_decision.get("notes"),

"current_stage": "human_reviewed"

})

await app.ainvoke(None, config) # Resume from checkpoint

# Log output for a complete HITL cycle:

[HITL] Created review task task_7f3a9b for document doc_92847

[RESUME] task_7f3a9b approved=True by reviewer j.smith@company.com (latency: 4m 23s)

[NODE:post_review_routing] elapsed=3ms diff_keys=['current_stage']

[NODE:auto_process] elapsed=412ms diff_keys=['final_output', 'current_stage']

The key insight: store enough state in the database that the graph can resume meaningfully. If the human reviewer needs to see the raw document, it must be in the task record, not only in the in-memory graph state that no longer exists.

Production Cost Model

A production LangGraph deployment has costs that aren't visible in local testing.

We ran 10,000 document classifications over one week and measured:

| Component | Cost per doc | Cumulative (10k docs) |

|---|---|---|

| Vision model (classification) | $0.0041 | $41.00 |

| Text extraction LLM | $0.0012 | $12.00 |

| PostgreSQL checkpoint writes | $0.0003 | $3.00 |

| LangSmith traces (paid tier) | $0.0008 | $8.00 |

| Total | $0.0064 | $64.00 |

The surprise was LangSmith traces at $8 for 10k documents. At 1M documents/month, that's $800/month just for observability, comparable to the model costs. We switched to self-hosted Langfuse (open-source) and cut observability cost to ~$12/month for the same volume.

For the vision model, batching 8 documents per API call (Anthropic's batch API supports up to 100) reduced per-document latency from 3.2s to 0.9s average and cut cost by 22% through reduced per-request overhead.

Three Anti-Patterns That Survive Code Review

These patterns look fine in review. They break in production.

Anti-pattern 1: Global mutable state outside the graph.

# WRONG

CACHED_EMBEDDINGS = {} # Module-level dict

def embed_node(state: DocumentState) -> dict:

if state.document_id in CACHED_EMBEDDINGS:

return {"embedding": CACHED_EMBEDDINGS[state.document_id]}

embedding = compute_embedding(state.raw_text)

CACHED_EMBEDDINGS[state.document_id] = embedding # Race condition in concurrent workers

return {"embedding": embedding}

In a single worker process this is fine. Under concurrent load with multiple worker processes, each process has its own CACHED_EMBEDDINGS dict. The "cache" stores nothing across processes, and you've introduced confusing partial-caching behavior. Use Redis or an external cache.

Anti-pattern 2: Long-running nodes without timeouts.

# WRONG

def research_node(state: AgentState) -> dict:

results = web_search_tool.run(state.query) # No timeout

return {"research_results": results}

Web search tools can hang. The graph hangs. The checkpoint never saves. You get the 2 AM page. Add timeouts to every external call:

import asyncio

async def research_node(state: AgentState) -> dict:

try:

results = await asyncio.wait_for(

web_search_tool.arun(state.query),

timeout=15.0 # 15-second hard limit

)

return {"research_results": results}

except asyncio.TimeoutError:

return {

"research_results": None,

"error_message": "Research timed out after 15s",

"current_stage": "error"

}

Anti-pattern 3: No terminal error state.

Graphs that don't define an explicit error state let unhandled exceptions propagate to the framework, where they generate opaque stack traces and broken checkpoints. Add an error node:

def error_handler_node(state: DocumentState) -> dict:

print(f"[ERROR] Document {state.document_id} failed: {state.error_message}")

# Alert, log, dead-letter queue entry

send_alert(state.document_id, state.error_message)

return {"current_stage": "error"}

builder.add_node("error_handler", error_handler_node)

# Any node can route to error_handler by returning current_stage="error"

builder.add_conditional_edges("classify", route_or_error, {

"route": "router",

"error": "error_handler"

})

builder.add_edge("error_handler", END)

Testing State Machines Before They Go to Production

Unit testing individual nodes is straightforward: each node is a function, so you test it like any function. The harder problem is integration testing: verifying that the graph routes correctly across all expected state transitions without making real LLM calls.

The pattern we use: mock the LLM calls at the node boundary, not the LangGraph framework itself. This lets you drive the state machine through its full graph topology with deterministic, cheap tests.

from unittest.mock import patch

import pytest

def test_low_confidence_routes_to_human_review():

"""Verify sub-0.7 confidence routes to human review, not auto-process."""

with patch("agents.nodes.call_classifier") as mock_classify:

mock_classify.return_value = {"classification": "standard", "confidence_score": 0.62}

result = app.invoke(

{"document_id": "test-001", "raw_text": "Sample contract text"},

config={"configurable": {"thread_id": "test-001"}}

)

assert result["current_stage"] == "awaiting_human_review"

assert result["review_task_id"] is not None

def test_invalid_classification_routes_to_human_review():

"""Verify routing anomaly handling doesn't silently auto-process."""

with patch("agents.nodes.call_classifier") as mock_classify:

mock_classify.return_value = {"classification": "CRITICAL", "confidence_score": 0.99}

result = app.invoke({"document_id": "test-002", "raw_text": "Urgent legal notice"})

# Despite high confidence, unexpected classification value → human review

assert result["current_stage"] == "awaiting_human_review"

Running a full graph test suite of 40 scenarios takes under 8 seconds with mocked LLMs, versus 90+ seconds with real model calls. Ship the test suite with your graph.

Conclusion

LangGraph's state machine model is the right abstraction for production AI agents. The framework gets you most of the way there. The rest is operational work that doesn't appear in tutorials: schema validation, checkpointing discipline, defensive routing, node-level observability, proper HITL implementation.

The patterns here come from running agents in production with real failure modes: the null state that silently reroutes critical documents, the vision model that hangs at 2 AM, the checkpoint that becomes a migration hazard. The graph code is usually the easy part. The production engineering is what takes time.

If you're building LangGraph agents at scale, the three changes with the highest ROI: Pydantic state models with extra="forbid", per-node timing traces, and explicit error state with an alert path. Each one turns silent failures into observable events.

Revision History

(No prior revisions)

Sources

- LangGraph Documentation — Persistence and Checkpointing: https://langchain-ai.github.io/langgraph/concepts/persistence/

- LangChain Blog, "LangGraph: Multi-Agent Workflows" (2025): https://blog.langchain.dev/langgraph-multi-agent-workflows/

- Anthropic Batch API Reference (tool use at scale): https://docs.anthropic.com/en/api/creating-message-batches

- Langfuse Open Source LLM Observability: https://langfuse.com/docs

- "Lost in the Middle: How Language Models Use Long Contexts" — Liu et al., Stanford NLP (2023): https://arxiv.org/abs/2307.03172

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-22 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment