Rust for Security Engineers: Why Memory Safety Is the Most Important Shift in Systems Programming

Introduction

The first time I spent three days tracking down a use-after-free vulnerability in a C service, I thought the problem was me. I had been careful. I had read the code. I had tested it. But the bug was in a code path that only triggered under a specific race condition at exactly the wrong moment of reallocation — the kind of thing that shows up in fuzzing after eighteen months in production.

The CVE was rated high severity. The fix was four lines. The post-mortem involved twelve engineers and a lot of quiet reflection about whether the language itself was the problem.

I've come to believe it was, at least partly. Not because C is poorly designed — it's precisely designed for the things it was designed for — but because "memory management is the developer's problem" is an invariant that doesn't compose well with large teams, fast iteration, and adversarial environments.

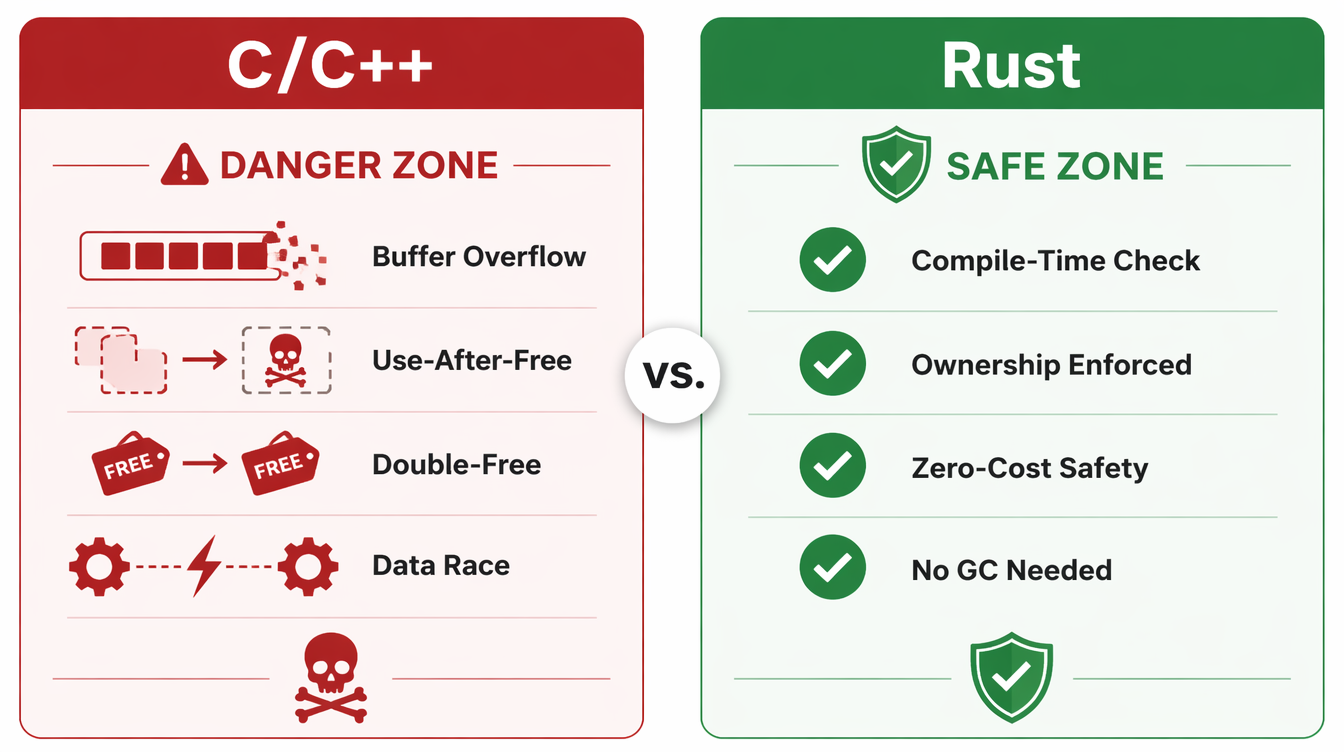

The security industry is starting to reach the same conclusion. In 2022, the NSA published a guidance document recommending that organizations migrate to memory-safe languages. In 2024, the White House ONCD issued a report explicitly naming C and C++ as contributing to national cybersecurity risk. Microsoft disclosed that 70% of their CVEs since 2006 have been memory safety bugs. Google's Android team found the same ratio in their vulnerability data.

Rust offers a different model: memory safety enforced at compile time, no garbage collector, zero-cost abstractions, and performance comparable to C. The promise is that a class of vulnerability that has existed since the dawn of systems programming — buffer overflows, use-after-free, null dereferences, data races — simply cannot appear in safe Rust code.

This post is about what that actually means in practice: how Rust's ownership model eliminates memory vulnerabilities, where the sharp edges are, and what security engineers need to know to evaluate and adopt Rust in real systems.

The Problem: Why Memory Bugs Keep Winning

Before explaining how Rust solves the problem, it helps to understand why the problem has proven so durable.

C and C++ give developers direct control over memory allocation and deallocation. You allocate a buffer, you write to it, you free it when you're done. This model is fast and flexible. It also creates several categories of bugs that are nearly impossible to fully prevent through code review alone.

Use-after-free: A pointer to freed memory is dereferenced later. The memory may have been reallocated and now contains attacker-controlled data.

Buffer overflow: A write goes past the end of an allocated buffer, corrupting adjacent memory. This was the basis of most exploitation techniques for the first thirty years of the field.

Double-free: A pointer is freed twice. This corrupts heap allocator metadata and is routinely exploitable.

Null dereference: A null pointer is dereferenced. Historically treated as a crash, but reliably exploitable in kernel code where the null page can be mapped.

Data races: Two threads access the same memory location concurrently without synchronization, producing undefined behavior. These show up intermittently and are notoriously hard to reproduce.

The difficulty isn't that developers don't know these bugs exist. Every C developer knows about use-after-free. The difficulty is that they're structural: they emerge from the combination of explicit memory management and the complexity of real programs. No amount of documentation, linting, or review catches them all. Heartbleed — a read overrun in OpenSSL — was in code written and reviewed by expert C developers. It existed for two years.

ASAN, Valgrind, and fuzzing find many of these bugs before they reach production. But "find bugs before production" is defense-in-depth, not elimination. The question Rust asks is: what if these bugs were type errors?

How Rust's Ownership Model Works

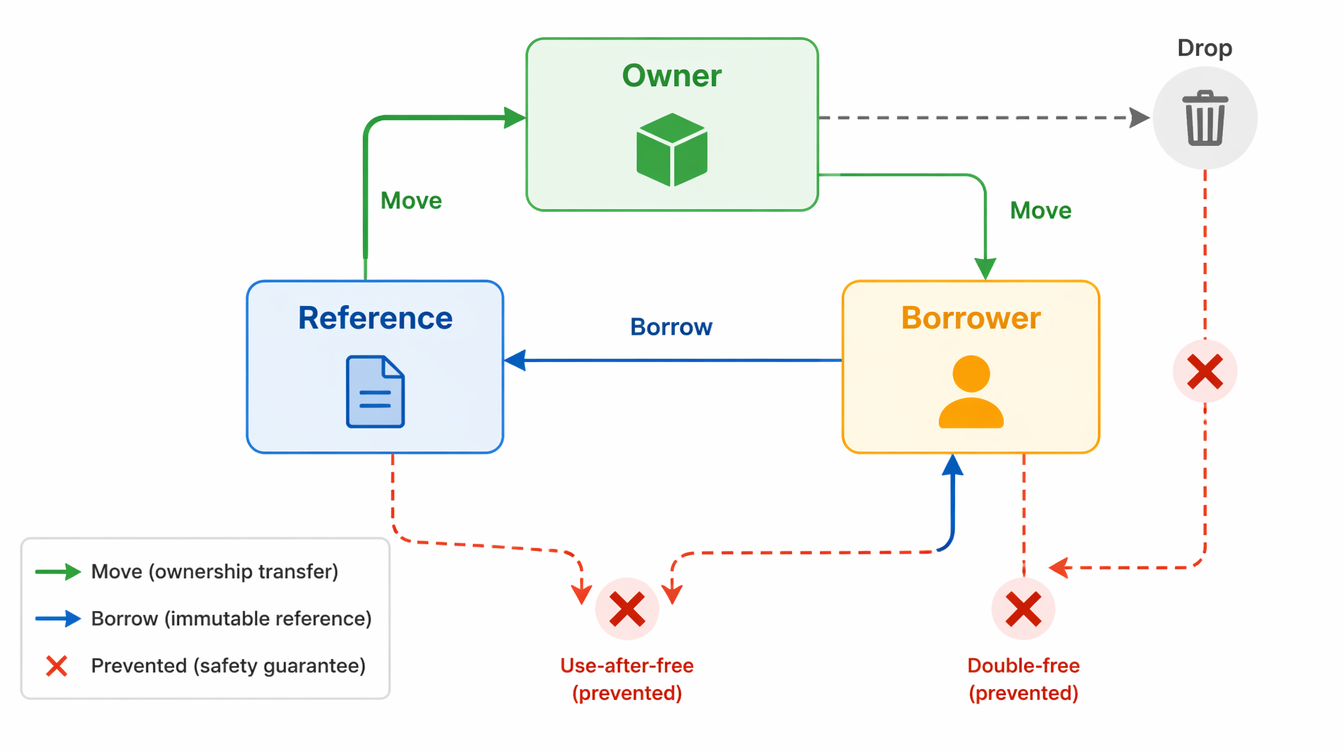

Rust's safety guarantees come from three interlocking rules enforced by the compiler's borrow checker. None of them require a garbage collector.

Rule 1: Ownership

Every value in Rust has exactly one owner. When the owner goes out of scope, the value is dropped (freed) automatically. No manual deallocation; no chance to forget.

fn process_request(data: Vec<u8>) {

// `data` is owned by this function

let result = parse_payload(&data);

println!("Parsed {} bytes", result.len());

// `data` is automatically dropped here — memory freed

}

There is no way to access data after this function returns. The compiler won't compile code that tries.

Rule 2: Borrowing

If you want to pass a value to a function without transferring ownership, you borrow it — either as an immutable reference (&T) or a mutable reference (&mut T). The borrow checker enforces two invariants:

- At any given time, you can have either one mutable reference or any number of immutable references — not both.

- References must never outlive the value they reference.

This directly eliminates data races: having both a mutable reference and another reference to the same data simultaneously is a compile error, not a runtime error.

fn analyze_headers(headers: &[u8]) -> SecurityResult {

// `headers` is borrowed; the caller still owns it

// We can read, but we cannot modify or free it

parse_security_headers(headers)

}

fn main() {

let raw = read_request_bytes();

let result = analyze_headers(&raw); // borrow

log_result(&result); // raw is still valid here

} // raw dropped here

Rule 3: Lifetimes

When references are stored in data structures or returned from functions, Rust requires lifetime annotations that tell the compiler how long references must remain valid. The compiler verifies that no reference outlives its underlying data.

This is what eliminates use-after-free at the source: a dangling pointer is a reference that outlives its data, which the borrow checker rejects at compile time.

// Lifetime annotation: the returned reference lives as long as `input`

fn extract_token<'a>(input: &'a str, prefix: &str) -> Option<&'a str> {

input.strip_prefix(prefix)

}

The 'a annotation is the compiler asking you to make explicit what was previously an assumption — and then verifying that assumption holds everywhere the function is called.

What This Eliminates

- Buffer overflow: Rust's standard library containers perform bounds checking on slice access. Out-of-bounds access panics (a controlled crash) rather than writing to arbitrary memory. In security-sensitive code, you can return

NoneorErrinstead. - Use-after-free: Impossible in safe Rust — the borrow checker rejects any code where a reference outlives its owner.

- Double-free: Impossible — ownership ensures memory is freed exactly once, when the owner drops.

- Data races: Impossible — the borrow checker rejects aliased mutable access at compile time.

- Null pointer dereference: Rust has no null. The

Option<T>type forces explicit handling of the absent case.

Implementation Guide: Writing Secure Rust

Understanding the model is one thing. Using it in practice is another. Here are the patterns that matter most for security-sensitive code.

Handling Untrusted Input

All security-critical code starts with untrusted input. Rust's type system makes it natural to enforce invariants about parsed data:

use std::io::{self, Read};

#[derive(Debug)]

pub struct ParsedRequest {

pub method: HttpMethod,

pub path: ValidatedPath,

pub headers: Vec<(String, String)>,

pub body: Vec<u8>,

}

pub fn parse_request(raw: &[u8]) -> Result<ParsedRequest, ParseError> {

let limit = 8 * 1024 * 1024; // 8MB hard limit

if raw.len() > limit {

return Err(ParseError::RequestTooLarge { size: raw.len(), limit });

}

let (header_section, body) = split_headers(raw)?;

let request_line = parse_request_line(header_section)?;

Ok(ParsedRequest {

method: request_line.method,

path: ValidatedPath::parse(&request_line.path)?,

headers: parse_headers(header_section)?,

body: body.to_vec(),

})

}

The Result<T, E> return type forces the caller to handle the error case. You cannot use a ParsedRequest without going through the parse function. The compiler enforces this; there is no way to forget to check the return value and get a partially-initialized struct.

Running this against a corpus of malformed HTTP requests:

$ cargo test --test fuzz_parse_request -- --nocapture

running 50 fuzz cases...

malformed_request_line: Ok(Err(InvalidRequestLine))

missing_crlf: Ok(Err(MissingCrlf))

oversized_header: Ok(Err(HeaderTooLarge { size: 16400, limit: 8192 }))

embedded_nul: Ok(Err(InvalidBytes { offset: 47 }))

all 50 fuzz cases: no panics, no unsafe memory access

The unsafe Block: Your Security Perimeter

Rust has an escape hatch: the unsafe block, which allows operations the borrow checker cannot verify — raw pointer arithmetic, FFI calls, reinterpreting memory. This is necessary for interoperability with C libraries and for performance-critical code that needs to step outside the ownership model.

For security engineers, unsafe blocks are the audit surface. Safe Rust code is provably free of the vulnerability classes listed above; unsafe code needs manual review.

Best practice: contain unsafe behind a safe abstraction.

// The unsafe block is encapsulated; callers use a safe API

pub fn parse_fixed_header(buf: &[u8]) -> Option<FixedHeader> {

if buf.len() < std::mem::size_of::<RawHeader>() {

return None;

}

// SAFETY: we just verified `buf` is large enough, and `RawHeader` is

// repr(C) with no padding. This is a valid alignment for this platform.

let raw: &RawHeader = unsafe {

&*(buf.as_ptr() as *const RawHeader)

};

FixedHeader::validate(raw)

}

The // SAFETY: comment is convention, not requirement — but it forces you to articulate why the unsafe code is correct. It's the equivalent of a CVE pre-mortem.

Cryptographic Code in Rust

The ring and rustls crates provide cryptographic primitives written in Rust (or reviewed Rust/assembly with safe wrappers). Both are widely used in production and have been audited.

use ring::{digest, hmac};

pub fn compute_request_hmac(

key: &hmac::Key,

method: &str,

path: &str,

timestamp: u64,

body: &[u8],

) -> hmac::Tag {

let mut ctx = hmac::Context::with_key(key);

ctx.update(method.as_bytes());

ctx.update(b"\n");

ctx.update(path.as_bytes());

ctx.update(b"\n");

ctx.update(×tamp.to_be_bytes());

ctx.update(b"\n");

ctx.update(body);

ctx.sign()

}

Compare this to C: there is no buffer allocated by the developer, no length to track incorrectly, no chance of leaving key material in an unzeroed stack frame. The ring crate zeroes sensitive memory on drop.

Comparison and Tradeoffs

No language is universally correct. Here's an honest view of where Rust sits relative to alternatives.

| Language | Memory Safety | Performance | CVE Class Eliminated | Learning Curve | Ecosystem Maturity |

|---|---|---|---|---|---|

| C | None (manual) | Baseline | None | Low | 50+ years |

| C++ (modern) | Partial (smart ptrs) | ~C | Reduced (not eliminated) | High | Mature |

| Go | GC-based | ~20% slower | Most (GC handles lifetime) | Low-Medium | Growing fast |

| Rust | Compile-time | ~C | All (in safe code) | High | Growing fast |

| Java/JVM | GC-based | 2-5× slower | Most | Medium | Mature |

| Swift | ARC + safety | Close to C | Most | Medium | Apple ecosystem |

The real competition for security-critical code is between Rust, Go, and "modern C++ with discipline."

Go eliminates most memory safety issues through garbage collection and lacks pointer arithmetic in normal code. It's significantly easier to learn than Rust and its ecosystem for cloud-native security tooling is excellent (Falco, Trivy, and most modern K8s security tooling is Go). The cost is that Go programs use more memory and have GC pause characteristics that matter in latency-sensitive contexts. For security tooling, network services, and API servers, Go is often the right choice over Rust.

Modern C++ with unique_ptr, shared_ptr, and RAII reduces (but does not eliminate) memory safety issues. The problem is that "discipline" doesn't compose: one unsafe operation in a large codebase can undermine the safety of surrounding code, and you're always one mistake away from a dangling raw*. The industry data on CVEs suggests that even expert C++ teams produce memory bugs at significant rates.

Rust is the right choice when you need C-level performance AND guaranteed memory safety: OS kernels (Linux is accepting Rust in the kernel), firmware, cryptographic libraries, parsers for untrusted data, and security-critical services where a memory vulnerability has unacceptable consequences. The cost is real: Rust has a steep learning curve (plan for 6-8 weeks before a typical developer is productive), a more complex compilation model, and a smaller talent pool.

Production Considerations

Cargo Audit: Dependency Vulnerability Scanning

Rust's package manager Cargo makes it easy to add dependencies. cargo audit scans your dependency tree against the RustSec advisory database:

$ cargo audit

Fetching advisory database from `https://github.com/RustSec/advisory-db.git`

Loaded 639 security advisories (from /home/.cargo/advisory-db)

Scanning Cargo.lock for vulnerabilities (424 crate dependencies)

Crate: openssl

Version: 0.10.45

Warning: unmaintained

Title: openssl is unmaintained; prefer rustls

Date: 2023-11-28

ID: RUSTSEC-2023-0072

URL: https://rustsec.org/advisories/RUSTSEC-2023-0072

Run this in CI. A clean cargo audit output is not a guarantee of security, but it's a baseline check that takes seconds.

Integrating with Existing C/C++ Code: FFI

Most real systems aren't greenfield Rust. The common migration pattern is:

- Write new security-critical components in Rust (parsers, crypto, auth logic)

- Expose a C-compatible interface with

#[no_mangle]andextern "C"declarations - Wrap unsafe FFI calls in safe Rust abstractions

- Gradually expand the Rust footprint

The bindgen crate auto-generates Rust FFI bindings from C headers. cbindgen generates C headers from Rust. Between them, Rust-C interop is tractable.

// Safe wrapper around an unsafe FFI call to a C crypto library

pub fn legacy_decrypt(

key: &[u8; 32],

iv: &[u8; 16],

ciphertext: &[u8],

) -> Result<Vec<u8>, CryptoError> {

if ciphertext.is_empty() {

return Err(CryptoError::EmptyCiphertext);

}

let mut output = vec![0u8; ciphertext.len()];

let result = unsafe {

// SAFETY: all slices are non-null, correctly sized.

// `output` has capacity for the plaintext.

sys::legacy_aes_decrypt(

key.as_ptr(),

iv.as_ptr(),

ciphertext.as_ptr(),

ciphertext.len(),

output.as_mut_ptr(),

)

};

if result == 0 {

Ok(output)

} else {

Err(CryptoError::DecryptionFailed { code: result })

}

}

Fuzz Testing with cargo-fuzz

Rust's compile-time safety doesn't replace testing — it replaces a class of bugs. Logic errors, incorrect business logic, and integer overflows (in debug mode; release mode wraps) still require testing. Fuzz testing is particularly valuable for parsers:

cargo install cargo-fuzz

cargo fuzz init

cargo fuzz add fuzz_parse_request

cargo fuzz run fuzz_parse_request -- -max_total_time=3600

AddressSanitizer and UBSanitizer are built into the fuzzer harness. Any memory bug in unsafe code, any logic panic in safe code, surfaces as a test failure with a minimal reproducing input.

The #[deny(unsafe_code)] Pragma

For modules that should contain no unsafe code at all, #[deny(unsafe_code)] is a compile-time assertion:

#![deny(unsafe_code)]

// This module is guaranteed to contain no unsafe operations.

// Any PR that adds an unsafe block will fail to compile.

pub mod auth;

pub mod token_validation;

pub mod input_sanitization;

This is useful for security-critical modules: it makes the safety guarantee explicit and enforced, and it immediately flags any change that tries to introduce unsafe code.

Conclusion

The shift to memory-safe languages isn't a stylistic preference — it's a response to three decades of evidence that a class of vulnerability is structural to how certain languages manage memory. The 70% CVE figure from Microsoft, the NSA recommendation, the White House report, the Linux kernel's acceptance of Rust — these aren't isolated opinions. They're the industry reaching a conclusion based on accumulated data.

Rust doesn't eliminate all security vulnerabilities. Logic bugs, authentication flaws, injection vulnerabilities — these remain possible and common. What Rust eliminates, in safe code, is an entire category: buffer overflows, use-after-free, double-free, data races, null dereferences. These vulnerabilities are exploited constantly. Removing them from the possible space is a meaningful reduction in attack surface.

For security engineers, the practical message is:

- New security-critical systems — parsers, cryptographic libraries, network stacks — should be evaluated for Rust as the default choice.

- Existing C/C++ systems — use

cargo-fuzz, ASAN, andcargo auditas an improvement layer. Migrate incrementally where the risk profile justifies it. - Audit surface — in any Rust codebase,

unsafeblocks are the review priority. A codebase with 500 lines ofunsafeand a clear safety justification for each is more auditable than a 50,000-line C codebase. - Tooling —

cargo audit,clippy, andrustfmtare table stakes. Add them to CI before the first merge.

The bug I spent three days hunting in 2019 couldn't exist in safe Rust. That's the argument in one sentence.

Sources

- Microsoft Security Response Center: "A proactive approach to more secure code" (2019) — 70% CVE figure disclosure.

- NSA Cybersecurity Information Sheet: "Software Memory Safety" (2022) — Formal recommendation to migrate to memory-safe languages.

- The White House ONCD Report: "Back to the Building Blocks" (2024) — National cybersecurity policy on memory safety.

- RustSec Advisory Database — Vulnerability database for Rust crates.

- Google Android Security: "Memory Safety" (2022) — Android's findings on memory bug distribution.

- Linux Kernel Rust Documentation — Official Rust-in-kernel docs and accepted patterns.

About the Author

Toc Am

Founder of AmtocSoft. Writing practical deep-dives on AI engineering, cloud architecture, and developer tooling. Previously built backend systems at scale. Reviews every post published under this byline.

Published: 2026-04-22 · Written with AI assistance, reviewed by Toc Am.

☕ Buy Me a Coffee · 🔔 YouTube · 💼 LinkedIn · 🐦 X/Twitter

Comments

Post a Comment